Published October 26, 2021

Image adapted from Elentaris Photo / Shutterstock.com

Gadi Singer is Vice President and Director of Emergent AI Research at Intel Labs, leading the development of the third wave of AI capabilities.

Cognitive AI, hierarchy of knowledge, and the answer to the unsustainable growth of all-inclusive deep-learning models

Can we drive effectiveness and efficiency of AI at the same time?

If we want our systems to be more intelligent, do they have to become more expensive? Our goal should be to significantly increase the capabilities and improve the results of AI technologies while reducing power and system cost rather than increasing them.

Achieving this could be possible if we follow the architectural design observed time and again in natural control systems, that is, a hierarchy of specialized levels. This article challenges the current large language model (LLM) approach, in which a single neural network attempts to encompass all world knowledge. I will posit that operating efficiently in a heterogeneous environment that spans multiple orders of magnitude requires a tiered architecture.

Generative Pre-trained Transformer 3 (GPT-3) can correctly answer many factual questions. For example, it knows the location of the 1992 Olympics; it can also name the daughters of the 44th president, the code for the San Francisco airport or the distance between the earth and the sun. However, as exciting as this might sound, as a long-term trend, such memorization of endless factoids is as much a ‘bug’ as it is a ‘feature.’

Figure 1: GPT-3 is pretty good at answering trivia questions. (image credit: Intel Labs)

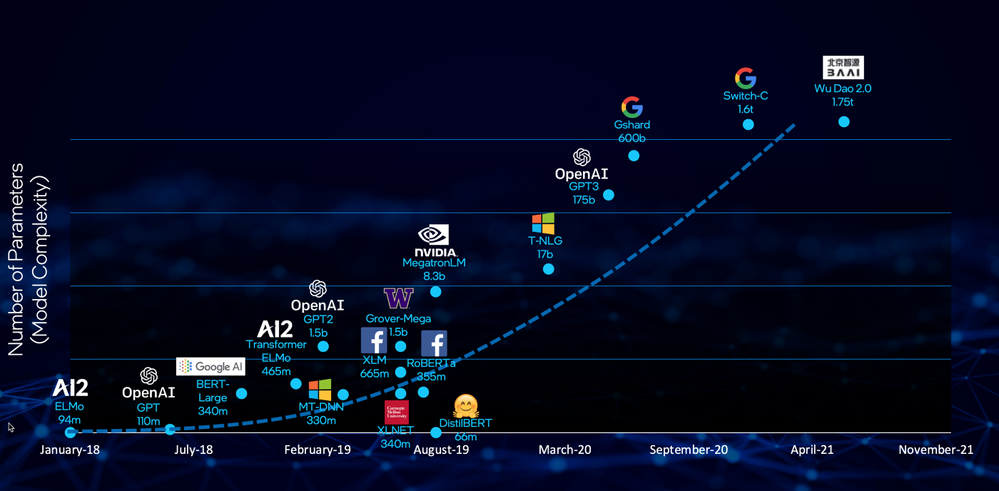

The exponential growth of neural network-based models has been a hot discussion topic over the past couple of years, and many researchers are warning about their computational unsustainability. Variants of Figure 2 are now a common occurrence in the media, and it is becoming clear that it’s only a matter of time before the AI industry is forced to seek a different approach to scaling the capabilities of its solutions. This growth trend will be further driven by the emergence of multi-modal applications where the model has to capture both linguistic and visual information.

Figure 2: The exponential growth of neural network models cannot be sustained.

A key reason for this exponential growth in computational cost is that additional capabilities are being achieved through heavy overparameterization and vast training data sets. According to recent research done at MIT, this approach requires k² times the data and k⁴ times the computational cost to achieve a k-fold improvement.
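To get a feel for how quickly this grows, the relationship can be tabulated. The following is a minimal sketch that simply applies the k² and k⁴ figures quoted above; the function name `scaling_multipliers` is hypothetical and chosen only for illustration.

```python
from typing import Tuple


def scaling_multipliers(k: float) -> Tuple[float, float]:
    """Return (data multiplier, compute multiplier) for a k-fold improvement.

    Assumes the k^2 (data) and k^4 (compute) relationship quoted in the text.
    """
    return k ** 2, k ** 4


for k in (2, 5, 10):
    data_x, compute_x = scaling_multipliers(k)
    print(f"{k}x improvement: {data_x:,}x data, {compute_x:,}x compute")
```

Under these assumptions, a tenfold improvement implies 100 times the data and 10,000 times the computation.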

A large modern neural network has a consolidated way of storing information: its parametric memory. While this type of storage can be vast and its encoding algorithms very sophisticated, maintaining a uniform speed of access to an exploding number of parameters is driving up the computational cost. Such a monolithic storage style is highly inefficient at very large scale and is not commonly seen in computer hardware architecture, search algorithms or even nature.

The key insight is that single-tier systems will be fundamentally disadvantaged due to their inherent trade-off between scale and expediency. The larger the scale of items (such as chunks of information) that the system has to deal with, the slower the access to those items at inference time, assuming that computational capacity is costly or constrained. On the other hand, if the system architects choose to prioritize speed of access, the cost of retrieval can be very high. For example, GPT-3 costs 6 cents per 1000 tokens (roughly 750 words) of queries. Aside from inference time, encoding vast amounts of information into parametric memory is also very resource intensive. GPT-3 reportedly cost at least $4.6 million to train. The scale of world information relevant to AI systems is huge when considering the combination of language, visual and other knowledge sources. Even the most impressive SOTA models would pale in comparison with what could be achieved if all of that information became accessible for processing and retrieval by AI.
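The quoted query price can be turned into a rough per-volume estimate. This is a back-of-the-envelope sketch that uses only the two figures given above (6 cents per 1000 tokens, roughly 750 words per 1000 tokens); the constant and function names are hypothetical, and actual pricing may differ.

```python
PRICE_USD_PER_1K_TOKENS = 0.06  # figure quoted in the text
WORDS_PER_1K_TOKENS = 750       # rough conversion quoted in the text


def query_cost_usd(num_words: int) -> float:
    """Estimate the cost of processing num_words of text at the quoted rate."""
    tokens = num_words / WORDS_PER_1K_TOKENS * 1000
    return tokens / 1000 * PRICE_USD_PER_1K_TOKENS


print(f"${query_cost_usd(1_000_000):,.2f} per million words")
```

At this rate, processing a million words of queries comes to roughly 80 dollars.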

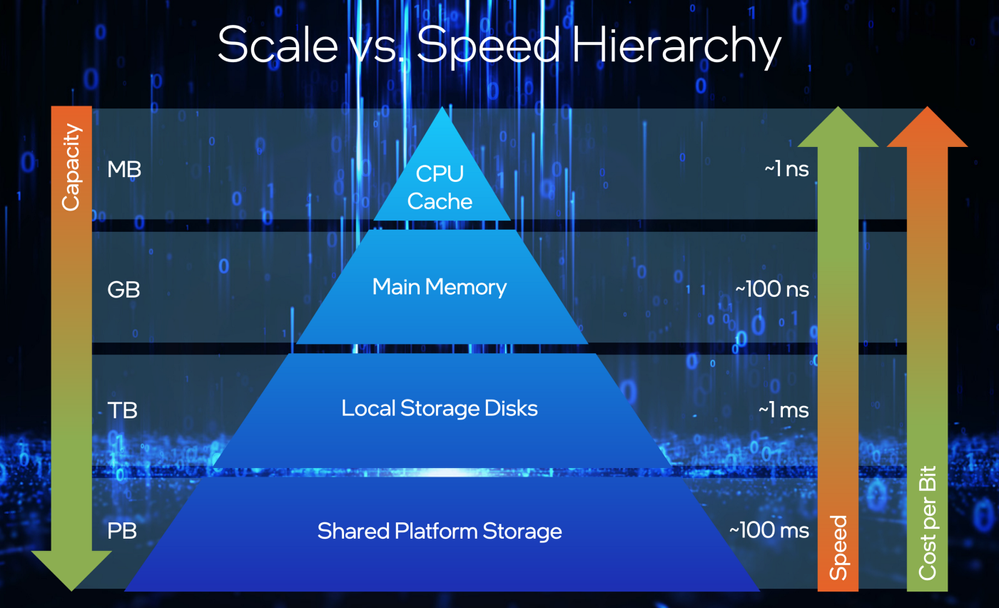

The way to address the contention between cost-effective expediency and ensuring access to all the needed information is to establish a tiered access structure. Important and frequently-required information should be most accessible, while less frequently used information needs to be stored in progressively slower repositories.

The architecture of modern computers is similar. CPU registers and caches deliver information within one nanosecond or less but have the least storage space (on the scale of megabytes). Meanwhile, larger constructs like shared platform storage retrieve information orders of magnitude more slowly (~100 ms) but are correspondingly larger in capacity (petabyte scale) and cheaper per bit.
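One way to see why the placement of information matters is to compute an average access time across tiers. The sketch below uses the two latencies mentioned above (about 1 ns and about 100 ms); the request fractions are hypothetical values chosen for illustration, and `expected_latency_s` is an illustrative name, not an established API.

```python
from typing import Iterable, Tuple


def expected_latency_s(tiers: Iterable[Tuple[float, float]]) -> float:
    """Average access time for (latency_seconds, fraction_of_requests) pairs."""
    tiers = list(tiers)
    total_fraction = sum(fraction for _, fraction in tiers)
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("request fractions must sum to 1")
    return sum(latency * fraction for latency, fraction in tiers)


FAST_S = 1e-9    # ~1 ns, registers and cache (from the text)
SLOW_S = 100e-3  # ~100 ms, shared platform storage (from the text)

# Hypothetical workloads: fraction of requests served by the fast tier.
for fast_fraction in (0.9, 0.999, 0.999999):
    avg = expected_latency_s([(FAST_S, fast_fraction), (SLOW_S, 1 - fast_fraction)])
    print(f"fast-tier share {fast_fraction}: average access {avg:.3e} s")
```

In this simple model the slow tier dominates the average unless nearly all requests are served from the fast tier, which is why the most frequently needed information belongs in the most accessible tier.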

Figure 3: In computer systems, high-speed modules are also the most expensive and have the lowest capacity.

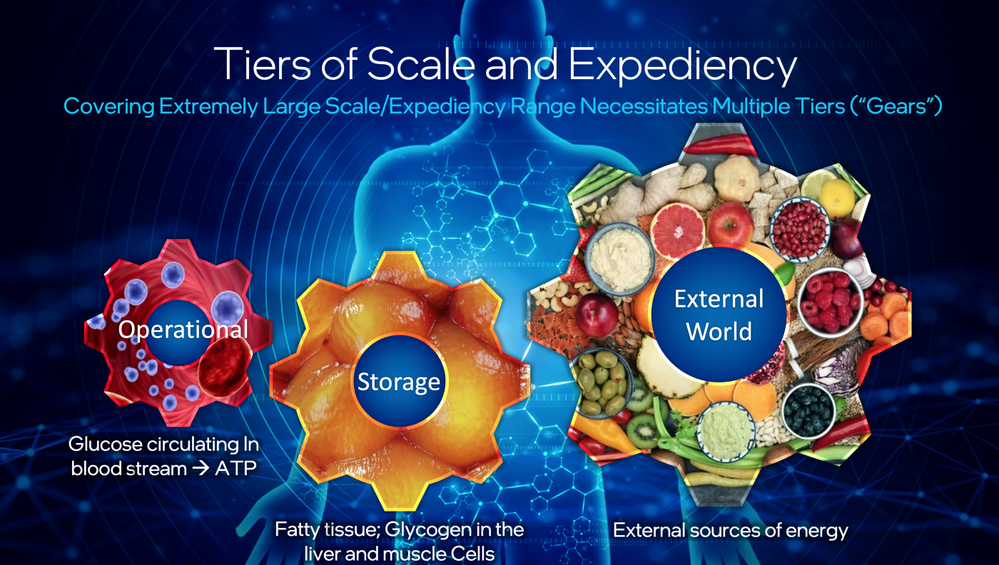

Similarly, nature shows abundant examples of tiered architectures. One example is how human bodies access energy. There is a readily available supply of glucose circulating in the bloodstream, which can be converted to ATP almost instantly. Glycogen stored in the liver, along with fat and muscle tissue, offers somewhat slower access. If the internal stores are depleted, the body can use an external energy source (food) to replenish them. Human bodies do not keep all energy instantaneously accessible, since doing so would be biologically prohibitive.

Figure 4: Efficient systems in nature are tiered.

If there is anything to learn from these examples, it is that there is no one rung to rule them all: systems that operate across multiple orders of magnitude and scope need to be stratified to be feasible. Applied to information in AI, this principle means that the trade-off between expediency and scale can be addressed by creating a stratified architecture for our knowledge systems. This prioritization of information access is the design constraint that systems like large language models violate by claiming to deliver scope and expediency uniformly.

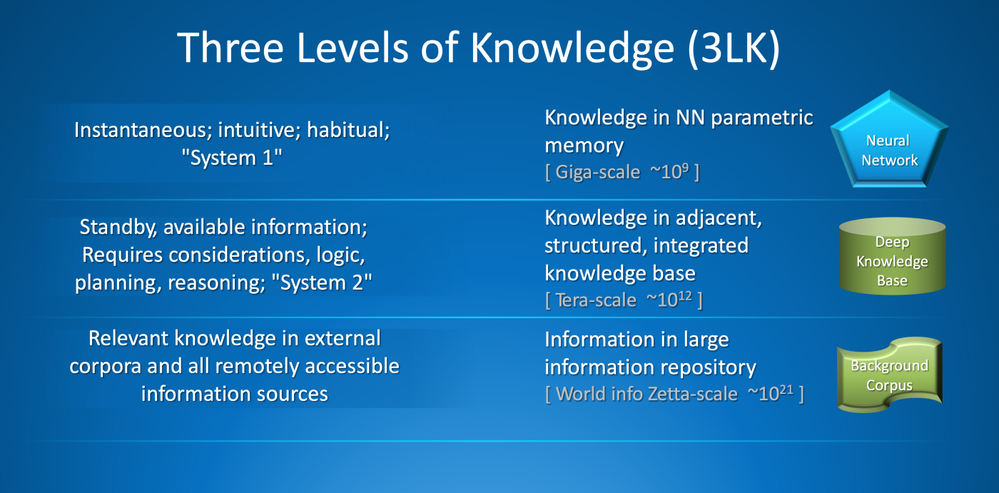

As previously described in the Thrill-K post, one approach to solving the prioritization constraint is dividing information used by the system (or, eventually, its knowledge) into three tiers: instantaneous, standby and retrieved external knowledge. The order of magnitude of these information tiers could roughly correspond to giga-, tera- and zetta-scale, as illustrated in Figure 5.

Figure 5: Information used by an AI system can be broken down into three tiers.

The choice of magnitudes in the above tiering is not arbitrary. According to Cisco, IP traffic will reach an annual run rate of 3.3 zettabytes in 2021. The average capacity of a hard disk drive will be 5.4 terabytes in 2021, which is also in line with the amount of data stored in what can be considered a very large database (VLDB). The full T5 model has 11 billion parameters.
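As a quick sanity check, the three figures above can be mapped to their SI prefixes. This is a small sketch; the helper `si_prefix` is hypothetical and only covers the giga-to-zetta range, and it treats parameter counts and byte counts alike as plain magnitudes, as the comparison in the text does.

```python
import math

SI_PREFIXES = {9: "giga", 12: "tera", 15: "peta", 18: "exa", 21: "zetta"}


def si_prefix(value: float) -> str:
    """Return the SI prefix for the nearest lower power of 1000 (giga to zetta only)."""
    exponent = int(math.floor(math.log10(value))) // 3 * 3
    return SI_PREFIXES[exponent]


figures = {
    "T5 parameters": 11e9,
    "average hard disk capacity (bytes)": 5.4e12,
    "annual IP traffic (bytes)": 3.3e21,
}
for name, value in figures.items():
    print(f"{name}: {si_prefix(value)}-scale")
```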

In AI systems of the future, the instantaneous knowledge tier can be directly stored in the parametric memory of the neural network. In contrast, standby knowledge can be refactored and stored in an adjacent, integrated structured knowledge base. When these two sources are insufficient, the system should retrieve external knowledge, for example, by searching through a background corpus or using sensors and actuators to collect data from its physical environment.
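The lookup order described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the Thrill-K design itself: plain dictionaries stand in for parametric memory and the structured knowledge base, a callable stands in for external retrieval, and the class name `TieredKnowledge` and all of its contents are hypothetical.

```python
from typing import Callable, Dict, Optional, Tuple


class TieredKnowledge:
    """Toy three-tier lookup: instantaneous, standby, then external retrieval."""

    def __init__(
        self,
        instantaneous: Dict[str, str],
        standby: Dict[str, str],
        retrieve_external: Callable[[str], Optional[str]],
    ) -> None:
        self.instantaneous = instantaneous  # stand-in for parametric memory
        self.standby = standby  # stand-in for a structured knowledge base
        self.retrieve_external = retrieve_external  # stand-in for corpus search or sensors

    def lookup(self, query: str) -> Tuple[Optional[str], str]:
        """Return (answer, tier), trying the fastest tier first."""
        if query in self.instantaneous:
            return self.instantaneous[query], "instantaneous"
        if query in self.standby:
            return self.standby[query], "standby"
        answer = self.retrieve_external(query)
        if answer is not None:
            return answer, "external"
        return None, "not found"


# Hypothetical contents, for illustration only.
corpus = {"distance from earth to sun": "about 150 million km"}
kb = TieredKnowledge(
    instantaneous={"San Francisco airport code": "SFO"},
    standby={"1992 Summer Olympics host": "Barcelona"},
    retrieve_external=corpus.get,
)
for q in ("San Francisco airport code", "1992 Summer Olympics host",
          "distance from earth to sun", "unknown question"):
    print(q, "->", kb.lookup(q))
```

The point of the sketch is the ordering: the slower, larger tiers are consulted only when the faster ones cannot answer, so the cost of the slow path is paid only for rarely needed information.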

With tiered knowledge-based systems, a sustainable cost of scaling can be achieved. Evolutionary pressure has forced the human brain to stay efficient and limit its energy consumption while growing its capabilities. Similarly, design constraints need to be applied to AI system architectures so that computational resources are stratified and matched to the varying expediency of information retrieval. Only then can AI systems reach the full scope of cognitive capabilities demanded by human industry and civilization without overtaxing their resources.

References

Thompson, N. C., Greenewald, K., Lee, K., & Manso, G. F. (2021, October 1). Deep Learning’s Diminishing Returns. IEEE Spectrum. https://spectrum.ieee.org/deep-learning-computational-cost

Wiggers, K. (2020, July 16). MIT researchers warn that deep learning is approaching computational limits. VentureBeat. https://venturebeat.com/2020/07/15/mit-researchers-warn-that-deep-learning-is-approaching-computational-limits/

Thompson, N. C. (2020, July 10). The Computational Limits of Deep Learning. ArXiv.Org. https://arxiv.org/abs/2007.05558

OpenAI API. (2021). https://beta.openai.com/pricing

Li, C. (2020, September 11). OpenAI’s GPT-3 Language Model: A Technical Overview. Lambda Blog. https://lambdalabs.com/blog/demystifying-gpt-3/#:%7E:text=1.%20We%20use,M

Singer, G. (2021, July 29). Thrill-K: A Blueprint for The Next Generation of Machine Intelligence. Medium. https://towardsdatascience.com/thrill-k-a-blueprint-for-the-next-generation-of-machine-intelligence-7ddacddfa0fe

Cisco. (2016). Global — 2021 Forecast Highlights. https://www.cisco.com/c/dam/m/en_us/solutions/service-provider/vni-forecast-highlights/pdf/Global_2021_Forecast_Highlights.pdf

Statista. (2021, August 12). Seagate global average hard disk drive capacity FY2015–2021. https://www.statista.com/statistics/795748/worldwide-seagate-average-hard-disk-drive-capacity/

Wikipedia contributors. (2021, October 16). Very large database. Wikipedia. https://en.wikipedia.org/wiki/Very_large_database

Exploring Transfer Learning with T5: the Text-To-Text Transfer Transformer. (2020, February 24). Google AI Blog. https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html#:%7E:text=T5%20is%20surprisingly%20good%20at,%2C%20and%20Natural%20Questions%2C%20respectively.
