
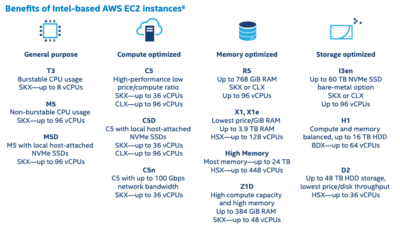

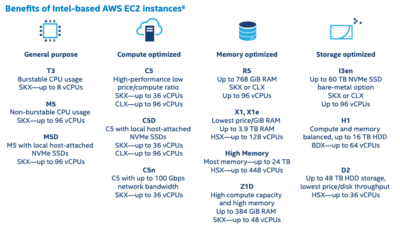

Amazon EC2 provides a wide selection of instance types optimized to run different workload categories. These instance types comprise varying combinations of CPU, memory, storage, and networking capacity and give you the flexibility to choose the appropriate mix of resources for your applications. Each instance type includes one or more instance sizes, allowing you to scale your resources to the requirements of your target workload.


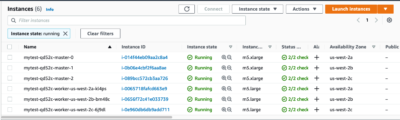

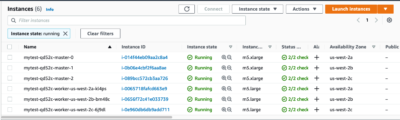


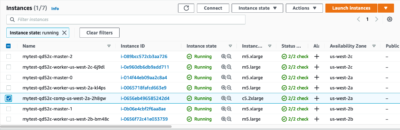

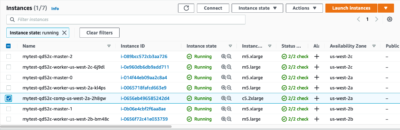


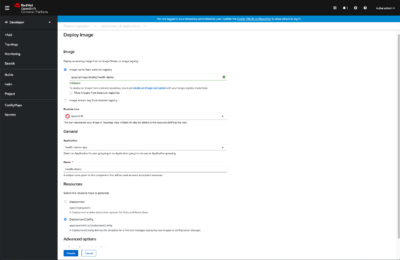

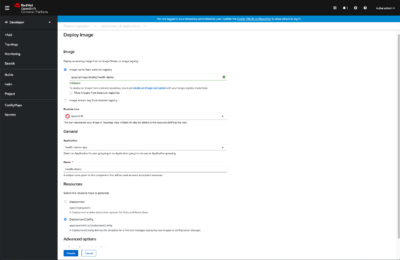


In this article we will look into the following:

- Select from Intel instances available on AWS.

- Install OpenShift on AWS.

- Add a c5.2xlarge instance with 2nd Generation Intel Xeon Scalable Processor (code name Cascade Lake) to the OpenShift cluster.

- Deploy an application such that the pods run on the newly added node.

AWS Instances with Intel Processors

Here we can see the AWS instances and the Intel Xeon Processors associated with them.

Source: https://www.intel.com/content/dam/www/public/us/en/documents/product-briefs/cloudtech-brief-aws.pdf

Install OpenShift on AWS

By default, the installer-provisioned infrastructure (IPI) installer creates three control plane (master) nodes (m5.xlarge) and three worker nodes (m5.large), spread across the Availability Zones of the region.

Note: to use different instance types with the installer, customize the instance type for the AWS installation as explained at https://access.redhat.com/solutions/3803201.

In OpenShift Container Platform, you can also install a customized cluster on Amazon Web Services (AWS). To customize the installation, you modify parameters in the install-config.yaml file before you install the cluster.
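A minimal sketch of what such a customization might look like. The field layout follows the AWS machine pool section of install-config.yaml as documented for the installer; the scratch file and the choice of c5.2xlarge for the workers are illustrative assumptions, not the configuration used in this article.

```shell
# Hypothetical scratch file. In a real install you would edit
# mydir/install-config.yaml instead of writing a fragment like this.
fragment=$(mktemp)

cat > "$fragment" <<'EOF'
controlPlane:
  name: master
  platform:
    aws:
      type: m5.xlarge
  replicas: 3
compute:
- name: worker
  platform:
    aws:
      type: c5.2xlarge
  replicas: 3
EOF

# List the instance types the fragment requests, control plane first.
instance_types=$(grep 'type:' "$fragment" | awk '{print $2}')
echo "$instance_types"
```

In a real run the file is generated with `openshift-install create install-config --dir=mydir`, the `type` fields are edited, and `create cluster` then consumes the edited file.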

For this article we will use the default IPI installer on AWS.

% ./openshift-install create cluster --dir=mydir

INFO Credentials loaded from the "default" profile in file "/Users/mshetty/.aws/credentials"

INFO Consuming Install Config from target directory

WARNING Following quotas ec2/L-0263D0A3 (us-west-2) are available but will be completely used pretty soon.

INFO Creating infrastructure resources...

INFO Waiting up to 20m0s for the Kubernetes API at https://api.mytest.ocp4-test-mshetty.com:6443.

INFO API v1.20.0+bafe72f up

INFO Waiting up to 30m0s for bootstrapping to complete...

INFO Destroying the bootstrap resources...

INFO Waiting up to 40m0s for the cluster at https://api.mytest.ocp4-test-mshetty.com:6443 to initialize...

INFO Waiting up to 10m0s for the openshift-console route to be created...

INFO Install complete!

INFO To access the cluster as the system:admin user when using 'oc', run 'export KUBECONFIG=/Users/mshetty/AWS/4.7/mydir/auth/kubeconfig'

INFO Access the OpenShift web-console here: https://console-openshift-console.apps.mytest.ocp4-test-mshetty.com

INFO Login to the console with user: "kubeadmin", and password: "sbGIs-shhAM-qPtS7-PrwTn"

INFO Time elapsed: 33m2s

After the deployment completes, you can log in to the cluster and see that the master and worker nodes are ready.

% oc get nodes

NAME STATUS ROLES AGE VERSION

ip-10-0-135-85.us-west-2.compute.internal Ready worker 4h8m v1.20.0+bafe72f

ip-10-0-155-129.us-west-2.compute.internal Ready master 4h18m v1.20.0+bafe72f

ip-10-0-164-249.us-west-2.compute.internal Ready worker 4h10m v1.20.0+bafe72f

ip-10-0-189-65.us-west-2.compute.internal Ready master 4h17m v1.20.0+bafe72f

ip-10-0-193-29.us-west-2.compute.internal Ready master 4h18m v1.20.0+bafe72f

ip-10-0-214-229.us-west-2.compute.internal Ready worker 4h8m v1.20.0+bafe72f
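As a quick check that the listing matches the expected three masters and three workers, the rows can be tallied per role. This is a sketch: `count_ready_by_role` is a hypothetical helper name, and the sample rows are copied from the output above so the snippet runs without a cluster.

```shell
# Count Ready nodes per role from `oc get nodes` output read on stdin.
count_ready_by_role() {
  awk 'NR > 1 && $2 == "Ready" { n[$3]++ }
       END { for (r in n) print r, n[r] }' | sort
}

# Sample rows copied from the listing above.
sample='NAME STATUS ROLES AGE VERSION
ip-10-0-135-85.us-west-2.compute.internal Ready worker 4h8m v1.20.0+bafe72f
ip-10-0-155-129.us-west-2.compute.internal Ready master 4h18m v1.20.0+bafe72f
ip-10-0-164-249.us-west-2.compute.internal Ready worker 4h10m v1.20.0+bafe72f
ip-10-0-189-65.us-west-2.compute.internal Ready master 4h17m v1.20.0+bafe72f
ip-10-0-193-29.us-west-2.compute.internal Ready master 4h18m v1.20.0+bafe72f
ip-10-0-214-229.us-west-2.compute.internal Ready worker 4h8m v1.20.0+bafe72f'

summary=$(printf '%s\n' "$sample" | count_ready_by_role)
echo "$summary"
```

Against a live cluster the same helper can be fed directly: `oc get nodes | count_ready_by_role`.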

The “oc describe node” command gives us detailed information about a node. As we see below, it reports the Labels, Capacity, System Info, and more for the node.

% oc describe node ip-10-0-214-229.us-west-2.compute.internal

Name: ip-10-0-214-229.us-west-2.compute.internal

Roles: worker

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/instance-type=m5.large

beta.kubernetes.io/os=linux

failure-domain.beta.kubernetes.io/region=us-west-2

failure-domain.beta.kubernetes.io/zone=us-west-2c

kubernetes.io/arch=amd64

kubernetes.io/hostname=ip-10-0-214-229

kubernetes.io/os=linux

node-role.kubernetes.io/worker=

node.kubernetes.io/instance-type=m5.large

node.openshift.io/os_id=rhcos

topology.ebs.csi.aws.com/zone=us-west-2c

topology.kubernetes.io/region=us-west-2

topology.kubernetes.io/zone=us-west-2c

Annotations: csi.volume.kubernetes.io/nodeid: {"ebs.csi.aws.com":"i-0e960db6db9add711"}

machine.openshift.io/machine: openshift-machine-api/mytest-qd52c-worker-us-west-2c-6j9dl

machineconfiguration.openshift.io/currentConfig: rendered-worker-640ffae9c463a45ced757500a238cfcf

machineconfiguration.openshift.io/desiredConfig: rendered-worker-640ffae9c463a45ced757500a238cfcf

machineconfiguration.openshift.io/reason:

machineconfiguration.openshift.io/state: Done

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Wed, 21 Apr 2021 10:45:04 -0700

Taints: <none>

Unschedulable: false

Lease:

HolderIdentity: ip-10-0-214-229.us-west-2.compute.internal

AcquireTime: <unset>

RenewTime: Wed, 21 Apr 2021 14:50:07 -0700

Conditions:

Type Status LastHeartbeatTime LastTransitionTime Reason Message

---- ------ ----------------- ------------------ ------ -------

MemoryPressure False Wed, 21 Apr 2021 14:46:47 -0700 Wed, 21 Apr 2021 10:45:03 -0700 KubeletHasSufficientMemory kubelet has sufficient memory available

DiskPressure False Wed, 21 Apr 2021 14:46:47 -0700 Wed, 21 Apr 2021 10:45:03 -0700 KubeletHasNoDiskPressure kubelet has no disk pressure

PIDPressure False Wed, 21 Apr 2021 14:46:47 -0700 Wed, 21 Apr 2021 10:45:03 -0700 KubeletHasSufficientPID kubelet has sufficient PID available

Ready True Wed, 21 Apr 2021 14:46:47 -0700 Wed, 21 Apr 2021 10:46:24 -0700 KubeletReady kubelet is posting ready status

Addresses:

InternalIP: 10.0.214.229

Hostname: ip-10-0-214-229.us-west-2.compute.internal

InternalDNS: ip-10-0-214-229.us-west-2.compute.internal

Capacity:

attachable-volumes-aws-ebs: 25

cpu: 2

ephemeral-storage: 125293548Ki

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 7936744Ki

pods: 250

Allocatable:

attachable-volumes-aws-ebs: 25

cpu: 1500m

ephemeral-storage: 114396791822

hugepages-1Gi: 0

hugepages-2Mi: 0

memory: 6785768Ki

pods: 250

System Info:

Machine ID: ec240bd55efb903fd58499d67a7fc761

System UUID: ec240bd5-5efb-903f-d584-99d67a7fc761

Boot ID: 6897e7a9-660e-43e5-b0a7-7b8a8b1bf9ce

Kernel Version: 4.18.0-240.15.1.el8_3.x86_64

OS Image: Red Hat Enterprise Linux CoreOS 47.83.202104010243-0 (Ootpa)

Operating System: linux

Architecture: amd64

Container Runtime Version: cri-o://1.20.2-4.rhaos4.7.gitd5a999a.el8

Kubelet Version: v1.20.0+bafe72f

Kube-Proxy Version: v1.20.0+bafe72f

ProviderID: aws:///us-west-2c/i-0e960db6db9add711

Non-terminated Pods: (16 in total)

Namespace Name CPU Requests CPU Limits Memory Requests Memory Limits AGE

--------- ---- ------------ ---------- --------------- ------------- ---

openshift-cluster-csi-drivers aws-ebs-csi-driver-node-vvxwp 30m (2%) 0 (0%) 150Mi (2%) 0 (0%) 4h5m

openshift-cluster-node-tuning-operator tuned-xx8dc 10m (0%) 0 (0%) 50Mi (0%) 0 (0%) 4h5m

openshift-dns dns-default-4bh75 65m (4%) 0 (0%) 131Mi (1%) 0 (0%) 4h5m

openshift-image-registry node-ca-rcnrc 10m (0%) 0 (0%) 10Mi (0%) 0 (0%) 4h5m

openshift-ingress-canary ingress-canary-q5dpl 10m (0%) 0 (0%) 20Mi (0%) 0 (0%) 4h3m

openshift-machine-config-operator machine-config-daemon-xl9tx 40m (2%) 0 (0%) 100Mi (1%) 0 (0%) 4h5m

openshift-marketplace redhat-marketplace-c8sfx 10m (0%) 0 (0%) 50Mi (0%) 0 (0%) 104m

openshift-monitoring grafana-7874c696f6-v8498 5m (0%) 0 (0%) 120Mi (1%) 0 (0%) 3h59m

openshift-monitoring node-exporter-z4zk6 9m (0%) 0 (0%) 210Mi (3%) 0 (0%) 4h5m

openshift-monitoring prometheus-k8s-1 76m (5%) 0 (0%) 1204Mi (18%) 0 (0%) 3h59m

openshift-monitoring thanos-querier-8479f8f5cd-c6kvj 9m (0%) 0 (0%) 92Mi (1%) 0 (0%) 3h59m

openshift-multus multus-28dth 10m (0%) 0 (0%) 150Mi (2%) 0 (0%) 4h5m

openshift-multus network-metrics-daemon-wnw5r 20m (1%) 0 (0%) 120Mi (1%) 0 (0%) 4h5m

openshift-network-diagnostics network-check-target-5crw2 10m (0%) 0 (0%) 15Mi (0%) 0 (0%) 4h5m

openshift-sdn ovs-gx5g2 15m (1%) 0 (0%) 400Mi (6%) 0 (0%) 4h5m

openshift-sdn sdn-7kr86 110m (7%) 0 (0%) 220Mi (3%) 0 (0%) 4h5m

Allocated resources:

(Total limits may be over 100 percent, i.e., overcommitted.)

Resource Requests Limits

-------- -------- ------

cpu 439m (29%) 0 (0%)

memory 3042Mi (45%) 0 (0%)

ephemeral-storage 0 (0%) 0 (0%)

hugepages-1Gi 0 (0%) 0 (0%)

hugepages-2Mi 0 (0%) 0 (0%)

attachable-volumes-aws-ebs 0 0

Events: <none>
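The percentages in the “Allocated resources” section are computed against Allocatable, not Capacity. The sketch below reproduces the 29% and 45% figures from the numbers in this output. Truncating rather than rounding is an inference from those figures, not something taken from the tool's documentation, and `pct` is a hypothetical helper name.

```shell
# Integer percentage of a request against allocatable, truncated toward zero.
pct() {
  echo $(( $1 * 100 / $2 ))
}

cpu_pct=$(pct 439 1500)                     # CPU: 439m requested of 1500m allocatable
mem_pct=$(pct $(( 3042 * 1024 )) 6785768)   # memory in Ki: 3042Mi requested of 6785768Ki
reserved_cpu=$(( 2 * 1000 - 1500 ))         # capacity (2 cores) minus allocatable, in millicores

echo "cpu ${cpu_pct}%  memory ${mem_pct}%  reserved cpu ${reserved_cpu}m"
```

The last value shows that 500m of the two cores on this m5.large worker are held back from pod scheduling, which is why Allocatable reports 1500m.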

You can display memory and CPU usage statistics about nodes, which provide the runtime environments for containers.

% oc adm top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-10-0-135-85.us-west-2.compute.internal 271m 18% 2543Mi 38%

ip-10-0-155-129.us-west-2.compute.internal 617m 17% 5347Mi 37%

ip-10-0-164-249.us-west-2.compute.internal 213m 14% 3169Mi 47%

ip-10-0-189-65.us-west-2.compute.internal 536m 15% 5404Mi 37%

ip-10-0-193-29.us-west-2.compute.internal 678m 19% 7156Mi 48%

ip-10-0-214-229.us-west-2.compute.internal 321m 21% 2903Mi 43%
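To pick out the most loaded node from this kind of listing, the CPU% column can be scanned with awk. A small sketch, with `busiest_node` as a hypothetical helper name and the sample rows copied from the output above:

```shell
# Print the node with the highest CPU% from `oc adm top nodes` output on stdin.
busiest_node() {
  awk 'NR > 1 {
         p = $3; sub(/%/, "", p)            # strip the percent sign from CPU%
         if (p + 0 > max) { max = p + 0; name = $1 }
       }
       END { print name, max "%" }'
}

# Sample rows copied from the listing above.
sample='NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%
ip-10-0-135-85.us-west-2.compute.internal 271m 18% 2543Mi 38%
ip-10-0-155-129.us-west-2.compute.internal 617m 17% 5347Mi 37%
ip-10-0-164-249.us-west-2.compute.internal 213m 14% 3169Mi 47%
ip-10-0-189-65.us-west-2.compute.internal 536m 15% 5404Mi 37%
ip-10-0-193-29.us-west-2.compute.internal 678m 19% 7156Mi 48%
ip-10-0-214-229.us-west-2.compute.internal 321m 21% 2903Mi 43%'

busiest=$(printf '%s\n' "$sample" | busiest_node)
echo "$busiest"
```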

The screenshot below shows the AWS instances created by IPI for the OpenShift cluster.

Add a c5.2xlarge instance to the OpenShift cluster

We will now see how to add a c5.2xlarge instance to the OpenShift cluster using the OpenShift CLI.

MachineSets are groups of machines. MachineSets are to machines as ReplicaSets are to pods. If you need more machines or must scale them down, you change the replica count on the MachineSet to meet your compute needs.
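Scaling an existing MachineSet is a single `oc scale` call. The sketch below only prints the command so that it can be reviewed before it touches a cluster; the helper name `scale_machineset_cmd` and the target of 2 replicas are illustrative assumptions.

```shell
# Print (do not run) the command that sets the replica count of a machine set.
scale_machineset_cmd() {
  machineset=$1
  replicas=$2
  echo "oc scale machineset $machineset --replicas=$replicas -n openshift-machine-api"
}

cmd=$(scale_machineset_cmd mytest-qd52c-worker-us-west-2a 2)
echo "$cmd"
```

Running the printed command asks the Machine API to bring the MachineSet to the requested number of machines, much as scaling a ReplicaSet changes the number of pods.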

# Select the first machineset

% SOURCE_MACHINESET=$(oc get machineset -n openshift-machine-api -o name | head -n1)

# Reformat with jq, for better diff result.

% oc get -o json -n openshift-machine-api "$SOURCE_MACHINESET" | jq -r '.' > /tmp/source-machineset.json

% OLD_MACHINESET_NAME=$(jq '.metadata.name' -r /tmp/source-machineset.json )

% NEW_MACHINESET_NAME=${OLD_MACHINESET_NAME/worker/comp}

# Change instanceType to the c5.2xlarge instance that we are adding, and delete the server-generated metadata fields

% jq -r '.spec.template.spec.providerSpec.value.instanceType = "c5.2xlarge" | del(.metadata.selfLink) | del(.metadata.uid) | del(.metadata.creationTimestamp) | del(.metadata.resourceVersion)' /tmp/source-machineset.json > /tmp/comp-machineset.json

# Change machineset name

% sed -i "s/$OLD_MACHINESET_NAME/$NEW_MACHINESET_NAME/g" /tmp/comp-machineset.json

# Check changes via diff

% diff -Nuar /tmp/source-machineset.json /tmp/comp-machineset.json

Create the machine set

% oc create -f /tmp/comp-machineset.json

machineset.machine.openshift.io/mytest-qd52c-comp-us-west-2a created
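The created name, mytest-qd52c-comp-us-west-2a, corresponds to replacing `worker` with `comp` in the source MachineSet name. The rename can be checked in isolation before it is applied to the JSON file. This sketch uses sed as a portable alternative to shell parameter expansion; the source name is inferred from the MachineSet listing in this article.

```shell
# Source machineset name, inferred from the listing in this article.
old_name="mytest-qd52c-worker-us-west-2a"

# Replace the first occurrence of "worker" to derive the new machineset name.
new_name=$(printf '%s\n' "$old_name" | sed 's/worker/comp/')
echo "$new_name"

# Warn if the substitution changed nothing, which would make
# `oc create` collide with the existing machineset.
if [ "$new_name" = "$old_name" ]; then
  echo "name unchanged; check the pattern" >&2
fi
```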

The output below shows the new machineset “mytest-qd52c-comp-us-west-2a” being created. It takes a couple of minutes to become ready.

% oc get machineset -n openshift-machine-api

NAME DESIRED CURRENT READY AVAILABLE AGE

mytest-qd52c-comp-us-west-2a 1 1 106s

mytest-qd52c-worker-us-west-2a 1 1 1 1 9h

mytest-qd52c-worker-us-west-2b 1 1 1 1 9h

mytest-qd52c-worker-us-west-2c 1 1 1 1 9h

mytest-qd52c-worker-us-west-2d 0 0 9h

% oc get machineset -n openshift-machine-api

NAME DESIRED CURRENT READY AVAILABLE AGE

mytest-qd52c-comp-us-west-2a 1 1 1 1 4m44s

mytest-qd52c-worker-us-west-2a 1 1 1 1 9h

mytest-qd52c-worker-us-west-2b 1 1 1 1 9h

mytest-qd52c-worker-us-west-2c 1 1 1 1 9h

mytest-qd52c-worker-us-west-2d 0 0 9h
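The difference between the two listings is that READY and AVAILABLE are empty while the machine is still provisioning. A sketch of a readiness check built on that observation follows. `machineset_ready` is a hypothetical helper, and it relies on the table layout shown above rather than on a structured query.

```shell
# Succeeds when the named machine set reports READY equal to DESIRED.
# Reads `oc get machineset` output on stdin. While provisioning, the READY
# and AVAILABLE columns are empty, so the row has four fields instead of six.
machineset_ready() {
  awk -v name="$1" '$1 == name { found = 1; ok = (NF == 6 && $2 == $4) }
                    END { exit !(found && ok) }'
}

# Sample rows copied from the two listings above.
pending='NAME DESIRED CURRENT READY AVAILABLE AGE
mytest-qd52c-comp-us-west-2a 1 1 106s'
ready='NAME DESIRED CURRENT READY AVAILABLE AGE
mytest-qd52c-comp-us-west-2a 1 1 1 1 4m44s'

for sample in "$pending" "$ready"; do
  if printf '%s\n' "$sample" | machineset_ready mytest-qd52c-comp-us-west-2a; then
    echo "ready"
  else
    echo "not ready yet"
  fi
done
```

In a polling loop, the helper would be fed from `oc get machineset -n openshift-machine-api` with a sleep between attempts.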

We can see on the AWS console that a new c5.2xlarge instance has been created.

% oc get nodes

NAME STATUS ROLES AGE VERSION

ip-10-0-128-195.us-west-2.compute.internal Ready worker 70s v1.20.0+bafe72f

ip-10-0-135-85.us-west-2.compute.internal Ready worker 9h v1.20.0+bafe72f

ip-10-0-155-129.us-west-2.compute.internal Ready master 9h v1.20.0+bafe72f

ip-10-0-164-249.us-west-2.compute.internal Ready worker 9h v1.20.0+bafe72f

ip-10-0-189-65.us-west-2.compute.internal Ready master 9h v1.20.0+bafe72f

ip-10-0-193-29.us-west-2.compute.internal Ready master 9h v1.20.0+bafe72f

ip-10-0-214-229.us-west-2.compute.internal Ready worker 9h v1.20.0+bafe72f

NOTE: For high availability, create machine sets like the one above in at least two Availability Zones of the region.

Deploy an application on the newly added node

Label the new node “intel=comp-optimized”

% oc label node ip-10-0-128-195.us-west-2.compute.internal intel=comp-optimized

node/ip-10-0-128-195.us-west-2.compute.internal labeled


Create a new project called myproject with the node selector set to “intel=comp-optimized”

% oc adm new-project myproject --node-selector "intel=comp-optimized"

Created project myproject
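With a project-level node selector, pods in the project are only scheduled onto nodes that carry every key=value pair in the selector. The sketch below models that matching rule in plain shell. `selector_matches` is a hypothetical helper, and the label lists are abbreviated, with the c5.2xlarge instance-type label assumed rather than copied from real output.

```shell
# Succeeds when every key=value pair in the comma-separated selector ($1)
# appears as a whole line in the newline-separated node label list ($2).
selector_matches() {
  selector=$1
  labels=$2
  old_ifs=$IFS
  IFS=,
  for pair in $selector; do
    if ! printf '%s\n' "$labels" | grep -qxF -- "$pair"; then
      IFS=$old_ifs
      return 1
    fi
  done
  IFS=$old_ifs
  return 0
}

# Abbreviated label sets; the instance-type value for the new node is assumed.
new_node_labels='node.kubernetes.io/instance-type=c5.2xlarge
node-role.kubernetes.io/worker=
intel=comp-optimized'
old_node_labels='node.kubernetes.io/instance-type=m5.large
node-role.kubernetes.io/worker='

selector_matches "intel=comp-optimized" "$new_node_labels" && echo "new node: eligible"
selector_matches "intel=comp-optimized" "$old_node_labels" || echo "old node: not eligible"
```

Only the labeled c5.2xlarge node satisfies the selector, so workloads in myproject are confined to it.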

Next, I deployed an image of the health-demo application from Quay (quay.io/mayurshetty/health-demo).

We can see that the health-demo pod was started on the new c5.2xlarge instance.

% oc get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

health-demo-1-cxzkf 1/1 Running 0 2m45s 10.131.0.13 ip-10-0-128-195.us-west-2.compute.internal <none> <none>

health-demo-1-deploy 0/1 Completed 0 2m51s 10.131.0.12 ip-10-0-128-195.us-west-2.compute.internal <none> <none>
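To confirm placement without reading the table by eye, the NODE column can be extracted for the running pods. A small sketch, with `running_pod_nodes` as a hypothetical helper name and the sample rows copied from the output above:

```shell
# Print "pod node" for each Running pod from `oc get pods -o wide` on stdin.
running_pod_nodes() {
  awk 'NR > 1 && $3 == "Running" { print $1, $7 }'
}

# Sample rows copied from the listing above.
sample='NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
health-demo-1-cxzkf 1/1 Running 0 2m45s 10.131.0.13 ip-10-0-128-195.us-west-2.compute.internal <none> <none>
health-demo-1-deploy 0/1 Completed 0 2m51s 10.131.0.12 ip-10-0-128-195.us-west-2.compute.internal <none> <none>'

placement=$(printf '%s\n' "$sample" | running_pod_nodes)
echo "$placement"
```

The node name printed here is the same ip-10-0-128-195 node that was labeled intel=comp-optimized earlier.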


Conclusion

In this post we have seen the various AWS EC2 instance types available for the different workload categories. We also looked at how OpenShift gives us the flexibility to add EC2 instances with Intel Xeon Processors to an OpenShift cluster running on AWS, and how we can deploy our application to run on the new instance.

Reference

- Changing instance type with AWS installation

- How to force a pod to schedule to a specific node using nodeSelector in OpenShift.

Written by Mayur Shetty, Principal Solution Architect, Red Hat and Raghu Moorthy, Principal Engineer, Intel Inc.
