Hello,

I want to communicate between two FPGAs (root port and endpoint) over a PCIe ver. 1.1 x1 link.

I use Quartus 13.1.

I have downloaded Design Example - PCIe Rootport Examples from

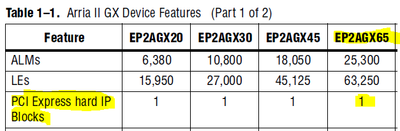

I adapted this Basic Root Port demo to my hardware. I have an Arria II GX FPGA Development Kit board (configured as a root port) and my own board with an Arria II GX EP2AGX65DF25C6 (configured as an endpoint).

My problem is that the LTSSM never reaches the L0 state. When I monitor the LTSSM signal:

1) In the Rootport, the state machine toggles between states 00 and 01 (detect.quiet and detect.active).

2) In the Endpoint, the state machine toggles between states 00, 01 and 02 (detect.quiet, detect.active and polling.active).
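To make captured LTSSM values easier to read, here is a minimal Python sketch that maps the codes mentioned above to state names and collapses a capture into the sequence of visited states. The helper names (`decode_ltssm`, `summarize`, `reached_l0`) are hypothetical, and the `0x0F` code for L0 is an assumption that should be checked against the user guide for the IP version in use.

```python
# LTSSM codes 0x00..0x02 are the ones reported in this thread.
# ASSUMPTION: 0x0F is the L0 code; verify against your IP documentation.
LTSSM_STATES = {
    0x00: "detect.quiet",
    0x01: "detect.active",
    0x02: "polling.active",
    0x0F: "L0",
}


def decode_ltssm(code: int) -> str:
    """Return a readable name for a 5-bit LTSSM code."""
    code &= 0x1F
    return LTSSM_STATES.get(code, f"unknown(0x{code:02X})")


def summarize(samples):
    """Collapse consecutive duplicates into the list of visited states."""
    visited = []
    for sample in samples:
        name = decode_ltssm(sample)
        if not visited or visited[-1] != name:
            visited.append(name)
    return visited


def reached_l0(samples) -> bool:
    """True if any captured sample decodes to L0."""
    return any(decode_ltssm(s) == "L0" for s in samples)


# Hypothetical capture resembling the Endpoint behaviour described above.
capture = [0x00, 0x00, 0x01, 0x02, 0x02, 0x00, 0x01]
print(summarize(capture))
print(reached_l0(capture))
```

A capture exported from a logic analyzer could be fed to `summarize` to see at a glance where training falls back to detect.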

On the Arria kit I have configured the PCIe HARD IP as Rootport, and on my own board I use the PCIe SOFT IP configured as Endpoint. I need to use the SOFT IP in this case because the TX and RX link (x1) is routed on the PCB to FPGA transceiver GXB_7, which is not available to the PCIe HARD IP.

I have connected TX port from Rootport to RX port of Endpoint and RX port from Rootport to TX port on Endpoint.

AC coupling capacitors:

1) The Arria kit has a 100nF AC coupling capacitor on the TX line and no capacitor on the RX line.

2) My own board (EP2AGX65DF25C6) has no capacitor on the TX line and a 10nF AC coupling capacitor on the RX line.

Can you please help me solve this problem? I have attached my Quartus projects for the Rootport and the Endpoint.

Thank you very much.

JD

The endpoint_project for your own board (EP2AGX65DF25C6) contains the pin assignments listed below in endpoint_project.qsf.

These assignments map to the GXB_RX7 and GXB_TX7 pins (transceiver channel 7). This seems incorrect: an x1 link should use the first transceiver channel, which is GXB_RX0 (AA23/AA24) and GXB_TX0 (Y21/Y22).

Please refer to Arria II GX Device Pin-Out Files for EP2AGX65 available at https://www.intel.com/content/www/us/en/support/programmable/support-resources/devices/lit-dp.html

set_location_assignment PIN_C23 -to pcie_rx_p[0]

set_location_assignment PIN_C24 -to "pcie_rx_p[0](n)"

set_location_assignment PIN_B21 -to pcie_tx_p[0]

set_location_assignment PIN_B22 -to "pcie_tx_p[0](n)"

Also, the endpoint_project does not seem to have assignments for refclk and pcie_rstn.
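A check like this can be automated. The sketch below, written in Python as an illustration only, scans the text of a .qsf file for `set_location_assignment` lines and reports which required signals have no pin assigned. The helper names are hypothetical, and the signal names `refclk` and `pcie_rstn` are taken from this thread; the real top-level port names may differ, and a signal driven internally (for example from a PLL) legitimately has no pin.

```python
import re

# Matches lines such as: set_location_assignment PIN_C23 -to pcie_rx_p[0]
# The target may be wrapped in double quotes, e.g. "pcie_rx_p[0](n)".
_ASSIGN_RE = re.compile(
    r'^\s*set_location_assignment\s+(\S+)\s+-to\s+"?([^"\s]+)"?'
)


def located_signals(qsf_text: str) -> dict:
    """Map each signal name to the pin it is assigned to."""
    signals = {}
    for line in qsf_text.splitlines():
        match = _ASSIGN_RE.match(line)
        if match:
            pin, target = match.groups()
            signals[target] = pin
    return signals


def missing_assignments(qsf_text: str, required) -> list:
    """Return the required signals that have no location assignment."""
    located = located_signals(qsf_text)
    return [name for name in required if name not in located]


# The four assignments quoted in this post.
QSF_SAMPLE = '''
set_location_assignment PIN_C23 -to pcie_rx_p[0]
set_location_assignment PIN_C24 -to "pcie_rx_p[0](n)"
set_location_assignment PIN_B21 -to pcie_tx_p[0]
set_location_assignment PIN_B22 -to "pcie_tx_p[0](n)"
'''

print(missing_assignments(QSF_SAMPLE, ["pcie_rx_p[0]", "refclk", "pcie_rstn"]))
```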

Page 12 of Application Note AN456 lists links to many PCIe endpoint reference designs, including ones for Arria II GX.

You can download the Arria II GX reference design and port it to the OPN used on your own board.

AN456: http://www.altera.com/literature/an/an456.pdf?#page=12

Hello, thank you for your reply.

1) On my own board Endpoint (EP2AGX65DF25C6): yes, the assignments are connected to pins GXB_RX7 and GXB_TX7 (channel 7). This is possible (correct), because I use the SOFT IP of PCIe. Quartus gives no errors about it and compilation is successful. I think only HARD IP requires the x1 link to be connected to GXB_RX0 and GXB_TX0.

2) On my own board Endpoint (EP2AGX65DF25C6), refclk is connected in the top-level entity to a 125 MHz PLL output ( .refclk ( qsys_pll_c0_125m_clk )). On the PCB I have a 62.5 MHz clock generator, and I use an FPGA PLL to generate 125 MHz for refclk. Is it possible in Arria II GX to connect a PLL output as refclk? I think yes, since many different clock sources can be connected as the refclk of the transceiver block.

3) pcie_rstn is connected in the top-level entity ( .pcie_rstn ( pcie_in_reset_n ) , ). This reset is derived from the cpu_reset_n pin and the PLL_locked signal.

The problem with the LTSSM (it never reaches the L0 state) remains as I described. Can it be caused by the AC coupling capacitors? As I wrote, I have:

An x1 link, which means 2 differential pairs (RX and TX). On the first differential pair I have 110nF of AC coupling capacitance, and on the second differential pair I have no AC coupling capacitor.
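As a quick arithmetic check on these values: if the 100nF capacitor on the kit's TX line and the 10nF capacitor on the custom board's RX line end up in series on the same differential pair (an assumption based on the wiring described earlier in the thread), they combine as 1/C = 1/C1 + 1/C2, so the effective value is smaller than either part rather than their sum. The Python sketch below works that out. The 75nF to 200nF window used in `in_range` is the commonly quoted PCIe AC coupling range and is treated here as an assumption; the function names are hypothetical.

```python
def series_capacitance(*caps_nf: float) -> float:
    """Effective capacitance (nF) of capacitors connected in series."""
    return 1.0 / sum(1.0 / c for c in caps_nf)


def in_range(c_nf: float, low: float = 75.0, high: float = 200.0) -> bool:
    """Check a value against an assumed 75..200 nF coupling window."""
    return low <= c_nf <= high


# Assumed series combination of the two capacitors mentioned in the thread.
c_eff = series_capacitance(100.0, 10.0)
print(round(c_eff, 2))   # effective value in nF
print(in_range(c_eff))   # is the series combination inside the window?
print(in_range(100.0))   # a single 100 nF part, for comparison
```

Under that assumption, one pair would see roughly 9nF and the other pair would have no AC coupling at all.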

Thank you for your answer.

JD

1) Noted that your endpoint is using soft IP.

2) An RX line capacitor value in the range of 75-200nF should be fine.

3) In the usual use case, the PCIe refclk is a 100-MHz differential input that is driven from the PC motherboard to the board through the PCIe edge connector.

In that case, the Arria II GX PCIe core has an option 'Link common clock', which is enabled when the PCIe core and the host share the same refclk.

However, in your setup, the refclk at the endpoint is generated by another IC on the board itself. So please make sure your endpoint design does not enable 'Link common clock'.

A few concerns here:

a) The PCI Express Base Specification requires the refclk signal to comply with a ±300 PPM tolerance. I am not sure whether the clock generator on your board meets this requirement.

b) Refclk must be free running and stable during PCIe link training. In your case, the path is: 62.5 MHz clock generator --> PLL generating 125 MHz --> PCIe refclk.

It is possible that this refclk is not yet stable during link training, and it is also not free running because it relies on the FPGA PLL output.

You can consider using SignalTap on refclk, pcie_rstn, npor and dl_ltssm to observe the behaviour.

4) Another test you can try: after the whole setup is powered up, trigger a second cpu_reset to make link training take place again.
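For concern a), the ±300 PPM figure can be checked against a measured frequency with a few lines of arithmetic. The Python sketch below is only an illustration: the function names are hypothetical and the measured value is made up, not taken from the board in this thread. It also shows that an ideal PLL multiplication (62.5 MHz x 2 = 125 MHz) leaves the relative offset in PPM unchanged.

```python
def ppm_error(measured_hz: float, nominal_hz: float) -> float:
    """Frequency offset of a measured clock from nominal, in PPM."""
    return (measured_hz - nominal_hz) / nominal_hz * 1e6


def within_tolerance(measured_hz: float, nominal_hz: float,
                     limit_ppm: float = 300.0) -> bool:
    """True if the offset magnitude is within the given PPM limit."""
    return abs(ppm_error(measured_hz, nominal_hz)) <= limit_ppm


def pll_output(input_hz: float, multiply: int, divide: int = 1) -> float:
    """Output frequency of an ideal PLL with integer multiply/divide."""
    return input_hz * multiply / divide


# Hypothetical measurement: oscillator running 5 kHz above 62.5 MHz.
measured_osc = 62_505_000.0
print(ppm_error(measured_osc, 62_500_000.0))          # offset at the oscillator
refclk = pll_output(measured_osc, multiply=2)
print(ppm_error(refclk, 125_000_000.0))               # same offset after the PLL
print(within_tolerance(refclk, 125_000_000.0))
```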

Hello, thanks for your support.

Now it is working: the LTSSM reached the L0 state and the links are ready to communicate. The problem was caused by the AC coupling capacitors. When I fitted the right value (100nF) on both lines, the LTSSM reached the L0 state.

1) I know that. I must use SOFT IP in the Endpoint, because I use an x1 link and the RX/TX lines on the PCB are routed to the Arria II GX GXB_7 pins. (This is not available in HARD IP.)

2) I have a 100nF AC coupling capacitor on both lines.

3) 'Link common clock' is disabled (in both Rootport and Endpoint). I use a CDCM61002RHB clock generator for the 62.5 MHz clock.

4) When I reset either side, link training takes place again and the LTSSM goes to L0.

I have another question. I have now generated the SOFT IP with an Avalon-ST interface (in both Endpoint and Rootport). The interface looks like:

.rx_st_bardec0 ( ) , // output [7:0] rx_st_bardec0_sig

.rx_st_be0 ( ) , // output [7:0] rx_st_be0_sig

.rx_st_data0 ( rx_st_data0_sig ) , // output [63:0] rx_st_data0_sig

.rx_st_eop0 ( rx_st_eop0_sig ) , // output rx_st_eop0_sig

.rx_st_err0 ( ) , // output rx_st_err0_sig

.rx_st_mask0 ( 1'd0 ) , // input rx_st_mask0_sig

.rx_st_ready0 ( rx_st_ready0_sig ) , // input rx_st_ready0_sig

.rx_st_sop0 ( rx_st_sop0_sig ) , // output rx_st_sop0_sig

.rx_st_valid0 ( rx_st_valid0_sig ) , // output rx_st_valid0_sig

.tx_st_data0 ( tx_st_data0_sig ) , // input [63:0] tx_st_data0_sig

.tx_st_eop0 ( tx_st_eop0_sig ) , // input tx_st_eop0_sig

.tx_st_err0 ( 1'd0 ) , // input tx_st_err0_sig

.tx_st_ready0 ( tx_st_ready0_sig ) , // output tx_st_ready0_sig

.tx_st_sop0 ( tx_st_sop0_sig ) , // input tx_st_sop0_sig

.tx_st_valid0 ( tx_st_valid0_sig ) , // input tx_st_valid0_sig

I want to connect this Avalon-ST interface to a QSYS system and translate it into an Avalon-MM Master/Slave interface. How can I do it?

(ATTENTION: I cannot use the IP Compiler for PCI Express in QSYS, because: 1) Rootport is not supported for Arria II GX, and 2) Endpoint is supported only with HARD IP, which I cannot use because the RX/TX lines on my PCB are routed to the GXB_7 pins.)

So, how can I translate the Avalon-ST transactions of the PCIe SOFT IP into a QSYS Avalon-MM Master/Slave interface?

Which QSYS bridges can I use?

Thank you for your reply.

Good to know that the link is now up.

Regarding your further question about conversion between Avalon-ST and Avalon-MM: after searching the Qsys System Design Components, I unfortunately do not see any ready-made component that supports this conversion.

https://www.altera.com/content/dam/altera-www/global/en_US/pdfs/literature/hb/qts/qsys_system_components.pdf

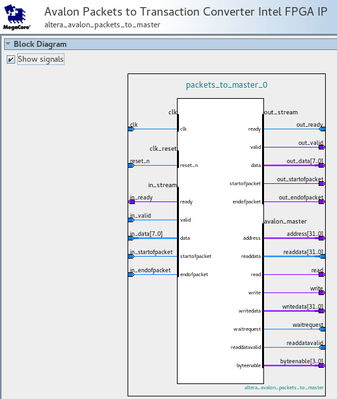

I see this: Avalon Packets to Transactions Converter.

Can it not be used?

Comparing the Avalon-ST signals of the PCIe core with those of the Avalon Packets to Transactions Converter, they are not fully compatible.

Since you have routed the RX/TX lines on the PCB to the GXB_7 pins, you can try an alternative approach using the lane reversal feature of the PCIe core. Configure the endpoint as Hard IP so that you can use the IP Compiler for PCI Express in QSYS with Avalon-MM.

Page 187 of the IP Compiler User Guide has information on lane reversal.

https://www.intel.co.jp/content/dam/altera-www/global/ja_JP/pdfs/literature/ug/ug_pci_express.pdf#page=187

In my final FPGA design for Arria II GX (EP2AGX65) I need to use 2 instances of the PCIe core (because of our dual-star backplane topology), and Arria II GX has only one HARD IP PCIe block.

So it would be better to find one final solution for the SOFT IP PCIe cores, rather than one solution for HARD IP and another for SOFT IP.

If it is possible to have an Avalon-MM interface with HARD IP, why is it not possible with SOFT IP? Does Intel really not have any component that translates PCIe TLPs from an Avalon-ST interface into an Avalon-MM interface? A solution exists for HARD IP, so how is it done there? It has to be similar.

There is no built-in component/bridge that supports PCIe Avalon-ST to Avalon-MM conversion.

Arria II GX is a legacy device, and Avalon-MM interface support in rootport mode and with soft IP is limited.
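For anyone considering a custom bridge instead, its first step would be classifying each incoming TLP from its header. The Python sketch below models only that step and is not a bridge implementation. It decodes the first header dword (2-bit Fmt, 5-bit Type, 10-bit Length, as in PCIe 1.1). The function names are hypothetical, and the placement of header dwords within `rx_st_data0` (DW0 in bits [31:0], DW1 in bits [63:32]) is an assumption to verify against the IP user guide.

```python
# (Fmt, Type) pairs for a few common TLPs; anything else is reported as "other".
TLP_KINDS = {
    (0b00, 0b00000): "MRd32",
    (0b01, 0b00000): "MRd64",
    (0b10, 0b00000): "MWr32",
    (0b11, 0b00000): "MWr64",
    (0b00, 0b01010): "Cpl",
    (0b10, 0b01010): "CplD",
}


def decode_dw0(dw0: int) -> dict:
    """Decode Fmt, Type and Length from the first TLP header dword."""
    fmt = (dw0 >> 29) & 0x3
    tlp_type = (dw0 >> 24) & 0x1F
    length = dw0 & 0x3FF
    return {
        "kind": TLP_KINDS.get((fmt, tlp_type), "other"),
        "has_data": bool(fmt & 0b10),
        "header_dwords": 4 if fmt & 0b01 else 3,
        # A Length field of 0 encodes 1024 dwords.
        "length_dw": 1024 if length == 0 else length,
    }


def split_first_beat(rx_st_data: int):
    """Split a 64-bit beat into two dwords.

    ASSUMPTION: header DW0 is in bits [31:0] and DW1 in bits [63:32].
    """
    return rx_st_data & 0xFFFFFFFF, (rx_st_data >> 32) & 0xFFFFFFFF


# Hypothetical first beat of a one-dword 32-bit memory write.
dw0, dw1 = split_first_beat(0x000000FF40000001)
print(decode_dw0(dw0))
```

A memory write would then map to an Avalon-MM write and a memory read to an Avalon-MM read followed by a completion TLP, which is where most of the design effort would go.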
