Hi,

Recently a colleague of mine presented me with a surprising find, one which I do not fully understand. Maybe someone can shed some light on this.

The background behind the following example is that I try to code in a certain style which I consider "modern and safe". One of the "best practices" there is to always define variables locally.

Another one, that might look quirky, is to make a temporary variable a const if it is not supposed to change any more. The same goes for parameters to functions.

Then the colleague comes along and tells me that my code runs slower than the code that does not declare temporaries const and also defines them in the "old C style" at the beginning of the function.

First of all, I can see no reason why it should.

Ok. Now the meaty bit. First mine:

#include <cmath>

using namespace std;

void f (float * const __restrict__ t,

float const * const __restrict__ x,

float const * const __restrict__ v,

int const n)

{

#pragma simd

for (int i = 0; i < n; ++i) {

float const t02 = float(i);

float const x2 = x[i] * x[i];

float const v2 = v[i] * v[i];

t[i] = sqrt(t02 + x2 / v2);

}

}

And then his version:

#include <cmath>

using namespace std;

void f (float * __restrict__ t,

float * __restrict__ x,

float * __restrict__ v,

int n)

{

int i;

float t02, x2, v2;

#pragma simd

for (i = 0; i < n; ++i) {

t02 = float(i);

x2 = x[i] * x[i];

v2 = v[i] * v[i];

t[i] = sqrt(t02 + x2 / v2);

}

}

Now, I have not benchmarked it yet, but I ran both through the icpc compiler version 14.0.3 on x86-64 Linux and I got surprising differences.

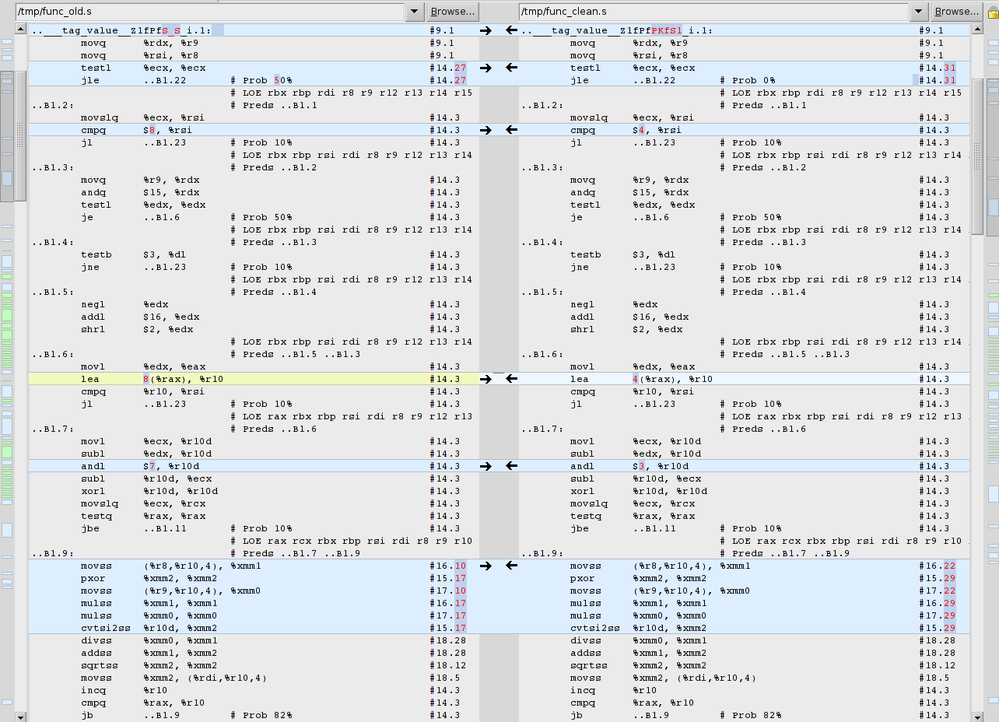

Can anyone explain the differences in the cmpq and lea?

Surely the compiler should create the same machine code from both C++ codes? Btw: g++ 4.7.2 does.

Thank you!

Best regards

Andreas
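For readers who want to reproduce the comparison, here is a minimal sketch of the two variants with the array subscripts written out, plus a small helper that checks both produce bit-identical results. The names `f_loop_scope`, `f_function_scope` and `same_results` are made up for this illustration, the test data is arbitrary, and `#pragma simd` is left out so that the code also compiles with compilers that do not know it. It assumes a compiler that accepts `__restrict__` (g++, clang++, icpc).

```cpp
#include <cassert>
#include <cmath>
#include <cstring>
#include <vector>

// Variant 1: const temporaries declared inside the loop body.
void f_loop_scope(float* const __restrict__ t,
                  float const* const __restrict__ x,
                  float const* const __restrict__ v,
                  int const n)
{
    for (int i = 0; i < n; ++i) {
        float const t02 = float(i);
        float const x2 = x[i] * x[i];
        float const v2 = v[i] * v[i];
        t[i] = std::sqrt(t02 + x2 / v2);
    }
}

// Variant 2: non-const temporaries declared at function scope ("old C style").
void f_function_scope(float* __restrict__ t,
                      float* __restrict__ x,
                      float* __restrict__ v,
                      int n)
{
    int i;
    float t02, x2, v2;
    for (i = 0; i < n; ++i) {
        t02 = float(i);
        x2 = x[i] * x[i];
        v2 = v[i] * v[i];
        t[i] = std::sqrt(t02 + x2 / v2);
    }
}

// Hypothetical helper: run both variants on the same input and
// compare the outputs byte for byte.
bool same_results(int n)
{
    assert(n >= 0);
    std::vector<float> x(n), v(n), t1(n), t2(n);
    for (int i = 0; i < n; ++i) {
        x[i] = 0.5f * float(i) + 1.0f;   // arbitrary test data
        v[i] = 0.25f * float(i) + 2.0f;  // strictly positive, so no division by zero
    }
    f_loop_scope(t1.data(), x.data(), v.data(), n);
    f_function_scope(t2.data(), x.data(), v.data(), n);
    return n == 0 ||
           std::memcmp(t1.data(), t2.data(), n * sizeof(float)) == 0;
}
```

Calling `same_results(1500)` only checks that the two variants agree numerically; it says nothing about whether the generated machine code is the same, which is the actual question of this thread. For that, the assembler output (for example from `-S`) still has to be compared.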


I'm not understanding what your "style" wishes to accomplish. According to most references I find, const asserts that you will not modify the value, yet you want to modify without spending any time preparing to warn you.

gcc and icc may not interpret const identically, but I don't see that as the issue you are raising. Maybe you could argue that the compiler has a bug if it doesn't complain.


Tim,

I do not think it is about the consts. I will try to remove them and see if this changes something, but I would be surprised.

The rationale behind the excessive use of consts is that temporaries will not be abused for something else. But as I mentioned in my original post, this is more a quirk than something interesting.

The issue seems to be related to the scope of declaration for the temporaries.

But for both the const and the scope, I can not see a difference with regards to the operations the machine has to perform. I therefore do not expect any differences in the assembler code. But there they are with the intel compiler. Any idea why?

Best regards

Andreas


Of more concern than the difference between the 4-byte and 8-byte alignment tests is the fact that (in both versions) the loop is scalar, not vector. The statement inhibiting vectorization is t02 = float(i).

If the sample routine is an actual function you use (as opposed to dummied up example), then I suggest you rewrite the code to permit vectorization.

There is clean, and there is fast:

#include "stdafx.h"

#include <cmath>

using namespace std;

#define __restrict__ restrict

void f (float * __restrict__ t,

float * __restrict__ x,

float * __restrict__ v,

int n)

{

float x2, v2;

const int iStrip = 32;

const float fStrip = float(iStrip);

_declspec(align(64)) float t02[32];

_declspec(align(64)) static float t02init[32] =

{0.0f, 1.0f, 2.0f, 3.0f, 4.0f, 5.0f, 6.0f, 7.0f,

8.0f, 9.0f, 10.0f, 11.0f, 12.0f, 13.0f, 14.0f, 15.0f,

16.0f, 17.0f, 18.0f, 19.0f, 20.0f, 21.0f, 22.0f, 23.0f,

24.0f, 25.0f, 26.0f, 27.0f, 28.0f, 29.0f, 30.0f, 31.0f };

// init t02 (even though we may skip the strip section)

#pragma simd

for (int j=0; j < iStrip; ++j)

t02[j] = t02init[j];

int i=0;

if(n >= iStrip) {

// process iStrip elements at a time

for(; n >= iStrip; n -= iStrip) {

__assume_aligned(x, 64);

__assume(i%iStrip == 0);

#pragma simd

for (int j=0; j < iStrip; ++j) {

x2 = x[i+j] * x[i+j];

v2 = v[i+j] * v[i+j];

t[i+j] = sqrt(t02[j] + x2 / v2);

t02[j] += fStrip;

}

i += iStrip;

}

} // if(n >= iStrip)

#pragma simd

for (int j=0; j < n; ++j) {

x2 = x[i+j] * x[i+j];

v2 = v[i+j] * v[i+j];

t[i+j] = sqrt(t02[j] + x2 / v2);

}

}

Jim Dempsey


Here is the above example using CEAN (C Extensions for Array Notation):

#include "stdafx.h"

#include <cmath>

using namespace std;

#define __restrict__ restrict

void f (float * __restrict__ t,

float * __restrict__ x,

float * __restrict__ v,

int n)

{

float x2, v2;

const int iStrip = 32;

const float fStrip = float(iStrip);

_declspec(align(64)) float t02[32];

_declspec(align(64)) static float t02init[32] =

{0.0f, 1.0f, 2.0f, 3.0f, 4.0f, 5.0f, 6.0f, 7.0f,

8.0f, 9.0f, 10.0f, 11.0f, 12.0f, 13.0f, 14.0f, 15.0f,

16.0f, 17.0f, 18.0f, 19.0f, 20.0f, 21.0f, 22.0f, 23.0f,

24.0f, 25.0f, 26.0f, 27.0f, 28.0f, 29.0f, 30.0f, 31.0f };

// init t02 (even though we may skip the strip section)

t02[0:iStrip] = t02init[0:iStrip];

int i=0;

if(n >= iStrip) {

// process iStrip elements at a time

for(; n >= iStrip; n -= iStrip) {

__assume_aligned(x, 64);

__assume(i%iStrip == 0);

t[i:iStrip] = sqrt(t02[0:iStrip] + x[i:iStrip] * x[i:iStrip] / (v[i:iStrip] * v[i:iStrip]));

t02[0:iStrip] += fStrip;

i += iStrip;

}

} // if(n >= iStrip)

#pragma simd

for (int j=0; j < n; ++j) {

x2 = x[i+j] * x[i+j];

v2 = v[i+j] * v[i+j];

t[i+j] = sqrt(t02[j] + x2 / v2);

}

}

Jim Dempsey


When n is large enough (you should test), the speed-up should be quite good for the last two suggestions. The non-CEAN (ugly) version may be faster because CEAN inserts alignment tests and peel code, plus remainder code; the ugly route has less of that. For large n the main loop is:

vmovups ymm2, YMMWORD PTR [rsi+rbp*4] ;11.6

vmovups xmm0, XMMWORD PTR [r10+rbp*4] ;11.6

vmovups xmm5, XMMWORD PTR [r13+rbp*4] ;43.5

vmulps ymm4, ymm2, ymm2 ;42.5

vinsertf128 ymm1, ymm0, XMMWORD PTR [16+r10+rbp*4], 1 ;11.6

vmulps ymm3, ymm1, ymm1 ;41.5

vdivps ymm1, ymm3, ymm4 ;43.5

vmovups xmm4, XMMWORD PTR [32+r10+rbp*4] ;11.6

vinsertf128 ymm0, ymm5, XMMWORD PTR [16+r13+rbp*4], 1 ;43.5

vaddps ymm2, ymm0, ymm1 ;43.5

vmovups xmm5, XMMWORD PTR [32+r13+rbp*4] ;43.5

vmovups ymm1, YMMWORD PTR [32+rsi+rbp*4] ;11.6

vinsertf128 ymm0, ymm4, XMMWORD PTR [48+r10+rbp*4], 1 ;11.6

vsqrtps ymm3, ymm2 ;43.5

vmovups XMMWORD PTR [r15+rbp*4], xmm3 ;11.6

vmulps ymm2, ymm0, ymm0 ;41.5

vextractf128 XMMWORD PTR [16+r15+rbp*4], ymm3, 1 ;11.6

vmulps ymm3, ymm1, ymm1 ;42.5

vdivps ymm1, ymm2, ymm3 ;43.5

vinsertf128 ymm0, ymm5, XMMWORD PTR [48+r13+rbp*4], 1 ;43.5

vaddps ymm2, ymm0, ymm1 ;43.5

vsqrtps ymm3, ymm2 ;43.5

vmovups XMMWORD PTR [32+r15+rbp*4], xmm3 ;11.6

vextractf128 XMMWORD PTR [48+r15+rbp*4], ymm3, 1 ;11.6

add rbp, 16 ;40.3

cmp rbp, r9 ;40.3

jb .B1.20 ; Prob 82% ;40.3

Each iteration is processing 16 floats

Jim Dempsey


In principle, defining the scalar variables outside the for() and putting them in a simd private list ought to accomplish the same thing as defining them with scope inside the for(). I agree I have seen situations where the Intel compilers choke up with too many designated private variables, but that would seem excusable only with a larger number of them.

Is there a confusion between (float)i and float(i) ? The former (or simple assignment with implicit cast) shouldn't inhibit vectorization, so I wouldn't expect making a temporary array to be as effective. I like to follow the old adage about programming Fortran in any language, but that means assuring that the C is actually equivalent.
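As a side note on the cast question: for built-in arithmetic types, the function-style cast `float(i)`, the C-style cast `(float)i`, `static_cast<float>(i)` and a plain implicit conversion all request the same int-to-float conversion. The sketch below just spells the four forms out side by side; the function names are made up for this illustration.

```cpp
#include <cassert>

// Four spellings of the same int -> float conversion.
float functional_cast(int i)  { return float(i); }
float c_style_cast(int i)     { return (float)i; }
float static_cast_form(int i) { return static_cast<float>(i); }
float implicit_form(int i)    { float f = i; return f; }

// Hypothetical helper: check that all four forms agree for 0 .. n-1.
bool all_casts_agree(int n)
{
    assert(n >= 0);
    for (int i = 0; i < n; ++i) {
        const float ref = functional_cast(i);
        if (c_style_cast(i) != ref ||
            static_cast_form(i) != ref ||
            implicit_form(i) != ref) {
            return false;
        }
    }
    return true;
}
```

Since the language treats these forms identically here, any difference in vectorization between them would have to come from the compiler rather than from the source semantics.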


Jim, Tim,

Thank you very much for your help and suggestions.

Jim:

The code is a dumbed down example to show the issue with the scope of variables.

The loop will be executed usually between 1000 and 2000 times, but could be more or less.

Jim, Tim:

I have not worked with x86 assembler code too much, so I relied on the message from the Intel compiler on the assumption that the loop is vectorised.

andreas@computer:/tmp$ icpc -vec-report3 -Ofast -xSSE4.2 -S -o func.s func.cc

func.cc(14): (col. 3) remark: LOOP WAS VECTORIZED

But if I look up the documentation about the SSE instructions, MOVSS, MULSS, ADDSS, DIVSS and SQRTSS are scalar only.

So why is the compiler (version 14) telling me the wrong thing here?

And is there no packed/streamed/vector equivalent to the CVTSI2SS conversion instruction? Do you really need a vector for that as well?

Even more mysterious is the fact that when I remove the #pragma simd, the compiled assembly is identical between the two???

This gets more mysterious by the hour :-)

Best regards

Andreas


All,

Sorry about part of the last post: the compiler does vectorise (irrespective of float(i), (float)i or static_cast< float >(i)).

..B1.13: # Preds ..B1.11 ..B1.13

movups (%r9,%rax,4), %xmm2 #17.22

mulps %xmm2, %xmm2 #17.29

cvtdq2ps %xmm0, %xmm8 #15.30

movups (%r8,%rax,4), %xmm4 #16.22

rcpps %xmm2, %xmm3 #18.28

mulps %xmm4, %xmm4 #16.29

mulps %xmm3, %xmm2 #18.28

mulps %xmm3, %xmm2 #18.28

addps %xmm3, %xmm3 #18.28

movups .L_2il0floatpacket.11(%rip), %xmm5 #18.12

paddd %xmm1, %xmm0 #18.5

movups .L_2il0floatpacket.10(%rip), %xmm7 #18.12

subps %xmm2, %xmm3 #18.28

mulps %xmm3, %xmm4 #18.28

addps %xmm4, %xmm8 #18.28

rsqrtps %xmm8, %xmm6 #18.12

andps %xmm8, %xmm5 #18.12

cmpleps %xmm5, %xmm7 #18.12

andps %xmm6, %xmm7 #18.12

mulps %xmm7, %xmm8 #18.12

mulps %xmm8, %xmm7 #18.12

subps .L_2il0floatpacket.8(%rip), %xmm7 #18.12

mulps %xmm7, %xmm8 #18.12

mulps .L_2il0floatpacket.9(%rip), %xmm8 #18.12

movups %xmm8, (%rdi,%rax,4) #18.5

addq $4, %rax #14.3

cmpq %rcx, %rax #14.3

jb ..B1.13 # Prob 82% #14.3

jmp ..B1.18 # Prob 100% #14.3


>>And is there no packed/streamed/vector equivalent to the CVTSI2SS conversion instruction? Do you really need a vector for that as well?

Try this alternative:

float i_f = 0.0f; // same value as first i below.

for(int i=0; i < n; ++i) {

...

i_f += 1.0f;

}

The code optimizer might be able to handle that.

Jim Dempsey
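A minimal sketch of that alternative, next to the original int-to-float conversion, might look as follows. The function names are made up for this illustration. One assumption worth stating: a float represents consecutive integers exactly only up to 2^24 = 16777216, so the running counter matches `float(i)` for the trip counts mentioned in this thread (a few thousand), but not for arbitrarily large n.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Original form: convert the loop index in every iteration.
void f_convert(float* t, const float* x, const float* v, int n)
{
    for (int i = 0; i < n; ++i) {
        const float t02 = float(i);
        t[i] = std::sqrt(t02 + (x[i] * x[i]) / (v[i] * v[i]));
    }
}

// Alternative: carry a float counter alongside the int index.
void f_float_counter(float* t, const float* x, const float* v, int n)
{
    float i_f = 0.0f;  // same value as the first i below
    for (int i = 0; i < n; ++i) {
        t[i] = std::sqrt(i_f + (x[i] * x[i]) / (v[i] * v[i]));
        i_f += 1.0f;
    }
}

// Shows where the counter stops being exact: returns c + 1 rounded to float.
float next_counter(float c) { return c + 1.0f; }

// Hypothetical helper: do both forms give identical results for this n?
bool counter_matches(int n)
{
    assert(n >= 0);
    std::vector<float> x(n, 3.0f), v(n, 2.0f), a(n), b(n);
    f_convert(a.data(), x.data(), v.data(), n);
    f_float_counter(b.data(), x.data(), v.data(), n);
    for (int i = 0; i < n; ++i) {
        if (a[i] != b[i]) return false;
    }
    return true;
}
```

Whether the float counter actually helps depends on the compiler: it replaces a conversion with a loop-carried floating-point addition, which the vectorizer has to recognise as an induction variable.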


Jim,

Thank you very much for your tips.

Unfortunately my followup post is in "review" at the moment. Maybe this will get posted.

I do not think that it is not vectorising. The compiler says it does and in the assembler code, I can see the following that looks like a vectorised loop to me:

..B1.16: # Preds ..B1.11 ..B1.16

movaps (%r9,%rax,4), %xmm2 #17.22

mulps %xmm2, %xmm2 #17.29

cvtdq2ps %xmm0, %xmm8 #15.29

rcpps %xmm2, %xmm3 #18.28

mulps %xmm3, %xmm2 #18.28

paddd %xmm1, %xmm0 #18.5

mulps %xmm3, %xmm2 #18.28

addps %xmm3, %xmm3 #18.28

movaps (%r8,%rax,4), %xmm4 #16.22

subps %xmm2, %xmm3 #18.28

mulps %xmm4, %xmm4 #16.29

mulps %xmm3, %xmm4 #18.28

movaps .L_2il0floatpacket.11(%rip), %xmm5 #18.12

addps %xmm4, %xmm8 #18.28

rsqrtps %xmm8, %xmm6 #18.12

movaps .L_2il0floatpacket.10(%rip), %xmm7 #18.12

andps %xmm8, %xmm5 #18.12

cmpleps %xmm5, %xmm7 #18.12

andps %xmm6, %xmm7 #18.12

mulps %xmm7, %xmm8 #18.12

mulps %xmm8, %xmm7 #18.12

subps .L_2il0floatpacket.8(%rip), %xmm7 #18.12

mulps %xmm7, %xmm8 #18.12

mulps .L_2il0floatpacket.9(%rip), %xmm8 #18.12

movups %xmm8, (%rdi,%rax,4) #18.5

addq $4, %rax #14.3

cmpq %rcx, %rax #14.3

jb ..B1.16 # Prob 82% #14.3

I am just after some guidance with regards to the "scope of temporaries". In my mind it should not make a difference performance wise, if they are declared local or "more global".

Best regards

Andreas


When you declare variables outside the scope of the loop .AND. those variables are used after the loop, the code generator cannot always assume that the last values used within the loop are not needed outside the loop. This is one reason why you should use

for(int i=0; ...

and not

int i;

...

for(i=0;...

When the function ends just after the loop, it becomes a moot point whether the end value is propagated after the loop.

In your sample code, placement won't make a difference, as your function ends immediately after the loop. *** But you are getting into a programming style habit that may make a detrimental difference in other places.

The above code is vectorized.

However, it appears to be doing much more work than required. Possibly trying to improve precision due to using the less precise reciprocal sqrt function (rsqrtps).

What are your options regarding floating point?

Jim Dempsey
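To make the point about values surviving the loop concrete, here is a small sketch. The function names are made up for this illustration. In the first function the temporary cannot be observed after the loop; in the second, both the temporary and the loop index are read after the loop, so their final values have to be preserved (what OpenMP would call a lastprivate-style dependency).

```cpp
#include <cassert>
#include <cmath>

// Temporaries are loop-local: nothing can depend on them after the loop.
void fill_loop_scope(float* t, const float* x, int n)
{
    for (int i = 0; i < n; ++i) {
        const float x2 = x[i] * x[i];
        t[i] = std::sqrt(x2);
    }
}

// x2 and i are declared at function scope and read after the loop,
// so the values from the last iteration must be kept alive.
float fill_and_return_last(float* t, const float* x, int n)
{
    assert(n > 0);
    float x2 = 0.0f;
    int i;
    for (i = 0; i < n; ++i) {
        x2 = x[i] * x[i];
        t[i] = std::sqrt(x2);
    }
    // Uses the last x2 and the final index value (i == n here).
    return x2 + float(i);
}
```

In the code from the original post nothing is read after the loop, so a compiler can prove the temporaries dead in both variants; it just has to do that analysis itself for the function-scope version.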


Jim,

The thing I still do not understand is that if I use the declaration of the variables in the scope of the function (as opposed to the loop scope), the vectorised assembler code looks even more complicated:

..B1.16: # Preds ..B1.11 ..B1.16

movaps (%r9,%rax,4), %xmm2 #17.10

movaps 16(%r9,%rax,4), %xmm9 #17.10

mulps %xmm2, %xmm2 #17.17

cvtdq2ps %xmm0, %xmm8 #15.18

mulps %xmm9, %xmm9 #17.17

rcpps %xmm2, %xmm3 #18.28

rcpps %xmm9, %xmm10 #18.28

mulps %xmm3, %xmm2 #18.28

mulps %xmm10, %xmm9 #18.28

mulps %xmm3, %xmm2 #18.28

addps %xmm3, %xmm3 #18.28

mulps %xmm10, %xmm9 #18.28

addps %xmm10, %xmm10 #18.28

subps %xmm2, %xmm3 #18.28

subps %xmm9, %xmm10 #18.28

movaps (%r8,%rax,4), %xmm4 #16.10

paddd %xmm1, %xmm0 #18.5

movaps 16(%r8,%rax,4), %xmm11 #16.10

mulps %xmm4, %xmm4 #16.17

cvtdq2ps %xmm0, %xmm15 #15.18

mulps %xmm11, %xmm11 #16.17

mulps %xmm3, %xmm4 #18.28

mulps %xmm10, %xmm11 #18.28

addps %xmm4, %xmm8 #18.28

addps %xmm11, %xmm15 #18.28

rsqrtps %xmm8, %xmm6 #18.12

rsqrtps %xmm15, %xmm13 #18.12

movaps .L_2il0floatpacket.11(%rip), %xmm5 #18.12

paddd %xmm1, %xmm0 #18.5

movaps .L_2il0floatpacket.11(%rip), %xmm12 #18.12

andps %xmm8, %xmm5 #18.12

movaps .L_2il0floatpacket.10(%rip), %xmm7 #18.12

andps %xmm15, %xmm12 #18.12

movaps .L_2il0floatpacket.10(%rip), %xmm14 #18.12

cmpleps %xmm5, %xmm7 #18.12

cmpleps %xmm12, %xmm14 #18.12

andps %xmm6, %xmm7 #18.12

andps %xmm13, %xmm14 #18.12

mulps %xmm7, %xmm8 #18.12

mulps %xmm14, %xmm15 #18.12

mulps %xmm8, %xmm7 #18.12

mulps %xmm15, %xmm14 #18.12

subps .L_2il0floatpacket.8(%rip), %xmm7 #18.12

subps .L_2il0floatpacket.8(%rip), %xmm14 #18.12

mulps %xmm7, %xmm8 #18.12

mulps %xmm14, %xmm15 #18.12

mulps .L_2il0floatpacket.9(%rip), %xmm8 #18.12

mulps .L_2il0floatpacket.9(%rip), %xmm15 #18.12

movups %xmm8, (%rdi,%rax,4) #18.5

movups %xmm15, 16(%rdi,%rax,4) #18.5

addq $8, %rax #14.3

cmpq %rcx, %rax #14.3

jb ..B1.16 # Prob 82% #14.3

I suppose the Intel compiler gurus will have to figure out why this is happening?

Thank you very much for the hint about the reciprocal sqrt. I will look into whether I can make use of that. I thought about using some kind of Taylor expansion...

I think that accuracy for the use case at hand is quite important, but there might be some other use-case where accuracy is not so big an issue.

Thank you very much again for all your very helpful suggestions.

Best regards

Andreas


>>>I do not think that it is not vectorising. The compiler says it does and in the assembler code, I can see the following that looks like a vectorised loop to me>>>

Coming late to this interesting discussion:)

Yes, it seems that this time the code has been vectorised. You can see the packed (xxxPS) instructions.


The second (more complicated) loop is unrolled twice; note rsqrtps being issued twice.

What I assume is also not obvious to you is that the major performance improvement from the unroll does not come from the reduction (halving) of the manipulation of the loop control variable (and instruction cache recycling), but rather from the ability to interleave the two unrolled iterations to remove (to some extent) the dependencies (a result register being used after the operation is issued but before the result is available). Interleaving can, in some cases, nearly double throughput.

In a real example, if precision is of concern, you might want to use a compiler option that does not use rsqrtps with relative error of 1.5*2^-12 (12.5 bits of precision). You may want (need) the full precision (23 bits) of square root using sqrtps.

Jim Dempsey


The code shown uses iteration to improve precision to about 23 bits for both division and sqrt. There is an option (not invoked) to suppress the iteration and stop with half precision. The options -prec-div -prec-sqrt would suppress those approximation methods ("throughput optimization") and require IEEE754 24-bit accurate instructions. The asm code would be more concise and legible (and may not benefit much from unrolling).

Looking back through the thread, I'm having difficulty understanding the fuss about the minor difference between local definition of those scalars, and definition outside the scope of the for(). The latter requires the compiler to look out for any firstprivate or lastprivate style dependencies, and it's hardly surprising (to me) if the register allocations come out with more differences than were demonstrated, even if the compiler sees in the end there are no such dependencies.


Tim

Would you happen to know whether the default for /O3 uses the full precision or the faster reduced precision for sqrt?

My preference lies with "Do no harm" and for the default for /O3 to use full precision. I'd rather choose where it is acceptable to sacrifice precision for speed as opposed to experiencing modeling failures. IOW require me to explicitly add the option to use the faster sqrt, and/or specifically use #pragma option ... at the line of code.

Jim Dempsey


Default is to use the iterative method, which comes within 1 bit of full IEEE precision (except that it may fail entirely near the limits for over/underflow). The iterative method is invoked only during vectorization. The imf-precision options allow you to change the treatment, by individual math functions if you choose. Personally, I'm derogatory about the recommendation sometimes made to cut back math functions to single precision in double precision code. There is a small time saving sometimes in setting 44-bit precision, which might sometimes be acceptable.

On most recent host CPUs (Sandy Bridge possibly excepted), I prefer to set -prec-div -prec-sqrt, as the IEEE divide and sqrt implementation is excellent.

Current MIC has no IEEE divide or sqrt in the simd instruction set, so the svml functions for IEEE accurate are significantly slower than the default ones.

If you wish to change locally from the style of divide or sqrt set by your compile options, I think the only tool is use of intrinsics.
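As an illustration of what such intrinsics might look like, here is a sketch using the SSE intrinsics from `<xmmintrin.h>`: one function forms sqrt(a) from the raw `rsqrtps` approximation, and one adds a single Newton-Raphson step, in the same spirit as the rsqrtps/mulps/subps sequence in the assembler listings above. The function names and the test values are made up for this illustration, the code assumes an x86 target with SSE, and unlike the compiler-generated code it does not handle a = 0 (where rsqrtps returns infinity and the product becomes NaN). The IEEE-accurate alternative is simply `_mm_sqrt_ps`.

```cpp
#include <cassert>
#include <cmath>
#include <xmmintrin.h>

// sqrt(a) ~ a * rsqrt(a), using only the hardware approximation.
static inline __m128 sqrt_rsqrt_raw(__m128 a)
{
    return _mm_mul_ps(a, _mm_rsqrt_ps(a));
}

// One Newton-Raphson step: sqrt(a) ~ 0.5 * (a*y) * (3 - (a*y)*y), y = rsqrt(a).
static inline __m128 sqrt_rsqrt_refined(__m128 a)
{
    const __m128 y     = _mm_rsqrt_ps(a);
    const __m128 ay    = _mm_mul_ps(a, y);
    const __m128 ayy   = _mm_mul_ps(ay, y);
    const __m128 three = _mm_set1_ps(3.0f);
    const __m128 half  = _mm_set1_ps(0.5f);
    return _mm_mul_ps(_mm_mul_ps(half, ay), _mm_sub_ps(three, ayy));
}

// Hypothetical helper: largest relative error against _mm_sqrt_ps
// over 4 * count positive sample values.
float max_rel_error(bool refined, int count)
{
    assert(count > 0);
    float worst = 0.0f;
    for (int k = 0; k < count; ++k) {
        const float base = 1.0f + 0.37f * float(4 * k);
        const __m128 a = _mm_set_ps(base + 0.3f, base + 0.2f, base + 0.1f, base);
        const __m128 approx = refined ? sqrt_rsqrt_refined(a) : sqrt_rsqrt_raw(a);
        const __m128 exact = _mm_sqrt_ps(a);  // IEEE-accurate packed sqrt
        float ap[4], ex[4];
        _mm_storeu_ps(ap, approx);
        _mm_storeu_ps(ex, exact);
        for (int lane = 0; lane < 4; ++lane) {
            const float err = std::fabs(ap[lane] - ex[lane]) / ex[lane];
            if (err > worst) worst = err;
        }
    }
    return worst;
}
```

Comparing `max_rel_error(false, 1000)` with `max_rel_error(true, 1000)` gives a feel for how much the refinement step buys on a given CPU; the exact numbers depend on the hardware's rsqrtps implementation.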


Tim,

Thank you for your comments.

I agree that this thread was set up and has become confusing.

The original problem, I think still stands and is not about optimising this loop (at least that was not my intent).

The concern/question I have is that if I declare the variables loop-local and use the #pragma simd, the compiler does not seem to unroll the loop. I believe this can already be seen in the screenshot I posted ("global scope" declaration on the left, "local scope" declaration on the right). But please keep in mind (and I am sorry that this is confusing) that all those assembler code snippets I posted are only parts of the entire assembler output (the ones I considered "interesting").

If the variables are declared in the global scope of the function (and that is the only difference), the compiler seems to unroll the loop and with that, if I understand you correctly, improves the runtime potentially significantly.

So, I suppose the original question could be rephrased as:

"Why does the compiler unroll the loop if the temporary variables are declared in the global function scope, but not if they are defined loop local?"

Best regards

Andreas


That is a good question. What happens when you insert an explicit #pragma unroll...?

Jim Dempsey


Jim,

Ok:

1) loop local declarations and #pragma simd -> no unrolling

2) loop local declarations and no #pragma simd -> unrolling, identical assembler code as 4

3) loop local declarations and #pragma unroll=2 followed by #pragma simd -> unrolling, slightly different assembler code sequence

4) function global declarations and #pragma simd -> unrolling and identical assembler code as in 2

Strange!

I do not like it when compilers do this and I cannot explain the reasons why.

Best regards

Andreas
