I would welcome suggestions as to the source of an error within the impi code. I cannot determine the cause myself, as I do not have access to the source.

I get an "integer divide by zero" crash using impi, with the following error message (first part of the trace):

0 0x0000000000b696ed next_random() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/shm/posix/eager/include/intel_transport_types.h:1809

1 0x0000000000b696ed impi_bcast_intra_huge() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/shm/posix/eager/include/intel_transport_bcast.h:667

2 0x0000000000b6630d impi_bcast_intra_heap() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/shm/posix/eager/include/intel_transport_bcast.h:798

3 0x000000000018ef6d MPIDI_POSIX_mpi_bcast() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/shm/src/../src/../posix/intel/posix_coll.h:124

4 0x000000000017335e MPIDI_SHM_mpi_bcast() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/shm/src/../src/shm_coll.h:39

5 0x000000000017335e MPIDI_Bcast_intra_composition_alpha() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/src/intel/ch4_coll_impl.h:303

6 0x000000000017335e MPID_Bcast_invoke() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/src/intel/ch4_coll_select_utils.c:1726

7 0x000000000017335e MPIDI_coll_invoke() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/src/intel/ch4_coll_select_utils.c:3356

8 0x0000000000153bee MPIDI_coll_select() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/src/intel/ch4_coll_globals_default.c:129

9 0x000000000021c02d MPID_Bcast() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpid/ch4/src/intel/ch4_coll.h:51

10 0x00000000001386e9 PMPI_Bcast() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/mpi/coll/bcast/bcast.c:416

11 0x00000000000e8924 pmpi_bcast_() /localdisk/jenkins/workspace/workspace/ch4-build-linux-2019/impi-ch4-build-linux_build/CONF/impi-ch4-build-linux-release/label/impi-ch4-build-linux-intel64/_buildspace/release/../../src/binding/fortran/mpif_h/bcastf.c:270

Inlined with >

We are able to build the application with different oneAPI versions starting from 2021.1.1 (even though this version is not supported, we were hoping to reproduce the "divide by zero" error; please see the next paragraph). We get only hangs with every oneAPI version. The hangs seem to occur around " allocate legendre 175.5 MB ..."

> The I/O is buffered, so that is not the actual location, it is the last released write.

For completeness -- we are running on one node with InfiniBand in interactive mode allocated by Slurm. Simple MPI jobs run fine on these node(s) -- I'm always checking before running your app.

I consulted with our IMPI developers regarding intel_transport_types.h:1809 (IMPI 2021.1). Their response: "function (at this location) cannot generate division by zero. The function is simple enough." Another engineer and I also looked at the code, and so far we cannot come up with an explanation of how a divide by zero was generated there (nor can we reproduce this error yet with 2021.1).

> I expect that the code was overwritten, and/or it was a line or so before or after; I do not know what compilation options were used in impi so I can only speculate.

> We might try a debug version of IMPI library for more clues. We also tried to compile with "-g -backtrace" and run under gdb with one rank. But it looks like it may take us some time to find why the application hangs.

> That will take forever. I think you mean -traceback, which I already did in my code. Valgrind might be better.

Meanwhile, may I ask these questions:

- Would it be possible to get a smaller workload that would run in just a few minutes?

> No, because that will not have the issue!

The workload you gave is supposed to run for ~70 min. I'm still not sure if we are using your scripts correctly -- and a smaller workload would help to catch that. BTW, have you been able to run with smaller workloads?

> I am routinely running with 120 cores, also smaller versions (which have no issues) as are my postdocs. Around the world there are probably 100 people running smaller workloads of the same program at any given time.

You can run the 63 core case, that will complete -- I thought this was done before.

- Is there a last version of IMPI that you were able to run with? From any older Parallel Studio packages maybe?

> I did not have access to a 64 core machine before, so I never tested.

- Would it be possible for us to compile and run (your app) with different MPI? We have OpenMPI, MVAPICH, MPICH installation on our cluster.

> The code works, except for this case. Since your developers are telling you that the Intel ifort output is wrong, it does not look like this is a good approach. I can think of several things I can do when I have time. Perhaps it is best if I solve this, as I have some coding and debugging experience -- I just don't have the source code.

Hi,

Thanks for posting in the Intel forums.

Could you please provide us with the command line and steps to reproduce the issue on our end?

Could you also provide us with the OS details and MPI version?

Thanks & Regards

Shivani

The crash occurred within a program of approximately 50,000 lines of Fortran (Density Functional Theory code Wien2k, www.Wien2k.at) when I was doing a 64 core calculation using Linux on a Gold 6338 which takes ~30 minutes. If you (Intel) are willing to assign someone with sufficient core access to investigate, they should contact me outside this list so we can work out how to proceed.

Or you can provide me with information about the code that crashed.

Or both.

What is not possible is some simple command to reproduce this.

Hi,

Could you also provide us with the OS details and MPI version?

Thanks & Regards

Shivani

The Intel version is 2021.1.1. Later versions have worse problems, hanging with no information.

$ uname -a

Linux qnode1058 3.10.0-1160.71.1.el7.x86_64 #1 SMP Wed Jun 15 08:55:08 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux

[lma712@qnode1058 ~]$ head -20 /proc/cpuinfo

processor : 0

vendor_id : GenuineIntel

cpu family : 6

model : 106

model name : Intel(R) Xeon(R) Gold 6338 CPU @ 2.00GHz

stepping : 6

microcode : 0xd000363

cpu MHz : 2000.000

cache size : 49152 KB

physical id : 0

siblings : 32

core id : 0

cpu cores : 32

apicid : 0

initial apicid : 0

fpu : yes

fpu_exception : yes

cpuid level : 27

wp : yes

Hi,

Thanks for providing the reproducer and the steps to reproduce it.

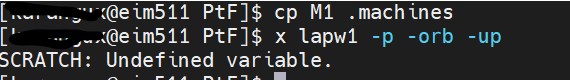

I followed Step 1 as described in the README file; the results are below.

While trying to follow Step 2, I got the results below.

Could you please help us with how to proceed further?

Thanks & Regards

Shivani

Apologies, my errors when extracting part of a large code into a small reproducer.

For the first approach, run "export SCRATCH=./" before running it.

For the second, edit the Makefile so it has

"ELPAROOT = ../elpa22/"

I forgot the "/" at the end; it is needed.
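
The two fixes above can be sketched as shell commands. This is only a minimal sketch: it assumes the Makefile already has a line starting with `ELPAROOT`, and the throwaway `Makefile` created here is a hypothetical stand-in, not the one shipped with the reproducer.

```shell
# First approach: point SCRATCH at the current directory before running.
export SCRATCH=./

# Second approach: make sure ELPAROOT ends with a trailing "/".
# Demonstrated on a stand-in Makefile in a temporary directory (assumption:
# the real Makefile has a single "ELPAROOT = ..." line).
workdir=$(mktemp -d)
printf 'ELPAROOT = ../elpa22\n' > "$workdir/Makefile"

# Rewrite the ELPAROOT line in place, keeping a .bak copy of the original.
sed -i.bak 's|^ELPAROOT *=.*|ELPAROOT = ../elpa22/|' "$workdir/Makefile"

# Show the resulting line for a quick visual check.
grep '^ELPAROOT' "$workdir/Makefile"
```

Editing the line by hand works just as well; the `sed` form is only convenient when the reproducer is unpacked repeatedly.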

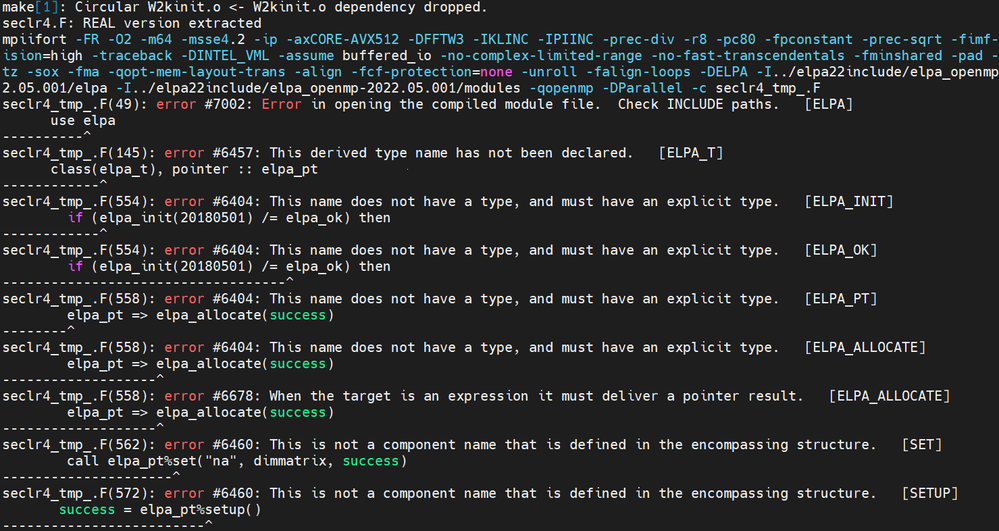

Hi,

I tried following the steps provided in the README, and this is the error I get when trying to run this step:

x lapw1 -p -orb -up

I have sourced the oneAPI setvars.sh script, but I am still getting the mpirun command not found error.

Thanks & Regards

Shivani

You may also need to do "ssh eim511 which mpirun" & "ssh eim512 ldd lapw1_mpi".
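
A minimal sketch of the same check for the local shell, in case it helps narrow down where `mpirun` goes missing. The helper name `check_cmd` is made up for illustration; the remote commands in the comments are the ones quoted above.

```shell
# Report whether a command resolves on PATH in the current shell.
# check_cmd is a hypothetical helper, not part of WIEN2k or oneAPI.
check_cmd() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "$1: found at $(command -v "$1")"
    else
        echo "$1: NOT found on PATH"
        return 1
    fi
}

# Local check; if this fails, setvars.sh has not taken effect in this shell.
check_cmd mpirun || echo "hint: source the oneAPI setvars.sh in this shell first"

# The same question on the compute nodes, where a non-interactive ssh shell
# may see a different PATH than the login shell:
#   ssh eim511 which mpirun
#   ssh eim512 ldd lapw1_mpi    # look for libraries reported as "not found"
```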

Hi,

Could you please let us know how much time the below command takes to execute?

x lapw1 -p -orb -up

I have been waiting for more than 1.5 hours but did not get any output.

Thanks & Regards

Shivani

You can do tail PtF.outputup_1, which should have something.

N.B., this is with a .machines file with 1:node01:64
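
Since a hang and a long run look the same from the terminal, one option is to bound the run with a wall-clock limit and inspect the output file afterwards. The following is a sketch under assumptions: it relies on GNU coreutils `timeout`, the helper name `run_bounded` is made up, and the limit is arbitrary. Processes started on other nodes by the run script may not be stopped by `timeout`.

```shell
# Run a command with a wall-clock limit. GNU timeout(1) exits with
# status 124 when the limit is reached. run_bounded is a hypothetical helper.
run_bounded() {
    limit=$1
    shift
    status=0
    timeout "$limit" "$@" || status=$?
    if [ "$status" -eq 124 ]; then
        echo "timed out after $limit: possible hang"
    else
        echo "finished with status $status"
    fi
    return "$status"
}

# Stand-in demo: a 5 second sleep with a 1 second limit.
run_bounded 1 sleep 5 || true

# For the real case this might look like (limit chosen arbitrarily):
#   run_bounded 2h x lapw1 -p -orb -up
#   tail PtF.outputup_1
```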

Hi,

>>>"You can do tail PtF.outputup_1, which should have something".

Could you please let us know how can we get the file PtF.outputup_1?

Thanks & Regards

Shivani

Are you following the instructions I gave? Your question suggests that you are not.

Hi,

I am following the instructions given by you.

As mentioned in my previous post, I am unable to proceed further after the command "x lapw1 -p -orb -up". It got stuck and did not proceed, so I am unable to get the file PtF.outputup_1 after running the command.

Could you please let us know how to proceed further?

Thanks & Regards

Shivani

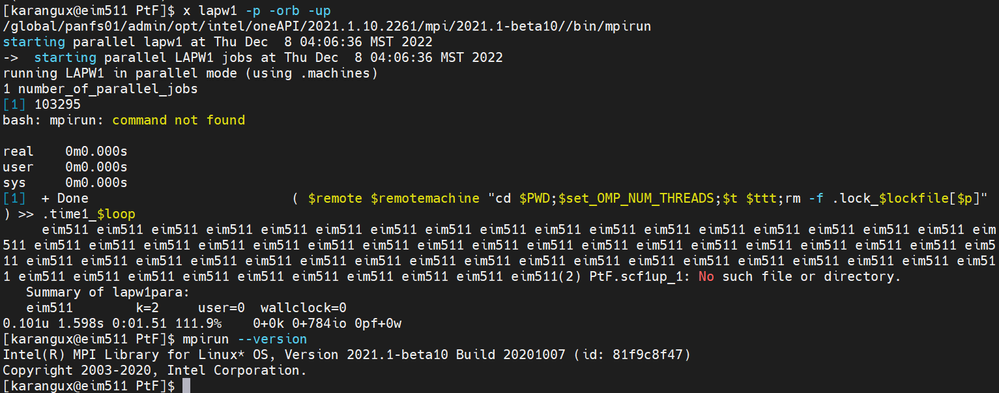

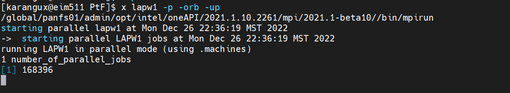

Hi,

Could you please let me know if I'm on the right track in replicating the issue that you are observing?

Please refer to the document below for the output of the x lapw1 -p -orb -up command and tail PtF.output1up_1 (5th step in the README).

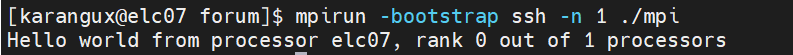

>>>"I suggest that you do a simple test such as a mpi "hello world"".

I am able to run the simple MPI hello world test program. Please refer to the screenshot below for the results.

Thanks & Regards

Shivani

You are reproducing the problem.

Depending upon which version of oneAPI you are using (as I mentioned before), it may hang forever -- what you are seeing -- or give the error code I reported in my first message. Since I do not have access to the impi source code I do not know what the error is due to, which is why I posted in the first place.

For reference, if instead of M1 you use the attached M1b or M1c, the program should run through without the problem -- but that is not a real cure. I also suggest replacing PtF.klist with the attached version, as it will be faster. (They are in the attached tgz, i.e. tar.gz, archive.)

Hi,

Apologies for the delay.

>>>"For reference, if instead of M1 you use M1b or M1c attached the program should run through without the problem"

Even though I have replaced M1 with M1b I am facing the same error. Could you please let us know if there is anything else that should be changed?

Thanks & Regards

Shivani

I am not sure that you are doing anything wrong. Please provide PtF.output1up_1 for me to check.

How long are you waiting for the program to run?

Hi,

Thank you for your patience.

We are able to reproduce the issue with M1 and are able to run without any problem with M1b. We are working on it and will get back to you.

Thanks & Regards

Shivani
