Hi All,

I am writing to ask about CFD model results that differ depending on the order of the MPI hosts.

I would like to hear your opinions on a theoretical level, because the code is long and complex and it would be difficult to reproduce the problem with sample code.

The current situation is that when nodes on different InfiniBand switches perform parallel computations, case #1 works well but case #2 does not.

"Does not work well" means that the computed values differ.

Background: host01-host04 are on IB switch #1 and host99 is on IB switch #2.

Case #1: host01, host02, host03, host04, host99 (i.e., the head node is host01)

Case #2: host99, host01, host02, host03, host04 (i.e., the head node is host99)

My guesses so far (hypothetical scenarios with no theoretical basis):

1) Miscommunication occurs when the head node is on another switch.

2) The ranks returned by MPI_COMM_RANK get reversed when it is called several times.

3) There is some problem (broken or mismatched) with MPI_COMM_WORLD.

4) Synchronization excludes the head node.

First of all, for debugging, I am putting print statements in several places to see which subroutine or function changes the value.

(I'll post more when the situation is updated.)

However, whichever function I eventually find, I am not sure the problem can be resolved at the code level, so I am posting to the forum to hear from anyone with similar experiences.

Thank you.

- Tags:

- Cluster Computing

- General Support

- Intel® Cluster Ready

- Message Passing Interface (MPI)

- Parallel Computing

Hi Kihang,

Ideally, this shouldn't be the case.

The order of hosts given in the hostfile is the order in which ranks are distributed among nodes based on -ppn.

Could you please confirm that the results are correct when you run solely on host99?

Also, please tell us how many ranks you are launching and how you are distributing them in both cases (the mpirun command).

Thanks

Prasanth

It is well known that the result of any floating-point reduction depends on the order of reduction, both locally (multi-threaded) and distributed (via MPI). Might the two different configurations perform reductions in different orders?
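To make the order dependence concrete, here is a minimal standalone sketch (not taken from the CFD code; the three values are assumptions chosen so that the effect is visible in single precision). It adds the same numbers in two different association orders:

```fortran
program reduction_order
  implicit none
  real :: a, b, c

  a =  1.0e8
  b = -1.0e8
  c =  1.0

  ! Same three values, two association orders.
  print *, '(a + b) + c =', (a + b) + c
  print *, 'a + (b + c) =', a + (b + c)
end program reduction_order
```

With IEEE 754 single-precision arithmetic the two sums are expected to differ, because 1.0 is smaller than the spacing between representable values near 1.0e8. A reduction whose partial sums are combined in a different rank order can change the result in the same way.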

Jim Dempsey

Thanks all,

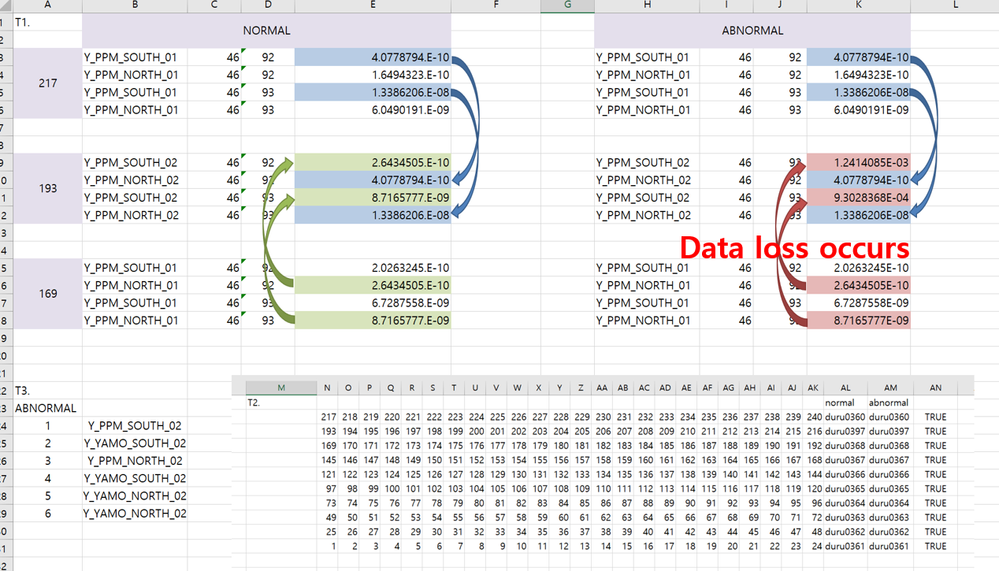

First of all, the error in the abnormal case is not just a floating-point reduction difference. It is a large miscomputation (one that causes the iteration to blow up).

Also, cn099 itself is not the problem, because I also tested with other nodes (even one of cn001~cn004).

My mpirun command with the LSF job scheduler is below:

#BSUB -n 120

#BSUB -R "span[ptile=24]" ## equivalent to "-ppn 24"

#BSUB -m "cn[001-004] cn099" ## with this option, the scheduler randomly produces one of two host list orders (#1: 1,2,3,4,99 and #2: 99,1,2,3,4)

mpiexec -n 120 ./program.exe

What I learned after analyzing more functions and results is that the problem does not recur every time with the same host list, and does not recur at the same array index (the index is random, but always in the same array).

The array in question is a pointer variable, and I suspect that the pointer may have been associated incorrectly.

Would this be more appropriate for the Fortran forum than the MPI forum?

Are you using asynchronous messaging, where either the pointer or the buffer it points to gets modified prior to message completion?

IOW, the race condition normally fails to conflict, meaning you normally see good results.

Occasionally it conflicts, meaning you see grossly erroneous results.

To track down conflicts while using asynchronous messaging, you may need to insert some diagnostic code:

1) After each send, insert a receive that waits for an acknowledgement (ACK) from the process that got the message (or for an ACK timeout).

2) After each receive that is not an acknowledgement, send an ACK back to the sender.

(The ACK contains information about the source and the data received, which may include a checksum and anything else necessary for debugging.)
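One possible shape for such an ACK diagnostic is sketched below. This is only an illustration under stated assumptions: the routine name `exchange_with_ack`, the tag values, and the two-element ACK layout (element count plus a plain sum used as a checksum) are all hypothetical, and no ACK timeout is implemented.

```fortran
! Hypothetical diagnostic wrapper: routine name, tags, and ACK layout are
! illustrative assumptions, not part of the original code.
subroutine exchange_with_ack(sendbuf, recvbuf, n, dest, src, comm)
  use mpi
  implicit none
  integer, intent(in)    :: n, dest, src, comm
  real,    intent(in)    :: sendbuf(n)
  real,    intent(inout) :: recvbuf(n)
  integer, parameter :: DATA_TAG = 0, ACK_TAG = 1
  real    :: ack_out(2), ack_in(2)
  integer :: req_data, req_ack, nrecv, me, ierr
  integer :: status(MPI_STATUS_SIZE)

  call MPI_COMM_RANK(comm, me, ierr)

  ! Post both receives up front so that each blocking send below finds a
  ! matching receive as soon as the peer has entered this routine.
  call MPI_IRECV(recvbuf, n, MPI_REAL, src,  DATA_TAG, comm, req_data, ierr)
  call MPI_IRECV(ack_in,  2, MPI_REAL, dest, ACK_TAG,  comm, req_ack,  ierr)

  call MPI_SEND(sendbuf, n, MPI_REAL, dest, DATA_TAG, comm, ierr)

  ! Complete the data receive, then tell the sender what actually arrived:
  ! the element count and a simple sum used as a checksum.
  call MPI_WAIT(req_data, status, ierr)
  call MPI_GET_COUNT(status, MPI_REAL, nrecv, ierr)
  ack_out(1) = real(nrecv)            ! exact only while nrecv < 2**24
  ack_out(2) = sum(recvbuf(1:nrecv))
  call MPI_SEND(ack_out, 2, MPI_REAL, src, ACK_TAG, comm, ierr)

  ! Compare the receiver's report with what this rank sent.
  call MPI_WAIT(req_ack, status, ierr)
  if (dest /= MPI_PROC_NULL) then
     if (ack_in(1) /= real(n) .or. ack_in(2) /= sum(sendbuf(1:n))) then
        print *, 'rank', me, ': ACK mismatch from rank', dest, &
                 ' count/sum reported:', ack_in, ' sum sent:', sum(sendbuf(1:n))
     end if
  end if
end subroutine exchange_with_ack
```

The ACK receive is posted before the data is sent, so two neighbours that exchange with each other do not end up both blocked waiting for an ACK. A mismatch in the sum alone should be treated as a hint rather than proof, since exact floating-point comparison assumes both sides sum in the same order.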

Jim Dempsey

Thanks Jim,

After further debugging, I think, as Jim said, that there is a problem with asynchronous communication.

Let me briefly explain the code. (I'm not sure about the copyright of the code, so I can't go into details.)

The code uses SEND and IRECV communication and proceeds in the following order:

    IF(ISRECV)THEN
      ALLOCATE(RECV(NUM))
      CALL MPI_IRECV(RECV, NUM, MPI_REAL, RPROC, 0, MPI_COMM_WORLD, IREQ, IERR)
    ENDIF
    IF(ISSEND)THEN
      ALLOCATE(SEND(NUM))
      LOCAL -> SEND ! DO LOOP: 3D(LOCAL) -> 1D(SEND)
      CALL MPI_SEND(SEND, NUM, MPI_REAL, SPROC, 0, MPI_COMM_WORLD, IERR)
      DEALLOCATE(SEND)
    ENDIF
    IF(ISRECV)THEN
      CALL MPI_WAIT(IREQ,ISTATUS,IERR)
      RECV -> LOCAL ! DO LOOP: 1D(RECV) -> 3D(LOCAL)
      DEALLOCATE(RECV)
    ENDIF
    RETURN

For additional information, the above subroutine is called twice each time (south->north and north->south).

Our team suspects that the immediate deallocation of SEND (line #10) caused the problem.

I can think of three possible solutions:

1) Insert MPI_BARRIER at the beginning

2) Insert MPI_BARRIER at the end and only then deallocate the temporary (SEND, RECV) variables

3) Change IRECV to RECV

I would like to test all three and choose the best one after evaluating performance (speed, stability).

But there is a problem: the issue is no longer reproduced once many PRINT statements are inserted above and below the subroutine.

I think the PRINT statements act as a kind of barrier, so it would be difficult to conduct a rigorous stability test.

I think I can leave only a minimal number of PRINT statements and run a repeated, experimental stability test. (If there is an error, we will know.)

I'll share the results when they come out.

Please let me know if you have any comments.

If not already present, insert code after every MPI_xxx(..., IERR) call to report IERR /= 0.
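A minimal sketch of such a check follows. The helper name `check_mpi` and its `location` argument are hypothetical. Note that the default MPI error handler (MPI_ERRORS_ARE_FATAL) aborts the job before IERR can be inspected, so this assumes the handler has been switched to MPI_ERRORS_RETURN.

```fortran
! Hypothetical helper; call it after every MPI call, e.g.
!   CALL MPI_SEND(SEND, NUM, MPI_REAL, SPROC, 0, MPI_COMM_WORLD, IERR)
!   CALL check_mpi(IERR, 'MPI_SEND south->north')
! Assumes that, once after MPI_INIT, the application has called
!   CALL MPI_COMM_SET_ERRHANDLER(MPI_COMM_WORLD, MPI_ERRORS_RETURN, IERR)
subroutine check_mpi(ierr, location)
  use mpi
  implicit none
  integer,          intent(in) :: ierr
  character(len=*), intent(in) :: location
  character(len=MPI_MAX_ERROR_STRING) :: msg
  integer :: msglen, ierr2, me

  if (ierr == MPI_SUCCESS) return

  ! Translate the error code into text and tag it with the reporting rank.
  call MPI_COMM_RANK(MPI_COMM_WORLD, me, ierr2)
  call MPI_ERROR_STRING(ierr, msg, msglen, ierr2)
  print *, 'rank', me, ': MPI error at ', location, ': ', msg(1:msglen)
end subroutine check_mpi
```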

Information not provided in posts above:

Is your program multi-threaded (for example, OpenMP or other threading) as well as multi-process (MPI)? If so, check for conflicting buffer usage among threads. This includes the IREQ structure.

Next, I would verify your suspicion relating to the deallocate. MPI_SEND is (supposedly) a blocking send (it waits until the send is complete, i.e., until the buffer may be reused). A test to make is to insert at line 9.5 (between the MPI_SEND call and DEALLOCATE(SEND)): SEND(NUM) = -12345.6; SEND(1) = -12345.6 ! last, then first

IOW, trash the beginning and end of the buffer. Choose a trash value that would never appear in the data. Then, on the receiving process, check for trashed data. If you observe trashed data, then MPI_SEND is reporting completion on the send side before all data has been sent: a bug in MPI_SEND.
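The test described above could be packaged roughly as follows. The module and routine names are hypothetical, and the sketch assumes NUM >= 1 and that -12345.6 never occurs in real data:

```fortran
! Hypothetical helpers for the trash-value test; names are illustrative.
module trash_test
  implicit none
  real, parameter :: TRASH = -12345.6   ! must never occur in real data
contains

  ! Call on the sender right after MPI_SEND returns, before DEALLOCATE(SEND).
  subroutine trash_ends(buf, n)
    integer, intent(in)    :: n
    real,    intent(inout) :: buf(n)
    buf(n) = TRASH   ! last, then first
    buf(1) = TRASH
  end subroutine trash_ends

  ! Call on the receiver right after MPI_WAIT returns, before unpacking.
  subroutine report_trash(buf, n, myrank)
    integer, intent(in) :: n, myrank
    real,    intent(in) :: buf(n)
    if (buf(1) == TRASH .or. buf(n) == TRASH) then
       print *, 'rank', myrank, ': trashed data received', buf(1), buf(n)
    end if
  end subroutine report_trash

end module trash_test
```

If `report_trash` ever prints, the receiver saw values that were written only after MPI_SEND had already returned on the sender.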

An unlikely but potential bug beyond your control: depending on your fabric, the asynchronous send will report done to MPI_SEND when the fabric has absorbed (buffered) the data, which can precede the receiving side receiving all the data (buffered data still in flight). The hypothetical bug would be that send completion is signaled when the DMA operation is set up, as opposed to when it completes. Observing the trashed values in the received buffer would confirm this.

If this is a fabric transport bug, report it to Intel (Bug button at the top of the forum), and include a link to this thread and any additional comments you have.

You should be aware that if the data in your 3D array is contiguous (stride = 1 for all array ranks, i.e., dimensions), you can SEND/RECV directly from/to the 3D array.
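As a rough sketch of sending directly from the 3D array, the routine below exchanges whole k-planes, which are contiguous in Fortran's column-major layout. It rests on several assumptions that are not in the original code: the domain is decomposed along the third index, k = 1 and k = nz are ghost planes, nz >= 3, and the blocking MPI_SENDRECV call is swapped in for the IRECV/SEND pair. The routine name and arguments are hypothetical.

```fortran
! Hypothetical sketch: exchange one contiguous k-plane without pack buffers.
subroutine exchange_planes(local, nx, ny, nz, north, south, comm)
  use mpi
  implicit none
  integer, intent(in)    :: nx, ny, nz, north, south, comm
  real,    intent(inout) :: local(nx, ny, nz)
  integer :: ierr
  integer :: status(MPI_STATUS_SIZE)

  ! Send the last interior plane (k = nz-1) to the north neighbour and
  ! receive the south ghost plane (k = 1) from the south neighbour.
  call MPI_SENDRECV(local(:, :, nz-1), nx*ny, MPI_REAL, north, 0, &
                    local(:, :, 1),    nx*ny, MPI_REAL, south, 0, &
                    comm, status, ierr)
end subroutine exchange_planes
```

A blocking call is used here on purpose: passing array sections to non-blocking MPI routines through the older `mpi` module can be unsafe if the compiler makes a temporary copy. Whether the halo in the real code is contiguous depends on which index the decomposition uses.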

An additional caution when using OpenMP .AND. performing concurrent send/recv of different slices of the same 3D array:

Depending on how you perform your DO loop copy to the 3D array, the instruction set available on the CPU, and the data layout of the 3D array (stride > 1), the code generated for the DO loop might use SIMD instructions to merge the new (strided) data into the 3D array. If hardware scatter is not available, or a masked write is not used, the merge would not be performed in an atomic manner.

So, if each process is also using OpenMP and working on different slices of the same 3D array, add

!DIR$ NOVECTOR

! DO LOOP: 1D(RECV) -> 3D(LOCAL)

I suggest you not use the MPI_BARRIER (sledgehammer approach) as this will add too much latency.

If you can function without a SEND and RECV buffer, please do so.

If not, consider something like:

    Subroutine ...(...)
    ...
    ! Plain REAL matches MPI_REAL; MPI_REAL is a datatype handle, not a kind.
    REAL, ALLOCATABLE, DIMENSION(:), SAVE :: SENDbuffer, RECVbuffer
    !$OMP THREADPRIVATE(SENDbuffer, RECVbuffer) ! Note, no effect if not compiled as OpenMP
    ...
    IF(ISRECV)THEN
      ! Grow the persistent receive buffer only when it is too small.
      IF(.NOT. ALLOCATED(RECVbuffer)) THEN
        ALLOCATE(RECVbuffer(NUM))
      ELSE
        IF(SIZE(RECVbuffer) < NUM) THEN
          DEALLOCATE(RECVbuffer)
          ALLOCATE(RECVbuffer(NUM))
        ENDIF
      ENDIF
      CALL MPI_IRECV(RECVbuffer, NUM, MPI_REAL, RPROC, 0, MPI_COMM_WORLD, IREQ, IERR)
    ENDIF
    IF(ISSEND)THEN
      ! Same policy for the send buffer; it is never deallocated on exit.
      IF(.NOT. ALLOCATED(SENDbuffer)) THEN
        ALLOCATE(SENDbuffer(NUM))
      ELSE
        IF(SIZE(SENDbuffer) < NUM) THEN
          DEALLOCATE(SENDbuffer)
          ALLOCATE(SENDbuffer(NUM))
        ENDIF
      ENDIF
      LOCAL -> SENDbuffer ! DO LOOP: 3D(LOCAL) -> 1D(SENDbuffer)
      CALL MPI_SEND(SENDbuffer, NUM, MPI_REAL, SPROC, 0, MPI_COMM_WORLD, IERR)
    ENDIF
    IF(ISRECV)THEN
      CALL MPI_WAIT(IREQ,ISTATUS,IERR)
      RECVbuffer -> LOCAL ! (novector) DO LOOP: 1D(RECVbuffer) -> 3D(LOCAL)
    ENDIF
    RETURN

Jim Dempsey

By the way, consider

!$!DIR$ NOVECTOR

IOW, when compiled with OpenMP, the above is "!DIR$ NOVECTOR".

When compiled without OpenMP, the above is not a compiler directive (it is a non-functioning comment).

Jim Dempsey

Dear Jim,

Before we start, thank you for your detailed answer.

Here is some additional information from my experiments, plus information that I had not given you.

Additional information:

1. This program uses only the MPI library, not OpenMP.

I have not tried the structures you recommended (IREQ, THREADPRIVATE, NOVECTOR).

2. Test results

As I said before, I tried two techniques (ISEND and BARRIER), but neither worked.

1) ISEND: It runs, but the same message loss occurs.

2) BARRIER: It's a little weird. I'm sure all the procs enter the subroutine, but they go into an infinite wait (hang).

3) SBUF, RBUF (Jim's suggestion): I reduced deallocation as much as possible, but the same message loss occurs.

3. I think the subroutine is not the problem

The reasons I don't think it's a subroutine problem:

1) When the hosts are assigned within the same IB switch, the message has never been lost in 20 repeats.

ex)

host004(IB1): 16 17 18 19 20

host003(IB1): 11 12 13 14 15

host002(IB1): 06 07 08 09 10

host001(IB1): 01 02 03 04 05 : always fine

2) When the East-West communication was always kept on the same node by adjusting the domain (NROW, NCOL), there was no problem.

(E-W communication uses the same subroutine.)

It has always been communication between different IB switches, in the South-North direction, that causes problems.

ex)

host037(IB2): 16 17 18 19 20 <- message loss occurs in S-N communication

host003(IB1): 11 12 13 14 15

host002(IB1): 06 07 08 09 10

host001(IB1): 01 02 03 04 05

4. Could the hang when using a barrier have a similar cause?

Could it be that the nodes on one IB switch (host001-host004) are waiting for the node on the other IB switch (host037), while host037 has already passed through the barrier?

Are there any MPI options that could be tuned or modified to address this?

I'm going to check whether other MPI libraries (Open MPI, MVAPICH, MPICH) show the same error.

Kihang,

I must first state that I am not well experienced with fabric issues, just application issues.

>>1... THREADPRIVATE, NOVECTOR

The threadprivate would only be helpful should the (any) process be multi-threaded. The omp directive is ignored when not compiling with OpenMP.

The NOVECTOR can be useful should you see data corruption. There has been an occasional bug where three-level nested loops that perform a scatter store have experienced issues when using SIMD instructions.

(The cause was mitigated by re-ordering the loop nest.)

This is not related to your hanging problem.

>>2.1)... message loss

There is a distinction to be made between data loss (some of the message didn't make it) and message loss (none of it made it). I was assuming the former.

>>2.2) ... BARRIER... I'm sure all the procs are going into the subroutine

The barrier requires all procs to reach the barrier. While the code is designed (intended) for all procs to reach the barrier, there are some unintended circumstances that can prevent a process from reaching it.

Examples:

Code in one or more processes crashes (usually the application aborts, but not always).

One (or more) of the processes (falsely or unexpectedly) assumes, detects, or signals a "get out of main loop" condition, and thus does not enter the send/recv procedure as you expect.

Your doWork that processes the messages contains convergence code that never converges.

Can you use the debugger to "attach to process" for each process to figure out where they are located?

You might first use top/Task Manager/other tool to see if one or more of the processes is in a compute loop (to locate a convergence issue).

Also, prior to making a test run of the problematic configuration, insert code following the END DO of the major loop that you assume all processes are located within (not in the subroutine that performs the send/recv, but after the exit of the outermost loop that you assume the processes are located within). Place PRINT *, myId, "done" or something like that there to identify the exceptional process.
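A skeleton of where that marker would go is sketched below. The program name, the loop bounds, and the placeholder loop body are assumptions; only the placement of the output after the outermost loop reflects the suggestion above.

```fortran
program main_loop_exit_marker
  use mpi
  use, intrinsic :: iso_fortran_env, only: output_unit
  implicit none
  integer, parameter :: nsteps = 10   ! hypothetical iteration count
  integer :: ierr, myid, step

  call MPI_INIT(ierr)
  call MPI_COMM_RANK(MPI_COMM_WORLD, myid, ierr)

  do step = 1, nsteps
     ! ... compute step and send/recv exchange would go here ...
  end do

  ! Any rank that prints this while others hang has left the main loop early.
  write(output_unit, *) myid, 'done'
  flush(output_unit)   ! push the line out before a possible hang in finalize

  call MPI_FINALIZE(ierr)
end program main_loop_exit_marker
```

Flushing matters here because buffered output from a rank that later hangs may otherwise never appear.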

>>2.3)...message loss occurs

Message loss is different from data corruption or dropout.

>>3. I think subroutine is not a problem

From what you have shown, I would agree. It would seem more like one of the causes listed in 2.2).

If the application hangs (as opposed to aborting), check whether all processes are at the barrier:

no process exited the main loop

no convergence issue

no process aborted without aborting all processes (this would cause a hang with no visible feedback).

If the above is futile in locating the hang, then I would add a means to probe the application to see what is happening.

1) Instrument the application to log where execution is. For example, copy the FPP-generated text for __FILE__ and __LINE__ into module data. Update these locations prior to, and following, the call to your send/recv routine, and anywhere else of interest. You can also expand the window data as needed.

2) Add code to the application startup that uses MPI_WIN to instantiate an MPI one-sided communication window onto the "where am I" file and line number.

3) Write a simple additional application that uses the same module and performs the same MPI_WIN instantiation. This simple application has simple console input to direct probes into the hung application.

4) The application launch then becomes:

mpiexec ... yourApplication ... : ... yourProbingApplication ...

IOW, use the ":" token to tack on the additional application as an additional rank (all ranks need not be the same image). Of course, you will want to place yourProbingApplication on a specific node (the one you are sitting at).

When the hang occurs, this additional application can peek at what is going on in the hung application. You will likely need to expand the information held in the MPI_WIN window as you delve deeper into the problem.

See: https://pages.tacc.utexas.edu/~eijkhout/pcse/html/mpi-onesided.html

to get you started.

Jim Dempsey

Dear Jim,

Thank you for your detailed guide and reference.

As we looked into MPI_Barrier in more detail:

When the barrier statement was put in one function (used in several places) and the program was executed repeatedly, the stop position was different in each trial. So I concluded that this is a bug or system problem, not a software problem.

Also, from now on I will distinguish between message loss and data loss (data corruption).

Honestly, it's a bit burdensome to analyze this in more detail with MPI_WIN. I feel there is a lot to learn in the MPI world. :-)

(I will add it to my future plans and research it later.)

The current situation is as follows.

As I said in the last post, I tested with a different MPI library, and when I compiled with Open MPI, the problem did not occur in 500 repeats. Since Open MPI works fine, I did not test with MPICH or MVAPICH. I plan to compare the performance of Open MPI and Intel MPI within the same IB switch, and switch to Open MPI if there is no significant performance difference.

If there is a performance difference, is it okay to report a bug by excerpting the part of the code where the problem occurs (if it is possible to excerpt the code in a reproducible form)?

Kihang

>>So I figured this out as a bug or system problem not software.

It is always easier to point the finger at something else.

Your problem has the characteristics of a timing issue .OR. an assumption in one rank about what is going on in another rank. Be aware that changing the MPI library can affect the timing. Therefore, should the timing issue be located within your application, changing the MPI library could potentially hide the issue (with your application)... for a while. The Heisenbug principle is that all software bugs are fixable during test. During production, well, that is a different story.

Can you show your complete inter-rank messaging routine? (This assumes it is centralized in one subroutine.)

Also, please show any broadcast messaging.

An additional question regarding your application's behavior:

Does your application use a broadcast in addition to point-to-point messaging?

In particular, would the manner in which you perform the broadcast (together with the timing of the receive by other ranks) interfere with the sequencing of your handshake point-to-point messaging?

Jim Dempsey

Hi Kihang,

You can check the correctness of an application using Intel® Trace Analyzer and Collector (ITAC).

Steps to run a sample with ITAC:

source /opt/intel/itac/bin/itacvars.sh

mpirun -check_mpi -n 4 ./app

This will help pinpoint where your application is failing.

Let us know if the insights provided by the tool help you in debugging the issue.

Thanks

Prasanth

Hi Kihang,

We are closing this thread assuming that your issue has been resolved.

Please raise a new thread for further queries.

Regards

Prasanth
