Hi all,

In my transcoder application, video decoding and preprocessing (rescaling, deinterlacing) are based on ffmpeg/libav and run on the CPU, while video encoding is based on Intel QSV and runs on the GPU.

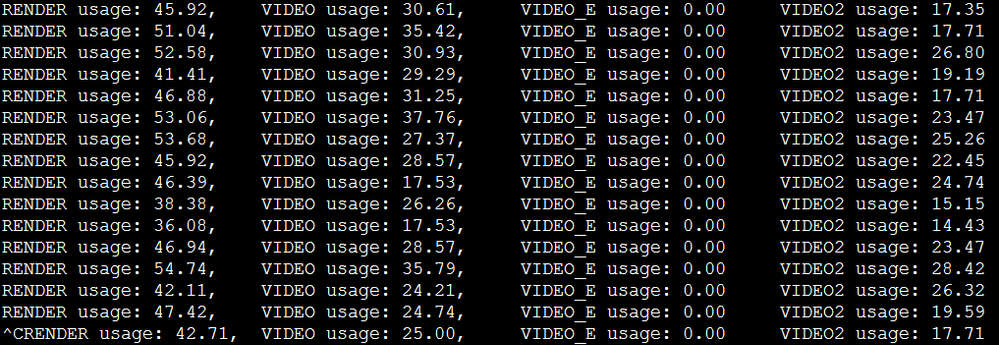

When the CPU is fully loaded (nearly 100%), Intel QSV encoding slows down even though GPU usage is not very high. Below is the metrics_monitor output:

I want to know whether full CPU usage can slow down GPU performance.

First, I ran a test with sample_encode_drm:

    @localhost samples]$ time sudo ./__cmake/intel64.make.release/__bin/release/sample_encode_drm h264 -i ~/input_1080P.yuv -o ~/output.h264 -w 1920 -h 1080
    libva info: VA-API version 0.35.0
    libva info: va_getDriverName() returns 0
    libva info: Trying to open /usr/lib64/dri/i965_drv_video.so
    libva info: Found init function __vaDriverInit_0_32
    libva info: va_openDriver() returns 0
    Encoding Sample Version 0.0.000.0000
    ...
    ...
    Processing started
    Frame number: 1000
    Processing finished

    real 0m3.217s
    user 0m0.982s
    sys 0m0.690s

The real (wall-clock) time is 0m3.217s.

Second, I used ffmpeg to encode a 1920x1080 video at best quality to load the CPU to nearly 100%, and ran the sample_encode_drm test again:

    @localhost samples]$ time sudo ./__cmake/intel64.make.release/__bin/release/sample_encode_drm h264 -i ~/input_1080P.yuv -o ~/output.h264 -w 1920 -h 1080
    libva info: VA-API version 0.35.0
    libva info: va_getDriverName() returns 0
    libva info: Trying to open /usr/lib64/dri/i965_drv_video.so
    libva info: Found init function __vaDriverInit_0_32
    libva info: va_openDriver() returns 0
    Encoding Sample Version 0.0.000.0000
    ...
    ...
    Processing started
    Frame number: 1000
    Processing finished

    real 0m3.509s
    user 0m2.012s
    sys 0m1.221s

The real (wall-clock) time is 0m3.509s.

Of course, when the CPU is fully loaded, I/O performance may also be slower.
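To put the two runs side by side, here is a small sketch that turns the `time` results into frames per second and a relative slowdown. The constants are copied from the two runs (1000 frames, 3.217 s and 3.509 s of real time); the helper names `fps` and `slowdown_percent` are made up for this illustration and are not part of the sample.

```cpp
// Wall-clock ("real") times and frame count copied from the two runs.
constexpr double kIdleSeconds   = 3.217;  // CPU idle
constexpr double kLoadedSeconds = 3.509;  // CPU nearly 100% busy
constexpr int    kFrames        = 1000;

// Frames per second for one run (hypothetical helper).
constexpr double fps(int frames, double seconds) {
    return frames / seconds;
}

// Relative slowdown of the loaded run versus the idle run, in percent
// (hypothetical helper).
constexpr double slowdown_percent(double idle, double loaded) {
    return (loaded - idle) / idle * 100.0;
}
```

With these numbers, `slowdown_percent(kIdleSeconds, kLoadedSeconds)` comes out at roughly 9%, and the throughput drops from about 311 fps to about 285 fps. Over the same two runs, the CPU time of the sample itself (user + sys) roughly doubles, from 1.672 s to 3.233 s.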

Sorry for my bad English.

Hi Yabo,

I don't think fully occupying the CPU should reduce graphics performance; the two work independently of each other's workload. One reason your test does not reflect this is that sample_encode_drm reads its input from a file and writes its output to a file. That file I/O is done on the CPU cores, and the sample is not heavily optimized, so a fully occupied CPU could slow down your encode test. You can exclude the read and write functions from the timing (so that only the GPU work is measured) and compare the test time or fps in both scenarios: CPU idle and CPU completely loaded.

Thanks,

Surbhi
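One way to follow this suggestion is to accumulate time only around the encode calls and leave the file read and write outside the timed region. The sketch below is a generic helper built on `std::chrono::steady_clock`; the class name `SectionTimer` is hypothetical and not part of the Media SDK samples.

```cpp
#include <chrono>

// Accumulates elapsed time over selected code sections only, so that
// untimed work (for example file reads and writes) is excluded.
// Hypothetical helper, not part of the Media SDK samples.
class SectionTimer {
public:
    using Clock = std::chrono::steady_clock;  // monotonic, unaffected by clock changes

    // Mark the beginning of a timed section.
    void start() { begin_ = Clock::now(); }

    // Mark the end of a timed section and add its duration to the total.
    void stop() {
        total_ += Clock::now() - begin_;
        ++count_;
    }

    // Total time spent inside timed sections, in seconds.
    double total_seconds() const {
        return std::chrono::duration<double>(total_).count();
    }

    // Number of completed timed sections.
    long count() const { return count_; }

private:
    Clock::time_point begin_{};
    Clock::duration   total_{Clock::duration::zero()};
    long              count_ = 0;
};
```

In the encode loop, `start()` would go just before the frame is submitted and `stop()` just after the encoded frame has been synchronized, while the calls that load the frame from disk and write the bitstream stay outside that pair. Comparing `total_seconds()` between the idle and the loaded run then leaves out the file I/O.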


Hi Surbhi,

I ran some tests as you suggested. I added some code to sample_encode/src/pipeline_encode.cpp (lines marked with + are new):

    +#include <sys/time.h>  // gettimeofday

    +// Returns the current wall-clock time in microseconds.
    +static long long getCurrentTime()
    +{
    +    struct timeval tv;
    +    gettimeofday(&tv, NULL);
    +    // Widen before multiplying so a 32-bit long cannot overflow.
    +    return (long long)tv.tv_sec * 1000000LL + tv.tv_usec;
    +}

     ...

     for (;;)
     {
    +    long long start_us = getCurrentTime();
         // at this point surface for encoder contains either a frame from file or a frame processed by vpp
         sts = m_pmfxENC->EncodeFrameAsync(NULL, &m_pEncSurfaces[nEncSurfIdx], &pCurrentTask->mfxBS, &pCurrentTask->EncSyncP);
    +    // Print the elapsed time in seconds.
    +    fprintf(stderr, "EncodeFrameAsync() take %lf\n", (getCurrentTime() - start_us) / (double)1000000);

If the CPU is idle, sample_encode_drm output:

    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000005
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000005
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000006
    EncodeFrameAsync() take 0.000005
    EncodeFrameAsync() take 0.000005
    EncodeFrameAsync() take 0.000004
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003
    EncodeFrameAsync() take 0.000003

If the CPU is completely loaded, sample_encode_drm output:

    EncodeFrameAsync() take 0.000008
    EncodeFrameAsync() take 0.000008
    EncodeFrameAsync() take 0.000007
    EncodeFrameAsync() take 0.000020
    EncodeFrameAsync() take 0.000018
    EncodeFrameAsync() take 0.000009
    EncodeFrameAsync() take 0.000025
    EncodeFrameAsync() take 0.000016
    EncodeFrameAsync() take 0.000009
    EncodeFrameAsync() take 0.000021
    EncodeFrameAsync() take 0.000007
    EncodeFrameAsync() take 0.000009
    EncodeFrameAsync() take 0.000032
    EncodeFrameAsync() take 0.000017
    EncodeFrameAsync() take 0.000009
    EncodeFrameAsync() take 0.000018
    EncodeFrameAsync() take 0.000018
    EncodeFrameAsync() take 0.000010
    EncodeFrameAsync() take 0.000023
    EncodeFrameAsync() take 0.000009
    EncodeFrameAsync() take 0.000009

With a completely loaded CPU, each EncodeFrameAsync() call takes more time than with an idle CPU.
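To quantify the difference, the sketch below summarizes the two sets of samples listed in this post, converted to microseconds. The `Summary` struct and `summarize` function are hypothetical names for this illustration. One caveat, as far as I understand the Media SDK API: EncodeFrameAsync() is asynchronous and only submits the frame and returns a sync point, so these numbers most likely reflect CPU-side submission cost rather than the time the GPU spends encoding.

```cpp
#include <algorithm>
#include <numeric>
#include <vector>

// Mean and maximum of a set of timing samples (hypothetical helper type).
struct Summary {
    double mean_us;
    double max_us;
};

// Summarizes samples given in microseconds; an empty input yields zeros.
Summary summarize(const std::vector<double>& samples_us) {
    if (samples_us.empty()) {
        return {0.0, 0.0};
    }
    const double sum = std::accumulate(samples_us.begin(), samples_us.end(), 0.0);
    const double max = *std::max_element(samples_us.begin(), samples_us.end());
    return {sum / static_cast<double>(samples_us.size()), max};
}

// EncodeFrameAsync() timings from this post, in microseconds.
const std::vector<double> kIdleUs = {
    6, 6, 6, 6, 5, 3, 3, 3, 3, 3, 3, 6, 5, 6, 6, 5, 5, 4, 3, 3, 3};
const std::vector<double> kLoadedUs = {
    8, 8, 7, 20, 18, 9, 25, 16, 9, 21, 7, 9, 32, 17, 9, 18, 18, 10, 23, 9, 9};
```

For these 21 samples each, `summarize(kIdleUs)` gives a mean of about 4.4 µs with a maximum of 6 µs, and `summarize(kLoadedUs)` gives a mean of about 14.4 µs with a maximum of 32 µs. Both are tiny compared with the roughly 3 ms per frame implied by the overall run time, so timing the call together with the wait on its sync point would probably be a better measure of the encode itself.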
