Intel IGP CL team,

I'm seeing a huge performance regression between Haswell and Broadwell when comparing 64-bit ulongs or performing min/max operations on them.

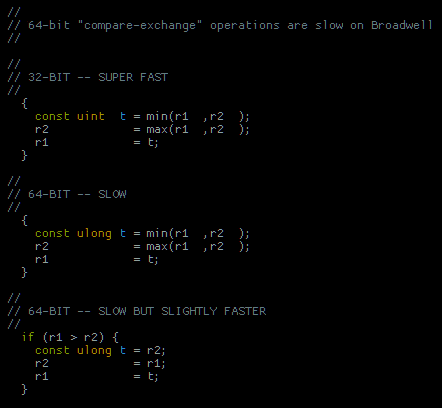

Please check the number of instructions that Broadwell is generating for the two ulong (64-bit) "compare-exchange" sequences below.

The 64-bit compare-exchange sequences are running half as fast on Broadwell when compared to Haswell.

The 32-bit compare-exchanges appear to be correct (they're very fast).
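For readers without the screenshot, a compare-exchange takes two keys and returns them in sorted order. The sketch below is plain Python for illustration only, not the kernel code from this post. It shows the min/max form and a compare-plus-select form, and it builds the 64-bit comparison from 32-bit halves. That decomposition is an assumption about how a 64-bit compare might be lowered on hardware without native 64-bit integer compares, and the function names are hypothetical.

```python
MASK32 = 0xFFFFFFFF

def cmpxchg_minmax(a, b):
    """Compare-exchange via min/max: returns (smaller, larger)."""
    return min(a, b), max(a, b)

def less_than_u64_via_u32(a, b):
    """Unsigned 64-bit a < b using only 32-bit comparisons (assumed lowering)."""
    a_hi, a_lo = a >> 32, a & MASK32
    b_hi, b_lo = b >> 32, b & MASK32
    # The high words decide unless they are equal, in which case the low words decide.
    return a_hi < b_hi or (a_hi == b_hi and a_lo < b_lo)

def cmpxchg_select_u64(a, b):
    """Compare-exchange via one comparison and two selects."""
    swap = less_than_u64_via_u32(b, a)
    smaller = b if swap else a
    larger = a if swap else b
    return smaller, larger

pairs = [(5, 3), (3, 5), (1 << 32, MASK32), (7, 7)]
for a, b in pairs:
    assert cmpxchg_select_u64(a, b) == cmpxchg_minmax(a, b)
```

Under this model a single 64-bit comparison already costs three 32-bit comparisons plus the logic to combine them, and each select moves two 32-bit words, which is one way the 64-bit sequence could end up costing more than twice the 32-bit one.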

Hi Allan,

Could you send a kernel and possibly a reproducer with this? What are the buffer sizes you are testing this on? Your driver version on BDW and HSW?

The driver is the most recent for HSW/BDW: 10.18.14.4156.

Buffer sizes were constructed to mostly fill the register file of each EU. Over 100 registers are in use per work item and the goal is to have EUs running in SIMD8.

- Haswell HD4600: 448,000 bytes

- Broadwell HD6000: 1,075,200 bytes
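The two buffer sizes can be sanity-checked against the occupancy figures quoted elsewhere in this thread (20 EUs on the HD4600, 48 EUs on the HD6000, 7 threads per EU, SIMD8). This is a minimal sketch of that arithmetic; the helper names are hypothetical.

```python
def work_items(eus, threads_per_eu=7, simd_width=8):
    """Work-items resident when every EU thread runs a SIMD8 kernel."""
    return eus * threads_per_eu * simd_width

def bytes_per_work_item(buffer_bytes, eus):
    """Buffer bytes handled by each resident work-item."""
    return buffer_bytes // work_items(eus)

hsw = bytes_per_work_item(448_000, eus=20)      # Haswell HD4600
bdw = bytes_per_work_item(1_075_200, eus=48)    # Broadwell HD6000
print(hsw, bdw)  # 400 400
```

Both devices work out to 400 bytes per work-item, which is 100 32-bit keys or 50 64-bit keys held in registers.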

To be more clear, HSW/BDW seem to execute the 32-bit (uint) kernel well.

On the same buffer size, HSW executes 64-bit (ulong) variants of the attached compare-exchange sequences at less than half the 32-bit throughput -- this is reasonable and expected. [ Edit: it's a lot closer to 1/2 rate on other platforms but those devices have min/max support in hardware. ]

But BDW executes 64-bit (ulong) compare-exchanges at 1/4th to 1/5th the rate instead of less than 1/2. That's a major regression from HSW and should be easy to spot in the native code.

I can't send the kernel. I pasted the suspect sequences in the original message. The answer should be revealed by dumping the actual generated GEN instructions. Benchmarking a small reproducer probably wouldn't be useful since the global loads/stores would dominate the comp-exch performance. Ping me if you need to know more.

I'm still working this issue.

Broadwell's kernel codegen appears broken for high register count kernels.

It would be great to solve this issue so I can publish my performance numbers.

Could the performance issue I'm seeing on BDW be due to spills?

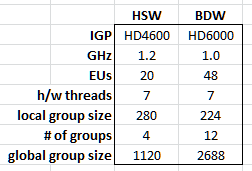

I'm launching a fully-loaded workgroup that occupies the IGP with 7 threads per EU.

Haswell performs spectacularly while Broadwell just wheezes and dies.

A performance plot leads me to hypothesize that the BDW kernel is limited to 64 registers/workitem, as if it were a SIMD16 kernel, and thus spilling like crazy.
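The spill hypothesis can be put in numbers. The sketch below assumes each EU thread has 128 general registers of 32 bytes each, which matches the "128 limit" mentioned in this post, and it ignores any registers the compiler reserves for addressing or other overhead. The function names are hypothetical.

```python
GRF_REGISTERS_PER_THREAD = 128  # assumed per-thread register count
GRF_REGISTER_BYTES = 32         # assumed register width (8 x 32-bit lanes)

def regs_per_work_item(simd_width, value_bytes=4):
    """32-bit registers available to each work-item at a given SIMD width."""
    thread_bytes = GRF_REGISTERS_PER_THREAD * GRF_REGISTER_BYTES
    return thread_bytes // (simd_width * value_bytes)

def spilled(live_registers, simd_width):
    """Registers that no longer fit and would have to spill to memory."""
    return max(0, live_registers - regs_per_work_item(simd_width))

print(regs_per_work_item(8), regs_per_work_item(16))  # 128 64
print(spilled(100, 8), spilled(100, 16))              # 0 36
```

A kernel with 100 live 32-bit registers per work-item fits in SIMD8 but would spill roughly a third of them if it were compiled as SIMD16.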

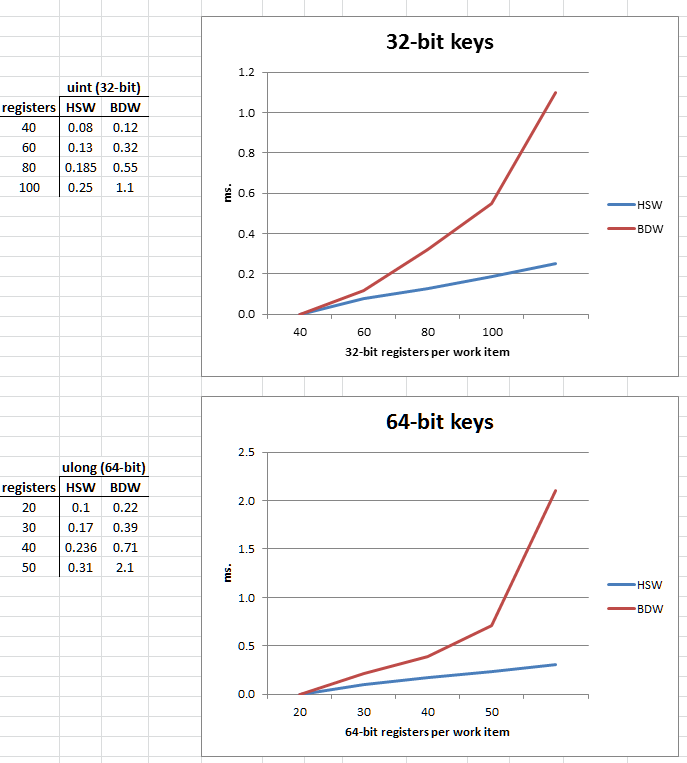

The plots show two sorting kernels.

The top chart plots the execution time of a 32-bit sorting kernel on an HSW HD4600 and BDW HD6000. Kernels with 40-100 registers per work-item are plotted.

The bottom chart plots the execution time of a 64-bit key sorting kernel with 20-50 64-bit registers per work-item.

The 20 EU Haswell HD4600 is using at least 100 live registers (probably close to the 128 limit) and performs really really well.

The OpenCL Code Builder's "Deep Analysis" shows that each IGP runs exactly 7 EU threads per EU.

The plot below should illustrate why I think there is a bug:

Both test PCs are running Win7/x64 + a 4K LCD over displayport.

I'm also running the brand new 10.18.14.4170 driver. There was no performance change from the previous driver.

Both PCs have dual-channel DDR3 1866 memory and benchmark at ~20 GB/sec.

I've also tested a kernel that simply loads and stores the < 1 MB of data (no sorting) and its runtime is negligible.

Allan,

Is there any chance you could send SPIR files instead of the actual code (you should be legally protected)? The other option is for us to sign the NDA.

Do you use barriers, local memory? If yes, how much? The local WG sizes: do you need them to be like that? Did you try the kernel analysis to figure out if the local WG sizes are the best for your kernel?

I forwarded this discussion to a driver architect (unfortunately, he is out today), but I am afraid there is not enough info here.

The BDW perf looks like a spilling issue.

Got it. I'll message you directly w/the details.

Thanks, Robert.

Allan,

I got your private message and forwarded it to our Compute Architect for further analysis. I will keep you posted on anything he finds out. Thank you for providing the info!

Is there any progress on this issue?

Allan,

As far as I know there was no progress on this issue. I will ping the architect again. Sorry that it is taking so long.

Testing this morning on Windows 10 with the new Code Builder shows a significant improvement on a Broadwell HD 6000 in a NUC 5i5RYX.

On Broadwell I'm now seeing almost double the performance of Haswell on the high register count block sorting kernels.

These are pure 32-bit and 64-bit integer kernels so perhaps Broadwell's integer throughput improvements are finally revealed?

Any confirmation if my issue was intentionally solved?

I hadn't benchmarked my Broadwell kernels in a while but there seem to have been further improvements to the OpenCL driver.

Using the .4444 Beta driver on Win10/x64 and a 5i5RYH NUC I'm getting some excellent sorting rates on the Broadwell EUs.

An HD6000 launching a single 224 thread workgroup can sort 28x400 64-bit keys in 62 usecs (execution time).

For these kernels, a 224 thread workgroup is basically half of a subslice and, presumably, would only be executing on at most 8 EUs (right?).

By comparison, a 384 core Quadro K620 sorts 16,384 64-bit keys in 120 usecs using an entire 128 core multiprocessor.
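Put as rates, the two single-workgroup figures above compare as follows. This is only a rough comparison, since the two devices are sorting different numbers of keys and sorting cost does not scale linearly with array size.

```python
def keys_per_usec(keys, usecs):
    """Sorting rate in keys per microsecond of execution time."""
    return keys / usecs

hd6000 = keys_per_usec(28 * 400, 62)   # 11,200 keys in 62 usecs
k620 = keys_per_usec(16_384, 120)      # 16,384 keys in 120 usecs

print(round(hd6000, 1), round(k620, 1))  # 180.6 136.5
```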

Since the HD6000 has 48 EUs it's also worth looking at how the IGP performs when fully utilized.

In this benchmark, 12 "slabs" of 336x400 64-bit keys can be simultaneously sorted in as little as 159 usecs (execution time).
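The figure of 12 slabs lines up with the occupancy numbers in this thread: 48 EUs with 7 threads each in SIMD8 hold exactly 12 workgroups of 224 work-items. The sketch below checks that arithmetic. Reading "336x400" as the total key count across all 12 slabs (12 x 28 = 336) is an assumption, not something the post states outright.

```python
EUS = 48
THREADS_PER_EU = 7
SIMD_WIDTH = 8
WORKGROUP_SIZE = 224

resident_work_items = EUS * THREADS_PER_EU * SIMD_WIDTH   # 2688
workgroups = resident_work_items // WORKGROUP_SIZE        # 12

# Assumed reading: each workgroup sorts one 28x400 slab.
total_keys = workgroups * 28 * 400                        # 134,400 = 336 x 400
rate = total_keys / 159                                   # keys per usec

print(workgroups, total_keys, round(rate, 1))  # 12 134400 845.3
```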

That's really really good.

When I get some time I'll port the sorted "slab" merging kernels and benchmark sorting of larger arrays.

I can only imagine how a Skull Canyon 72 EU Skylake IGP would perform!

Edit: Looking back at my old Haswell benchmarks, the Broadwell EUs appear to double the throughput of Haswell EUs. I believe this makes sense because 64-bit integer comparisons are probably benefiting from the BDW's doubled IOP throughput -- assuming the "SEL" op is doubled as well? I'd have to inspect the assembly to find out. Also, the Haswell HD 4600 is clocked higher than the HD 6000.

Allan,

Yes, BDW has two FPUs per EU that are capable of both Integer and Floating point ops, so that's what you are seeing.

Are there any general optimization rules when loading/storing 64-bit elements to local memory?

I've continued porting some code from CUDA to a 5775C/HD6200 (it's quite a bit faster than the non-Iris Pro HD6000) but I'm seeing a lot of time spent on what I think is local memory load/store overhead.

From what I've gleaned, Intel Processor Graphics has a local memory region that has 16 32-bit banks, right?

Is there anything beyond that fact that I would need to know when dealing with 64-bit load/store ops?
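To make the question concrete, here is a toy model of the layout described above: 16 banks, each 32 bits wide, with consecutive 32-bit words mapped to consecutive banks. The thread does not confirm this layout, so the model and its function names are assumptions. It counts how many accesses land on the busiest bank when 8 lanes each load one 64-bit element.

```python
from collections import Counter

NUM_BANKS = 16   # assumed bank count
BANK_BYTES = 4   # assumed bank width (32 bits)

def banks_touched(byte_addr, size_bytes):
    """Banks hit by one access, assuming word-interleaved banks."""
    first_word = byte_addr // BANK_BYTES
    words = size_bytes // BANK_BYTES
    return [(first_word + w) % NUM_BANKS for w in range(words)]

def worst_bank_load(lane_addrs, size_bytes):
    """Accesses landing on the busiest bank; 1 means conflict-free."""
    hits = Counter()
    for addr in lane_addrs:
        hits.update(banks_touched(addr, size_bytes))
    return max(hits.values())

unit_stride = [lane * 8 for lane in range(8)]     # consecutive 64-bit elements
stride_two = [lane * 16 for lane in range(8)]     # every other 64-bit element
stride_sixteen = [lane * 128 for lane in range(8)]

print(worst_bank_load(unit_stride, 8))     # 1
print(worst_bank_load(stride_two, 8))      # 2
print(worst_bank_load(stride_sixteen, 8))  # 8
```

In this model a unit-stride SIMD8 access to 64-bit elements covers all 16 banks exactly once, while strided patterns pile accesses onto a few banks. Whether the hardware serializes those the way the model suggests would need confirmation from Intel.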

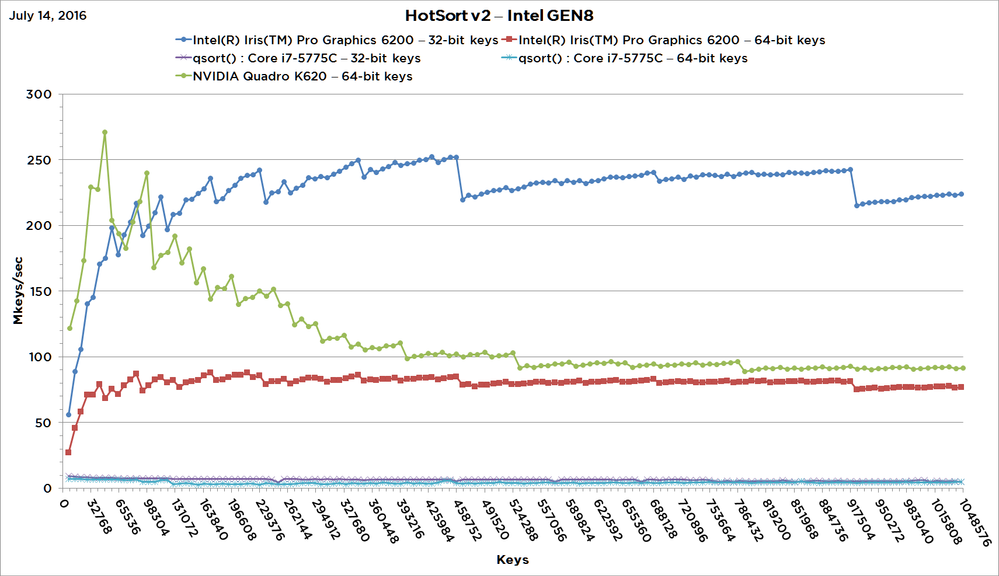

I had some time over the July 4th holiday to finish up porting the merging kernels to my HotSort sorting library and have some early and excellent numbers to share.

When sorting 1M 32-bit keys on an HD 6200, the HotSort v2 algorithm is over 40x faster than the VS2013 library's qsort() algorithm!

The attached plot compares the sorting rates of HotSort on an HD 6200 and a Quadro K620.

Note that an HD 6200 and Quadro K620 both have 384 FPUs running at ~1.1 GHz and have 29 GB/sec of DDR3 bandwidth.

The HD 6200 results are very good and hold up well against discrete GPUs.

The 32-bit sorting kernels appear to be working as expected but the 64-bit kernels are underperforming.

My guess is that at least one of the 64-bit comparisons, 64-bit SIMD8 lane shuffles or 64-bit load/stores to SLM costs more than the expected 2x over its 32-bit equivalent.

Even with these unfixed bugs/optimizations, the 64-bit GEN8 library throughput is within 85% of a Quadro K620.

More here!
