This is a follow-up to my previous question: https://community.intel.com/t5/Intel-oneAPI-HPC-Toolkit/Debugging-MPI-codes-with-gtool-or-gdb-hangs-nothing-happens-when/m-p/1284089

I was told the cause of the issue was that I was not using a supported OS at the time, but I am now using a supported OS (Ubuntu 20.04.4 LTS) that was freshly installed just a couple of hours ago.

I still get the same error when I try to use the -gtool option to attach GDB to my code. Here is what I used to run my code:

mpirun -n 2 -gtool "gdb:0,1=attach" ./fogo

and this is what I get:

mpigdb: attaching to Cannot access memory

mpigdb: hangup detected: while read from [0]

mpigdb: attaching to Cannot access memory

mpigdb: hangup detected: while read from [1]

I have also tried replacing gdb with gdb-oneapi but got the same result. I could use this option on our Compute Canada clusters, but most of the nodes are slow for debugging / compilation.

I get the same error when running:

mpirun -n 3 -gtool "gdb:0,1=attach" $I_MPI_ROOT/bin/IMB-MPI1

Any idea what is causing this issue?

Hi,

Thanks for reaching out to us.

We have reported this issue to the concerned development team. They are looking into your issue.

Thanks & Regards,

Santosh

Hi,

Could you please try Intel MPI 2021.7 and let us know if the issue persists?

Thanks & Regards,

Santosh

Hi,

We haven't heard back from you. Could you please try Intel MPI 2021.7 and let us know if the issue persists?

Thanks & Regards,

Santosh

Hi,

Please follow the steps below to resolve your issue:

Steps:

- sudo sysctl -w kernel.yama.ptrace_scope=0

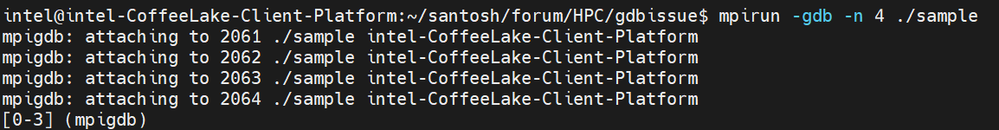

- mpirun -gdb -n 4 ./sample

We tried this on our end and were able to get the desired results, as shown in the screenshot.

Please try it on your end and let us know if you face any issues.

Thanks & Regards,

Santosh
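For readers who would rather not leave the relaxed setting in place, the two steps above could be wrapped so that the previous value is restored once the debug session ends. This is only a sketch under stated assumptions: `with_relaxed_ptrace` and the `SYSCTL` override variable are hypothetical names introduced here for illustration, not part of Intel MPI or sysctl, and it assumes the current scope is not 3, which the kernel does not allow to be changed.

```shell
#!/bin/sh
# SYSCTL is a hypothetical override hook introduced for this sketch;
# by default the real sysctl is invoked through sudo.
SYSCTL=${SYSCTL:-"sudo sysctl"}

# Run a command with kernel.yama.ptrace_scope=0, then restore the old value.
with_relaxed_ptrace() {
    # Remember the current scope (sysctl -n prints the value only).
    old=$($SYSCTL -n kernel.yama.ptrace_scope) || return 1
    # Relax the scope for the duration of the command.
    $SYSCTL -w kernel.yama.ptrace_scope=0 >/dev/null || return 1
    "$@"
    status=$?
    # Put the previous value back, whatever the command returned.
    $SYSCTL -w "kernel.yama.ptrace_scope=$old" >/dev/null
    return "$status"
}

# Usage, with the same command as in the steps above:
#   with_relaxed_ptrace mpirun -gdb -n 4 ./sample
```

Note that `sysctl -w` only changes the running kernel, so the setting reverts to the system default after a reboot in any case.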

Hi Santosh,

Thanks very much for this. I have tried your solution and it does seem to work on my computer as well. However, it won't work without first changing the setting as you suggested, which is not entirely unacceptable.

Hi,

The issue is specific to systems where the PTRACE scope is set to "1" (kernel.yama.ptrace_scope = 1).

- The PTRACE system is used for debugging. With it, a single user process can attach to any other dumpable process owned by the same user. In the case of malicious software, it is possible to use PTRACE to access credentials that exist in memory (re-using existing SSH connections, extracting GPG agent information, etc).

- A PTRACE scope of "0" is the more permissive mode. A scope of "1" limits PTRACE only to direct child processes (e.g. "gdb name-of-program" and "strace -f name-of-program" work, but gdb's "attach" and "strace -fp $PID" do not). The PTRACE scope is ignored when a user has CAP_SYS_PTRACE, so "sudo strace -fp $PID" will work as before.

For more details see: https://wiki.ubuntu.com/SecurityTeam/Roadmap/KernelHardening#ptrace

In general, PTRACE is not needed for the average running Ubuntu system. To that end, the default is to set the PTRACE scope to "1". This value (kernel.yama.ptrace_scope = 1) may not be appropriate for developers or servers with only admin accounts.

Thus, the issue can be resolved simply by setting kernel.yama.ptrace_scope = 0, and it is not an issue related to Intel MPI.

Thanks & Regards,

Santosh
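To check which scope a machine is currently using before trying to attach, something along these lines may help. It is a minimal sketch: `describe_ptrace_scope` is a hypothetical helper name, and the descriptions for values 2 and 3 come from the Linux kernel's Yama documentation rather than from this thread.

```shell
#!/bin/sh
# Map a Yama ptrace_scope value to a short description.
# Values 0 and 1 are discussed above; 2 and 3 follow the kernel's Yama docs.
describe_ptrace_scope() {
    case "$1" in
        0) echo "classic: any same-user process may be attached" ;;
        1) echo "restricted: only descendant processes may be attached" ;;
        2) echo "admin-only: attaching requires CAP_SYS_PTRACE" ;;
        3) echo "no attach: ptrace attach is disabled" ;;
        *) echo "unknown" ;;
    esac
}

# Report the value on the current machine, if the Yama sysctl is present.
scope_file=/proc/sys/kernel/yama/ptrace_scope
if [ -r "$scope_file" ]; then
    scope=$(cat "$scope_file")
    echo "kernel.yama.ptrace_scope = $scope ($(describe_ptrace_scope "$scope"))"
else
    echo "Yama ptrace_scope is not available on this kernel"
fi
```

With a value of 1, launching the program directly under gdb still works, while attach-style debugging such as the -gtool "gdb:0,1=attach" command from the original question is expected to fail.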

Hi Santosh,

Thanks very much for your detailed explanation. I now understand!

Hi,

Thanks for the quick response.

As your issue is resolved, we are closing this thread. If you need any additional information, please post a new question, as this thread will no longer be monitored by Intel.

Thanks & Regards,

Santosh
