"Intel_Labs" Posts in "Artificial Intelligence (AI)"

Intel Labs’ Top Moments of 2024

01-03-2025

This year, Intel Labs researchers developed innovative technologies, received awards, obtained fello...

Intel Labs AI Researchers Featured as Part of Innovation Selects 2024

12-20-2024

Intel’s Innovation Selects features a collection of specially curated technical talks and demos, inc...

CLIP-InterpreT: Paving the Way for Transparent and Responsible AI in Vision-Language Models

12-17-2024

CLIP-InterpreT offers a suite of five interpretability analyses to understand the inner workings of ...

LVLM-Interpret: Explaining Decision-Making Processes in Large Vision-Language Models

12-13-2024

Understanding the internal mechanisms of large vision-language models (LVLMs) is a complex task. LVL...

Building Trust in AI: An End-to-End Approach for the Machine Learning Model Lifecycle

12-11-2024

At Intel Labs, we believe that responsible AI begins with ensuring the integrity and transparency of...

Intel Presents Novel AI Research at NeurIPS 2024

12-10-2024

The Conference on Neural Information Processing Systems (NeurIPS 2024) will run from Tuesday, Decemb...

Intel Researchers Organize Workshops & Socials at NeurIPS 2024

12-03-2024

Intel employees have organized several workshops at NeurIPS 2024, which are co-located with this yea...

Intel Labs Introduces RAG-FiT Open-Source Framework for Retrieval Augmented Generation in LLMs

10-09-2024

Intel Labs introduces RAG-FiT, an open-source framework for augmenting large language models for ret...

The AI Developer’s Dilemma: Proprietary AI vs. Open Source Ecosystem

10-02-2024

Fundamental Choices Impacting Integration and Deployment at Scale of GenAI into Businesses

Intel Labs and Collaborators Present Novel Computer Vision Approaches at ECCV 2024

09-30-2024

Intel Labs and collaborators will present six papers focused on computer vision and machine learning...

ACL 2024 Outstanding Paper Awarded to Intel Labs Collaboration on Evaluating Opinions in LLMs

08-12-2024

Research collaborators from Bocconi University, Allen Institute for AI, Intel Labs, University of Ox...

Intel Research on LiDAR Localization with Semantic Awareness Receives Highlight Award at CVPR 2024

07-31-2024

Researchers at Intel Labs, in collaboration with Xiamen University, have presented LiSA, the first s...

Intel Labs Presents Six Cutting-Edge Machine Learning Research Papers at ICML 2024

07-22-2024

Intel Labs will present six papers at ICML 2024 on July 21-27, including three poster papers at the ...

Nature Machine Intelligence Publishes Intel Labs’ Neuromorphic Research on Visual Perception

07-15-2024

Two Intel Labs research papers present novel neuromorphic computing solutions using Intel’s Loihi ne...

Intel Labs Releases Models for Computer Vision Depth Estimation: VI-Depth 1.0 and MiDaS 3.1

03-22-2023

Intel Labs introduces VI-Depth version 1.0, an open-source model that integrates monocular depth est...

Intel Labs Presents 24 Papers on Innovative AI and Computer Vision Research at CVPR 2024

06-17-2024

Intel Labs researchers will present 24 papers at CVPR 2024 on June 17-21.

Advancing Gen AI on Intel Gaudi AI Accelerators with Multi-Modal Panorama Generation AI Technology

06-04-2024

Researchers at Intel Labs introduced Language Model Assisted Generation of Images with Coherence, a ...

VNDF importance sampling for an isotropic Smith-GGX distribution

05-22-2024

In this blog post, we introduce an optimized importance sampling routine for GGX materials. Such mat...

Intel Presents SYCL implementation of Fully-Fused Multi-Layer Perceptrons for Intel GPUs

05-17-2024

Intel is proud to present the first SYCL implementation of fully-fused Multi-Layer Perceptrons appli...

Intel Labs Presents State-of-the-Art AI Research at ICLR 2024

05-07-2024

This year’s International Conference on Learning Representations (ICLR) is hosted in Vienna, Austria ...

Good AI Starts with Good Data: Battling Silent Data Corruption with Computational Storage

05-07-2024

Could the risks of silent data corruption hinder the quality of AI training data?

Intel® Shows OCI Optical I/O Chiplet Co-packaged with CPU at OFC 2024, Enabling Explosive AI Scaling

03-21-2024

At the Optical Fiber Communication Conference (OFC) in San Diego on March 26-28, 2024, Intel demonstrated our adva...

International Women’s Day: Celebrating Accomplishments of Women at Intel Labs

03-08-2024

There are many outstanding women at Intel Labs, and in honor of International Women’s Day, we would ...

Intel Labs Research Work Receives Spotlight Award at Top AI Conference (ICLR 2024)

02-27-2024

Researchers at Intel Labs, in collaboration with Xiamen University and DJI, have introduced GIM, the...

1

Kudos

0

Comments

|

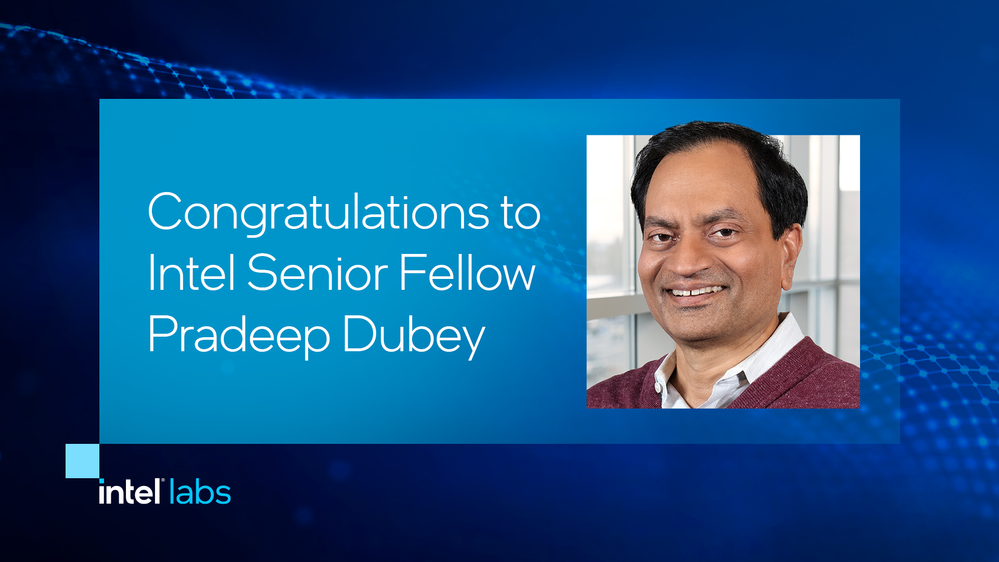

Intel Senior Fellow Receives ACM Fellowship for Parallel Processing of Data-Intensive Applications

02-05-2024

Intel Senior Fellow Pradeep K. Dubey was named a 2023 ACM Fellow for his lifelong technical contribu...
