My previous blog post about the new simple RISC-V* virtual platform in the Public Release of the Intel® Simics® Simulator covered system-level simulation and networking within and outside the simulator. In this post, I will look at how the presence or absence of instruction set extensions affects the execution of the software stack – and how to debug any issues that follow.

Configurable Instruction Set

The virtual platform should allow both positive and negative testing of software. If a program works on the virtual platform, it should work on a real hardware implementation, and if it does not work on the real hardware, it should fail on the virtual platform as well. RISC-V makes it easy to demonstrate and test this aspect.

RISC-V is a modular instruction set, and each specific hardware implementation chooses which instruction set extensions it supports. The simple RISC-V virtual platform is designed as a generic platform that does not correspond to any specific hardware implementation, and the precise set of instruction set extensions used in a simulation can be configured. As discussed previously, other aspects of the platform can also be configured, like the number of cores and size of memory and disks.

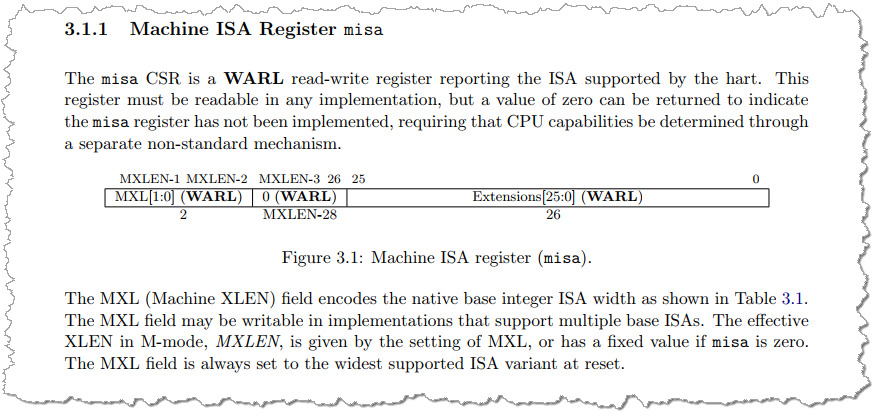

Some of the available instruction set extensions are reported to software through the misa register, while most have to be detected by trying to execute an instruction from the extension and checking whether it causes an exception.

RISC-V misa register, from “The RISC-V Instruction Set Manual Volume II: Privileged Architecture. Document Version 20211203”.
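The following minimal Python sketch makes the misa encoding concrete. It decodes the Extensions field, where bit i stands for the letter 'A' + i, and the MXL field in the top two bits of a 64-bit value read on an RV64 hart. The sample values are constructed for illustration and are not read from the simulated cores. The S and U bits are included only to show that letters which are not part of the ISA string get filtered out. Note that misa covers only single-letter extensions, which is why the multi-letter ones must be probed.

```python
# Sketch: decode a 64-bit misa CSR value, as read on an RV64 hart.
# Bit i of misa[25:0] flags the extension letter chr(ord("A") + i);
# misa[63:62] (MXL) encodes the native XLEN.

MXL_TO_XLEN = {1: 32, 2: 64, 3: 128}

# Subset of the canonical order in which letters appear in ISA strings.
CANONICAL_ORDER = "IMAFDQC"


def misa_letters(misa):
    """Return the set of letters whose bits are set in misa[25:0]."""
    return {chr(ord("A") + bit) for bit in range(26) if (misa >> bit) & 1}


def misa_to_isa_string(misa):
    """Build an ISA string such as "RV64IMAC" from a 64-bit misa value.

    Letters outside CANONICAL_ORDER (for example S and U, which flag
    supervisor and user mode support) are left out of the string.
    """
    mxl = (misa >> 62) & 0b11
    if mxl not in MXL_TO_XLEN:
        raise ValueError("MXL is zero: misa may not be implemented")
    letters = misa_letters(misa)
    return f"RV{MXL_TO_XLEN[mxl]}" + "".join(
        c for c in CANONICAL_ORDER if c in letters)


def make_rv64_misa(letters):
    """Construct an RV64 misa value (MXL = 2) from extension letters."""
    value = 2 << 62
    for c in letters:
        value |= 1 << (ord(c) - ord("A"))
    return value


with_fp = make_rv64_misa("IMAFDCSU")
without_fp = make_rv64_misa("IMACSU")
print(hex(with_fp), misa_to_isa_string(with_fp))        # 0x800000000014112d RV64IMAFDC
print(hex(without_fp), misa_to_isa_string(without_fp))  # 0x8000000000141105 RV64IMAC
```

Turning off F and D clears exactly two bits (0x20 and 0x8), which is all that software reading misa would notice.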

Turning off Floating-Point Instructions

Simics instruction-set simulators for RISC-V are configured using the extensions sub-object of the processor object. Each available extension has a Simics simulator attribute that can be changed. To test the effect of changing the instruction set, the following script turns off all floating-point support in the processors in the standard RISC-V simple virtual platform:

foreach $h in (list-objects class = riscv-rv64 -all) {
    $h.extensions->F = FALSE
    $h.extensions->D = FALSE
    $h.info ## generate output showing the ISA state of the core
}

After this, the processor core simulators report their instruction set as “RV64IMAC”.

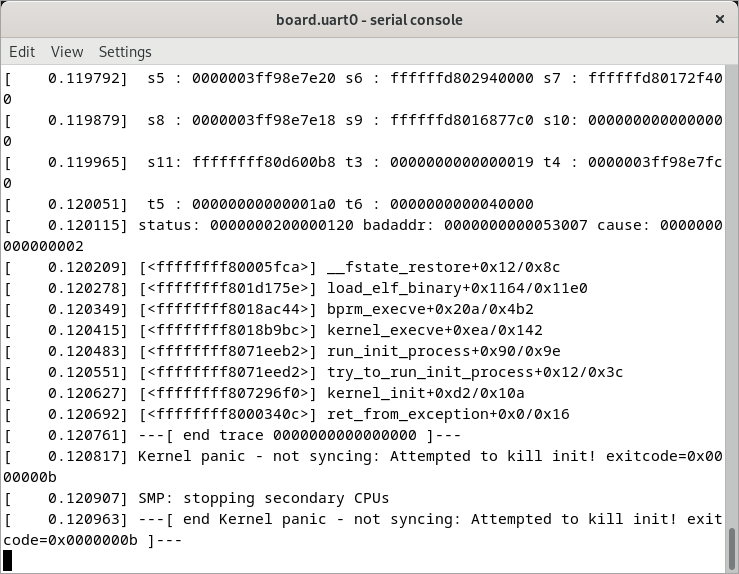

Trying to boot the default software stack (built using Buildroot) results in a kernel panic from an illegal instruction:

The kernel is then stuck in an infinite loop – which is what you expect after a fatal event like this.

Analyzing the Kernel Panic

It would be interesting to know the precise instruction causing the kernel panic, and which part of the Linux stack it comes from. This can be analyzed using the built-in debug functionality of the Simics simulator. However, once the kernel panic has been reported it is too late, as execution has already moved on from the illegal instruction.

Since the simulator is deterministic, the solution is to start a new simulation session, disable the floating-point instructions, set a breakpoint on illegal instruction exceptions, and then run the simulation forward. The run follows exactly the same path as before, but this time it stops at each illegal instruction.

This reveals that each core in the system triggers one illegal instruction exception in the bootloader, before Linux has even started! These exceptions happen even when floating point is left enabled, and are part of the standard flow of the bootloader:

# Disable floating point as shown above, and then:
simics> bp.exception.break object = "board" -recursive Illegal_Instruction
simics> r
[board.hart[0]] Breakpoint 2: board.hart[0] Illegal_Instruction(2) exception triggered
simics> enable-debugger
Debugger enabled.
simics> add-symbol-file targets/risc-v-simple/images/linux/fw_jump.elf
Context query * currently matches 6 contexts.
Symbol file added with id '1'.
simics> bt
#0 0x800084ec in hart_detect_features(scratch=(struct sbi_scratch *) 0x80024000) at /home/jengblo/Simics/buildroot/output/build/opensbi-0.9/include/sbi/sbi_hart.h:35

It looks like the bootloader is probing for processor features by trying to use them and handling any resulting illegal instruction exceptions. The second illegal instruction exception on the first processor, however, is the real deal. To tell where in the kernel it is located, the symbols in the vmlinux file produced by the Buildroot build are used. This file only provides symbols, not full source-level debugging information, but that is enough to determine which code is running when the illegal instruction is hit.
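The trap-based probing described above can be illustrated with a toy Python model. In this model the bootloader tries an operation and treats an illegal instruction exception as "not supported". `ToyHart`, `IllegalInstruction`, and the operation names are hypothetical stand-ins. This is not how OpenSBI's hart_detect_features is written, only a sketch of the idea behind it.

```python
# Toy model of trap-based feature probing. The hart and the operation
# names are hypothetical; this is not OpenSBI code.


class IllegalInstruction(Exception):
    """Stands in for the illegal instruction exception of a real hart."""


class ToyHart:
    """A hart model that only 'executes' the operations it supports."""

    def __init__(self, supported):
        self.supported = set(supported)
        self.traps = 0  # number of illegal instruction exceptions taken

    def execute(self, op):
        if op not in self.supported:
            self.traps += 1
            raise IllegalInstruction(op)


def probe(hart, op):
    """Return True if op executes normally, False if it traps."""
    try:
        hart.execute(op)
    except IllegalInstruction:
        return False  # the trap is expected and simply handled
    return True


def detect_features(hart, candidate_ops):
    """Probe each candidate operation once and record the outcome."""
    return {op: probe(hart, op) for op in candidate_ops}


hart = ToyHart({"integer_op", "optional_csr_read"})
features = detect_features(
    hart, ["integer_op", "optional_csr_read", "missing_op"])
print(features, "traps:", hart.traps)
```

Each unsupported probe costs exactly one handled trap. This is why a breakpoint on all illegal instruction exceptions also fires during a perfectly healthy boot.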

…

simics> r
[board.hart[0]] Breakpoint 2: board.hart[0] Illegal_Instruction(2) exception triggered
simics> da
v:0xffffffff80005fca p:0x0000000080205fca illegal instruction: 07 30 05 00
simics> add-symbol-file /home/jengblo/Simics/buildroot/output/build/linux-6.1.14/vmlinux
Context query * currently matches 6 contexts.
Symbol file added with id '1'.
simics> bt
#0 0xffffffff80005fca in __fstate_restore()
#1 0xffffffff80003782 in start_thread()
#2 0xffffffff801d175e in load_elf_binary()
#3 0xffffffff8018ac44 in bprm_execve()
#4 0xffffffff8018b9bc in kernel_execve()
#5 0xffffffff8071eeb2 in run_init_process()
#6 0xffffffff8071eed2 in try_to_run_init_process()
#7 0xffffffff807296f0 in kernel_init()
#8 0xffffffff8000340c in ret_from_syscall_rejected()

It is hard to tell what the illegal instruction is, since all that is shown are the raw instruction bytes found in memory. Most likely it is a floating-point instruction, but since the processor core simulator has been configured not to support floating point, it will not disassemble it. That makes sense. One way to figure this out is to dig through the RISC-V instruction manuals and decode the instruction by hand. Another way is to use the simulator and “cheat” by re-enabling the floating-point instruction extensions and disassembling the instruction again.

simics> board.hart[0].extensions->F=TRUE
simics> board.hart[0].extensions->D=TRUE
simics> da
v:0xffffffff80005fca p:0x0000000080205fca fld ft0,0(a0)

The “trick” worked, and it shows that the failing instruction is a floating-point load (the complete __fstate_restore function is found in arch/riscv/kernel/fpu.S). The kernel calls this function when creating a new thread, if it believes that the target system has floating point available.
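For comparison, the manual route can be sketched in a few lines of Python. It decodes the bytes `07 30 05 00` from the illegal-instruction report using the standard I-type field layout. The bytes are stored little-endian, so the instruction word is 0x00053007. Only the LOAD-FP major opcode (0000111) with the FLW/FLD/FLQ widths is handled. This is an illustrative decoder, not a general disassembler.

```python
# Sketch: decode a LOAD-FP (opcode 0000111) instruction from the raw
# little-endian bytes shown by the "da" command. Only FLW/FLD/FLQ are
# handled; everything else raises ValueError.

FP_REGS = ["ft0", "ft1", "ft2", "ft3", "ft4", "ft5", "ft6", "ft7",
           "fs0", "fs1", "fa0", "fa1", "fa2", "fa3", "fa4", "fa5",
           "fa6", "fa7", "fs2", "fs3", "fs4", "fs5", "fs6", "fs7",
           "fs8", "fs9", "fs10", "fs11", "ft8", "ft9", "ft10", "ft11"]
INT_REGS = ["zero", "ra", "sp", "gp", "tp", "t0", "t1", "t2",
            "s0", "s1", "a0", "a1", "a2", "a3", "a4", "a5",
            "a6", "a7", "s2", "s3", "s4", "s5", "s6", "s7",
            "s8", "s9", "s10", "s11", "t3", "t4", "t5", "t6"]
LOAD_FP_OPCODE = 0b0000111
WIDTH_TO_MNEMONIC = {0b010: "flw", 0b011: "fld", 0b100: "flq"}


def decode_load_fp(raw):
    """Return assembly text such as 'fld ft0,0(a0)' for a 4-byte LOAD-FP."""
    if len(raw) != 4:
        raise ValueError("expected exactly 4 instruction bytes")
    word = int.from_bytes(raw, "little")
    opcode = word & 0x7F          # bits [6:0]
    if opcode != LOAD_FP_OPCODE:
        raise ValueError(f"not a LOAD-FP instruction: opcode {opcode:#09b}")
    rd = (word >> 7) & 0x1F       # bits [11:7], destination FP register
    funct3 = (word >> 12) & 0x7   # bits [14:12], access width
    rs1 = (word >> 15) & 0x1F     # bits [19:15], base integer register
    imm = word >> 20              # bits [31:20], 12-bit offset
    if imm & 0x800:
        imm -= 0x1000             # sign-extend the I-type immediate
    if funct3 not in WIDTH_TO_MNEMONIC:
        raise ValueError(f"unsupported LOAD-FP width: funct3 {funct3:#05b}")
    return f"{WIDTH_TO_MNEMONIC[funct3]} {FP_REGS[rd]},{imm}({INT_REGS[rs1]})"


print(decode_load_fp(bytes([0x07, 0x30, 0x05, 0x00])))  # fld ft0,0(a0)
```

The result matches what the simulator disassembled once F and D were re-enabled. The funct3 value 011 selects the double-precision load, which belongs to the D extension.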

Booting without Floating-Point Instructions

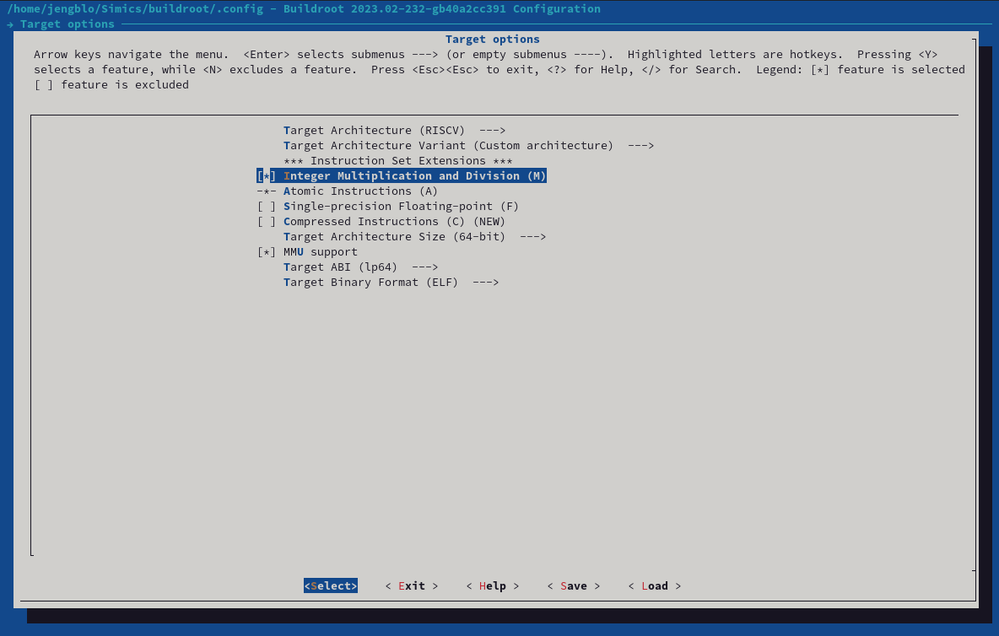

Given the above, it appears that the software stack needs to be rebuilt without floating-point support (which might or might not be obvious, depending on which type of software you are used to). This includes the Linux kernel, the bootloader, and all the software in the root filesystem. That way, no floating-point instructions should be used and the software should work nicely.

Buildroot makes this easy. Run make menuconfig and go into Target options. To remove floating-point support, select a Custom architecture and make sure that floating point is not checked. The D extension hides behind the F extension, so by not enabling F, D is implicitly disabled as well. The precise behavior of these options depends on the Buildroot and Linux kernel versions used.

However…

After rebuilding the software stack and redeploying it to the target, the Linux boot fails in the same way as before.

The root cause is that the floating-point assembly code is still part of the kernel, and the kernel still calls it. Looking closer at what is going on, whether this code is activated does not depend on the compilation flags used for the build, but on what the device tree tells the Linux kernel about the hardware.

The real lesson here is that guesses must be validated by tests and that you need to understand a hardware-software stack before starting to make changes from a known-good state.

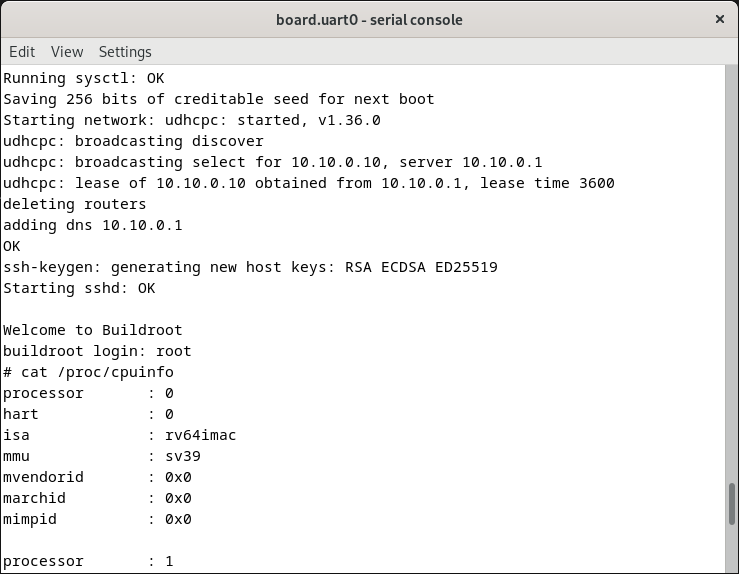

Really Booting without Floating-Point Instructions

The device tree declares the nature of the hardware to the software, and when the hardware changes, the device tree needs to change. The precise coverage of a device tree depends on the particular software platform. In the case of RISC-V Linux, the kernel does not dynamically detect the presence or absence of floating-point instructions, but instead expects the device tree to provide the information. This makes perfect sense in the current context. The presence or absence of floating point in the hardware is not going to change, and the software stack philosophy of Buildroot is to build a setup targeting a specific hardware platform.
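Because the kernel relies on the device tree, it can be useful to check from inside the target what the kernel actually concluded. On RISC-V Linux, /proc/cpuinfo has an `isa` line per hart, which typically reflects the device tree's ISA string. The Python sketch below parses that line. It assumes lines of the form `isa : rv64imafdc_zicsr`, with multi-letter extensions following the first underscore. The function names are hypothetical. To run it on the target, call `kernel_sees_fp(open("/proc/cpuinfo").read())`. This assumes a Python interpreter is present in the root filesystem, which a minimal Buildroot image may not have. The same logic also works on a copy of the file.

```python
# Sketch: check which ISA the running RISC-V Linux kernel reports.
# Assumes /proc/cpuinfo contains lines like "isa\t\t: rv64imafdc_zicsr".


def single_letter_extensions(isa):
    """Return the single-letter extensions of an ISA string, lowercased.

    "g" is expanded to "imafd"; multi-letter extensions are assumed to
    start after the first underscore and are ignored.
    """
    isa = isa.strip().lower()
    if not isa.startswith(("rv32", "rv64")):
        raise ValueError(f"unexpected ISA string: {isa!r}")
    letters = isa[4:].split("_", 1)[0].replace("g", "imafd")
    return set(letters)


def isa_strings(cpuinfo_text):
    """Yield the value of every 'isa' line in /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "isa":
            yield value.strip()


def kernel_sees_fp(cpuinfo_text):
    """True if any hart's reported ISA includes the F or D extension."""
    return any(single_letter_extensions(isa) & {"f", "d"}
               for isa in isa_strings(cpuinfo_text))


sample = "processor\t: 0\nhart\t\t: 0\nisa\t\t: rv64imac_zicsr\nmmu\t\t: sv39\n"
print(kernel_sees_fp(sample))
```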

This is quite different from the Intel® Architecture ecosystem, where it is expected that a given operating system image can run on a wide variety of hardware. The use of PCIe enables the dynamic detection of available devices in the hardware, and the CPUID instruction lets software adapt to the precise set of instruction set extensions currently available.

To make the Linux kernel avoid floating-point instructions, the instruction-set specification of the device tree nodes for the affected processor cores is changed from rv64imafdc to rv64imac. Starting from the default device tree specification shipping with the RISC-V simple virtual platform, the specification for each processor core would be changed to:

cpus {
    /* [...] */
    cpu@0 {
        /* [...] */
        riscv,isa = "rv64imac"; /* instruction-set specification */
        /* [...] */
    };
    /* [...] the same change in every other cpu@N node */
};
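The same edit has to be made in every processor node, so a small script can do the work. Below is a minimal Python sketch that rewrites every `riscv,isa` property in a copied dts file, dropping F and D from the single-letter part of the ISA string. It assumes multi-letter extensions follow the first underscore, and it expands the `g` shorthand to `imafd` first. Any multi-letter extension that itself depends on F (Zfh, for example) would still have to be removed by hand. The file names in the usage note are hypothetical.

```python
# Sketch: rewrite every riscv,isa property in a copied .dts file so that
# the F and D extensions are no longer advertised to Linux.
import re
from pathlib import Path

# Matches e.g.  riscv,isa = "rv64imafdc";  but not riscv,isa-base etc.
ISA_PROP = re.compile(r'(riscv,isa\s*=\s*")([^"]*)(")')


def strip_fp(isa):
    """Drop F and D from the single-letter part of an ISA string.

    Assumes multi-letter extensions (if any) follow the first underscore;
    "g" is expanded to "imafd" before F and D are removed.
    """
    base, sep, rest = isa.partition("_")
    prefix, letters = base[:4], base[4:]
    if prefix not in ("rv32", "rv64"):
        raise ValueError(f"unexpected ISA string: {isa!r}")
    letters = letters.replace("g", "imafd").replace("f", "").replace("d", "")
    return prefix + letters + sep + rest


def rewrite_dts(text):
    """Return (new_text, number_of_isa_properties_changed)."""
    return ISA_PROP.subn(
        lambda m: m.group(1) + strip_fp(m.group(2)) + m.group(3), text)


def convert_file(src, dst):
    """Write a no-floating-point copy of the dts file src to dst."""
    new_text, count = rewrite_dts(Path(src).read_text())
    Path(dst).write_text(new_text)
    return count
```

For example, `convert_file("risc-v-simple.dts", "risc-v-simple-nofp.dts")` should report one change per processor core. The result can then be compiled with the device tree compiler, for instance `dtc -I dts -O dtb -o risc-v-simple-nofp.dtb risc-v-simple-nofp.dts`. Checking the returned count against the number of cores is a cheap guard against a node being missed.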

The RISC-V simple virtual platform comes with a default device tree in source-code form (a “dts” file). To disable floating point, the dts file is copied and the information for all processor cores is changed. The file is then compiled into a binary file (a “dtb”) that Linux reads during boot. The device tree compiler needed for this is built as part of the Buildroot build. With this change, the system boots without floating-point:

Measuring the Impact

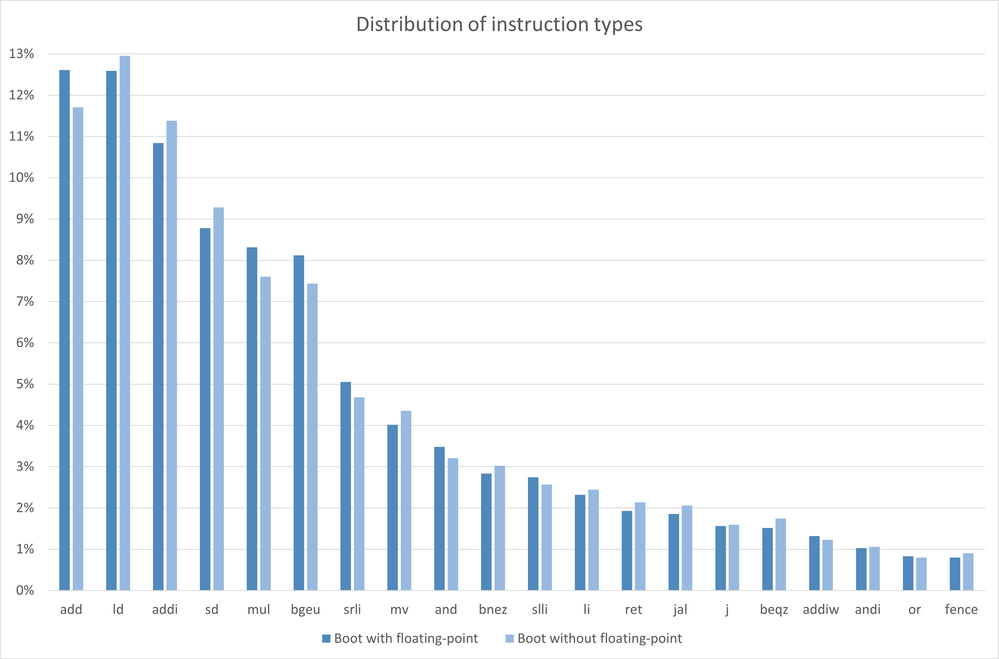

Using the same instruction counting tools as in the previous blog post, the distribution of instruction types can be compared between the two boot cases. In the current set of experiments, the boot without floating point takes slightly less time and executes slightly fewer instructions in total. Booting with floating point takes 2.8 seconds and requires 4.8 billion instructions; without floating point, it takes 2.6 seconds and 4.2 billion instructions. That is 12% fewer instructions but only 8% less time, since part of the boot runs in parallel on multiple cores.
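As a quick check, the relative reductions can be recomputed from the rounded figures quoted above. With these rounded inputs, the time reduction comes out at about 7%. The 8% figure in the text presumably comes from the unrounded measurements, which are not given here. Either way, boot time shrinks less than the instruction count does.

```python
# Recompute the relative reductions from the rounded figures above.


def reduction(before, after):
    """Relative reduction from before to after, as a fraction of before."""
    return (before - after) / before


instructions = reduction(4.8e9, 4.2e9)  # executed instructions
boot_time = reduction(2.8, 2.6)         # boot time in seconds
print(f"instructions: {instructions:.1%} fewer")  # 12.5% fewer
print(f"boot time: {boot_time:.1%} less")         # 7.1% less
```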

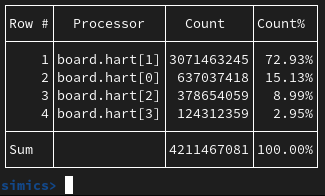

For the no-floating-point case, the execution is split between cores in the following way:

That the boot is faster without floating-point instructions enabled might look like an unexpected result, but it makes sense since such instructions are very rarely used during the boot. The most frequently used floating-point instruction is a floating-point move, which executes fewer than one million times, or about once every 5000 instructions. Instead of slowing things down, the code seems to benefit from not having to care about floating point. There is a reason why many embedded systems skip floating-point support.

The top 20 instruction types when booting with and without floating point are shown in the diagram below, sorted by how often they appear in the floating-point case. The top 20 instruction types account for more than 90% of all instructions, but there is a long tail of more than 100 other instruction types. The least-used instructions are seen no more than a handful of times, compared to the 600 million occurrences of the most-used instructions.
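Below is a minimal Python sketch of the two summaries used here. The first is the share of all executed instructions covered by the N most common types. The second is a side-by-side table ordered by the floating-point run. It assumes the histogram has already been turned into a `{mnemonic: count}` dictionary. How that export is done from the Simics histogram tool is not shown, and the toy counts below are invented, not measurements.

```python
# Sketch: summarize an instruction histogram given as {mnemonic: count}.
# The toy numbers below are invented for illustration only.
from collections import Counter


def top_n_share(histogram, n):
    """Fraction of all executed instructions covered by the n most common types."""
    counts = Counter(histogram)
    total = sum(counts.values())
    return sum(c for _, c in counts.most_common(n)) / total


def side_by_side(with_fp, without_fp, n):
    """Top n types of the floating-point run, with counts from both runs."""
    order = [op for op, _ in Counter(with_fp).most_common(n)]
    return [(op, with_fp.get(op, 0), without_fp.get(op, 0)) for op in order]


with_fp = {"addi": 60, "ld": 25, "beq": 10, "fmv.d.x": 5}
without_fp = {"addi": 55, "ld": 24, "beq": 11, "sd": 3}
print(f"top 2 share: {top_n_share(with_fp, 2):.0%}")
for op, a, b in side_by_side(with_fp, without_fp, 4):
    print(f"{op:8} {a:5} {b:5}")
```

Ordering both columns by the floating-point run, as in the diagram, makes types that disappear entirely in the other run show up as zero counts rather than silently dropping out.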

The distributions are roughly similar, even though the details differ. These differences, along with the difference in overall instruction count, show just how sensitive software can be to changes. It might be reasonable to expect a simple replacement of floating-point instructions with integer emulation, but that is not what happened.

These experiments show why it is important to test and validate hardware and software changes, preferably pre-silicon, using a simulator in which changes are easy to make.

Implementation Note

The experiments in this blog were carried out using the following software versions:

- The Public Release of the Intel Simics Simulator, version 2023-19

- Buildroot 2023.02

Precise numbers of instructions, boot times, code addresses, etc., might change with new versions of the Simics simulator and of Buildroot.

Script Used to Count the Instructions

The following script code was used to count the instructions:

## Set up the target system and get the name of the system root component
$ns = "fp"
load-target "risc-v-simple/linux" $ns
$system = (params.get $ns + ":system:components:system")

## Set up an instruction histogram and an instruction counter
$h = (new-instruction-histogram -connect-all)
$i = (new-instruction-count -connect-all)

## End condition: run the simulation until the Linux shell prompt appears
bp.console_string.run-until object = $system.console.con "# "

## Print statistics and time
$h.histogram
$i.icount
list-processors -time -cycles -steps
