This post is related to the perf profiler working in sampling mode.

I decided to measure the so-called "self-overhead" of the perf profiler itself, in terms of a few dozen microarchitectural events sampled on an Intel platform (CPU: Intel(R) Core(TM) i5-6200U CPU @ 2.30GHz).

To do so effectively, I created a small executable containing only an empty main function (circa 10 machine code instructions) plus the additional code and data structures inserted by the compiler (ELF sections, the call to the start function, etc.).

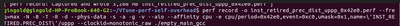

As expected, most of the CPU cycles were spent in the dynamic linker's address space. My main motivation was to investigate the precision of the triple-p (ppp) modifier, which was added to the following perf command:

I expected to see the following result, where the aforementioned low-skid setting seemed to work properly:

As you can see, PREC_DIST sampling in this case recorded a low-skid, precise distribution of the contributing instructions, with the fine degree of precision one would expect from a simple micro-benchmark that was probably executed many millions or billions of times.

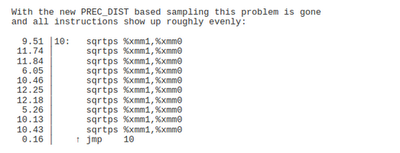

When confronted with a real code example, the resulting instruction distribution breakdown was totally different.

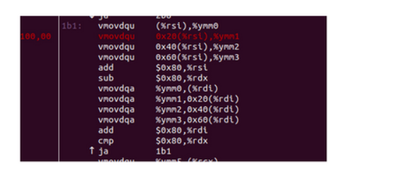

Here is an example:

Perf assigned 100% of the measured event count (i.e. INST_RETIRED.PREC_DIST) within the AVX memmove function, whose loop is unrolled 4 times, to a single machine code instruction. The number of samples was 9 and the approximate count was 154080; of this value, the aforementioned __memmove_avx_unaligned_erms function was responsible for roughly 11%. That loop was probably a local hotspot. What is more puzzling is the seemingly low precision of the skid-reduced event INST_RETIRED.PREC_DIST. The exact implementation details are unknown, and I can only assume that some kind of tracking of executing uops is enabled when the counter is close to overflow, in order to record the instruction (or rather the uop(s)) that triggered the overflow.

---

You'll probably get a better distribution with smaller sampling periods. PDIR may not work well with a very small number of samples (9 in your case, with only 154080 retired instructions). Try increasingly smaller periods and see whether, and at what point, the distribution becomes good enough.

Profiling overhead grows as the sampling period shrinks (i.e., it rises with the sampling frequency), but the number of samples should be high enough to identify the code regions or instructions where bottlenecks most probably exist and to obtain a better distribution of samples.
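To put rough numbers on why 9 samples is too few: perf's approximate event count is the sum of the sample periods, so count / samples gives the average period, and halving the period roughly doubles the number of samples. The sketch below also uses a simple binomial approximation for the relative error of one instruction's share of the samples. The independence assumption behind that formula is a simplification (real PEBS sampling need not be independent), and the 16-instruction loop and the period of 5000 are hypothetical example values.

```python
import math


def average_period(event_count, n_samples):
    """perf's approximate event count is the sum of the sample periods,
    so count / samples gives the average period."""
    return event_count / n_samples


def expected_samples(event_count, period):
    """Roughly how many samples a fixed period yields for a given event count."""
    return event_count / period


def relative_std_error(share, n_samples):
    """Binomial approximation: std(p_hat) / p = sqrt((1 - p) / (n * p)).
    Assumes independent samples, which real PEBS sampling need not provide."""
    return math.sqrt((1.0 - share) / (n_samples * share))


COUNT = 154080
print(average_period(COUNT, 9))        # 17120.0 events per sample
print(expected_samples(COUNT, 5000))   # 30.816 samples at a period of 5000
# A hypothetical instruction that should own 1/16 of the samples of a 16-instruction loop:
for n in (9, 100, 10000):
    print(n, round(relative_std_error(1 / 16, n), 2))   # error shrinks as 1/sqrt(n)
```

With only 9 samples the expected error on a single instruction's share exceeds 100% of that share, so a lopsided histogram is not surprising even without any hardware bias.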

---

Hi Hadi,

The small number of samples might be the culprit for the low precision. I recall that lowering the sampling period in the perf command to 5000 increased the precision of the distribution a bit.

I contacted Andi Kleen by e-mail and asked his opinion on that problem. Andi explained that, in the case of memcpy-like code, the first memory touch probably caused a miss, and the resulting wait cycles enabled the PDIR circuitry to track and mark that machine code instruction.

I presume that for simple micro-benchmarks iterated a huge number of times, the precision of PDIR might be higher than for real-world code.

---

> The small number of samples might be a culprit of low precision. I recall, that by lowering the perf command period to 5000 the precision of distribution increased a bit.

The total number of retired instructions may be another factor that affects the distribution, but I'm not sure.

> I contacted Andi Kleen by e-mail and asked his opinion in regards to that problem. Andi explained that in case of memcpy-like code -- probably the first memory touch caused a miss and wait cycles enabled PDIR circuitry to track and mark that machine code instruction.

This suggests that PDIR also has a bias toward high-latency instructions. To be fair, Intel has only said that "[INST_RETIRED.PREC_DIST] allows for a more unbiased distribution of samples," so the only guarantee we have is that the distribution is not worse than with normal sampling.

> I presume, that in case of simple micro-benchmarks iterated huge number of times the precision of PDIR might be higher than in case of real-world code.

I think the problem INST_RETIRED.PREC_DIST is trying to solve arises when a large function calls a small function, something like this:

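```c
void func2(void);            /* the small function */

void func1(int cond)         /* the large function */
{
    /* ins1 */
    /* ins2 */
    if (cond) {              /* the if (...) condition */
        func2();             /* may or may not be inlined */
    }
    /* ins3 */
    /* ... */
}
```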

The small function (func2) may or may not be inlined. If the distribution is too biased, one may get the impression that func2 is cold, and a profile-guided optimizer, for example, may rearrange the code accordingly. A better distribution of samples may reveal that the condition guarding the call to func2 is almost always true, which indicates that it would be better to keep the code laid out sequentially.

---

Hi Hadi,

> This suggests that PDIR also has bias toward high-latency instructions. To be fair, Intel has only said that "[INST_RETIRED.PREC_DIST] allows for a more unbiased distribution of samples," so the only guarantee that we have is that the distribution is not worse than normal sampling.

Yes, I completely agree. My own observations suggest a direct correlation between code complexity (in terms of instruction mix, interdependence, latencies, and throughput) and the observable PDIR precision (as reported by the perf tool).

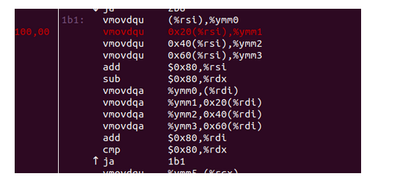

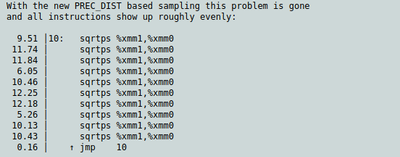

Here is a screenshot from Andi Kleen's article

https://lkml.org/lkml/2015/10/19/861

You can see that in the case of a simple loop containing a uniform instruction stream, the shadowing effect is reduced in the vicinity of the loop-control machine code. In my measurements I never saw such a precise distribution, even though the measured code (part of the ld.so dynamic linker) executed a lengthy loop. The distribution was more chaotic and random, with a large bias.

In my opinion, PDIR might employ some kind of additional high-precision counter that receives a so-called "pre-overflow" signal from the specific PMU counter tracking the number of retired instructions. This pre-overflow signal would arm the high-precision counter, which would then be incremented, probably through more accurate tracking of concurrently executing uops and of the instruction retirement rate.

In my opinion, tracking concurrent uop execution would be easier for that simple sqrtps loop, which has an easily predictable pattern. Everything would be more complicated for a complex loop or a block of code containing different interdependent instructions with varying latencies that depend on the cache hit/miss ratio.

---

Note that Andi is using PREC_DIST to sample cycles, and which instructions they correspond to, by "abusing the cmask" as he puts it, whereas, as I understand it, you use the event without any special cmask, so you would actually be sampling instructions retired (i.e., every instruction should have the same weight).

So even if everything is working well, I would expect you to get different results.

If you ask me, the example in Andi's article never made that much sense: the samples in the example without and with PDIR are almost exactly the same, just shifted down by one instruction. It's almost as if a skid of 1 is removed (of course, :pp should already remove the skid of 1). The numbers themselves are the same. Yes, the PDIR result is still better in that the jmp instruction is likely the one that really should get close to 0% of the samples, but still I find the explanation of what PREC_DIST does unsatisfying.

---

The "CMASK" was unchanged during my analysis. In my example (the first post) the skid reduction was set to "ppp".

I completely agree that Andi's example does not reflect the real-world scenario very well, and it should not be the main focus of a PDIR bias analysis for more complex machine code instruction execution windows.

> but still I find the explanation of what PREC_DIST does unsatisfying.

Which explanation (of PREC_DIST) were you talking about? I cannot find any reliable in-depth source.

---

> The "CMASK" was unchanged during my analysis.

Unchanged from what though? From the default? As I understand it, you are using cpu/event=0xc0,umask=0x1/ which will use the default cmask and inv values. This counts "instructions retired".

The thing Andi is measuring in the example you posted (10 sqrt instructions) though is very different! He's using the "cmask trick" to turn any counter into a counter that counts cycles. Setting inv=true and cmask=16 or whatever, so he's measuring "cycles where NOT 16 or more instructions were retired". Since that condition is satisfied every cycle, this simplifies to "cycles", nothing really to do with instructions (and you could apply this to any counter, really).

This is what Andi refers to as:

> abusing the inverse cmask to get all cycles

This is the trick they use to implement cycles:ppp, and I mention it only to make clear that, as far as I can tell, you are measuring a very different thing, and it is important to be clear about what you want to measure. Neither is right or wrong: there are valid reasons to want to measure both cycles and instructions.
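The cmask trick can be checked with a small model of a general-purpose counter's CMASK and INV fields, following their description for the IA32_PERFEVTSELx registers in the Intel SDM: with a nonzero cmask the counter adds 1 in each cycle where the event's per-cycle count is at least cmask, and inv flips that test to "less than cmask". perf exposes these fields as event terms (e.g. `cpu/event=0xc0,umask=0x1,inv=1,cmask=16/`). The retirement trace below is made up purely for illustration.

```python
def counter_increment(events_this_cycle, cmask=0, inv=False):
    """Per-cycle increment of a general-purpose counter, following the
    CMASK/INV description in the Intel SDM (IA32_PERFEVTSELx)."""
    if cmask == 0:
        return events_this_cycle          # no counter mask: add the raw count
    hit = events_this_cycle >= cmask      # threshold comparison, once per cycle
    if inv:
        hit = not hit                     # INV flips the test to "< cmask"
    return 1 if hit else 0


def count(trace, cmask=0, inv=False):
    """Total counter value over a per-cycle event trace."""
    return sum(counter_increment(n, cmask, inv) for n in trace)


# Hypothetical retirement trace: instructions retired in each of 8 cycles
# (never more than 4 here, so "< 16" holds in every cycle).
trace = [4, 0, 0, 3, 4, 1, 0, 2]
print(count(trace))                       # 14: instructions retired
print(count(trace, cmask=16, inv=True))   # 8: one per cycle, i.e. "cycles"
print(count(trace, cmask=1))              # 5: cycles with at least one retirement
```

The same event code thus yields three different quantities depending only on cmask and inv, which is why sampling `event=0xc0,umask=0x1` with and without `inv=1,cmask=16` measures different things.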

> I completely agree with respect to Andi's example it does not reflect much the real world scenario

Well, my claim was slightly different: this example doesn't even show PDIR really making the distribution more even at all; the distribution looks almost exactly the same, just "shifted" by one instruction. See also this example, where only one out of 4 nops gets sampled (due to the retirement pattern) and prec_dist is used (without cmask or inv, in this case): the result is almost exactly the same, the only change being that the first nop now gets some additional samples.
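One way the "only one out of 4 nops gets sampled" pattern could arise is sketched below as a toy model. Its rules are assumptions, not documented PEBS behaviour: nops retire in aligned groups of 4 per cycle, and after the counter overflows the sample is attributed to the first instruction of the next retirement group. The loop length of 16 and the period of 1001 are hypothetical values.

```python
from collections import Counter


def simulate_group_retirement(loop_len, width, period, cycles):
    """Toy model (assumed behaviour, not documented PEBS semantics).

    A loop of `loop_len` nops retires in aligned groups of `width` per cycle.
    When the instruction counter crosses `period`, the sample is attributed
    to the first instruction of the next retirement group.
    """
    samples = Counter()
    retired = 0       # instructions counted since the last overflow
    pending = False   # overflow seen, waiting for the next retirement group
    pc = 0            # loop-body index of the oldest instruction in this group
    for _ in range(cycles):
        if pending:
            samples[pc] += 1
            pending = False
        retired += width
        if retired >= period:
            retired -= period
            pending = True
        pc = (pc + width) % loop_len
    return samples


hist = simulate_group_retirement(loop_len=16, width=4, period=1001, cycles=100_000)
print(sorted(hist))   # [0, 4, 8, 12]: the other 12 nops are never sampled
```

Under these assumptions an odd period still varies which group gets the sample, but never which position inside a group, so three out of four instructions are invisible no matter how many samples are taken.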

> What kind of explanation (PREC_DIST) were you talking about? I can not find any reliable in-depth source.

I also can't find any good in-depth source, so I was really talking about anything I can find: basically the one-sentence description in the manual, Andi's comments on a few LKML or perf-users mailing list threads, and a couple of other places. There was a longer paragraph or so in some other Intel document (maybe part of VTune, or a slide deck), but I can't find it now, and it similarly left me wanting.

I'm also looking for exactly what bias PDIR is meant to eliminate and how it does it. For example, there is the so-called "PEBS shadow" effect: is this what PDIR is meant to eliminate?

---

> The thing Andi is measuring in the example you posted (10 sqrt instructions) though is very different! He's using the "cmask trick" to turn any counter into a counter that counts cycles. Setting inv=true and cmask=16 or whatever, so he's measuring "cycles where NOT 16 or more instructions were retired". Since that condition is satisfied every cycle, this simplifies to "cycles", nothing really to do with instructions (and you could apply this to any counter, really).

I did not know about that "cmask trick"; thanks for the explanation.

> As I understand it, you are using cpu/event=0xc0,umask=0x1/

Yes, I took a look at the perfcmd file and the modifiers were exactly as you posted.

> that this example doesn't even show PDIR really making a more even distribution at all:

While analyzing the precision of PDIR, I saw a perplexing situation: there was some correlation between code complexity (a code stream containing various instructions that can potentially cause the measured hardware events) and an uneven PDIR sample distribution. For simple code (Andi's sqrtps and your example) the distribution had a higher degree of precision. That was not the case in my measurements; I mainly measured the performance of the dynamic linker (ld.so).

Here is the screenshot (ld.so memcpy-like loop)

> I'm also looking for exactly what bias PDIR is meant to eliminate and how it does it. For example, there is the so-called "PEBS shadow" effect: is this what PDIR is meant to eliminate?

I can speculate that PDIR precision may be directly related to PEBS processing latency, and that this latency might be the so-called "shadow effect". It might span the period from the counter overflow until the PEBS event record has been completely written by the machine code handler. The microcode may be responsible for gathering the TSC, RIP, and the rest of the register context on overflow. Some events are probably missed during that period. I wonder how long that period is, and how (if at all) it can be measured.

Another question is potential "pipelining" within the PEBS mechanism: I wonder whether the counter can start counting the next event while the microcode is still finalizing the event record. Another question that bothers me is how a sustained rate of multiple counters overflowing nearly simultaneously is handled: can the PMU handle 4 PEBS events at the same time, or is that even possible? In my opinion, PDIR may attempt to somehow reduce that PEBS shadow effect.

P.S.

I have a lot of material in the form of PDF documentation I created (circa 20 pages) during my analysis; if you are interested, I can share that data and the details with you.

---

@Bernard wrote:

> That was not situation in case of my measurements. I measured mainly the dynamic linker (ld.so) performance.

Right. Your measurement seems inaccurate, especially since you are measuring instructions, not cycles. You could argue this sampling is correct for cycles, since maybe that particular instruction suffers a cache miss and dominates the timing compared to the other instructions. However, if you are trying to count instructions, this doesn't apply: "obviously" every instruction in a basic block should have the same nominal count (plus sampling error).

I haven't found a good way to sample actual instructions retired (or executed), including with PDIR: see also this SO question. If you are willing to use PT (Processor Trace), however, you can get exact counts.

> > I'm also looking for exactly what bias PDIR is meant to eliminate and how it does it. For example, there is the so-called "PEBS shadow" effect: is this what PDIR is meant to eliminate?
>
> I can speculate that, PDIR precision may be directly related to PEBS process latency and that process latency might be so called "shadow-effect". The aforementioned process latency might span time period from the specific counter overflow up to completion of writing the PEBS event record to be written by machine code handler. The microcode may be responsible for gathering the TSC,RIP and other register context in the case of overflow. During that period probably some events are missed. I wonder how long is that period and how it can be measured if even?

Here's my impression of how the "PEBS shadow" bias specifically works (there may be other biases, such as the "retirement happens in groups of 4" bias):

Normally, even when a PEBS event is configured, the PEBS subsystem is not "armed", since apparently this causes some slowdown or because an armed PEBS subsystem will capture on every occurrence of the event. "Not armed" means that a PEBS record cannot be captured. Now, events are still counted normally during this time, and when the specified count (or maybe the count minus 1) is reached, after some delay an internal assist/interrupt is taken which arms the PEBS subsystem. Then, on the next occurrence of the event, a PEBS record is captured. This capturing is fully accurate in the sense that the right RIP, register values, etc., are captured with zero skid.

The shadow then arises because of the delay in arming PEBS after the second-to-last event. As mentioned, after the event occurs and before arming, there is a delay. Imagine that delay is 10 ns (or maybe it's measured in cycles, I'm not sure), and you have three spots in your code which trigger this event, separated (in execution time) by 4, 4, and 100 ns. That is, they are close together like xxxxExExExxxxx, with E causing the event and x some other stuff. Here, no matter which E is the arming (second-to-last) event, only the first E will ever be sampled! The other two Es are too close: even if the arming happens on the first event, the subsequent two Es are in its "shadow" (and the third is in the shadow of the second), so they can never be sampled.

That's my understanding of the PEBS shadow, but I would like to be corrected if this is wrong. The net effect is that when instructions that can cause the event are clustered closely together, the PEBS mechanism tends to bias towards the first location, and against locations that follow shortly (in time) after another location.
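The three-event example above can be replayed as a toy simulation. Every rule in it is taken from the description above or is an assumption, not documented hardware behaviour: the second-to-last counted event of each period starts an arming delay, PEBS becomes armed `arm_delay` time units later, the first event at or after that point is recorded with zero skid, and events inside the delay are lost. Re-drawing the period at random after each sample is a hypothetical choice to avoid aliasing with the loop; the site names and all numbers are illustrative.

```python
import random
from collections import Counter


def simulate_pebs_shadow(sites, iter_len, iterations, arm_delay, period_range, seed=0):
    """Toy model of the PEBS shadow (assumed behaviour, not Intel's design).

    sites        -- {label: time offset of that event within one loop iteration}
    iter_len     -- duration of one loop iteration (same unit as the offsets)
    arm_delay    -- time from the second-to-last counted event until PEBS is armed
    period_range -- (lo, hi) sampling period, re-drawn after every sample
    """
    rng = random.Random(seed)
    period = rng.randint(*period_range)
    count = 0
    armed_at = None   # time at which PEBS becomes armed; None = not arming
    samples = Counter()
    ordered = sorted(sites.items(), key=lambda kv: kv[1])
    for it in range(iterations):
        for label, offset in ordered:
            t = it * iter_len + offset
            if armed_at is not None:
                if t >= armed_at:
                    # Armed: this occurrence is recorded with zero skid.
                    samples[label] += 1
                    armed_at = None
                    count = 0
                    period = rng.randint(*period_range)
                # Otherwise the event falls in the shadow and cannot be sampled.
                continue
            count += 1
            if count == period - 1:   # second-to-last event starts the arming delay
                armed_at = t + arm_delay
    return samples


# xxxxExExExxxxx: three events 4, 4 and then 100 time units apart.
sites = {"E1": 0, "E2": 4, "E3": 8}
shadowed = simulate_pebs_shadow(sites, 108, 3000, arm_delay=10, period_range=(50, 150))
no_shadow = simulate_pebs_shadow(sites, 108, 3000, arm_delay=0, period_range=(50, 150))
print(sorted(shadowed))    # ['E1']: E2 and E3 always fall in the shadow
print(sorted(no_shadow))   # ['E1', 'E2', 'E3']
```

With a 10-unit delay, whichever event starts the arming, PEBS is not ready until after E3 has passed, so every record lands on E1; with no delay all three sites are sampled.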

> The other question is potential "pipelining" at the level of PEBS mechanism -- here I wonder if the counter can start counting the next event when microcode is finalizing the event record gathering. Additional question which bothers me is the sustained ratio of multiply overflowing counters nearly simultaneously and PMU unit can handle 4 PEBS events at the same time -- or is it even possible? In my opinion the PDIR may attempt to somehow reduce that PEBS shadow effect.
>
> P.s.
>
> I have a lot material in form of PDF documentation created by me (circa 20 pages) collected during my analysis and if you are interested I can share that data and details with you.

Yes, I am not sure about any of these scenarios. E.g., are events lost in the arming->assist interval? How does it work if two PEBS events want to arm very close in time (e.g., if event 2 overflows after event 1 arms but before the next occurrence of event 1 creates the PEBS record, how does arming work?).

It would be possible to determine many of these details with controlled tests (not easily with "instructions", but with an event like split loads, where you can control exactly where it occurs).

Yes, I am interested in any materials related to this stuff!

---

> Normally, even when a PEBS event is configured, the PEBS subsystem is not "armed", since apparently this causes some slowdown or because an armed PEBS subsystem will capture on every occurrence of the event. "Not armed" means that a PEBS record cannot be captured. Now, events are still counted normally during this time and when the specified count (or maybe the count minus 1) is reached, after some delay an internal assist/interrupt is taken which arms the PEBS subsystem. Then on the next occurrence of the event, a PEBS record is captured. This capturing is fully accurate in the sense that the right RIP, register values, etc, are captured with zero skid.

Can you point me to the source of this information?

It is very interesting.

> this doesn't apply: "obviously" every instruction in a basic block should have the same nominal count (plus sampling error).

I'm well aware that the posted measurement (memcpy loop) is incorrect as a retired-instruction count and suffers from the same low distribution precision as your SO example. In a correct measurement, each instruction should have a very similar or identical count, apart from sampling error.

> Here's impression specifically of the "PEBS shadow" bias (there may be other biases, such as the "retirement happens in groups of 4" bias):

This bears some similarity to what I wrote, but you supplied more detailed information. Looking at Eranian's PDF http://cscads.rice.edu/Eranian-perf_events-CScADS-2011.pdf, I suppose that PDIR might be implemented as a secondary fine-grained counter whose maximum count value is smaller than that of a regular programmable or fixed counter. That maximum count value might be set to the average of the statistically varying N-cycle arming latency (unless that latency is fully deterministic). The "PDIR" counter might be triggered before the main counter overflows, perhaps by a pre-overflow activation signal, so that it is ready when the main counter overflows and PEBS transitions to the arming state. At that point the secondary counter might start monitoring the signal coming from the retirement unit (the count of retired instructions), incrementing on each signal; besides the instruction, its virtual address might also be sent to some specific buffer. At the same time, the secondary counter logic might be receiving a feedback signal from the PEBS arming process and waiting for it to complete. Upon completion, the shadow is measured, i.e. P+n (where n = instructions retired), and removed, probably by a comparator that computes the offset in retired instructions between the start and the end of arming.

> Yes, I am interesting in any materials related to this stuff!

Actually, that material is close to 120 pages and also covers some VTune anomalies. I will contact you by e-mail (via your performance blog*).

\* Very interesting stuff, professionally analyzed and presented.

---

@Bernard wrote:

> > Normally, even when a PEBS event is configured, the PEBS subsystem is not "armed", since apparently this causes some slowdown or because an armed PEBS subsystem will capture on every occurrence of the event. "Not armed" means that a PEBS record cannot be captured. Now, events are still counted normally during this time and when the specified count (or maybe the count minus 1) is reached, after some delay an internal assist/interrupt is taken which arms the PEBS subsystem. Then on the next occurrence of the event, a PEBS record is captured. This capturing is fully accurate in the sense that the right RIP, register values, etc, are captured with zero skid.
>
> Can you point me to the source of this information?
>
> It is very interesting

It is hard to find many resources on this. You can search for "PEBS shadow", but most of the hits seem to assume you already know what it is. In fact, the PREC_DIST event description includes this term for most CPUs:

> Precise instruction retired event with HW to reduce effect of PEBS shadow in IP distribution.

One day I did a longer than usual search, and I think I finally came across a reasonable description in this slide deck on page 12. It only describes the general problem, however: my specific example above is my own invention of how this arming delay can introduce a bias. This whole thing is not entirely obvious, because not every type of delay causes a bias: if you interrupt the CPU to take a sample of "CPU time" and this interrupt takes 1000 ns to take effect and stop the CPU, it seems to me this does not introduce any bias. Taking a sample at time t or at t+1000 ns gives an unbiased sample either way, right?
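The claim that a fixed delay does not bias time-based sampling can be checked with a toy model: if the sampling instant t is uniformly distributed over one period of a repeating schedule, then (t + delay) mod period is uniformly distributed too, so the histogram of "which function was running" is unchanged. The schedule below is hypothetical, and the model assumes a periodic program and a constant delay; it says nothing about event-based (PEBS) sampling, where the delay is counted from an event rather than from a uniform clock.

```python
from collections import Counter


def time_sample_histogram(schedule, delay):
    """Which function is running `delay` time units after each sampling instant.

    schedule -- hypothetical repeating program: one function name per time unit.
    Every instant in one period is used once, i.e. sampling instants are uniform.
    """
    n = len(schedule)
    return Counter(schedule[(t + delay) % n] for t in range(n))


schedule = ["main"] * 5 + ["memcpy"] * 3 + ["strlen"] * 2   # hypothetical 10-unit loop
immediate = time_sample_histogram(schedule, 0)
delayed = time_sample_histogram(schedule, 1000)   # e.g. a 1000-unit interrupt delay
print(immediate == delayed)   # True: a constant delay only rotates the sampling instants
```

The contrast with the PEBS shadow is that there the delay starts at an event, so the position of the *next* event relative to the delay window is not uniform, which is what lets nearby events hide behind earlier ones.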

You might also be interested in this link, which I ran across while looking for that slide deck. It discusses a bit how PEBS sampling differs from normal sampling, and how using PEBS for cycles:pp doesn't necessarily report the same thing as the other cycles measurements. It also refers to the "cmask trick".

---

> It is hard to find much resources on this. You can search for PEBS shadow, but most of the hits seem to already assume you know what it is. In fact, the PREC_DIST event includes this term for most CPUs:

I found two conflicting descriptions of the "PEBS shadow": one in S. Eranian's paper and the other by D. Levinthal.

Levinthal's description states that the PEBS shadow is the delay, measured in cycles, between the counter overflow and the PEBS arming state becoming ready.

https://software.intel.com/sites/products/collateral/hpc/vtune/performance_analysis_guide.pdf

Eranian's description states that the PEBS shadow is the length of the whole arming period.

http://cscads.rice.edu/CSCADS_2012_perf_events_status_update.pdf

As you can see, these are quite different explanations.

I tend to believe Eranian's explanation more; unfortunately, it is only illustrative rather than technical, and it leaves many additional questions unanswered. What exactly is the arming period, and how long is it (in cycles or nanoseconds)? What hardware operations are performed during the arming period? Is there some kind of "pipelining" at the PEBS level? How many PEBS units are present per core, and is there any load balancing between them? How does the PEBS logic react when 4 instructions retire simultaneously (for example, in the nop case) at the exact counter overflow point, and which instruction is sampled first in that case? What steps does PEBS take during the pre-overflow count when more than one retired instruction or event trigger increases the count? And so on.

> One day I did a longer than usual search and I think I finally came across a reasonable description in this slide deck on page 12

Yes, this is S. Eranian's explanation, but it lacks details of the PDIR improvements.

---

> Levinthal's description stated that PEBS shadow is a time delay measured in cycles between the counter overflow and reaching the PEBS arming state readiness.
>
> Eranian's description stated that PEBS shadow is time length of the whole arming period.

Perhaps we are using somewhat different definitions of "arming" and so on, because as far as I can tell those definitions sound effectively the same?

Briefly, after the counter overflows there is a "shadow" before the PEBS system is ready. Only after it is ready will the next event capture a sample.

This shadow consists of at least the time the PMU takes to detect the overflow and inform the PEBS subsystem, and then the time for the PEBS system to arm itself. What portion of the time each of those steps takes (and possibly time taken by other things I have not described) doesn't really seem to matter for this effect? At least, the only difference I can see between their descriptions is perhaps what exactly counts as the arming period versus the period before arming starts.

Long before PEBS there was this delay between a PMC overflow and the interrupt, so I suppose some of the time taken before PEBS is fully armed is a similar delay.

I don't actually know how PDIR improves this situation, although I heard something like: the processor slows down near the point where the sample will be collected (perhaps entering this mode some number of events before the counter would normally overflow), so maybe the shadow is much shorter when counted in cycles/instructions.

I'm still interested in that material if you want to send it to me. You can use the email you saw on my blog.

Thanks for the link to the Levinthal stuff. I had read it before, and maybe that's even where I remembered the description of the PEBS shadow problem from. I think you can trust Levinthal's description.

---

> I don't actually know how PDIR improves this situation, although I heard something like the processor slows down near when the sample will be collected (perhaps entering this mode some number of samples before the counter would normally overflow) so maybe the shadow time is much less when counted in cycles/instructions.

I came to a similar conclusion. I do not think the core will serialize its execution when the PMC is near overflow. Rather, I think there may be more "exact" tracking of retired instructions when the counter value is very close to overflow. The other option is closer tracking of the uop dispatch queue (maybe its head) when overflow is near.

> I'm still interested in that material if you want to send it to me. You can use the email you saw on my blog.

Sorry for the delay; I have been overloaded with work recently. I will sort the relevant information and share the data with you privately via your blog.

> Long before PEBS there was this delay between PMC overflow and an interrupt, so I suppose some of the time taken before PEBS is fully armed is a similar delay.

This is a plausible explanation. I think Levinthal's description is more probable than Eranian's; besides, he claims that the arming delay is ~8 cycles.

Regarding the PEBS mechanism, I found this excerpt (Andy Glew):

---

> I'm still interested in that material if you want to send it to me. You can use the email you saw on my blog.

I sent the material (2 days ago) to your Gmail address (from the Performance Matters blog).

I hope it was the correct e-mail address.

> Long before PEBS there was this delay between PMC overflow and an interrupt, so I suppose some of the time taken before PEBS is fully armed is a similar delay.

According to Andy Glew's article, the delay from the PMC overflow to the APIC PMI interrupt being signalled was 9 cycles, and the delay between sending the APIC PMI and the PMU acknowledging its receipt was 6 cycles. To the "untrained eye" it is very hard to know exactly what causes the 6-cycle delay. IMHO it could include wire delay, possibly rerouting delay, and some kind of processing through a pipeline (if any).
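A back-of-the-envelope conversion of the cycle counts quoted in this thread into time, at the 2.3 GHz base clock of the i5-6200U from the first post. It assumes the 9-cycle and 6-cycle delays add up sequentially and that they apply to this CPU at all (they are quoted figures and may describe a different microarchitecture). The retirement width of 4 instructions per cycle is a hypothetical round number, used only to bound how many instructions could retire inside such a window.

```python
def cycles_to_ns(cycles, freq_ghz):
    """At f GHz one core cycle lasts 1/f nanoseconds."""
    return cycles / freq_ghz


BASE_GHZ = 2.3          # i5-6200U base clock; under turbo these times would be shorter
OVERFLOW_TO_PMI = 9     # cycles, as quoted from Andy Glew
PMI_TO_ACK = 6          # cycles, as quoted above
LEVINTHAL_ARMING = 8    # cycles, the "~8 cycles" attributed to Levinthal
RETIRE_WIDTH = 4        # hypothetical instructions retired per cycle

total = OVERFLOW_TO_PMI + PMI_TO_ACK   # assumes the two delays are strictly sequential
print(total, round(cycles_to_ns(total, BASE_GHZ), 2))          # 15 6.52
print(round(cycles_to_ns(LEVINTHAL_ARMING, BASE_GHZ), 2))      # 3.48
print(total * RETIRE_WIDTH, LEVINTHAL_ARMING * RETIRE_WIDTH)   # 60 32
```

Even a few nanoseconds of shadow can therefore cover dozens of instructions in a tight loop, which is enough to hide every event site that follows closely after another one.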

---

I have found a very interesting paper that analyzes the PEBS overhead (mainly the store of records to memory).

---

Thanks, I will take a look at that paper.

I can't edit my post above ("internal error"), but the part at the start of PEBS shadow should say "this is my impression about how "PEBS shadow" bias works, specifically".
