Key Takeaways

- Intel is helping Tencent to optimize and improve its PRN network-based 3D digital face reconstruction solution and promote its adoption in various game products.

- Technical practices Tencent and Intel carried out show that low-precision quantization is an effective way to accelerate inference and improve AI application performance.

Blog Originally published 03-29-2021

UPDATE 01-31-2022: Revised name to Intel® Neural Compressor (formerly known as Intel® Low Precision Optimization Tool, LPOT)

The rapid development of deep learning has drawn more attention to face-focused applications in the computer vision and pattern recognition domain. As a result, 3D digital face reconstruction technologies that refine and bring 2D images to life are increasingly found in online games, education, and e-commerce areas.

Tencent Games is working with Intel to build a new 3D digital face reconstruction solution. Initial adopters are using this solution in game character generation and character model shifting, bringing gamers a smoother gaming experience. To deliver the performance and capabilities for this solution, Tencent Games is using the new 3rd Gen Intel® Xeon® Scalable Processors (formerly codenamed Ice Lake), which provide built-in AI acceleration through Intel® Deep Learning Boost (Intel® DL Boost), along with Intel® Neural Compressor (formerly known as Intel® Low Precision Optimization Tool), part of the Intel® oneAPI AI Analytics Toolkit. Together, these technologies significantly improve inference efficiency while preserving accuracy and precision. By quantizing the Position Map Regression Network (PRN) from FP32-based inference down to INT8, Tencent Games is able to improve inference efficiency and provide a practical solution for 3D digital face reconstruction.

This blog introduces the Tencent Games team's exploration of a PRN network-based 3D digital face reconstruction solution. We will also discuss various performance enhancements made possible with the new 3rd Gen Intel® Xeon® Scalable Processors and Intel-optimized software.

PRN Network-based 3D Digital Face Reconstruction Solution

As Artificial Intelligence (AI) technologies continue to evolve and use cases expand, 3D digital face reconstruction is gaining more attention. Unlike 2D facial images, 3D digital face reconstruction requires not only color and texture information but also the depth information needed to create curved surfaces. Currently, AI scientists are leveraging a series of methods, such as the 3D Morphable Model (3DMM) and the Volumetric Regression Network (VRN), for the 3D reconstruction of 2D face images.

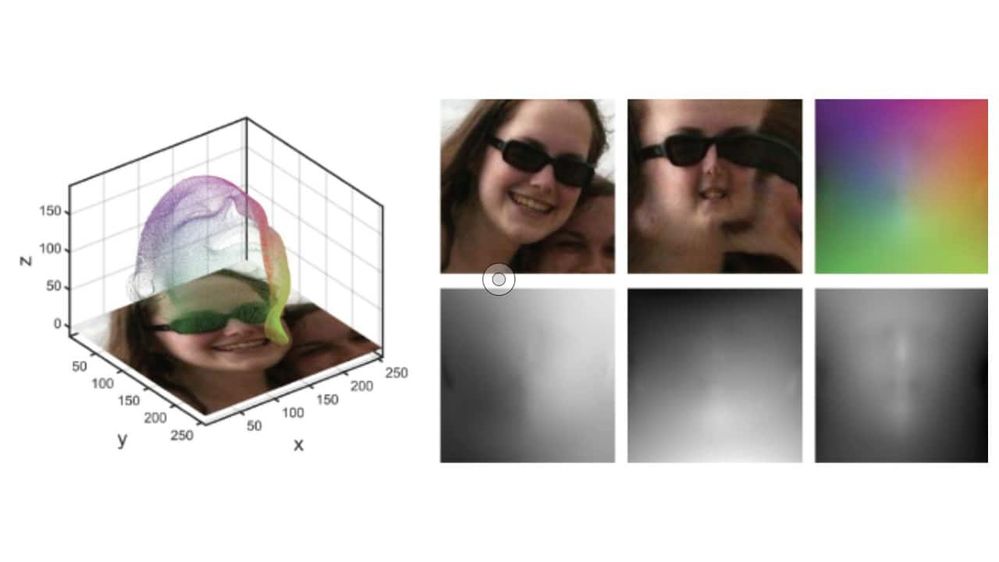

However, these methods depend on a predefined basic 3D face model or template and on a complicated regression network, which leads to high computational complexity and poor real-time performance. The PRN network takes a different approach. Its central idea is to record the 3D coordinates of all points in the face point cloud in a UV position map, preserving the semantic meaning of each UV polygon. An encoder-decoder convolutional neural network (CNN) is then designed to project from the 2D face image to the corresponding positions in the UV polygon. The PRN network does not rely on a predefined basic 3D face model or template. Rather, it can reconstruct the whole face using only the semantic information of the 2D image. As a lightweight extension of the CNN, it performs better than other deep learning networks and has drawn significant industry attention.

Figure 1. PRN network leveraging UV polygon projection [1]
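To make the position-map idea concrete, here is a minimal sketch, assuming the map is stored as a grid in which each UV cell holds one (x, y, z) coordinate. The helper name `position_map_to_point_cloud`, the toy 4x4 map, and the mask are hypothetical and for illustration only; they are not taken from the Tencent Games solution or the PRN reference code.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]
PositionMap = List[List[Point]]  # indexed [v][u]; each cell holds (x, y, z)


def position_map_to_point_cloud(
    pos_map: PositionMap,
    face_mask: Optional[List[List[bool]]] = None,
) -> List[Point]:
    """Collect the 3D point stored in every UV cell (optionally only masked cells)."""
    cloud = []
    for v, row in enumerate(pos_map):
        for u, point in enumerate(row):
            if face_mask is None or face_mask[v][u]:
                cloud.append(point)
    return cloud


# Toy 4x4 map: a flat plane with x = u, y = v, z = 0 (illustrative values only).
toy_map = [[(float(u), float(v), 0.0) for u in range(4)] for v in range(4)]
# Hypothetical mask marking the 2x2 centre of the map as belonging to the face.
toy_mask = [[1 <= u <= 2 and 1 <= v <= 2 for u in range(4)] for v in range(4)]

cloud = position_map_to_point_cloud(toy_map, toy_mask)
print(len(cloud), cloud[0])
```

Because each UV cell always refers to the same region of the face, the network can output the whole map as an image-like tensor, and reading out the 3D point cloud reduces to a lookup like the one above.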

Tencent Games is building a 3D face reconstruction solution based on the PRN network to incorporate AI capabilities into its product lines. When developing games or other products with 3D character modeling requirements, UI engineers can leverage the 3D face reconstruction technologies to convert 2D figures into draft 3D models in batches. They can then adjust the details, saving modeling time and improving development efficiency. In some games, players can upload a photo of their face and leverage the “intelligent face-making” feature to create a more customized 3D profile with their own facial characteristics. This capability, shown in Figure 2, significantly improves the user’s gaming experience.

Figure 2. Game character generation leveraging 3D digital face reconstruction technologies

Tencent Games Boosts 3D Digital Face Reconstruction via Low-Precision Inference

While the PRN network enables high-performance 3D digital face reconstruction, Tencent Games hopes to introduce more optimization solutions that will further improve implementation efficiency and the user experience.

As an extended model of the CNN, a typical PRN network uses an encoder-decoder architecture, with face images as the input and the projected position map as the output. The encoder consists of 10 cascading residual blocks, and the decoder consists of 17 deconvolution layers. The activation layers use the ReLU function, and the output layer uses the Sigmoid function. Large-scale convolution and deconvolution operations consume massive computing power during training and inference.

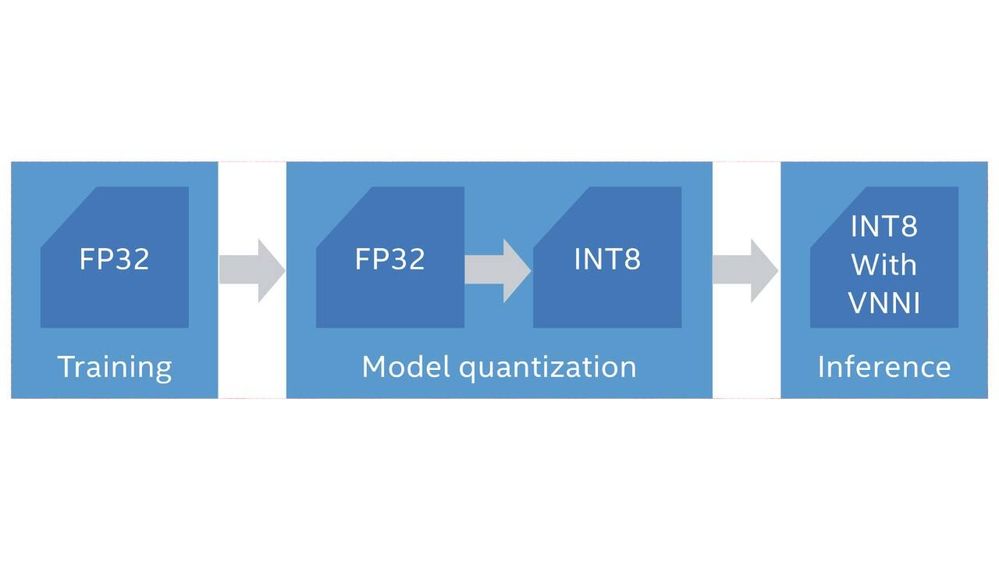

In the past, deep learning training and inference were performed with the FP32 data type, which is highly demanding on processor performance, memory, and even power consumption. Recent studies have shown that using low-precision data types such as INT8 can improve the efficiency of processors and memory without significantly impacting the results of deep learning tasks such as facial image processing [2]. Low-precision data types can also help conserve computing power and improve efficiency for frequently used deep learning operations such as convolution and deconvolution.

Figure 3 shows that models trained in FP32 can be converted to INT8 through quantization and then used for subsequent inference tasks. Such a quantization solution keeps the accuracy of high-precision training while using INT8 to accelerate the much more frequent inference workloads. These capabilities significantly improve the overall performance of the solution.

Figure 3. INT8 quantization of training models
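The FP32-to-INT8 conversion described above can be sketched as follows. This is a minimal illustration of symmetric, per-tensor linear quantization, one common scheme; it is an assumption for illustration and not necessarily the exact scheme Intel® Neural Compressor applies to the PRN model. The function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max_abs, max_abs] onto [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the signed 8-bit range used here.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate FP32 values from INT8 codes."""
    return [q * scale for q in quantized]


# Illustrative FP32 weights (made-up values).
weights = [0.50, -1.27, 0.031, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 codes:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each value is stored in one byte instead of four, and the round-trip error of any single value is bounded by half a quantization step (`scale / 2`), which is why a well-calibrated model can lose very little accuracy.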

3rd Gen Intel® Xeon® Scalable Processors and Quantization Tool for Overall Solution Optimization

For better working efficiency, Tencent Games has adopted the new 3rd Gen Intel® Xeon® Scalable Processors as the infrastructure of the solution. The platform provides more cores, further optimized architecture, higher memory capacity, and enhanced security technologies to support the user with stronger computing power and Intel® DL Boost for AI acceleration.

As a unique AI acceleration technology developed by Intel, Intel® DL Boost is based on Vector Neural Network Instructions (VNNI). It provides a variety of deep learning-oriented features to help users run complex AI workloads on Intel® architecture-based platforms. For example, Intel® DL Boost can fuse multiple instructions into one to optimize multiply-accumulate operations on 8- or 16-bit low-precision data. This capability is essential for solutions that run massive matrix multiplications, such as the PRN network. Practice has shown that developers can gain the following advantages by adopting the VNNI instruction set provided by Intel® DL Boost:

- Improved computing speed: Some computing tasks can gain a theoretical peak 4x improvement in computing speed with the new instruction set when using INT8 instead of FP32 [3];

- Reduced memory access workloads: The new instruction set helps reduce model weight size and bandwidth threshold for memory access during INT8 inference;

- Improved inference efficiency: By integrating with processor hardware features, Intel® DL Boost technology helps improve the efficiency of INT8 multiplications. At the same time, Intel® Optimizations for TensorFlow and Apache MXNet also support INT8 to boost inference efficiency.
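As a rough illustration of what "fusing multiple instructions into one" means for INT8 data, the sketch below emulates in plain Python the arithmetic of one 32-bit lane of a VNNI-style dot-product instruction: four unsigned 8-bit values are multiplied by four signed 8-bit values, and the sum of the products is added to a 32-bit accumulator in a single step. The function names are hypothetical, and the emulation does not model 32-bit overflow behavior or vector width.

```python
from typing import Sequence


def dot4_accumulate(acc: int, a_u8: Sequence[int], b_s8: Sequence[int]) -> int:
    """Emulate one 32-bit lane: acc += sum of four (unsigned 8-bit * signed 8-bit) products."""
    if len(a_u8) != 4 or len(b_s8) != 4:
        raise ValueError("each lane consumes exactly four 8-bit pairs")
    if not all(0 <= a <= 255 for a in a_u8):
        raise ValueError("activations must fit in unsigned 8 bits")
    if not all(-128 <= b <= 127 for b in b_s8):
        raise ValueError("weights must fit in signed 8 bits")
    # Multiply and accumulate in one logical step (overflow is not modeled here).
    return acc + sum(a * b for a, b in zip(a_u8, b_s8))


def int8_dot(activations: Sequence[int], weights: Sequence[int]) -> int:
    """Dot product built from repeated four-element fused steps."""
    if len(activations) != len(weights) or len(activations) % 4 != 0:
        raise ValueError("inputs must have equal length, a multiple of 4")
    acc = 0
    for i in range(0, len(activations), 4):
        acc = dot4_accumulate(acc, activations[i:i + 4], weights[i:i + 4])
    return acc


# Illustrative quantized activations (unsigned) and weights (signed).
activations = [10, 20, 30, 40, 1, 2, 3, 4]
weights = [1, -2, 3, -4, 5, 6, -7, 8]
print(int8_dot(activations, weights))  # -100 + 28 = -72
```

Because four 8-bit values fit in the space of one 32-bit value, each vector register carries four times as many operands as with FP32, which is where the theoretical peak 4x figure in the list above comes from.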

Tencent Games implements the INT8 quantization of the solution with Intel® Neural Compressor and Intel® Optimization for TensorFlow, both provided as part of the Intel® oneAPI AI Analytics Toolkit as a simple, easy-to-use, interoperable package. The toolkit includes optimized Python frameworks and libraries built using oneAPI to maximize performance of end-to-end data science and machine learning workflows on Intel architectures.

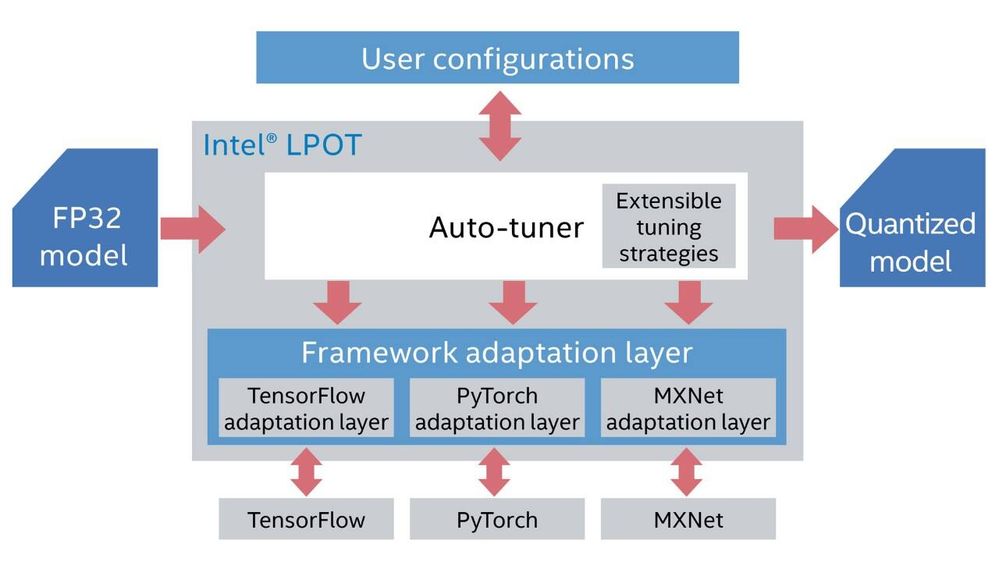

Intel® Neural Compressor, an open-source Python library, can maintain a similar level of accuracy and precision after quantization while accelerating the deployment of low-precision inference solutions on popular deep learning frameworks. It achieves this through a series of built-in auto-tuning strategies. Figure 4 shows the basic structure of Intel® Neural Compressor.

Figure 4. Basic structure of Intel® Neural Compressor

Intel® Neural Compressor adapts to popular deep learning frameworks through its framework adaptation layer, which reduces the framework adaptation and optimization work for Tencent Games. Its core Auto-tuner automatically tunes the quantization strategy of the PRN network model according to the actual demands of the 3D digital face reconstruction process. Tuning strategies may come from the built-in, extensible set of strategies or be set by the user with regard to performance, model size, and encapsulation method. Intel® Neural Compressor can also perform framework-specific optimization via built-in APIs, such as KL divergence calibration for TensorFlow and MXNet [4].
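The general idea of accuracy-driven auto-tuning can be sketched as a simple loop: try candidate quantization configurations in order and accept the first one whose accuracy stays within an allowed loss relative to the FP32 baseline. This is a deliberately simplified stand-in, not the Intel® Neural Compressor API or its actual tuning strategies. The function `tune`, the configuration names, and the accuracy figures are all hypothetical.

```python
from typing import Callable, Iterable, Optional, Tuple


def tune(
    candidates: Iterable[str],
    evaluate: Callable[[str], float],
    fp32_accuracy: float,
    max_relative_loss: float = 0.01,
) -> Optional[Tuple[str, float]]:
    """Return the first candidate whose accuracy loss is within the allowed budget."""
    for config in candidates:
        accuracy = evaluate(config)
        relative_loss = (fp32_accuracy - accuracy) / fp32_accuracy
        if relative_loss <= max_relative_loss:
            return config, accuracy
    return None  # No candidate met the accuracy criterion.


# Hypothetical accuracies for three made-up quantization configurations.
measured = {
    "int8_all_ops": 0.930,             # most aggressive, largest accuracy loss
    "int8_kl_calibration": 0.975,      # better calibration of activation ranges
    "int8_with_fp32_fallback": 0.979,  # sensitive ops kept in FP32
}

result = tune(measured, lambda name: measured[name], fp32_accuracy=0.980)
print(result)  # ('int8_kl_calibration', 0.975)
```

Ordering the candidates from most to least aggressive means the loop stops at the fastest configuration that still satisfies the accuracy budget, which mirrors the trade-off between performance and accuracy that the paragraph above describes.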

Validation Results

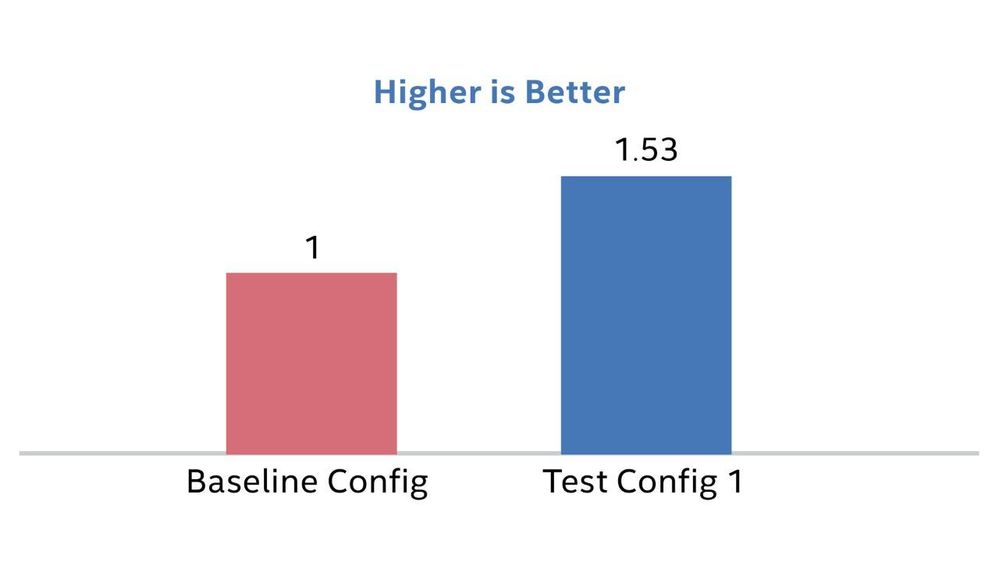

Tencent Games and Intel carried out multi-dimensional validation tests on the 3D digital face reconstruction solution to validate the performance improvement provided by 3rd Gen Intel® Xeon® Scalable Processors and Intel® Neural Compressor based INT8 quantization. The first test compared the 3rd Gen Intel® Xeon® Scalable Processors against the 2nd Gen Intel® Xeon® Scalable Processors (Cascade Lake), both without INT8 quantization. As Figure 5 shows, the PRN network model deployed on 3rd Gen Intel® Xeon® Scalable Processors (Test Config 1) saw a 53 percent improvement in inference speed over the previous generation (Baseline Config) [5].

Figure 5. Improvement in inference performance enabled by 3rd Gen Intel® Xeon® Scalable Processors without INT8 quantization (normalized data comparison)

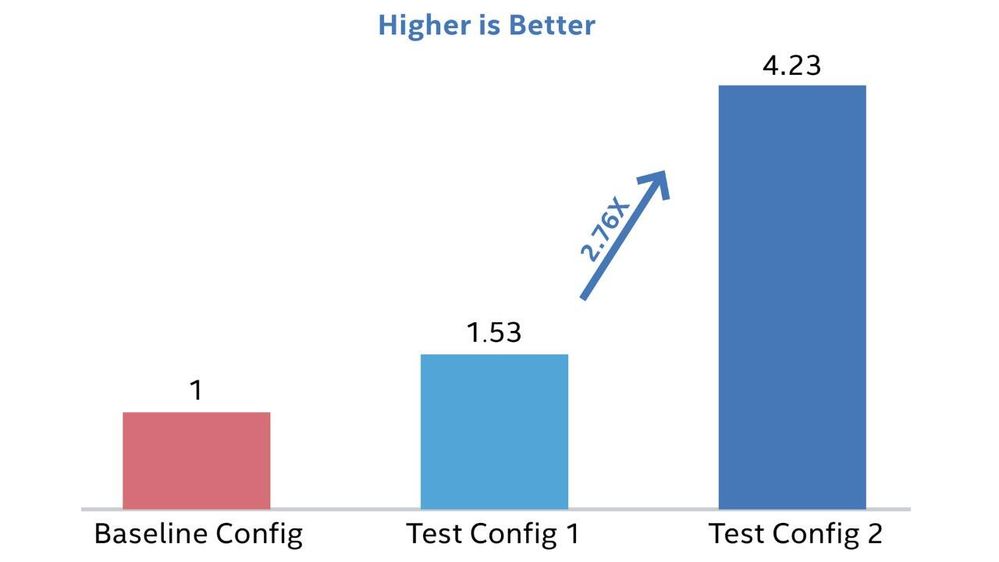

Figure 6 shows the performance of INT8-quantized inference with Intel® Neural Compressor on 3rd Gen Intel® Xeon® Scalable processors. Test Config 2, with INT8 quantization, saw a 2.76x speed improvement compared with inference on 3rd Gen Intel® Xeon® Scalable processors without INT8 quantization (Test Config 1). Test Config 2 also showed a 4.23x improvement in overall inference performance compared with inference on the previous generation platform without INT8 quantization (Baseline Config) [6].

Figure 6. Improvement in inference performance enabled by 3rd Gen Intel® Xeon® Scalable Processors with INT8 quantization (normalized data comparison)
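The reported figures can be cross-checked with simple arithmetic: the overall gain should be roughly the product of the generation-over-generation gain (53 percent, or 1.53x) and the INT8-over-FP32 gain (2.76x). The sketch below multiplies the rounded values from the text and also computes the range implied by rounding to two decimal places; the variable names are illustrative.

```python
# Speedups as reported in the text (rounded to two decimals).
gen_over_gen = 1.53    # 3rd Gen vs. 2nd Gen, both FP32 (53 percent faster)
int8_over_fp32 = 2.76  # INT8 vs. FP32, both on 3rd Gen
reported_total = 4.23  # INT8 on 3rd Gen vs. FP32 on 2nd Gen

combined = gen_over_gen * int8_over_fp32
print(f"product of rounded factors: {combined:.2f}x")  # 4.22x

# Each rounded factor may hide up to +/-0.005, so compute the implied range.
lower = (gen_over_gen - 0.005) * (int8_over_fp32 - 0.005)
upper = (gen_over_gen + 0.005) * (int8_over_fp32 + 0.005)
print(f"range implied by rounding: {lower:.3f}x to {upper:.3f}x")
print("reported total within range:", lower <= reported_total < upper)
```

The product of the rounded factors is about 4.22x, and the reported 4.23x falls inside the range that rounding allows, so the three numbers are mutually consistent.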

This result was also confirmed in practice by game developers. According to Tencent Games internal data, 3D digital face reconstruction required tens of milliseconds before and only 10-20 milliseconds after migrating to the new platform [7]. These speedups resulted in significant savings in modeling time while improving development efficiency for game development with massive character modeling tasks.

The benchmarking team also conducted validation tests to see whether the solution can maintain a high level of precision while delivering the efficiency improvement after INT8 quantization. The results show that INT8 quantization does not have a significant impact on accuracy and precision, whether on the 3rd Gen Intel® Xeon® Scalable processors or the last generation platform. For example, the accuracy loss caused by quantization was only 0.0001 [8].

Looking to the Future

The technical practices Tencent Games and Intel carried out show that low-precision quantization is an effective way to accelerate inference and improve AI application performance. The 3rd Gen Intel® Xeon® Scalable processors with built-in Intel® DL Boost can provide outstanding computing power and AI acceleration, helping maintain the level of accuracy and precision while boosting the 3D digital face reconstruction solution.

Based on this successful exploration, Intel is helping Tencent Games to further optimize and improve its 3D digital face reconstruction solution and promote its adoption in various game products. Tencent Games and Intel plan to leverage the strong computing power and AI acceleration capacity of Intel® architecture-based products to improve the efficiency of AI applications for wider adoption in additional scenarios.

Notice and Disclaimers

1 Related figures and introduction to PRN network cited from: Yao Feng, Fan Wu, Xiaohu Shao, Yanfeng Wang, Xi Zhou, Joint 3D Face Reconstruction and Dense Alignment with Position Map Regression Network, https://arxiv.org/abs/1803.07835

2 For more information, please visit: https://www.intel.com/content/www/us/en/artificial-intelligence/posts/lowering-numerical-precision-increase-deep-learning-performance.html

3 Data cited from: https://www.intel.com/content/www/us/en/artificial-intelligence/posts/intel-low-precision-optimization-tool.html

4 For more information about Intel® Neural Compressor, please visit: https://community.intel.com/t5/Blogs/Tech-Innovation/Artificial-Intelligence-AI/Faster-Easier-Optimization-with-Intel-Neural-Compressor/post/1335769

5, 6, 8 Testing configuration: Test by Intel as of 3/19/2021. Baseline Config: processor: 2S Intel® Xeon® Platinum 82XX Processor (Cascade Lake), 24-core/48-thread, TurboBoost on, Hyper-Threading on; memory: 12*16GB DDR4 2933; storage: Intel® SSD *1; NIC: Intel® Ethernet Network Adapter X722 *1; BIOS: SE5C620.86B.0D.01.0438.032620191658 (ucode: 0x5003003); OS: CentOS Linux 8.3; Kernel: 4.18.0-240.1.1.el8_3.x86_64; network model: PRN network; data set: Dummy and 300W_LP, 12 instances/2 Sockets; deep learning framework: Intel® Optimization for TensorFlow 2.4.0; oneDNN: 1.6.4; Intel® Neural Compressor: 1.1; model data format: FP32. Test Config 1: processor: 2S Intel® Xeon® Platinum 83XX Processor (Ice Lake), 36-core/72-thread, TurboBoost on, Hyper-Threading on; memory: 16*16GB DDR4 3200; storage: Intel® SSD *1; NIC: Intel® Ethernet Controller X550T *1; BIOS: SE5C6200.86B.3020.P19.2103170131 (ucode: 0x8d05a260); OS: CentOS Linux 8.3; Kernel: 4.18.0-240.1.1.el8_3.x86_64; network model: PRN network; data set: Dummy and 300W_LP, 18 instances/2 Sockets; deep learning framework: Intel® Optimization for TensorFlow 2.4.0; oneDNN: 1.6.4; Intel® Neural Compressor: 1.1; model data format: FP32. Test Config 2: processor: 2S Intel® Xeon® Platinum 83XX Processor (Ice Lake), 36-core/72-thread, TurboBoost on, Hyper-Threading on; memory: 16*16GB DDR4 3200; storage: Intel® SSD *1; NIC: Intel® Ethernet Controller X550T *1; BIOS: SE5C6200.86B.3020.P19.2103170131 (ucode: 0x8d05a260); OS: CentOS Linux 8.3; Kernel: 4.18.0-240.1.1.el8_3.x86_64; network model: PRN network; data set: Dummy and 300W_LP, 18 instances/2 Sockets; deep learning framework: Intel® Optimization for TensorFlow 2.4.0; oneDNN: 1.6.4; Intel® Neural Compressor: 1.1; model data format: INT8.

7 Data source: Tencent Games internal statistics. For more information, please contact Tencent Games: https://game.qq.com

Performance varies by use, configuration and other factors. Learn more at www.Intel.com/PerformanceIndex.

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details. No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

Intel does not control or audit third-party data. You should consult other sources to evaluate accuracy.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
