Almost universally, today's systems must operate within limited system-level power budgets. For these power-bound systems, saving energy anywhere in the system enables more energy for compute and hence higher system performance. Moving data around the system consumes energy without directly contributing to computation. A tantalizing opportunity exists to achieve system-energy savings by keeping data commutes between memory and processing as short as possible. Energy savings should be the primary goal, our North Star for computing near memory.
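The power-budget argument above can be written as a simple balance. The symbols are introduced here for illustration only and are not taken from the presentation: $P_{\text{budget}}$ is the fixed system power budget, $P_{\text{compute}}$ is the power spent on computation, $P_{\text{move}}$ is the power spent moving data, and $\Delta P$ is a hypothetical reduction in data movement power.

$$
P_{\text{budget}} = P_{\text{compute}} + P_{\text{move}}
\quad\Longrightarrow\quad
P_{\text{compute}}' = P_{\text{budget}} - \left(P_{\text{move}} - \Delta P\right) = P_{\text{compute}} + \Delta P
$$

Under this simplified two-term model, every watt no longer spent on data movement becomes available for compute at the same budget.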

At the recent International Solid-State Circuits Conference (ISSCC), I gave a presentation titled "We have rethought our commute; Can we rethink our data's commute?" The presentation took a system-level view, rather than focusing on the memory device plus small computational kernel view often taken by Near Memory Compute (NMC) researchers. From this point of view, it described why now may be the time for NMC to enter systems, as well as the system-level barriers to overcome.

What is the Problem?

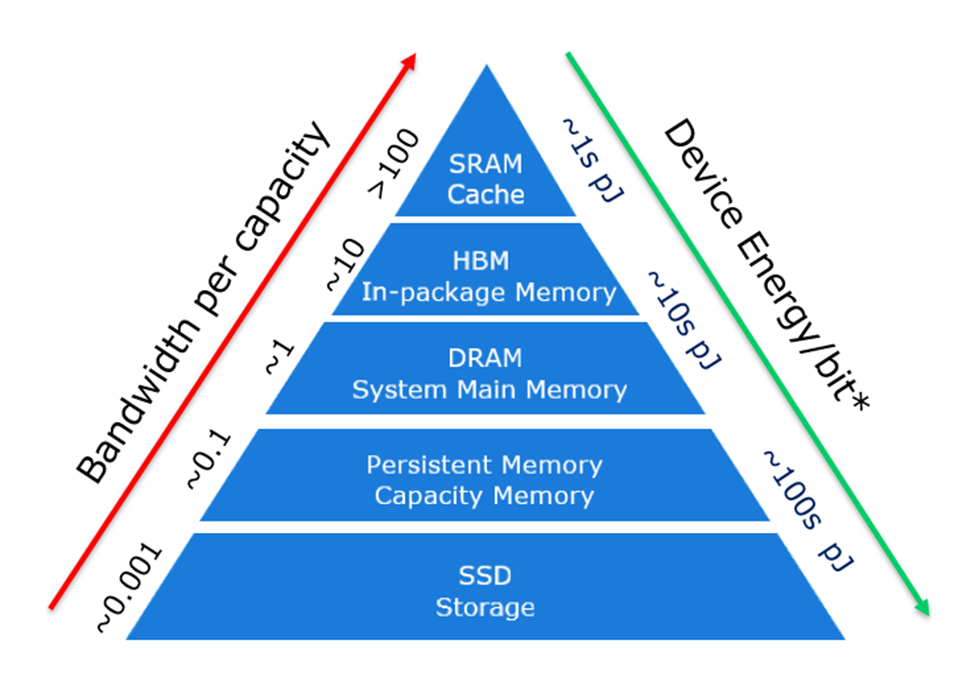

Since the inception of computing, some memory technologies have been used for higher capacity while others have been used for higher performance. The memory and storage hierarchy used in today's systems is represented in the pyramid (see Figure 1). This hierarchy takes advantage of a fundamental property of memory technologies: each is designed for a different cost-per-bit by varying its bandwidth-per-capacity.

The memories at the top of the pyramid offer much higher throughput and lower latency per capacity but come at a cost-per-capacity premium. As the hierarchy is traversed downward, the system handles order-of-magnitude increases in latency to deliver higher capacity at a reasonable cost. However, another byproduct of this traversal is significantly higher energy-per-bit-accessed. This increase in energy is due to both the memory technology and the movement of data from memory to the processing unit (note: the energies listed are memory device energies, since data movement energy varies drastically based on system scope).

Figure 1: Memory/Storage Hierarchy*

Intel & Shen, Meng, “Silicon Photonics for Extreme Scale Systems”, Journal of Lightwave Technology, 2019

As data sets continue to grow at a pace greater than memory density, the energy devoted to system-level data movement increases. Deep learning (DL) datasets in AI data processing are a particularly strong example, with model parameters increasingly extending in size beyond the local high-bandwidth memories of the GPU or CPU to involve the rest of the hierarchy. The flow of data – from various tiers to the compute engines and back – becomes a source of system energy lost from computation.

Targeting our approach

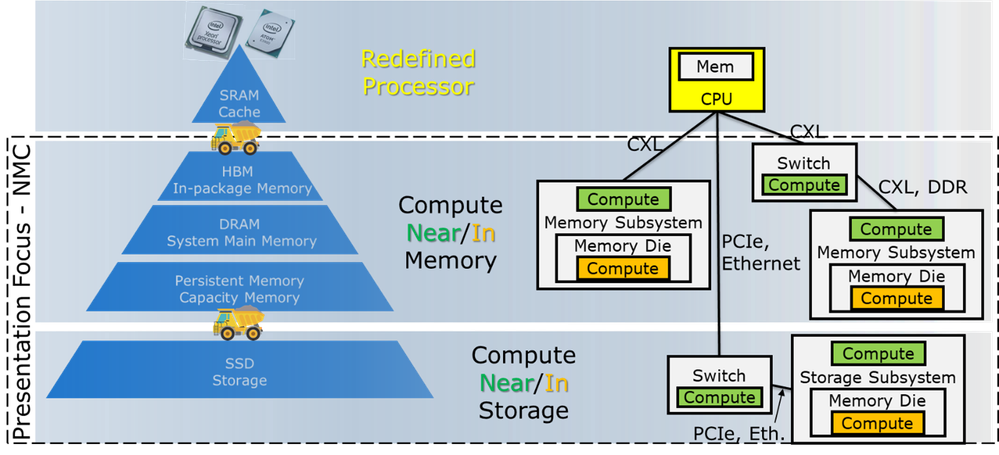

Near Memory Compute (NMC or CNM), Near Memory Processing (NMP or PNM), Processing in Memory (PiM), and many more acronyms are used to denote moving selected compute to memory. This blog takes a system-level approach and aggregates all these terms into NMC, referring to the concept of compute, simple to more complex, that is physically closer to memory than to the CPU or GPU. Within NMC there are three important system-level location distinctions: with the processor, in the memory, and with the memory. In this blog, we leave “with the processor” aside since that system location implies that data movement energy was not saved. We also leave “in the memory” to the memory designers for now, since that location implies a new, more expensive memory.

Our prediction is that NMC starts with the happy medium in between, with memory controllers housed with the memory at the system level, but still built on logic processes. This NMC provides access to memory-resident data with lower energy consumed and potentially at a higher bandwidth than in today’s systems while eliminating the need to challenge memory economies already operating at scale. NMC could include compute in a CXL™ memory module, or within a memory/storage fabric switch, or in an SSD.

Why Now?

NMC has been explored since the 1960s, but it is not used in our large commercial systems today. The question we need to ask ourselves is, ‘What has changed?’ What is different now that might make NMC viable?

There are three reasons why ‘The Time May Be Now’ for NMC:

First, the slowdown in Dennard scaling has limited the ability to reduce power at the device level. Scaling transistors no longer delivers the traditional gains in power reduction. Without this historically key lever at the device level, system innovation must pick up the energy reduction baton.

Second, computing models that allow different compute engines (say a CPU and a GPU, or a CPU and an accelerator) to cooperate to solve a problem more efficiently have proliferated. The rapid introduction of this form of system-level heterogeneous computing, and the ability to program such architectures from high-level languages (often driven completely by libraries, runtimes, and compilers, and so invisible to the programmer), are essential if NMC is to succeed.

Today we use high-level languages to execute, not just on a CPU, but on heterogeneous CPU-GPU systems. In the AI space, programmers often write code with PyTorch, TensorFlow, or at a similar level of abstraction. Intel has been continuously improving its oneAPI open-source programming model, which enables heterogeneous computing. This type of programming model could be extended to NMC compute agents.

Third, the introduction of a new memory interconnect, CXL™, pioneered by Intel and now led by an industry-wide consortium, provides a pathway to Near Memory Compute. Today, we connect DRAM into the system with a DDR interface that requires data to arrive within a fixed nanosecond-scale time period. CXL™ enables variable-delay memory accesses, potentially allowing time for NMC to access data, perhaps multiple data elements, and complete compute before returning an answer. CXL™ modules include a memory controller built in a logic process, connecting the CXL™ link and the memories and likely performing error correction. This is an obvious place to introduce NMC.
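To make the "access multiple data elements and complete compute before returning an answer" idea concrete, here is a minimal toy model in Python. It only counts bytes crossing a link for a host-side sum versus a near-memory sum. `ToyMemoryModule`, `near_memory_sum`, `host_sum`, and the 8-byte element size are all assumptions made for illustration; none of this is a real CXL interface, and it says nothing about actual energy figures.

```python
from dataclasses import dataclass
from typing import List

ELEMENT_BYTES = 8  # assumed size of one data element


@dataclass
class ToyMemoryModule:
    """Toy stand-in for a memory module; not a real CXL interface."""
    data: List[float]
    bytes_over_link: int = 0

    def read(self, index: int) -> float:
        # Host-side compute: every element read crosses the link.
        self.bytes_over_link += ELEMENT_BYTES
        return self.data[index]

    def near_memory_sum(self) -> float:
        # Near-memory compute: elements are read locally on the module,
        # and only the single result crosses the link.
        self.bytes_over_link += ELEMENT_BYTES
        return float(sum(self.data))


def host_sum(module: ToyMemoryModule) -> float:
    """Sum on the host by fetching each element over the link."""
    return float(sum(module.read(i) for i in range(len(module.data))))


if __name__ == "__main__":
    host_side = ToyMemoryModule([1.0] * 1024)
    near_side = ToyMemoryModule([1.0] * 1024)
    print(host_sum(host_side), host_side.bytes_over_link)
    print(near_side.near_memory_sum(), near_side.bytes_over_link)
```

In this sketch both paths return the same answer, but the host path moves every element across the link while the near-memory path moves only the result. A fixed-latency interface leaves no room for the second path, which is why variable-delay access matters here.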

System Integration Barriers are Formidable

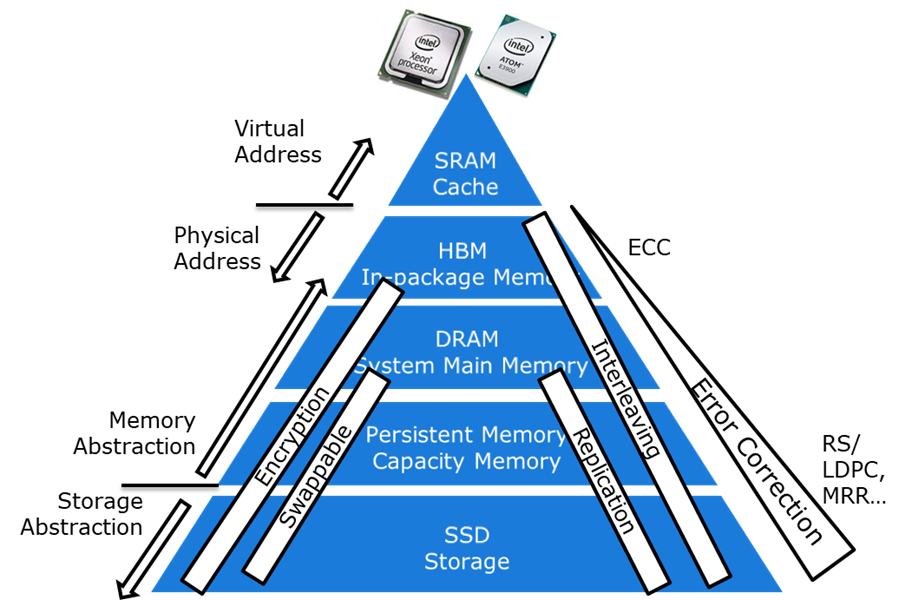

Memory hierarchies have evolved over decades at the system level. They include interleaving for performance, error correction for reliability, and encryption for security. Within the hierarchy itself, multiple memory address spaces are relevant (for example, virtual and physical), as well as multiple abstractions (for example, memory and storage). As another example, it is not realistic to assume that all the data needed for computation resides on a single memory component or that data which does reside there has been error corrected.

As depicted in Figure 3, successful NMC approaches will be implemented via system designs, taking all these factors into account.

A Scorecard for NMC Approaches

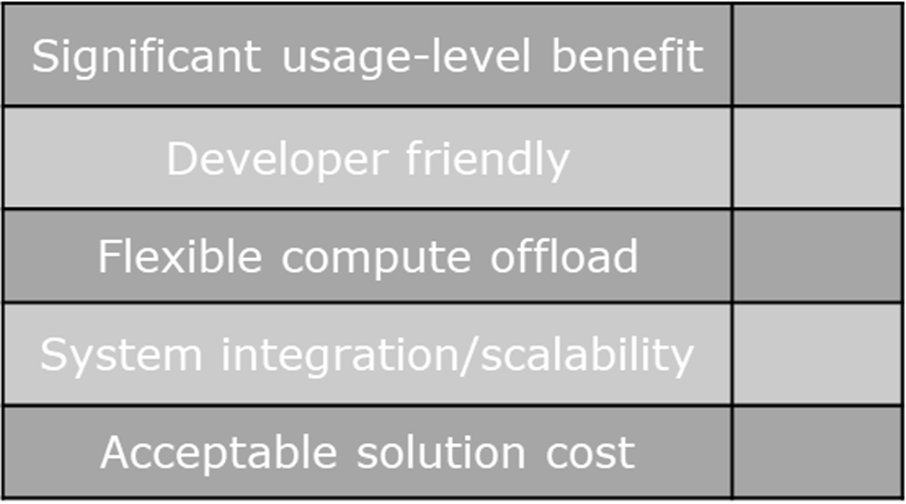

Change is hard, so the first hurdle to NMC is truly significant advantages in power, performance, and cost - advantages large enough to motivate the significant system-level work partitioning changes inherent in NMC.

For NMC to be useful it must be integrated into the system in a manner that works in harmony with the rest of the system. Developer friendliness is a must. Alterations to application code should not be required to reach the significant system-level advantages promised. Changes to software infrastructure are allowed. In fact, a software infrastructure capable of enabling applications to take advantage of NMC on systems where it is present, and still run effectively when it is not, is likely required.
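One way to picture a software infrastructure that uses NMC where it is present and still runs where it is not is a runtime layer that dispatches to an offload path when one is registered and otherwise falls back to the host. The sketch below is hypothetical throughout: the `Runtime` class, the `nmc_sum` hook, and `host_sum` are illustrative names, not part of oneAPI or any existing NMC software stack.

```python
from typing import Callable, Optional, Sequence

# Hypothetical signature for an offloaded reduction; not a real NMC or oneAPI API.
Reducer = Callable[[Sequence[float]], float]


def host_sum(values: Sequence[float]) -> float:
    """Reference implementation that runs on the host processor."""
    return float(sum(values))


class Runtime:
    """Toy runtime layer: application code calls `sum` and never sees the backend."""

    def __init__(self, nmc_sum: Optional[Reducer] = None) -> None:
        # None models a system that has no NMC hardware available.
        self._nmc_sum = nmc_sum

    def sum(self, values: Sequence[float]) -> float:
        if self._nmc_sum is not None:
            return self._nmc_sum(values)  # offload when NMC is present
        return host_sum(values)           # otherwise fall back to the host
```

The application calls `Runtime.sum` the same way in both cases, so the change lives in the software infrastructure rather than in application code.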

The offload engine itself must be flexible enough to accommodate changes to algorithms operating on software timelines, rather than the hardware timelines of many NMC proposals. Especially in AI, rapid algorithm innovations continue to deliver rapid performance improvements, and these must be embraced by a successful NMC design.

And of course, any solution must integrate cleanly into the system as already discussed and provide a path to scale to large systems. Finally, the cost must be acceptable. It is not realistic to assume a new memory die will cost the same per-capacity when produced only in small volumes.

When researching a new NMC technology, or reviewing an NMC technology from others, think through the scorecard in Figure 4 to judge how serious a contender that technology is for actual system-level deployment.

Energy Savings will drive Near Memory Computing

There is a long history of Near Memory Compute research that has so far had little impact on real systems. As reducing system energy overhead becomes central to strong system design that keeps data center costs down and addresses global energy consumption, NMC is likely to find its footing. NMC system integration accomplished in a holistic, programmer-friendly manner may well find application in the rapidly evolving AI space. Perhaps we are on the cusp of a new renaissance in computer architecture, ushering in a competitive market for compute-near-memory solutions that take us further from our von Neumann roots.

Disclaimers and Notices:

Intel technologies may require enabled hardware, software or service activation.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
