Nilesh Jain is a Principal Engineer leading intelligent infrastructure and systems research at Intel Labs with a focus on visual/AI applications. His co-authors for this project are Pablo Muñoz, Chaunté Lacewell, Nikolay Lyalyushkin, Anastasia Senina, Alexander Kozlov, Yury Gorbachev, and Ramesh Chukka.

Key Messages:

- Automated machine learning (AutoML) enables the machine-driven design of AI algorithms. Intel Labs' hardware-aware AI-based automation tools (AutoX) enable rapid adoption of AI models for different deployment configurations to address productivity challenges.

- Intel Labs developed the BootstrapNAS software framework to automatically discover alternative high-performing models optimized for Intel AI platforms from any pre-trained deep learning model. For the MLPerf 2.0 data center open submission, BootstrapNAS optimized Torchvision’s pre-trained ResNet-50 model for 3rd Gen Intel® Xeon® Scalable processors (codenamed Ice Lake). We submitted three variants of optimized models to showcase the flexibility of our solution, allowing users to pick the right model for accuracy and performance trade-offs.

- Our results show that the INT8 BNAS-A model delivered 2.55x higher throughput than the INT8-quantized (OpenVINO) Torchvision ResNet-50 model. The BNAS-B model delivered 1.43x higher throughput, and the BNAS-C model delivered 1.89x higher throughput. Compared with an FP32 Torchvision ResNet-50 model, our INT8 BNAS-A model delivers an 11.37x throughput improvement. All optimized models achieved this acceleration while staying within 1% of the Top-1 accuracy of the baseline model in FP32 precision.

Automated machine learning (AutoML) is gaining popularity because of its ability to address the industry-wide shortage of Artificial Intelligence (AI) professionals. There is an increasing need for scalable hardware-aware solutions that can be applied to a variety of AI models. To address these challenges, Intel Labs is enabling AI innovation with hardware-aware automated machine-learning tools (AutoX) that help improve both the productivity of AI developers and the performance of AI models on Intel platforms.

Intel Labs is developing an automated hardware-aware model optimization tool called BootstrapNAS to simplify the optimization of pre-trained AI models on Intel hardware, including Intel® Xeon® Scalable processors, which deliver built-in AI acceleration and flexibility. The tool is intended to shorten the time needed to find an optimal model design for a given AI platform while also improving the model's performance.

BootstrapNAS applies Neural Architecture Search (NAS) algorithms that automate the discovery of high-performing artificial neural networks (ANNs). These algorithms have successfully trained super-networks and searched them for efficient sub-networks. However, designing a super-network from an arbitrary AI architecture is challenging. BootstrapNAS automates super-network generation, so developers do not need to build their design spaces by hand. As a result, BootstrapNAS lets NAS techniques scale to a wide range of models, saving time and resources.

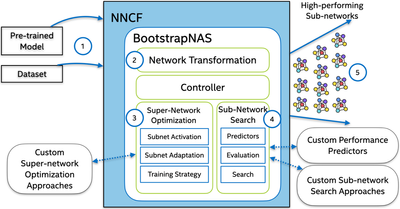

Figure 1. BootstrapNAS’ architecture.

We implemented BootstrapNAS within OpenVINO’s Neural Network Compression Framework (NNCF). As illustrated in Figure 1, it (1) takes a pre-trained model from a popular architecture, e.g., ResNet-50, or from a custom design, (2) automatically creates a super-network out of it, (3) uses state-of-the-art NAS techniques to train the super-network, (4) searches for high-performing sub-networks using hardware efficiency metrics, and (5) returns a selection of these sub-networks to the end user. The resulting sub-networks significantly outperform the given pre-trained model. BootstrapNAS can automate this optimization for virtually any AI model targeting Intel's Xeon platform.
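Steps (2) and (4) can be pictured with a toy design space. The sketch below is not the BootstrapNAS or NNCF API. It assumes a hypothetical three-stage super-network with elastic depth and width, enumerates every sub-network configuration it contains, and filters them with a crude stand-in for a hardware efficiency metric. All names and values (`DEPTH_CHOICES`, `WIDTH_CHOICES`, `proxy_cost`) are illustrative assumptions.

```python
from itertools import product

# Hypothetical elastic dimensions for a toy 3-stage super-network.
# Illustrative only; this is not the search space BootstrapNAS generates.
DEPTH_CHOICES = [(1, 2, 3), (1, 2, 4), (1, 2)]     # blocks kept per stage
WIDTH_CHOICES = [(32, 64), (64, 128), (128, 256)]  # channels per stage


def enumerate_subnets():
    """Yield every (depths, widths) configuration contained in the super-network."""
    for depths in product(*DEPTH_CHOICES):
        for widths in product(*WIDTH_CHOICES):
            yield depths, widths


def proxy_cost(depths, widths):
    """Crude stand-in for a hardware metric: blocks * channels^2, summed over stages."""
    return sum(d * w * w for d, w in zip(depths, widths))


def subnets_under_budget(budget):
    """Return (cost, depths, widths) for configurations within budget, cheapest first."""
    fits = []
    for depths, widths in enumerate_subnets():
        cost = proxy_cost(depths, widths)
        if cost <= budget:
            fits.append((cost, depths, widths))
    return sorted(fits)


all_subnets = list(enumerate_subnets())
# The largest configuration corresponds to the original (un-shrunk) model.
largest = (
    tuple(max(choices) for choices in DEPTH_CHOICES),
    tuple(max(choices) for choices in WIDTH_CHOICES),
)

if __name__ == "__main__":
    print(f"{len(all_subnets)} sub-networks in the toy design space")
    print("cost of the largest sub-network:", proxy_cost(*largest))
    print("cheapest candidates:", subnets_under_budget(30000)[:3])
```

Even this small example contains 144 configurations, which suggests why exhaustive manual exploration does not scale. A real search would train the super-network and rank sub-networks by measured accuracy and hardware metrics rather than by a formula like the one assumed here.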

To highlight the flexibility of our solution, our submission to the MLPerf v2.0 datacenter open division includes three models that demonstrate trade-offs between accuracy and performance. Our optimized models show significant performance gains with limited to no impact on accuracy by automatically generating models using BootstrapNAS.

MLPerf v2.0 Inference Submission Results

Our datacenter Open Division submission to MLPerf v2.0 leveraged BootstrapNAS to automatically discover equivalent models for the pre-trained Torchvision ResNet-50 model, targeting the recently launched 3rd Gen Intel® Xeon® Scalable processors (codenamed Ice Lake). We used an Ice Lake server with two Intel® Xeon® Platinum 8380 CPUs @ 2.30GHz, 40 cores per socket (80 cores total), 64GB DIMMs, and with hyper-threading and turbo mode enabled. Ice Lake delivers more compute and memory capacity/bandwidth than the previous generation (codenamed Cascade Lake). We measured both FP32[1,3] and INT8[2] performance for the server and offline scenarios of MLPerf. Our official submission includes only the INT8 performance comparison. All software used for this submission is available through the MLPerf v2.0 inference repository.

We used Torchvision's pre-trained ResNet-50 model as the input to BootstrapNAS to automatically produce high-performing equivalent models and leverage INT8 acceleration on the Ice Lake platform. The Top-1 accuracy of Torchvision’s ResNet-50 is 75.836% in FP32 and 75.714% in INT8. This accuracy was measured with the MLPerf framework and is slightly lower than the accuracy reported by Torchvision. The Top-1 accuracy of the generated models is summarized below in Table 1. To make the comparison easier, we normalized the measured accuracy of each model to the reported accuracy of the MLPerf Closed Division ResNet-50 reference model. The MLPerf ResNet-50 reference model for PyTorch has a Top-1 accuracy of 76.014% in FP32 and 75.79% in INT8.
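The normalization is a simple ratio of measured accuracy to reference accuracy. As a minimal sketch, assuming the result is expressed as a percentage rounded to two decimals (the function name `normalized_accuracy` is ours), the Torchvision baseline row of Table 1 can be reproduced from the figures quoted above:

```python
# Top-1 accuracies (%) quoted in the text.
REFERENCE = {"FP32": 76.014, "INT8": 75.79}     # MLPerf ResNet-50 reference model
TORCHVISION = {"FP32": 75.836, "INT8": 75.714}  # Torchvision baseline, measured with MLPerf


def normalized_accuracy(measured, reference):
    """Express a measured Top-1 accuracy as a percentage of the reference accuracy."""
    return round(100.0 * measured / reference, 2)


baseline_row = {
    precision: normalized_accuracy(TORCHVISION[precision], REFERENCE[precision])
    for precision in REFERENCE
}

if __name__ == "__main__":
    for precision, value in baseline_row.items():
        print(f"Torchvision baseline, {precision}: {value:.2f}% of reference")
```

A value above 100 in Table 1 therefore means the model exceeded the reference model's accuracy at that precision.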

| Models | % FP32 Ref. Acc. | % INT8 Ref. Acc. | Scenarios |
| --- | --- | --- | --- |
| Torchvision Baseline | 99.77 | 99.90 | - |
| BootstrapNAS A | 98.81 | 98.82 | Offline, Server |
| BootstrapNAS B | 100.32 | 100.50 | Offline, Server |
| BootstrapNAS C | 99.83 | 99.95 | Offline, Server |

Table 1. Comparison of BootstrapNAS models submitted to MLPerf v2.0 and Torchvision baseline model to MLPerf’s ResNet-50 reference model.

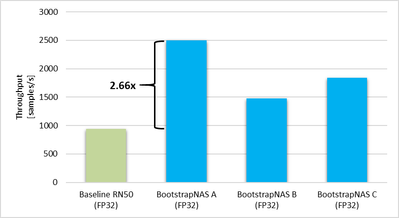

The BootstrapNAS software framework can improve throughput while staying within 1% of the Top-1 accuracy of the baseline model in FP32 precision, as shown in Figure 2. The BootstrapNAS-optimized FP32 models demonstrate a 1.57x to 2.66x throughput improvement.

Figure 2. Comparison of throughput, samples per second, to the Torchvision baseline model. The BootstrapNAS framework can achieve up to 2.66x improvement without quantization.

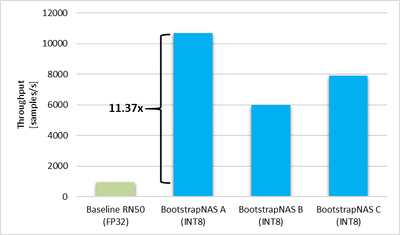

The models generated by BootstrapNAS provide additional improvements in throughput by using OpenVINO for quantization and optimizations. The quantized BootstrapNAS A model improves on the throughput of the pre-trained Torchvision ResNet-50 model (FP32) by 11.37x, as shown in Figure 3. Overall, throughput improvements range from 6.39x to 11.37x in comparison to Torchvision’s pre-trained FP32 ResNet-50 baseline model, while maintaining a Top-1 accuracy within 1%.

Figure 3. Comparison of throughput of our BootstrapNAS models to the FP32 Torchvision baseline model. The BootstrapNAS framework along with OpenVINO can achieve up to 11.37x improvement.
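The speedup figures quoted for Figures 2 and 3 follow directly from the offline throughput values reported in Table 2. The short sketch below recomputes them; the dictionary layout and the `speedup` helper are our own, and only the numbers come from the table.

```python
# Offline throughput in samples/s, copied from Table 2.
OFFLINE = {
    "Torchvision": {"FP32": 939.14, "INT8": 4181.64},
    "BootstrapNAS A": {"FP32": 2498.24, "INT8": 10679.00},
    "BootstrapNAS B": {"FP32": 1476.28, "INT8": 5999.10},
    "BootstrapNAS C": {"FP32": 1837.11, "INT8": 7916.33},
}


def speedup(model, precision, baseline_precision):
    """Throughput of `model` at `precision` relative to the Torchvision baseline."""
    baseline = OFFLINE["Torchvision"][baseline_precision]
    return round(OFFLINE[model][precision] / baseline, 2)


if __name__ == "__main__":
    for name in ("BootstrapNAS A", "BootstrapNAS B", "BootstrapNAS C"):
        print(
            f"{name}: "
            f"FP32 vs FP32 baseline {speedup(name, 'FP32', 'FP32')}x, "
            f"INT8 vs INT8 baseline {speedup(name, 'INT8', 'INT8')}x, "
            f"INT8 vs FP32 baseline {speedup(name, 'INT8', 'FP32')}x"
        )
```

The 11.37x figure compares an INT8 model against an FP32 baseline, so it combines the gain from architecture search with the gain from quantization. The 2.55x figure isolates the architecture-search gain at equal precision.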

In the MLPerf submission, we included our results for both the Offline and Server scenarios. The Offline scenario focuses on batch-processing applications: the system receives a single query that includes all samples and can process the data in any order. The Server scenario represents online server applications: queries of one sample each arrive following a Poisson distribution, and each must be answered within 15 milliseconds. For the Server scenario, we could not obtain valid MLPerf results for the FP32 baseline model, so that comparison is limited to INT8. A summary of our results is shown in Table 2. BootstrapNAS A provides the largest improvement in offline throughput, in terms of samples per second, with a 2.66x improvement in FP32 and a 2.55x improvement in INT8 compared to Torchvision’s ResNet-50 baseline model.
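To make the Server scenario's arrival pattern concrete, the following sketch generates Poisson arrivals and checks how many queries a single-worker queue would answer within a latency bound. It is not MLPerf LoadGen and does not reflect how the submission was measured. The arrival rate, service time, and single-worker FIFO model are hypothetical assumptions chosen only for illustration.

```python
import random


def poisson_arrivals(rate_qps, duration_s, seed=0):
    """Return arrival timestamps (seconds) of a Poisson process with mean rate rate_qps."""
    rng = random.Random(seed)
    t, arrivals = 0.0, []
    while True:
        t += rng.expovariate(rate_qps)  # exponential gaps give Poisson arrivals
        if t >= duration_s:
            return arrivals
        arrivals.append(t)


def fraction_within_bound(arrivals, service_time_s, bound_s):
    """Single-worker FIFO queue: fraction of queries completed within bound_s of arrival."""
    if not arrivals:
        return 1.0
    free_at, on_time = 0.0, 0
    for arrival in arrivals:
        start = max(arrival, free_at)      # wait if the worker is still busy
        free_at = start + service_time_s
        if free_at - arrival <= bound_s:
            on_time += 1
    return on_time / len(arrivals)


if __name__ == "__main__":
    # Hypothetical load: 100 queries/s for 10 s, 1 ms per query, 15 ms bound.
    arrivals = poisson_arrivals(rate_qps=100.0, duration_s=10.0)
    share = fraction_within_bound(arrivals, service_time_s=0.001, bound_s=0.015)
    print(f"{len(arrivals)} queries, {share:.1%} answered within 15 ms")
```

Because arrivals are bursty, a system can miss the bound during a burst even when its average throughput exceeds the arrival rate. This is one reason Server results in queries per second are typically lower than Offline results in samples per second.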

| Model | FP32 Server[1] [queries/s] | FP32 Offline[1] [samples/s] | INT8 Server[2] [queries/s] | INT8 Offline[2] [samples/s] |
| --- | --- | --- | --- | --- |
| Torchvision[3] | - | 939.14 | 3347.57 | 4181.64 |
| BootstrapNAS A | 2048.95 | 2498.24 | 8242.91 | 10679.00 |
| BootstrapNAS B | 849.98 | 1476.28 | 5041.17 | 5999.10 |
| BootstrapNAS C | 1250.37 | 1837.11 | 6597.48 | 7916.33 |

Table 2. Measured performance of Torchvision's pre-trained ResNet-50 and three BootstrapNAS models compiled with OpenVINO in FP32 and INT8.
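One way to read Tables 1 and 2 together is as an accuracy versus throughput trade-off. The sketch below pairs each model's normalized INT8 accuracy (Table 1) with its INT8 offline throughput (Table 2) and keeps only the models that no other model beats on both axes. This Pareto-style view is our own framing for illustration and is not described in the source as the procedure BootstrapNAS uses to select sub-networks.

```python
# (normalized INT8 accuracy in % from Table 1, INT8 offline samples/s from Table 2)
MODELS = {
    "Torchvision Baseline": (99.90, 4181.64),
    "BootstrapNAS A": (98.82, 10679.00),
    "BootstrapNAS B": (100.50, 5999.10),
    "BootstrapNAS C": (99.95, 7916.33),
}


def dominates(p, q):
    """True if point p is at least as good as q on both axes and differs from q."""
    return p[0] >= q[0] and p[1] >= q[1] and p != q


def pareto_front(models):
    """Names of models that are not dominated by any other model."""
    return sorted(
        name
        for name, point in models.items()
        if not any(dominates(other, point) for other in models.values())
    )


if __name__ == "__main__":
    print("Non-dominated models:", pareto_front(MODELS))
```

On these numbers the three BootstrapNAS variants are all non-dominated, while the Torchvision baseline is dominated (BootstrapNAS C is both more accurate and faster). This matches the intent of submitting three variants that cover different points on the trade-off.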

Looking Ahead

Our results demonstrate the tool’s ability to help developers keep their existing training pipelines while improving the performance of their AI models on Intel’s platform. In the near future, we plan to release BootstrapNAS capabilities as part of Intel’s OpenVINO toolchain, allowing developers to combine them with other sophisticated model compression techniques such as pruning, quantization, and distillation.

Notices & Disclaimers

Performance varies by use, configuration and other factors. Learn more at www.Intel.com/PerformanceIndex.

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details. No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

References

[1] Unverified MLPerf™ v2.0 Inference Open ResNet-v1.5 Offline and Server. BootstrapNAS FP32 Result not verified by MLCommons Association.

[2] MLPerf™ v2.0 Inference Open ResNet-v1.5 Offline and Server, entry 2.0-155, 2.0-156, and 2.0-157; Retrieved from mlcommons.org/en/inference-datacenter-20 6 April 2022

[3] Unverified MLPerf™ v2.0 Inference Closed ResNet-v1.5 Server and Offline. Torchvision Baseline Result not verified by MLCommons Association.

Configuration details: Intel Ice Lake 40 Core

Result verified by MLCommons Association. The MLPerf™ name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use strictly prohibited. See www.mlcommons.org for more information.
