Introduction

Some deep learning models require additional contextual information to make valid predictions. This contextual information is typically collected from past predictions and stored in the model memory as internal model state. Models for speech recognition and time series forecasting take advantage of this context, where predictions for the next set of data depend not only on the model input, but also on the inference from previous sets of data.

Models that have internal memory mechanisms to hold state between inferences are known as stateful models. Starting with the 2021.3 release of OpenVINO™ Model Server, developers can now take advantage of this class of models. In this article, we describe how to deploy stateful models and provide an end-to-end example for speech recognition.

What is OpenVINO™ Model Server?

OpenVINO™ Model Server is a scalable, high-performance solution for serving machine learning models optimized for Intel® architectures. The server provides an inference service via gRPC or REST API – making it easy to deploy deep learning models at scale.

With OpenVINO™ Model Server, you don’t need to write custom inference code for many of your applications. Simply use the same standard API to infer any model, from any application, regardless of the execution framework, hardware, or deployment size. Model Server exposes a network API, enabling centralized management of deployed models. The models can be loaded and deployed into a stand-alone service, so that your applications can request inference remotely without needing to load models into local memory to make predictions.

For a complete list of features, see the official documentation.

Stateful vs. Stateless Models

Stateful models use prior information acquired by the model from previous inference requests, while stateless models do not leverage or store this memory. This “memory” represents the state of the model, which is why they are known as stateful.

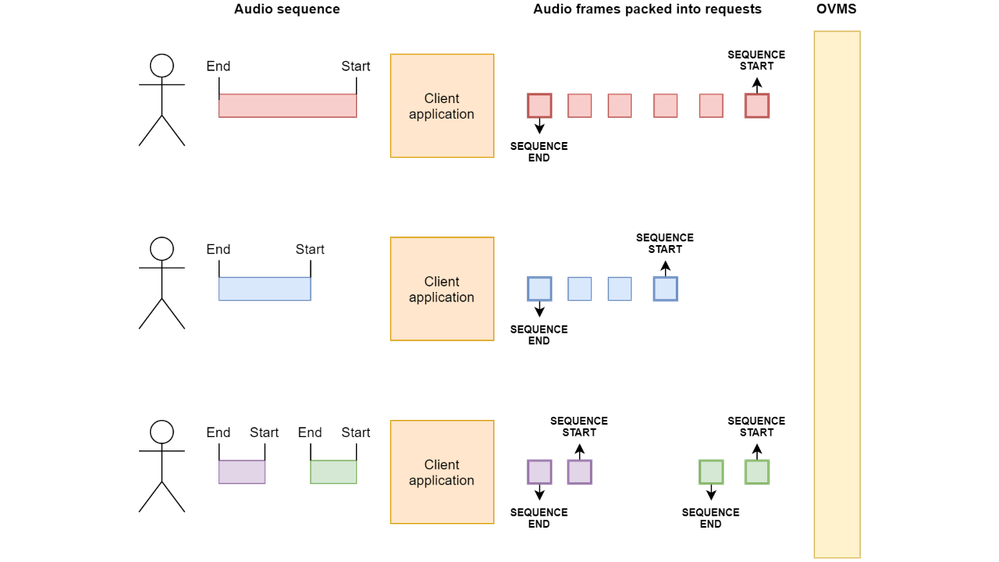

The order in which sets of data are inferred determines the state of the model. Because of this, requests to stateful models are sent in sequences. The model will maintain and update state for each sequence in order to produce responses with the correct results for each request.

Deploy Stateful Models

Deploying a stateful model in OpenVINO™ Model Server is similar to deploying a stateless model. For both types of models, you need to provide a path to the model, so the server knows where to find it, and a name to identify it. When deploying a stateful model, you also need to set the stateful flag in the model configuration so that the model server treats the model as stateful.

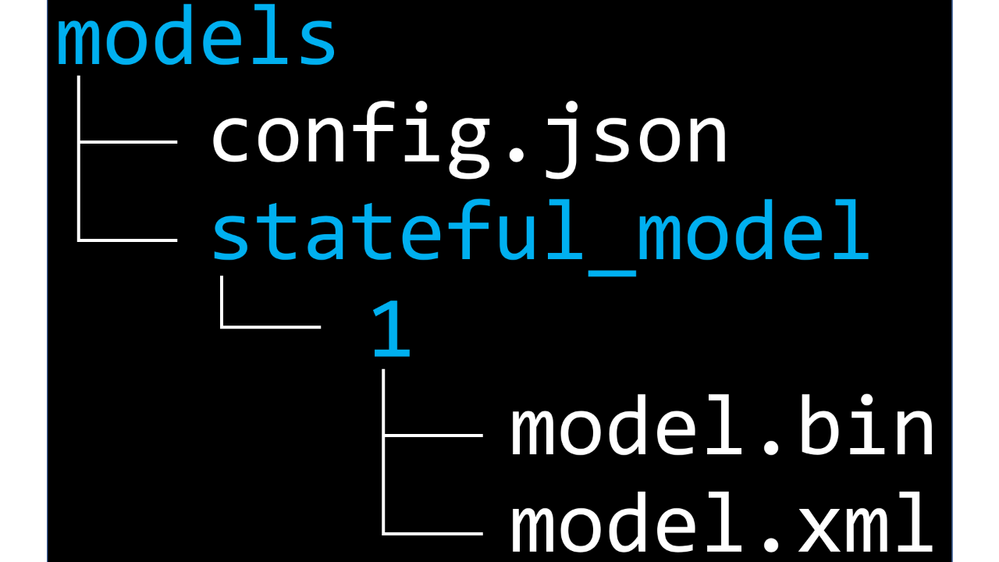

Let’s assume you have the following directory structure:

The command to run the model server with a single stateful model named stateful_model, saved in a local model repository, looks like this:

docker run -d -u $(id -u):$(id -g) -v <host_models_dir>:/models -p 9000:9000 openvino/model_server:latest \
--port 9000 --model_path /models/stateful_model --model_name stateful_model \
--stateful

Alternatively, the model can be defined using a configuration file named config.json saved in the model repository, like this:

{
    "model_config_list": [
        {
            "config": {
                "name": "stateful_model",
                "base_path": "/models/stateful_model",
                "stateful": true
            }
        }
    ]
}

The command to start the model server with this configuration file would be:

docker run -d -u $(id -u):$(id -g) -v <host_models_dir>:/models -p 9000:9000 openvino/model_server:latest \
--port 9000 --config_path /models/config.json

When the stateful flag is set, three optional model parameters are available:

- max_sequence_number

- idle_sequence_cleanup

- low_latency_transformation

Model Server can process many sequences of requests in parallel. To limit the number of concurrently processed sequences and reduce memory consumption, set the max_sequence_number parameter to the highest number of sequences the model should process at a time. Once this number is reached, the server rejects any attempt to start a new sequence.

To infer a sequence of requests, the user must explicitly start the sequence with the first request and end it with the last one. On the start signal, the model server allocates resources for the sequence; on the end signal, it frees them. Sometimes the server never receives the end signal, for example when a dropped network connection prevents the user from finishing the sequence gracefully. In this case, the model server frees the resources allocated for the sequence once it detects that the sequence is inactive. This behavior is configured per model with the idle_sequence_cleanup flag. If it is set, the model is subject to periodic idle sequence cleanup performed by the model server. Otherwise, idle sequences exist until they are terminated manually. The interval between two consecutive scans of the cleaning procedure applies to all models and is set with the command-line argument sequence_cleaner_poll_wait_minutes. If no inference request belonging to a given sequence arrives between two consecutive scans, the sequence is removed and its resources are freed.
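The sequence limit and the idle cleanup described above can be pictured with a small simulation in plain Python. This is only a sketch of the described behavior, not the server's implementation: the `SequenceRegistry` class and its method names are hypothetical, and a "scan" here is a manual call rather than a timer.

```python
class SequenceRegistry:
    """Toy model of per-model sequence bookkeeping (not the server's code)."""

    def __init__(self, max_sequence_number):
        self.max_sequence_number = max_sequence_number
        self._active = {}  # sequence id -> True if used since the last scan

    def start(self, sequence_id):
        # Starting a sequence beyond the configured limit is rejected.
        if len(self._active) >= self.max_sequence_number:
            raise RuntimeError("sequence limit reached, new sequence rejected")
        self._active[sequence_id] = True

    def touch(self, sequence_id):
        # Any inference request in the sequence marks it as active.
        self._active[sequence_id] = True

    def end(self, sequence_id):
        # The end signal frees the sequence immediately.
        del self._active[sequence_id]

    def cleanup_scan(self):
        """Remove sequences with no requests since the previous scan."""
        idle = [sid for sid, used in self._active.items() if not used]
        for sid in idle:
            del self._active[sid]
        # Reset the marker for the sequences that survive this scan.
        for sid in self._active:
            self._active[sid] = False
        return idle

    def active_ids(self):
        return sorted(self._active)


registry = SequenceRegistry(max_sequence_number=2)
registry.start(10)
registry.start(11)
try:
    registry.start(12)
    rejected = False
except RuntimeError:
    rejected = True

first_scan = registry.cleanup_scan()   # both sequences were just used
registry.touch(10)                     # only sequence 10 stays active
second_scan = registry.cleanup_scan()  # sequence 11 is idle and removed
```

In this toy run, the third sequence is rejected because the limit is two, and sequence 11 disappears on the second scan because it received no requests between the two scans.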

The remaining parameter, low_latency_transformation, is a flag that determines whether the model server should perform the LowLatency transformation on the network when the model is loaded. The LowLatency transformation is described in more detail in the section below.
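As a rough illustration, a configuration file that sets all three optional parameters could be generated as follows. The sketch assumes that the parameters are placed next to "stateful" in the "config" object, and the values chosen here are arbitrary examples; check the official documentation for the exact schema and defaults.

```python
import json

config = {
    "model_config_list": [
        {
            "config": {
                "name": "stateful_model",
                "base_path": "/models/stateful_model",
                "stateful": True,
                # Optional parameters; the values below are illustrative
                # assumptions, not recommended settings.
                "max_sequence_number": 100,
                "idle_sequence_cleanup": True,
                "low_latency_transformation": False,
            }
        }
    ]
}

# Serialize to the text that would be saved as config.json.
config_json = json.dumps(config, indent=4)
print(config_json)
```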

Model Topology Considerations

To maintain model memory state between inference requests, the OpenVINO™ toolkit uses the ReadValue and Assign layers. Sometimes, after importing a model with RNN operations, the Intermediate Representation (IR) contains a TensorIterator layer instead of ReadValue and Assign layers. A model with TensorIterator processes the whole sequence in a single inference request: the network expects to get all data from the input and iterates over its chunks internally. Such models do not provide intermediate results for each element in the sequence; they output only the final result once the entire input is processed. This may be undesirable if any of the following is true:

- You don’t know the sequence size in advance

- Your use-case requires real-time processing with low latency

- You want results for each element in the sequence

If so, you can change the network structure to include ReadValue and Assign layers instead of TensorIterator. This can be accomplished with the LowLatency transformation, which gives the model the ability to maintain state between inference requests, making it a better fit for streaming-like scenarios that require instant predictions with low latency for each element in the sequence.
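The difference between the two topologies can be pictured with a toy example in plain Python. It does not use OpenVINO: `process_whole_sequence`, `StreamingCell` and the simple decaying-sum update are illustrative assumptions that stand in for a recurrent model.

```python
def process_whole_sequence(chunks):
    """TensorIterator-like: consumes the full sequence, returns only the final result."""
    state = 0.0
    for chunk in chunks:
        state = 0.5 * state + chunk
    return state


class StreamingCell:
    """ReadValue/Assign-like: one chunk per call, state kept between calls."""

    def __init__(self):
        self.state = 0.0  # read at the start of each call, assigned at the end

    def infer(self, chunk):
        self.state = 0.5 * self.state + chunk
        return self.state


chunks = [1.0, 2.0, 4.0]

# Whole-sequence processing needs every chunk up front.
final_only = process_whole_sequence(chunks)

# Streaming processing yields a result after each chunk.
cell = StreamingCell()
per_chunk = [cell.infer(c) for c in chunks]
```

Both variants arrive at the same final value, but only the streaming one produces a result per element and works when the sequence length is not known in advance.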

You can learn more about LowLatency transformation in the OpenVINO documentation.

Sending Inference Requests to Stateful Models

Similar to stateless models, the server executes inference requests sent to the gRPC or REST API. However, requests sent to stateful models require additional context. First, the server needs to know with which sequence to associate each request. Second, there must be a way to tell the server when the sequence starts and when it ends in order to manage resources effectively. This extra information is passed using additional inputs in each inference request:

- sequence_id: a positive number (within the uint64 range) that identifies the sequence in the scope of a model instance

- sequence_control_input: indicates the start or end of the sequence. It can take one of three values:

  - 0: has no effect, equivalent to not setting this input at all

  - 1: indicates the start of the sequence

  - 2: indicates the end of the sequence

Those additional inputs are included in the request body among the model inputs, but they are entirely consumed by the model server logic and do not participate in inference on the model.

Both sequence_id and sequence_control_input must be provided as single-element tensors (shape: [1]) with the appropriate precision. The Python example below uses uint64 for sequence_id and uint32 for sequence_control_input.

sequence_id is also included in the outputs of every inference response coming from a stateful model.

In order to successfully infer the sequence, perform these actions:

1. Send the first request in the sequence and signal sequence start.

To start the sequence, you need to add sequence_control_input with value of 1 to your request's inputs. You can also:

- add sequence_id with the value of your choice or

- add sequence_id with the value 0, or do not add sequence_id at all. In this case, the model server assigns a unique id to the sequence. Because the id is appended to the outputs, you can read it and use it in subsequent requests.

2. Send remaining requests except the last one.

To send requests in the middle of the sequence you need to add sequence_id of your sequence.

In this case sequence_control_input must be empty or 0.

3. Send the last request in the sequence and signal sequence end.

To end the sequence, you need to add sequence_control_input with the value of 2 to your request's inputs. You also need to add sequence_id of your sequence.
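The three steps above can be summarized in a small helper that returns the special inputs for a request depending on its position in the sequence. This is a sketch in plain Python: `special_inputs` and the position labels are hypothetical names, and the values are returned as plain lists rather than tensors.

```python
SEQUENCE_START = 1
SEQUENCE_END = 2
UINT64_MAX = 2**64 - 1


def special_inputs(position, sequence_id=None):
    """Return the extra inputs for a request at 'first', 'middle' or 'last' position."""
    inputs = {}
    if sequence_id is not None:
        if not 0 < sequence_id <= UINT64_MAX:
            raise ValueError("sequence_id must be a positive uint64 value")
        inputs["sequence_id"] = [sequence_id]  # 1-element array, shape [1]
    if position == "first":
        # sequence_id may be omitted here; the server then assigns one.
        inputs["sequence_control_input"] = [SEQUENCE_START]
    elif position in ("middle", "last"):
        if sequence_id is None:
            raise ValueError("sequence_id is required after the first request")
        if position == "last":
            inputs["sequence_control_input"] = [SEQUENCE_END]
    else:
        raise ValueError(f"unknown position: {position!r}")
    return inputs
```

For example, `special_inputs("middle", 10)` contains only sequence_id, which matches step 2, where sequence_control_input is left empty.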

To send a sequence via gRPC with Python, first import the necessary modules. For basic processing, use the following:

import grpc

from tensorflow_serving.apis import prediction_service_pb2_grpc, predict_pb2

from tensorflow import make_tensor_proto, make_ndarray

Then set variables for the values which indicate sequence start, end and id:

SEQUENCE_START = 1

SEQUENCE_END = 2

sequence_id = 10

Assuming the server runs on localhost at port 9000, establish a connection like so:

channel = grpc.insecure_channel("localhost:9000")

stub = prediction_service_pb2_grpc.PredictionServiceStub(channel)

Then send the first request to initiate the sequence.

Create request object:

first_request = predict_pb2.PredictRequest()

Set the name of the model that will receive the request. It must match the name of the model served by the server. In this example, the model name is set to "stateful_model":

first_request.model_spec.name = "stateful_model"

Set the input for inference data. The names of the inputs for inference data are model specific. In this example, we assume the model has one input called "data" and the variable passed to make_tensor_proto function is a numpy array filled with inference data:

first_request.inputs['data'].CopyFrom(make_tensor_proto(data))

Since this is the first request in the sequence, set special input sequence_control_input to SEQUENCE_START value to indicate the beginning of the sequence:

first_request.inputs['sequence_control_input'].CopyFrom(

make_tensor_proto([SEQUENCE_START], dtype="uint32"))

Set another special input sequence_id to associate the sequence with the identifier:

first_request.inputs['sequence_id'].CopyFrom(

make_tensor_proto([sequence_id], dtype="uint64"))

Send a request and receive a response with the inference results:

response = stub.Predict(first_request, 10.0)

The sequence with the provided sequence id is now initiated and recognized by the server. You can read the inference results from the response as numpy arrays. The names of the outputs are model specific. In this example, we assume the model has one output called prediction:

result = make_ndarray(response.outputs['prediction'])

When the first request in the sequence is processed, you can start sending intermediate requests, with sequence_id used with the first request, but without sequence_control_input:

request = predict_pb2.PredictRequest()

request.model_spec.name = "stateful_model"

request.inputs['data'].CopyFrom(make_tensor_proto(data))

request.inputs['sequence_id'].CopyFrom(

make_tensor_proto([sequence_id], dtype="uint64"))

response = stub.Predict(request, 10.0)

result = make_ndarray(response.outputs['prediction'])

To finish the sequence, send a request like the first one, but with sequence_control_input set to SEQUENCE_END:

last_request = predict_pb2.PredictRequest()

last_request.model_spec.name = "stateful_model"

last_request.inputs['data'].CopyFrom(make_tensor_proto(data))

last_request.inputs['sequence_control_input'].CopyFrom(

make_tensor_proto([SEQUENCE_END], dtype="uint32"))

last_request.inputs['sequence_id'].CopyFrom(

make_tensor_proto([sequence_id], dtype="uint64"))

response = stub.Predict(last_request, 10.0)

result = make_ndarray(response.outputs['prediction'])
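The three kinds of requests shown above are often wrapped in a single loop. The sketch below shows one possible shape for such a loop, including the case where the server assigns the sequence id. To keep it runnable without a deployment, the transport is a hypothetical `send` callable and `fake_send` imitates the server; in a real client, `send` would build a PredictRequest and call stub.Predict as shown earlier.

```python
SEQUENCE_START = 1
SEQUENCE_END = 2


def run_sequence(chunks, send, sequence_id=0):
    """Send chunks as one sequence; `send` is a hypothetical transport callable.

    `send(data, sequence_id, control)` must return (result, sequence_id),
    mirroring the sequence_id that the server includes in every response.
    """
    if len(chunks) < 2:
        # Assumption of this sketch: one control value per request cannot
        # signal both start and end, so at least two requests are needed.
        raise ValueError("this sketch expects at least two chunks")
    results = []
    last = len(chunks) - 1
    for index, chunk in enumerate(chunks):
        if index == 0:
            control = SEQUENCE_START
        elif index == last:
            control = SEQUENCE_END
        else:
            control = 0  # no effect, same as omitting the input
        # Reuse the id from the previous response for the next request.
        result, sequence_id = send(chunk, sequence_id, control)
        results.append(result)
    return sequence_id, results


def fake_send(data, sequence_id, control):
    """Stand-in for the server so the loop can run without a deployment."""
    assigned = sequence_id if sequence_id != 0 else 42  # server picks an id
    fake_send.log.append((assigned, control))
    return data * 2, assigned


fake_send.log = []
used_id, outputs = run_sequence([1, 2, 3], fake_send)
```

Passing sequence_id=0 on the first request lets the (fake) server choose the id, which the loop then reads from the response and reuses for the remaining requests.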

See also: https://github.com/openvinotoolkit/model_server/blob/main/docs/stateful_models.md#stateful_inference for more details on inference on stateful models.

Speech recognition with ASpIRE Chain Time Delay Neural Network

A common use case that leverages stateful models is speech recognition. In this section we describe how to use the ASpIRE Chain TDNN model, deployed with OpenVINO™ Model Server, to get text predictions from a speech sample audio file.

Before we start, prepare a temporary asr_demo directory with two subdirectories on your host machine: workspace, and models.

export WORKSPACE_DIR=$HOME/asr_demo/workspace

export MODELS_DIR=$HOME/asr_demo/models

mkdir -p $WORKSPACE_DIR $MODELS_DIR

To prepare and then serve the model, you will need an OpenVINO™ Model Server container image and a container image with the OpenVINO™ toolkit developer tools. You can pull both images from Docker Hub:

docker pull openvino/model_server

docker pull openvino/ubuntu18_dev

Once the model is deployed, you will need a client to prepare the data, send it, and decode the results. A separate container with all the required dependencies can serve as the client. Such a container needs to include Kaldi, the compiled ASpIRE TDNN decoding graph, and the installed client dependencies. The container image can be built by modifying the Dockerfile provided by Kaldi:

cd $WORKSPACE_DIR

wget https://raw.githubusercontent.com/kaldi-asr/kaldi/e28927fd17b22318e73faf2cf903a7566fa1b724/docker/debian10-cpu/Dockerfile

sed -i 's|RUN git clone --depth 1 https://github.com/kaldi-asr/kaldi.git /opt/kaldi #EOL|RUN git clone https://github.com/kaldi-asr/kaldi.git /opt/kaldi \&\& cd /opt/kaldi \&\& git checkout e28927fd17b22318e73faf2cf903a7566fa1b724|' Dockerfile

sed -i '$d' Dockerfile

echo '
RUN cd /opt/kaldi/egs/aspire/s5 && \
    wget https://kaldi-asr.org/models/1/0001_aspire_chain_model_with_hclg.tar.bz2 && \
    tar -xvf 0001_aspire_chain_model_with_hclg.tar.bz2 && \
    rm -f 0001_aspire_chain_model_with_hclg.tar.bz2

RUN apt-get install -y virtualenv

RUN git clone https://github.com/openvinotoolkit/model_server.git /opt/model_server && \
    cd /opt/model_server && \
    virtualenv -p python3 .venv && \
    . .venv/bin/activate && \
    pip install tensorflow-serving-api==2.* kaldi-python-io==1.2.1 && \
    echo "source /opt/model_server/.venv/bin/activate" | tee -a /root/.bashrc && \
    mkdir /opt/workspace

WORKDIR /opt/workspace/
' >> Dockerfile

docker build -t kaldi:latest .

Once the container image is built, download the speech recognition model and unpack it in the workspace directory:

cd $WORKSPACE_DIR

wget https://kaldi-asr.org/models/1/0001_aspire_chain_model.tar.gz

mkdir aspire_kaldi

tar -xvf 0001_aspire_chain_model.tar.gz -C $WORKSPACE_DIR/aspire_kaldi

Then convert the model to IR format using the OpenVINO™ development container with the workspace directory mounted:

docker run --rm -it -u 0 -v $WORKSPACE_DIR:/opt/workspace openvino/ubuntu18_dev python3 /opt/intel/openvino_2021/deployment_tools/model_optimizer/mo_kaldi.py --input_model /opt/workspace/aspire_kaldi/exp/chain/tdnn_7b/final.mdl --output output --output_dir /opt/workspace

After the Model Optimizer tool successfully converts the model, an .xml and .bin file will appear in your workspace directory. These files make up the Intermediate Representation (IR).

Now that you have the IR, prepare the model repository where the server can find your models. Inside the models directory, create a subdirectory called “aspire” and then a numerical subdirectory “1” which indicates the model version.

mkdir -p $MODELS_DIR/aspire/1

Once you have these directories created, copy the model files that were generated in the model conversion process (final.xml and final.bin) to the model version directory:

cp $WORKSPACE_DIR/final.xml $WORKSPACE_DIR/final.bin $MODELS_DIR/aspire/1
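Before launching the server, it can help to confirm that the repository has the expected layout. The following sketch checks only what this article describes (a numeric version directory containing an .xml and a .bin file); `find_model_versions` is a hypothetical helper, not part of Model Server, and the server's own validation may differ. The example builds a throwaway repository in a temporary directory so that it can run anywhere.

```python
import tempfile
from pathlib import Path


def find_model_versions(model_dir):
    """Return numeric version directories that hold both an .xml and a .bin file."""
    versions = []
    for entry in Path(model_dir).iterdir():
        # Only numerically named subdirectories count as versions here.
        if not (entry.is_dir() and entry.name.isdigit()):
            continue
        has_xml = any(entry.glob("*.xml"))
        has_bin = any(entry.glob("*.bin"))
        if has_xml and has_bin:
            versions.append(int(entry.name))
    return sorted(versions)


# Build a throwaway repository that mirrors the layout described above.
with tempfile.TemporaryDirectory() as models_dir:
    version_dir = Path(models_dir) / "aspire" / "1"
    version_dir.mkdir(parents=True)
    (version_dir / "final.xml").touch()
    (version_dir / "final.bin").touch()
    found = find_model_versions(Path(models_dir) / "aspire")
```

On the host, the same function could be pointed at the aspire directory inside the models directory created earlier.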

With the models in place, launch OpenVINO™ Model Server:

docker run --rm -d -p 9000:9000 -v $MODELS_DIR:/opt/models openvino/model_server:latest --model_name aspire --model_path /opt/models/aspire --port 9000 --stateful

With the server running in the background, start the Kaldi client container in interactive mode:

docker run --rm -it --network="host" kaldi:latest bash

When the container starts, you should see that the working directory is /opt/workspace and that the .venv with the Model Server Python client dependencies is activated.

In the client container, download sample audio for speech recognition:

wget https://github.com/openvinotoolkit/model_server/raw/main/example_client/stateful/asr_demo/sample.wav

Then run the speech recognition script:

/opt/model_server/example_client/stateful/asr_demo/run.sh /opt/workspace/sample.wav localhost 9000

The run.sh script takes three arguments: the absolute path to the .wav file, the server address, and the port on which the server is listening. First, the MFCC features and ivectors are extracted. They are then sent to the server via the grpc_stateful_client. Finally, the results are decoded and parsed into text.

After the command executes successfully, you will see the .txt file in the same directory as the .wav file:

cat /opt/workspace/sample.wav.txt

/opt/workspace/sample.wav today we have a very nice weather

The workflow above, along with an additional step for capturing speech from a microphone, can be found inside the OpenVINO™ Model Server repository.

Conclusion

Starting with the 2021.3 release of OpenVINO™ Model Server, developers can now take advantage of an additional class of models known as stateful models. For tasks like speech recognition, time series and market prediction, it is now possible to deploy models that analyze streams of data and make predictions based on historical data. For more details about deploying stateful models see:

https://github.com/openvinotoolkit/model_server/blob/main/docs/stateful_models.md

Notices & Disclaimers

Performance varies by use, configuration and other factors. Learn more at www.Intel.com/PerformanceIndex.

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details. No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

Intel does not control or audit third-party data. You should consult other sources to evaluate accuracy.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

Mary is the Community Manager for this site. She likes to bike, and do college and career coaching for high school students in her spare time.
