Author: Ragesh Hajela

Open Model Zoo provides a set of public and Intel pre-trained models. Public models are identified by standard names such as MobileNet V3, whereas Intel models have more descriptive names that end with a four-digit version number, for example asl-recognition-0004 (which uses MobileNet V3).
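The naming convention above can be checked with a short pattern match. This is only a heuristic sketch: the function names are hypothetical, and it assumes that a trailing hyphen plus four digits is enough to recognize an Intel-style name, which may not hold for every model in the zoo.

```python
import re

# Assumption: an Intel-style name ends with "-" followed by exactly four digits.
_VERSION_SUFFIX = re.compile(r"-(\d{4})$")


def looks_like_intel_model(name: str) -> bool:
    """Return True if the name ends with a four-digit version suffix."""
    return _VERSION_SUFFIX.search(name) is not None


def split_version(name: str):
    """Split 'asl-recognition-0004' into ('asl-recognition', '0004').

    Returns (name, None) when no version suffix is present.
    """
    match = _VERSION_SUFFIX.search(name)
    if match is None:
        return name, None
    return name[: match.start()], match.group(1)
```

For example, `split_version("asl-recognition-0004")` separates the descriptive part of the name from its version number.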

Model Converter reads the model.yml file and converts a public model into the Inference Engine IR format (*.xml and *.bin files) using Model Optimizer, whereas Intel models are already provided as IR files. The Intermediate Representation (IR) of the network can then be used for inference with the Inference Engine, which is part of the OpenVINO™ toolkit for optimizing and deploying AI inference.

Based on the number of IR files included, there are two types of models available:

1. Models with a single pair of IR files, e.g. asl-recognition-0004

name: FP32/asl-recognition-0004.xml

name: FP32/asl-recognition-0004.bin

2. Models with more than one pair of IR files, e.g. action-recognition-0001

name: FP32/action-recognition-0001-decoder.xml

name: FP32/action-recognition-0001-decoder.bin

name: FP32/action-recognition-0001-encoder.xml

name: FP32/action-recognition-0001-encoder.bin

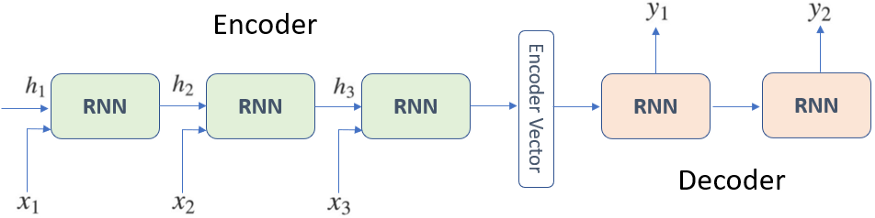
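The two cases can be told apart by grouping the listed file names into complete .xml/.bin pairs. The following is a minimal sketch using only the file names shown above; the helper names are hypothetical and not part of Open Model Zoo.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def group_ir_pairs(file_names):
    """Return the sorted IR paths (without extension) that have both .xml and .bin."""
    found = defaultdict(set)
    for name in file_names:
        path = PurePosixPath(name)
        if path.suffix in {".xml", ".bin"}:
            # Key on directory + stem so different precisions stay separate.
            found[str(path.with_suffix(""))].add(path.suffix)
    return sorted(key for key, exts in found.items() if exts == {".xml", ".bin"})


def is_multi_part(file_names):
    """True when a model ships more than one complete pair of IR files."""
    return len(group_ir_pairs(file_names)) > 1


single = ["FP32/asl-recognition-0004.xml", "FP32/asl-recognition-0004.bin"]
multi = [
    "FP32/action-recognition-0001-decoder.xml",
    "FP32/action-recognition-0001-decoder.bin",
    "FP32/action-recognition-0001-encoder.xml",
    "FP32/action-recognition-0001-encoder.bin",
]
print(is_multi_part(single), is_multi_part(multi))  # False True
```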

Models with separate encoder and decoder IR files are encoder-decoder networks, also called sequence-to-sequence (seq2seq) networks, an architecture that can be implemented with RNNs, LSTMs, or Transformers. This architecture maps an input sequence to an output sequence whose length may differ from that of the input. It is popular for tasks such as action recognition, machine translation, and video or image captioning.

omz_downloader, which is a command-line tool from the openvino-dev package, automatically creates a directory structure and downloads the selected model.

import os

# Directory where model will be downloaded
base_model_dir = "model"

# Model name as named in Open Model Zoo
model_name = "action-recognition-0001"

# Selected precision (FP32, FP16, FP16-INT8)
precision = "FP16"

model_path_decoder = (
    f"{base_model_dir}/intel/{model_name}/{model_name}-decoder/{precision}/{model_name}-decoder.xml"
)
model_path_encoder = (
    f"{base_model_dir}/intel/{model_name}/{model_name}-encoder/{precision}/{model_name}-encoder.xml"
)

# Download the model only if the IR files are not already present
if not os.path.exists(model_path_decoder) or not os.path.exists(model_path_encoder):
    download_command = f"omz_downloader " \
                       f"--name {model_name} " \
                       f"--precision {precision} " \
                       f"--output_dir {base_model_dir}"
    ! $download_command

Encoder and Decoder initialization

Next, we will load and initialize the two models for this particular architecture, Encoder and Decoder. You can try action recognition yourself with this demo.

from typing import Tuple

from openvino.runtime import Core
from openvino.runtime.ie_api import CompiledModel

# Initialize inference engine
ie_core = Core()


def model_init(model_path: str) -> Tuple:
    """
    Read the network and weights from file, load the
    model on the CPU and get input and output names of nodes

    :param model_path: model architecture path *.xml
    :returns:
        input_key: Input node for model
        output_key: Output node for model
        compiled_model: Compiled model
    """
    # Read the network and corresponding weights from file
    model = ie_core.read_model(model=model_path)
    # Compile the model for the CPU (you can use GPU or MYRIAD as well)
    compiled_model = ie_core.compile_model(model=model, device_name="CPU")
    # Get input and output names of nodes
    input_keys = compiled_model.input(0)
    output_keys = compiled_model.output(0)
    return input_keys, output_keys, compiled_model


# Encoder initialization
input_key_en, output_keys_en, compiled_model_en = model_init(model_path_encoder)
# Decoder initialization
input_key_de, output_keys_de, compiled_model_de = model_init(model_path_decoder)
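Once both models are initialized, the usual flow is to pass each frame through the encoder, buffer the results, and hand a window of them to the decoder. The sketch below shows only that control flow with plain Python callables, so it runs without OpenVINO; the function name, the toy stand-ins, and the window length are assumptions, and the real window should be taken from the decoder's input shape.

```python
from collections import deque
from typing import Any, Callable, Iterable, Iterator


def run_encoder_decoder(
    frames: Iterable[Any],
    encode: Callable[[Any], Any],
    decode: Callable[[list], Any],
    window: int,
) -> Iterator[Any]:
    """Yield one decoder result for every full window of encoder outputs."""
    embeddings = deque(maxlen=window)  # the oldest embedding drops out automatically
    for frame in frames:
        embeddings.append(encode(frame))
        if len(embeddings) == window:
            yield decode(list(embeddings))


# Toy stand-ins for the two networks (not real models).
results = list(
    run_encoder_decoder([1, 2, 3, 4], encode=lambda f: f * 2, decode=sum, window=3)
)
print(results)  # [12, 18]
```

In the notebook, `encode` could wrap the compiled encoder, for example `lambda frame: compiled_model_en([frame])[output_keys_en]`, and `decode` could wrap `compiled_model_de` in the same way after the buffered embeddings are stacked into the decoder's input shape. Frame preprocessing is left out here and would need to follow the model's documentation.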

Alternatively, the Model Downloader module can also be used to download pre-converted IR files.

1. Download all relevant IR files, including the encoder and decoder models, if they exist.

!omz_downloader --name action-recognition-0001 -o raw_model

2. Download the encoder and decoder IR files separately, using the model names as listed in Open Model Zoo.

!omz_downloader --name action-recognition-0001-encoder -o raw_model

!omz_downloader --name action-recognition-0001-decoder -o raw_model
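The `!` prefix works only inside a Jupyter or IPython session. In a plain Python script, the same commands could be launched with `subprocess`, as in this sketch. The helper names are hypothetical, and the flags are limited to the ones that appear in this article (`--name`, `--precision`, `--output_dir`).

```python
import shlex
import subprocess
from typing import List, Optional


def build_omz_command(
    model_name: str, output_dir: str, precision: Optional[str] = None
) -> List[str]:
    """Build the omz_downloader argument list without running it."""
    command = ["omz_downloader", "--name", model_name, "--output_dir", output_dir]
    if precision is not None:
        command += ["--precision", precision]
    return command


def download_model(
    model_name: str, output_dir: str, precision: Optional[str] = None
) -> None:
    """Run omz_downloader; raises CalledProcessError if the tool fails."""
    command = build_omz_command(model_name, output_dir, precision)
    print("Running:", shlex.join(command))
    subprocess.run(command, check=True)
```

Calling `download_model("action-recognition-0001", "raw_model")` would then correspond to the first command above, provided the openvino-dev package is installed and `omz_downloader` is on the PATH.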

As an additional utility, download_file downloads any data file and saves it to the local filesystem. For example, the 210-ct-scan-live-inference demo uses it to download the validation video for live inference.

from notebook_utils import download_file

filename = download_file(
    f"https://storage.openvinotoolkit.org/data/test_data/openvino_notebooks/kits19/case_{117:05d}.zip"
)
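For readers without access to notebook_utils, a helper of this kind can be approximated with the standard library. The sketch below is not the notebook_utils implementation; `filename_from_url` and `fetch` are hypothetical names, and skipping files that already exist is a design choice made here for illustration.

```python
import urllib.request
from pathlib import Path, PurePosixPath
from urllib.parse import urlparse


def filename_from_url(url: str) -> str:
    """Take the last path component of the URL as the local file name."""
    return PurePosixPath(urlparse(url).path).name


def fetch(url: str, directory: str = ".") -> Path:
    """Download url into directory unless the file is already there."""
    target = Path(directory) / filename_from_url(url)
    if not target.exists():
        target.parent.mkdir(parents=True, exist_ok=True)
        urllib.request.urlretrieve(url, str(target))
    return target


# The format spec {117:05d} zero-pads the case number to five digits.
url = f"https://storage.openvinotoolkit.org/data/test_data/openvino_notebooks/kits19/case_{117:05d}.zip"
print(filename_from_url(url))  # case_00117.zip
```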

Notices & Disclaimers

Intel technologies may require enabled hardware, software or service activation.

No product or component can be absolutely secure.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
