Published August 9th, 2021

Jose Lopez is an AI/ML researcher at Intel Labs. Here, he explores the use of machine learning for audio understanding and industrial predictive maintenance.

Highlights:

- Intel Labs wins first place in the IEEE Audio and Acoustic Signal Processing Society (AASP) Challenge on Detection and Classification of Acoustic Scenes and Events (DCASE2021), Task 2.

- Research in anomalous sound detection aims to improve semiconductor manufacturing production for both Intel and its partners by using artificial intelligence (AI) and audio detection to monitor machine condition and health.

Intel® operates six wafer fabrication (fab) sites and four test manufacturing locations globally, producing, on average, five billion transistors per second. Keeping these fabrication facilities running smoothly is a top priority because malfunctions can be costly. Meeting chip manufacturing production targets is also vital because some expect the chip shortage to continue into 2023.

Keeping fabs running is no easy task. Each has many expensive components, and a single malfunction can mean upwards of $60,000 in lost revenue. Any information about a malfunction could help mitigate its impact. Suppose, for example, that a robot arm starts making a strange noise. It is much cheaper to stop the process and evaluate the problem than to wait for a malfunction that could damage the wafer. The field concerned with obtaining this kind of information is called predictive maintenance.

There was a time when predictive maintenance was conducted exclusively by listening for problems with the human ear. These days, machines are monitored with vibration sensors or contact acoustic-emission sensors attached to them. While these techniques have worked reasonably well, they are not perfect. For one, predictive maintenance sensors can be expensive: a simple setup for one machine can easily reach tens of thousands of dollars. They also generally work for only a single machine, so if a fab has a thousand machines, it can get costly.

The Audio Understanding team, part of Intel Labs’ Human and AI Systems Research (HAR) Lab, set out to explore whether we could solve this problem. We decided to use microphones and machine learning to monitor fab components because microphones are comparatively inexpensive and easy to set up. Of course, Intel is not the only one to have this idea. Last year, the annual IEEE Audio and Acoustic Signal Processing Society (AASP) Challenge on Detection and Classification of Acoustic Scenes and Events (DCASE2020) included a task called “Unsupervised Detection of Anomalous Sounds for Machine Condition Monitoring.”

The goal was to create a solution to automatically detect a mechanical failure and identify whether sounds emitted from an observed machine were normal or anomalous. We took on this challenge and placed seventh out of 40, and on a large subset of the data, our team ranked third. While that was a nice accomplishment, the real work began after the challenge. Our team saw this as an opportunity to kickstart our own audio anomaly detection project and learn from this initial challenge so that we could improve our ability to deliver even better results.

During this time, we cultivated relationships with external and internal partners to help their predictive maintenance efforts while improving our algorithms using real-world data. As the time approached for the next DCASE competition, we decided to participate again.

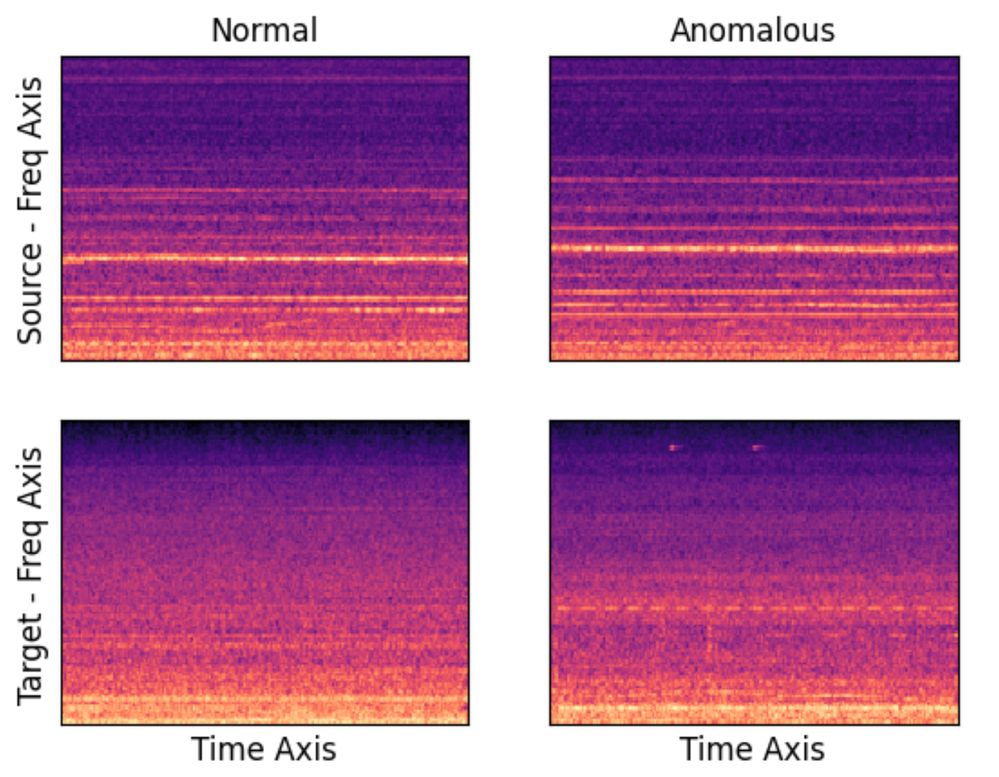

The DCASE2021 Challenge proved to be more complex. It involved data for an additional machine category and added a domain shift, which meant that the training data and testing data were recorded under different conditions. The organizers provided data from a source domain and just three 10-second clips from the shifted domain. Again, the team had to identify whether the sound produced by a machine was normal or anomalous, this time for two domains, with only a few files of the domain-shifted data and without access to anomalous samples.

Figure 1: Spectrograms Per Domain & Condition for Pump Machine Category
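To make the "normal data only" setting described above concrete, here is a minimal sketch of unsupervised anomaly scoring. It is not the team's model: it assumes each audio clip has already been reduced to a fixed-length feature vector (the synthetic arrays below are stand-ins for such features), fits a Gaussian to the normal training clips, and uses the Mahalanobis distance as a hypothetical anomaly score. The function names and the 99th-percentile threshold are illustrative choices.

```python
import numpy as np

def fit_normal_model(features):
    """Estimate mean and inverse covariance from normal-only feature vectors."""
    mean = features.mean(axis=0)
    cov = np.cov(features, rowvar=False)
    # Small ridge term keeps the covariance invertible.
    cov += 1e-6 * np.eye(features.shape[1])
    return mean, np.linalg.inv(cov)

def anomaly_score(features, mean, inv_cov):
    """Mahalanobis distance of each row from the normal-data distribution."""
    diff = features - mean
    return np.sqrt(np.einsum("ij,jk,ik->i", diff, inv_cov, diff))

# Synthetic stand-ins for per-clip features (assumption: 8 features per clip).
rng = np.random.default_rng(0)
normal_train = rng.normal(0.0, 1.0, size=(500, 8))
normal_test = rng.normal(0.0, 1.0, size=(100, 8))
anomalous_test = rng.normal(4.0, 1.0, size=(100, 8))

mean, inv_cov = fit_normal_model(normal_train)

# The threshold is set from normal data alone, since no anomalies are available.
threshold = np.percentile(anomaly_score(normal_train, mean, inv_cov), 99)

normal_flags = anomaly_score(normal_test, mean, inv_cov) > threshold
anomalous_flags = anomaly_score(anomalous_test, mean, inv_cov) > threshold
```

A domain shift would show up here as normal clips from the new recording conditions scoring high against the source-domain statistics, which is one way to see why the 2021 task was harder.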

When we tried last year’s model on the new data, it didn’t perform nearly as well. Despite the familiar machine categories, this was a much harder problem. We toiled over it for the next 15 weeks, reading domain adaptation literature, running experiments, and holding weekly meetings to review the results. Two weeks before the deadline, we tried a new architecture based on a recent machine learning technique called “normalizing flows,” with intriguing results. We experimented with this approach in the remaining time.

The team ended up submitting this model along with two improved variants of last year’s model and an ensembled system that blended the three. The ensembled system took the top spot in this year’s contest. It is described in the technical report submitted to the contest, “Ensemble of Complementary Anomaly Detectors Under Domain Shifted Conditions.” Despite this year’s result, there is much more to learn so we can create products and solutions that help keep fabs running smoothly and efficiently. We look forward to developing these concepts further and applying them to predictive maintenance applications and beyond.
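As a rough illustration of what blending several anomaly detectors can look like, the sketch below standardizes each detector's scores against its scores on normal reference clips and then takes a weighted average. This is an assumed, generic scheme, not the method from the technical report; `standardize`, `blend_scores`, and the toy numbers are hypothetical.

```python
import numpy as np

def standardize(scores, ref_scores):
    """Rescale scores using the mean and std of scores on normal reference data."""
    return (scores - ref_scores.mean()) / (ref_scores.std() + 1e-12)

def blend_scores(test_scores, ref_scores, weights=None):
    """Weighted average of per-detector scores after standardization."""
    z = np.stack([standardize(t, r) for t, r in zip(test_scores, ref_scores)])
    if weights is None:
        weights = np.full(len(z), 1.0 / len(z))  # equal weights by default
    return np.asarray(weights, dtype=float) @ z

# Two toy detectors whose raw scores live on very different scales.
ref = [np.array([0.0, 1.0, 2.0]), np.array([10.0, 20.0, 30.0])]
test = [np.array([1.0, 4.0]), np.array([20.0, 50.0])]

blended = blend_scores(test, ref)
```

Standardizing first keeps a detector with a large raw score range from dominating the average; here the second test clip receives the higher blended score from both detectors.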

For more information on the HAR Lab’s Audio Understanding team and these efforts, contact jose.a.lopez@intel.com.
