Javier Felip is a Research Scientist at Intel Labs where he focuses on probabilistic algorithms for robotics, perception and machine learning.

Highlights

- Researchers at Intel Labs introduced a method that improves the execution fluency in human-robot collaboration tasks.

- The method is based on the analysis-by-synthesis paradigm, where the robot creates online hypotheses about the potential human behavior and ranks them according to partial observations. Ranked hypotheses are formulated into a probability density function of the potential human actions and are used to determine what action the robot will perform next.

- Two core innovations allow the system to perform real-time computations thousands of times per observation, generating proactive robot behaviors that result in more fluid, safe, and intuitive human-robot interaction.

When performing collaborative tasks, humans continuously make predictions about their co-workers’ intents and adapt their actions to perform tasks as fluidly as possible. So far, robots have been unable to interpret non-verbal communication and lack such predictive skills. With better intent prediction capabilities, robots can anticipate human motion and act accordingly, making collaboration more intuitive, efficient, and fluid without safety trade-offs.

Researchers Javier Felip Leon, David Gonzalez-Aguirre, and Lama Nachman from Intel Labs will present the paper “Intuitive & Efficient Human-Robot Collaboration via Real-time Approximate Bayesian Inference” at IROS 2022 in Kyoto. See the preprint here.

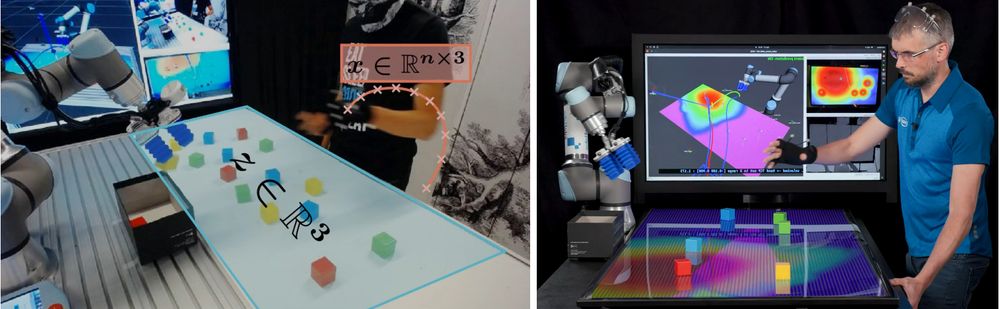

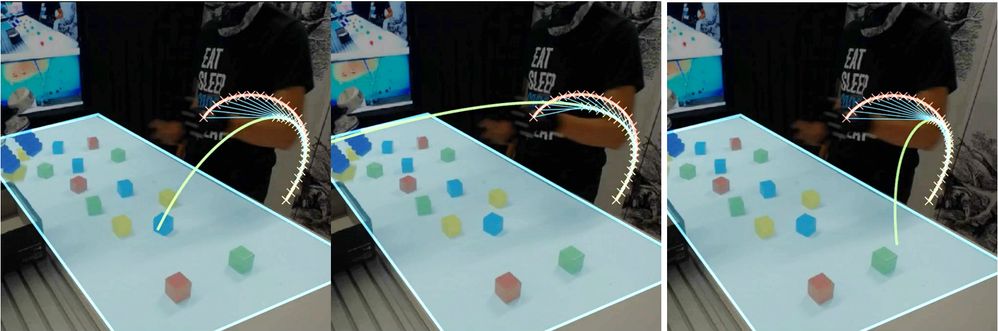

Analysis-by-Synthesis for Probabilistic Intent Prediction

To predict the human’s intent, the robot “asks” itself: What is the probability that the human is reaching toward this specific point on the table? To answer that question, the robot “imagines” what the motion of the human would look like if the human were indeed reaching toward that point. The robot determines the likelihood of this specific action by comparing the partially observed human motion captured by the camera system to the imagined trajectory. This process must be repeated for every possible point on the table. Normalizing the likelihoods across all the possible points yields the reaching probability density function.
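The loop described above can be sketched in a few lines of Python. This is a minimal illustration rather than the method from the paper: the straight-line `imagine_trajectory`, the Gaussian noise scale `sigma`, the 2D table coordinates, and the coarse grid of candidate targets are all assumptions made here for clarity. Because the candidates form a finite grid, the result is a discretized approximation of the probability density function.

```python
import numpy as np

def imagine_trajectory(start, target, n_steps):
    """Hypothetical generative model: a straight-line reach from start to target."""
    t = np.linspace(0.0, 1.0, n_steps)[:, None]
    return (1.0 - t) * start + t * target

def reach_probabilities(observed, start, targets, n_steps=20, sigma=0.05):
    """Score every candidate target against a partial observation, then normalize."""
    k = len(observed)  # number of frames observed so far
    log_lik = np.empty(len(targets))
    for i, target in enumerate(targets):
        # "Imagine" the reach and keep only the part we can compare so far.
        imagined = imagine_trajectory(start, target, n_steps)[:k]
        sq_err = np.sum((imagined - observed) ** 2)
        log_lik[i] = -0.5 * sq_err / sigma**2  # Gaussian log-likelihood (assumed)
    log_lik -= log_lik.max()  # shift for numerical stability before exponentiating
    weights = np.exp(log_lik)
    return weights / weights.sum()

# Candidate targets: a coarse 5x5 grid on a 1 m x 1 m table (illustrative values).
xs, ys = np.meshgrid(np.linspace(0, 1, 5), np.linspace(0, 1, 5))
targets = np.column_stack([xs.ravel(), ys.ravel()])
start = np.array([0.5, -0.2])  # assumed hand starting position

# Simulated partial observation: the first 8 of 20 frames of a reach toward (1, 1).
true_target = np.array([1.0, 1.0])
observed = imagine_trajectory(start, true_target, 20)[:8]

probs = reach_probabilities(observed, start, targets)
best = targets[np.argmax(probs)]  # most likely reaching target on the grid
```

Reshaping `probs` to the 5x5 grid gives a small heat map of intent likelihood over the table.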

Making Analysis-by-Synthesis Run in Real-time

Generating plausible trajectories that a human could execute requires the robot to compute collision-free paths that satisfy human kinematics and dynamics. This can be accomplished with physics simulation rollouts. However, generating each trajectory can take around 100ms, and the robot needs to evaluate tens of thousands of hypotheses per frame, which makes the approach infeasible for real-time applications. Our researchers combined the power of Intel CPU vectorization and neural networks to develop a neural emulator that learns to generate human-like trajectories from the simulator. This way, the robot can generate and evaluate thousands of hypotheses in less than 30ms, the time interval between two observations.
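The benefit of evaluating all hypotheses in one vectorized pass can be illustrated with NumPy. This sketch does not reproduce the neural emulator: `emulate_batch` is a hypothetical stand-in that returns straight-line trajectories, where a real system would call a trained network. The Gaussian error model, the 2D coordinates, and the batch size are likewise assumptions.

```python
import numpy as np

def emulate_batch(start, targets, n_steps):
    """Stand-in for a learned emulator: all trajectories from a single call.

    Returns an array of shape (n_hypotheses, n_steps, 2). A real emulator
    would be a trained neural network; straight lines are used here only
    to keep the example self-contained.
    """
    t = np.linspace(0.0, 1.0, n_steps)[None, :, None]
    return (1.0 - t) * start[None, None, :] + t * targets[:, None, :]

def batch_log_likelihood(observed, trajectories, sigma=0.05):
    """Compare every imagined trajectory to the partial observation at once."""
    k = observed.shape[0]
    diff = trajectories[:, :k, :] - observed[None, :, :]
    return -0.5 * np.sum(diff**2, axis=(1, 2)) / sigma**2

rng = np.random.default_rng(0)
targets = rng.uniform(0.0, 1.0, size=(9100, 2))  # illustrative batch of hypotheses
start = np.array([0.5, -0.2])                    # assumed hand starting position

# Simulated partial observation: first 8 of 20 frames of a reach toward (0.8, 0.6).
observed = emulate_batch(start, np.array([[0.8, 0.6]]), 20)[0, :8]

# One array expression scores all hypotheses; no Python-level loop is needed.
log_lik = batch_log_likelihood(observed, emulate_batch(start, targets, 20))
```

The design point is that the per-hypothesis loop disappears: trajectory generation and scoring become a handful of array operations that the CPU can vectorize.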

“In the concrete implementation for intent prediction workload discussed in this paper, the neural surrogate can sample and evaluate 9,100 samples in 0.01s instead of 1,365s using the original single-threaded, physics simulation generative model. More than a 100,000x improvement.”
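The figures in the quote can be checked with simple arithmetic. The short Python calculation below uses only the numbers quoted above and the 30ms interval between observations mentioned earlier; the variable names are illustrative.

```python
n_samples = 9_100        # samples per evaluation (from the quote)
t_simulator = 1_365.0    # seconds, single-threaded physics simulation (from the quote)
t_surrogate = 0.01       # seconds, neural surrogate (from the quote)
frame_interval = 0.030   # seconds between two observations (from the article)

speedup = t_simulator / t_surrogate               # overall speedup factor
per_sample_simulator = t_simulator / n_samples    # seconds per simulated sample
per_sample_surrogate = t_surrogate / n_samples    # seconds per surrogate sample
fits_in_frame = t_surrogate < frame_interval      # real-time budget check
```

By these numbers the speedup is roughly 136,500x, the simulator averages 0.15s per sample, the surrogate averages about 1.1 microseconds per sample, and the full batch fits inside one 30ms frame.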

Using Intent Prediction to Improve Task Fluency

The computed probability provides a spatial notion of where the human is reaching, namely a heat map depicting intent likelihood at each location. The robot takes advantage of those localized estimates and starts operating in a location on the workspace where the human is unlikely to operate. This way, the robot can proactively and effectively operate safely without interrupting the human by postponing its next action until the human arm is in retreat. The researchers evaluated performance using productivity metrics such as total task time and subjective metrics like task fluency. The experimental results show that incorporating intent prediction into collaborative tasks improves metrics across the board.
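One simple way to use such a heat map is to score each candidate robot work location by how much human intent probability lies nearby and pick the lowest. The sketch below is an assumption made for illustration, not the decision rule from the paper: the function `pick_robot_target`, the `radius` parameter, and the example coordinates and probabilities are all hypothetical.

```python
import numpy as np

def pick_robot_target(candidates, human_targets, human_probs, radius=0.25):
    """Choose the candidate location with the least nearby human intent mass.

    candidates:    (n_candidates, 2) locations where the robot could work next
    human_targets: (n_targets, 2) points at which intent probability is known
    human_probs:   (n_targets,) intent probabilities summing to 1
    radius:        assumed distance (meters) within which work would conflict
    """
    # Pairwise distances between robot candidates and human target points.
    d = np.linalg.norm(candidates[:, None, :] - human_targets[None, :, :], axis=2)
    # Risk = total human intent probability within `radius` of each candidate.
    risk = (d < radius).astype(float) @ human_probs
    return candidates[np.argmin(risk)], risk

# Illustrative intent estimate: the human is most likely reaching toward (0.2, 0.2).
human_targets = np.array([[0.2, 0.2], [0.5, 0.5], [0.8, 0.8]])
human_probs = np.array([0.7, 0.2, 0.1])
candidates = np.array([[0.25, 0.2], [0.5, 0.45], [0.8, 0.75]])

choice, risk = pick_robot_target(candidates, human_targets, human_probs)
```

In this example the robot selects the candidate near (0.8, 0.8), the region the human is least likely to reach.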

The Human-Robot Collaboration team at Intel Labs strives to develop robots that perform effective actions that are intuitive, predictable, and dependable. Intent prediction capabilities will be one of the pillars for the acceptance and productivity of robots as flexible co-workers on future factory floors.
