Author: Milosz Zeglarski

Introduction

In this blog, you will learn how to perform inference on JPEG images using the gRPC API in OpenVINO Model Server. Model servers play an important role in smoothly bringing models from development to production. They serve models via network endpoints and expose APIs for interacting with them. Once a model is served, a set of functions is required to call the API from an application.

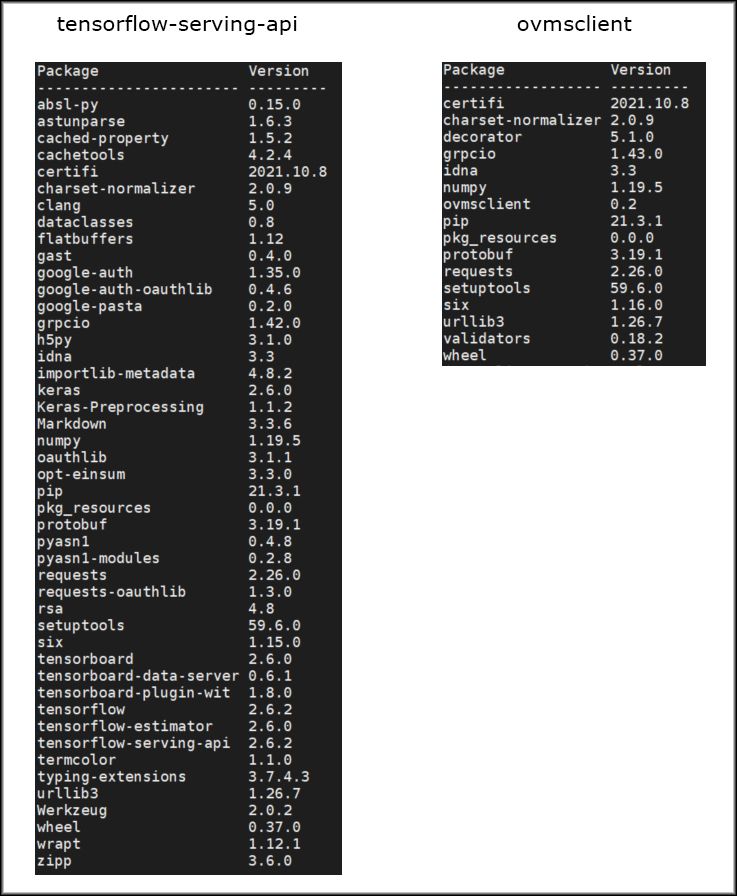

OpenVINO™ Model Server and TensorFlow Serving share the same frontend API, meaning we can use the same code to interact with both. For Python developers, the typical starting point is using the tensorflow-serving-api pip package. Unfortunately, since this package includes TensorFlow as a dependency, its footprint is quite large.

The road towards a lightweight client

Since tensorflow-serving-api and its dependencies are roughly 1.3 GB in size, we decided to create a lightweight client with only the functions necessary to perform API calls. In the latest version of OpenVINO Model Server, we introduced a preview version of the Python client library, ovmsclient. This new package, with all its dependencies, is less than 100 MB, making it about 13x smaller than tensorflow-serving-api.

In addition to having a larger binary size, importing tensorflow-serving-api in an application also consumes more memory. See the results below of running the top command for Python scripts that perform the same operations, one with tensorflow-serving-api and the other with ovmsclient.

| Package | VIRT [KB] | RES [KB] | SHR [KB] |
|---|---|---|---|
| tensorflow-serving-api | 4543760 | 293516 | 155708 |
| ovmsclient | 3500296 | 52008 | 23040 |

Memory usage comparison.

Note the difference in the RES [KB] column, which indicates the amount of physical memory used by the task.

Importing packages with a large footprint also increases initialization time, so faster startup is another benefit of the lightweight client. The new Python client package is also simpler to use than tensorflow-serving-api, as it provides utilities for end-to-end interaction with model servers. When using ovmsclient, an application developer doesn’t need to know the details of the server API. The package provides a set of convenient functions for every stage of interaction, from setting up the connection and making API calls to unpacking the results in a standard format. Previously, developers needed to know which service accepts which type of request and how to manually prepare requests and handle responses.
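The import cost described above can be checked on your own machine. The following is a minimal sketch, not the method used to produce the table: it imports a module in a fresh interpreter and reports the import time and the peak resident set size from the standard `resource` module (Unix only), which is related to but not the same as the RES column in top. The helper name `measure_import` is hypothetical, and `json` is only a stand-in module.

```python
import subprocess
import sys


def measure_import(module_name):
    """Import `module_name` in a fresh interpreter; return (seconds, peak_rss).

    peak_rss is ru_maxrss as reported by the OS: kilobytes on Linux,
    bytes on macOS. The `resource` module is available on Unix only.
    """
    child_code = (
        "import importlib, resource, time\n"
        "start = time.perf_counter()\n"
        f"importlib.import_module({module_name!r})\n"
        "elapsed = time.perf_counter() - start\n"
        "peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss\n"
        "print(elapsed, peak)\n"
    )
    result = subprocess.run(
        [sys.executable, "-c", child_code],
        check=True, capture_output=True, text=True,
    )
    # Use the last line in case the imported module prints during import.
    elapsed, peak = result.stdout.strip().splitlines()[-1].split()
    return float(elapsed), int(peak)


if __name__ == "__main__":
    # "json" is a stand-in; replace it with "ovmsclient" once it is installed.
    seconds, peak = measure_import("json")
    print(f"json: {seconds:.4f} s, peak RSS {peak}")
```

Running each import in its own subprocess keeps the measurements independent, since a module that is already loaded in the current interpreter would appear to import instantly.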

Let’s use ovmsclient

To run a prediction using a ResNet-50 image classification model, deploy OpenVINO Model Server with the model. You can do that with the command below:

docker run -d --rm -p 9000:9000 openvino/model_server:latest \
--model_name resnet --model_path gs://ovms-public-eu/resnet50-binary \
--layout NHWC:NCHW --port 9000

This command starts the server with a ResNet-50 model downloaded from a public bucket on Google Cloud Storage. With Model Server listening for gRPC calls on port 9000, you can start interacting with the server. Next, let’s install the ovmsclient package using pip:

pip3 install ovmsclient
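Because the container is started in detached mode, the port may not accept connections immediately. As a minimal sketch using only the standard library, the hypothetical helper `wait_for_port` below polls until a TCP connection succeeds. Note that an open port only shows that the server process is listening; it does not confirm that the model has finished loading.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # create_connection raises OSError if the port is closed or unreachable.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)


if __name__ == "__main__":
    # Host and port match the docker command above.
    print(wait_for_port("localhost", 9000, timeout=5.0))
```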

Before running the client, download an image to classify and the corresponding ImageNet labels file to interpret the prediction results:

wget https://raw.githubusercontent.com/openvinotoolkit/model_server/main/demos/common/static/images/zebra.jpeg

wget https://raw.githubusercontent.com/openvinotoolkit/model_server/main/demos/common/python/classes.py

Picture of a zebra used for prediction

Step 1: Create a gRPC connection to the server:

Now you can open a Python interpreter and create a gRPC connection to the Model Server.

$ python3

Python 3.6.9 (default, Jul 17 2020, 12:50:27)

[GCC 8.4.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from ovmsclient import make_grpc_client

>>> client = make_grpc_client("localhost:9000")

Step 2: Request the model metadata:

The client object has three methods: get_model_status, get_model_metadata, and predict. To create a valid inference request, you need to know the model input. For that, let’s request model metadata:

>>> client.get_model_metadata(model_name="resnet")

{'model_version': 1, 'inputs': {'0': {'shape': [1, 224, 224, 3], 'dtype': 'DT_FLOAT'}}, 'outputs': {'1463': {'shape': [1, 1000], 'dtype': 'DT_FLOAT'}}}
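The metadata is a plain dictionary, so the input name and shape can be read programmatically rather than by eye. The sketch below assumes the dictionary layout shown in the output above; the helper name `describe_single_input` is hypothetical and it deliberately rejects models with more than one input.

```python
def describe_single_input(metadata):
    """Return (name, shape, dtype) for a model that has exactly one input."""
    inputs = metadata["inputs"]
    if len(inputs) != 1:
        raise ValueError(f"expected exactly one input, found {len(inputs)}")
    name, spec = next(iter(inputs.items()))
    return name, tuple(spec["shape"]), spec["dtype"]


# Metadata copied from the get_model_metadata output shown above.
metadata = {
    "model_version": 1,
    "inputs": {"0": {"shape": [1, 224, 224, 3], "dtype": "DT_FLOAT"}},
    "outputs": {"1463": {"shape": [1, 1000], "dtype": "DT_FLOAT"}},
}

name, shape, dtype = describe_single_input(metadata)
print(name, shape, dtype)  # 0 (1, 224, 224, 3) DT_FLOAT
```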

Step 3: Send a JPEG image to the server:

From the model metadata, we learn that the model has one input and one output. The input name is “0” and it expects data with shape (1, 224, 224, 3) and a floating-point data type. Now you have all the information needed to run inference. Let’s use the image of a zebra that was downloaded in the previous step. In this example, we will use the Model Server binary inputs feature, which only requires loading a JPEG and requesting a prediction on the encoded bytes. No preprocessing is needed when using binary inputs.

>>> with open("zebra.jpeg", "rb") as f:

...     img = f.read()

...

>>> output = client.predict(inputs={"0": img}, model_name="resnet")

>>> type(output)

<class 'numpy.ndarray'>

>>> output.shape

(1, 1000)
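In a script, the same steps can be wrapped in a small function. This is a sketch rather than part of ovmsclient: the helper name `predict_jpeg` is hypothetical, and the check for the JPEG start-of-image marker is an added safeguard that is intentionally limited to JPEG files. The client is passed in as an argument, so the function only relies on the `predict(inputs=..., model_name=...)` call shown above.

```python
def predict_jpeg(client, image_path, model_name, input_name):
    """Send the raw bytes of a JPEG file to client.predict and return its result."""
    with open(image_path, "rb") as f:
        data = f.read()
    # JPEG streams begin with the start-of-image marker 0xFF 0xD8.
    if not data.startswith(b"\xff\xd8"):
        raise ValueError(f"{image_path} does not look like a JPEG file")
    # No decoding or resizing here: the encoded bytes are sent as they are.
    return client.predict(inputs={input_name: data}, model_name=model_name)
```

With the connection from Step 1, a call would look like `predict_jpeg(client, "zebra.jpeg", "resnet", "0")`.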

Step 4: Map the output to an ImageNet class:

The prediction returned a NumPy ndarray with shape (1, 1000), which matches the “outputs” section of the model metadata. The next step is to interpret the model output and extract the classification result.

The shape of the output is (1, 1000), where the first dimension is the batch size (the number of processed images) and the second holds the probability of the image belonging to each of the ImageNet classes. To get the classification result, find the index of the maximum value along the second dimension. Then use the imagenet_classes dictionary from classes.py, downloaded earlier, to map that index to a class name.

>>> import numpy as np

>>> from classes import imagenet_classes

>>> result_index = np.argmax(output[0])

>>> imagenet_classes[result_index]

'zebra'
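The argmax gives only the single best class. To see the runners-up as well, a top-k lookup is a small extension. The sketch below uses only the standard library and works on any indexable sequence of scores, so it should also accept `output[0]` from the session above; the helper name `top_k` and the toy labels are hypothetical.

```python
import heapq


def top_k(scores, labels, k=5):
    """Return the k highest-scoring (label, score) pairs, best first."""
    best = heapq.nlargest(k, range(len(scores)), key=lambda i: scores[i])
    return [(labels[i], float(scores[i])) for i in best]


# Toy data standing in for output[0] and imagenet_classes.
toy_scores = [0.1, 0.7, 0.2]
toy_labels = {0: "cat", 1: "zebra", 2: "horse"}
print(top_k(toy_scores, toy_labels, k=2))  # [('zebra', 0.7), ('horse', 0.2)]
```

In the interpreter session, the equivalent call would be `top_k(output[0], imagenet_classes)`.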

Conclusion

The new ovmsclient package is smaller, consumes less memory, and is easier to use than tensorflow-serving-api. In this blog, we learned how to get model metadata and run predictions on a binary-encoded JPEG image via the gRPC interface in OpenVINO Model Server.

See more detailed examples for running predictions with NumPy arrays, checking model status, and using the REST API on GitHub: https://github.com/openvinotoolkit/model_server/tree/main/client/python/ovmsclient/samples

To learn more about ovmsclient capabilities, see the API documentation:

https://github.com/openvinotoolkit/model_server/blob/main/client/python/ovmsclient/lib/docs/README.md

This is the first release of the client library. It will evolve over time, but is already capable of running predictions with OpenVINO Model Server and TensorFlow Serving with just a minimal Python package. Have questions or suggestions? Please open an issue on GitHub.

Notices and Disclaimers

Performance varies by use, configuration and other factors. Learn more at www.intel.com/PerformanceIndex.

Intel technologies may require enabled hardware, software or service activation.

Intel does not control or audit third-party data. You should consult other sources to evaluate accuracy.

Intel disclaims all express and implied warranties, including without limitation, the implied warranties of merchantability, fitness for a particular purpose, and non-infringement, as well as any warranty arising from course of performance, course of dealing, or usage in trade.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
