Subarna Tripathi is a research scientist at Intel Labs, working in computer vision and machine learning.

Highlights:

- Intel Labs proposes a novel video representation method based on sparse spatio-temporal graphs, which enables context aggregation over 10X longer temporal support.

- This method shows that graph-centric architectures can achieve state-of-the-art results at a fraction of the memory and compute cost of transformers.

- On a wide range of settings, the longer temporal support enabled by the graph-based representations consistently increases recognition accuracy.

- Spatio-temporal learning achieves state-of-the-art results on the Active Speaker Detection task, competitive results on Action Detection tasks, and encouraging progress on other applications including event boundary detection, action localization, and temporal action segmentation.

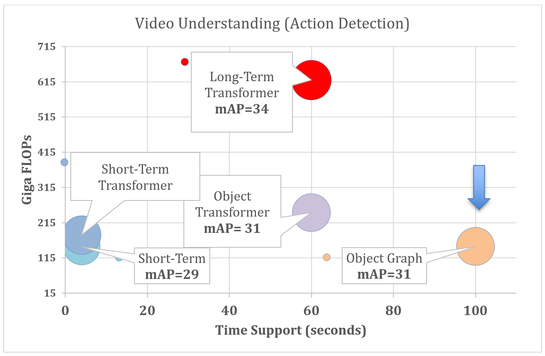

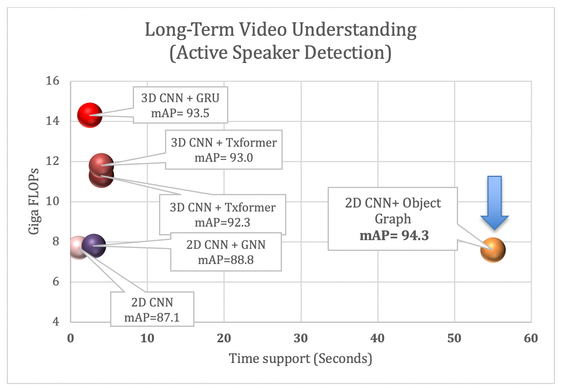

Current video understanding systems accurately recognize patterns in short video clips. However, they fail to capture how the present connects to the past or future in a world of never-ending visual stimuli. Existing video architectures tend to hit computation or memory bottlenecks after processing only a few seconds of video content. So, how do we enable accurate and efficient long-term visual understanding? An important first step is to have a model that practically runs on long videos. To that end, we propose a novel video representation method based on Spatio-Temporal Graphs Learning (SPELL) that equips the model with long-term reasoning capability. Figure 1 compares the time support of SPELL with that of other methods.

To put things into perspective, when analyzing content from a 5-minute video clip at 30 frames/second, we need to process a bundle of 9,000 video frames. None of the existing models utilizing 3D CNNs or transformers can operate on a sequence of 9,000 frames as a single processing unit. Assuming that, on average, there are 5 object instances per frame, the associated dense graph will have 45,000 such objects as nodes, and over 2 billion directed edges connecting ordered pairs of nodes (about 1 billion if each pair is counted once). With the advent of distributed large-graph training strategies, it is now possible to train on such graphs.
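As a quick sanity check of the numbers above, the following Python sketch recomputes the frame, node, and edge counts. The function name `dense_graph_size` and its parameters are ours, chosen for illustration only; the "over 2 billion" figure corresponds to counting every ordered pair of nodes.

```python
def dense_graph_size(minutes, fps, objects_per_frame):
    """Return (frames, nodes, directed_edges, undirected_edges) for a
    fully connected graph built from every object in every frame."""
    frames = minutes * 60 * fps
    nodes = frames * objects_per_frame
    # A dense graph links every ordered pair of distinct nodes.
    directed_edges = nodes * (nodes - 1)
    # Counting each unordered pair once halves that number.
    undirected_edges = directed_edges // 2
    return frames, nodes, directed_edges, undirected_edges


frames, nodes, directed, undirected = dense_graph_size(
    minutes=5, fps=30, objects_per_frame=5
)
print(f"{frames:,} frames, {nodes:,} nodes")
print(f"{directed:,} directed edges, {undirected:,} undirected edges")
```

The edge count grows quadratically with video length, which is why a sparse graph matters for long videos.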

Let G = (V, E) be a graph with node set V and edge set E. For domains such as social networks, citation networks, and molecular structures, V and E are available to the system, and we say the graph is given as an input to the learnable models. Now, let’s consider the simplest possible case in a video, where each video frame is considered a node, leading to the formation of V. However, it is not clear whether and how node t1 (the frame at time t1) and node t2 (the frame at time t2) are connected. Thus, the set of edges, E, is not provided. Without E, the topology of the graph is incomplete, so no “ground truth” graph is available. One of the most important challenges therefore remains how to convert a video to a graph. This graph can be considered a latent graph, since no labeled (or “ground truth”) graph is available in the dataset.

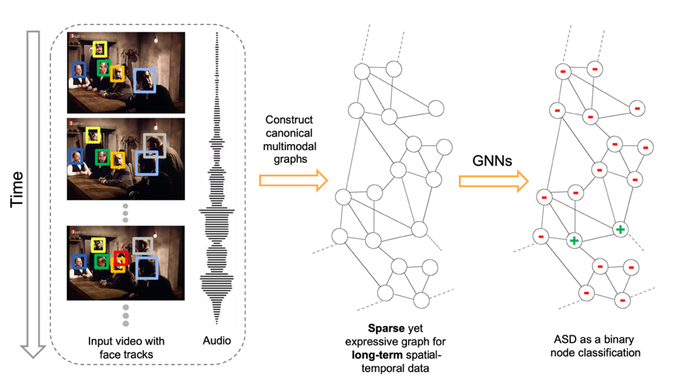

Figure 2 illustrates an overview of our framework when used on an Active Speaker Detection (ASD) task. Taking the audio-visual data, we construct a multimodal graph and cast ASD as a graph node classification task. First, we create a graph where the nodes correspond to each person within each frame, and the edges represent spatial or temporal relationships among them. Figure 2 shows a visualization of such a graph. The initial node features are constructed using simple and lightweight 2D convolutional neural networks (CNNs) instead of a complex 3D CNN or a transformer. Next, we perform binary node classification (active or inactive speaker) on this graph by training a three-layer graph neural network (GNN), where each layer has a small number of parameters. In our framework, graphs are constructed specifically for encoding the spatial and temporal dependencies among the different facial identities. Therefore, the GNN can leverage this graph structure and model the temporal continuity in speech as well as the long-term spatial-temporal context, while requiring low memory and computation.

Figure 2. For ASD, SPELL converts a video into a canonical graph from the audio-visual input data, where each node corresponds to a person in a frame, and an edge represents a spatial or temporal interaction between the nodes. The constructed graph is dense enough for modeling long-term dependencies through message passing across the temporally distant but relevant nodes, yet sparse enough to be processed within a low memory and computation budget. The ASD task is posed as a binary node classification in this long-range spatial-temporal graph.
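To make "node classification through message passing" concrete, here is a minimal pure-Python sketch of one generic aggregation round followed by a logistic read-out. It is not the SPELL architecture: the mean aggregator, the function names `mean_aggregate` and `classify`, and the toy features and weights are all assumptions made for illustration.

```python
import math


def mean_aggregate(features, edges):
    """One message-passing round: every node averages its own feature
    vector with the vectors of the nodes that point to it."""
    dim = len(features[0])
    sums = [list(f) for f in features]  # start from the node's own vector
    counts = [1] * len(features)
    for src, dst in edges:  # directed edge src -> dst
        for k in range(dim):
            sums[dst][k] += features[src][k]
        counts[dst] += 1
    return [[s / c for s in row] for row, c in zip(sums, counts)]


def classify(features, weights, bias):
    """Logistic read-out: a per-node probability of 'active speaker'."""
    probs = []
    for f in features:
        z = sum(w * x for w, x in zip(weights, f)) + bias
        probs.append(1.0 / (1.0 + math.exp(-z)))
    return probs


# Toy graph: three nodes with 2-D features and three directed edges.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
edges = [(0, 1), (1, 0), (1, 2)]

hidden = mean_aggregate(features, edges)
print(hidden)
print(classify(hidden, weights=[1.0, -1.0], bias=0.0))
```

Stacking several such rounds lets information travel several hops, which is how a node can be influenced by temporally distant nodes even though it is directly connected only to a few neighbors.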

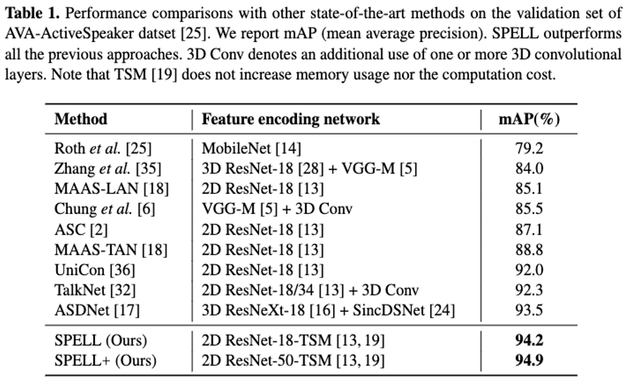

We demonstrate the efficacy of SPELL through extensive experiments on the AVA-ActiveSpeaker dataset. Our results show that SPELL outperforms all previous state-of-the-art (SOTA) approaches. Thanks to the ~95% sparsity of the constructed graphs, SPELL requires significantly fewer hardware resources for the visual feature encoding (11.2M #Params) compared to ASDNet (48.6M #Params), one of the leading state-of-the-art methods.

Long-term temporal context: The hyperparameter τ (= 0.9 second in our experiments) in SPELL imposes additional constraints on direct connectivity across temporally distant nodes. The face identities across consecutive timestamps are always connected. Below is an estimate of the effective temporal context size of SPELL. The AVA-ActiveSpeaker dataset contains 3.65 million frames and 5.3 million annotated faces, which gives an average of 1.45 faces per frame. At that rate, a graph with 500 to 2000 faces in sorted temporal order can span 345 to 1379 frames, corresponding to anywhere between 13 and 55 seconds for a 25 frame/second video. In other words, the nodes in the graph might have a time difference of up to about 1 minute, and SPELL is able to effectively reason over that long-term temporal window within a limited memory and compute budget. It is noteworthy that the temporal window size in MAAS is 1.9 seconds, and TalkNet uses up to 4 seconds as long-term sequence-level temporal context.
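The estimate above can be reproduced with a few lines of Python. The helper name `temporal_span` is ours, and the average is rounded to 1.45 faces per frame to match the figure quoted in the text.

```python
def temporal_span(num_faces, faces_per_frame, fps):
    """Return (frames, seconds) covered by a graph of `num_faces` nodes
    taken in sorted temporal order."""
    frames = num_faces / faces_per_frame
    return frames, frames / fps


# Dataset-level average: 5.3M annotated faces over 3.65M frames.
faces_per_frame = round(5.3e6 / 3.65e6, 2)  # 1.45

for num_faces in (500, 2000):
    frames, seconds = temporal_span(num_faces, faces_per_frame, fps=25)
    print(f"{num_faces} faces: about {frames:.0f} frames, {seconds:.1f} s")
```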

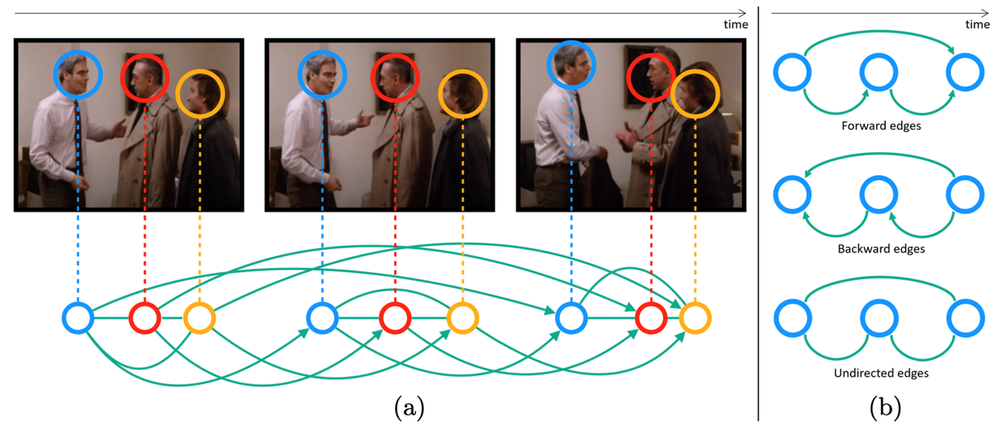

Figure 3. (a): An illustration of our graph construction process. The frames above are temporally ordered from left to right. The three colors of blue, red, and yellow denote three identities that are present in the frames. Each node in the graph corresponds to each face in the frames. SPELL connects all the inter-identity faces from the same frame with the undirected edges. SPELL also connects the same identities by the forward/backward/undirected edges across the frames (controlled by a hyperparameter, τ). In this example, the same identities are connected across the frames by the forward edges, which are directed and only go in the temporally forward direction. (b): The process for creating the backward and undirected graph is identical, except in the former case the edges for the same identities go in the opposite direction and the latter has no directed edge. Each node also contains the audio information which is not shown here.
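The construction in Figure 3(a) can be sketched in a few lines of Python. This is a simplified illustration, not the released SPELL code: the `Face` tuple, the function names, and the exact rule "same identity and time gap of at most τ" are our assumptions, and an undirected edge is stored as a pair of directed edges.

```python
from collections import namedtuple

# One detected face: integer frame index plus a track identity label.
Face = namedtuple("Face", ["frame", "identity"])


def build_forward_graph(faces, tau, fps):
    """Build the forward graph from faces sorted by frame index.

    - Different identities in the same frame: undirected edge.
    - Same identity, later frame, gap of at most `tau` seconds:
      directed edge pointing forward in time.
    """
    edges = set()
    for j, fj in enumerate(faces):
        for i in range(j):
            fi = faces[i]
            if fi.identity != fj.identity:
                if fi.frame == fj.frame:
                    edges.add((i, j))
                    edges.add((j, i))
            else:
                gap_seconds = (fj.frame - fi.frame) / fps
                if 0 < gap_seconds <= tau:
                    edges.add((i, j))
    return edges


def sparsity(num_nodes, edges):
    """Fraction of possible directed edges that are absent."""
    return 1.0 - len(edges) / (num_nodes * (num_nodes - 1))


# Toy input: identities A and B over a handful of frames.
faces = [
    Face(0, "A"), Face(0, "B"),
    Face(1, "A"),
    Face(2, "A"), Face(2, "B"),
    Face(5, "A"),
]
edges = build_forward_graph(faces, tau=0.25, fps=10)
print(sorted(edges))
print(f"sparsity: {sparsity(len(faces), edges):.2f}")
```

Reversing each same-identity edge would give the backward graph, and adding both directions would give the undirected one. The nested loop is quadratic in the number of faces and is kept only for readability.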

Leaderboards: SPELL achieved 2nd place in the AVA-ActiveSpeaker challenge at ActivityNet 2022. SPELL also achieved the top position in the very recently launched Ego4D audio-video diarization challenge at ECCV 2022.

The ASD problem setup in the AVA-ActiveSpeaker dataset has access to the labeled faces and labeled face tracks as input to the main algorithm. That largely simplifies the construction of the graph in terms of identifying the nodes and edges. For other problems, such as Action Detection, where the ground truth object locations and tracks are not provided, we use pre-processing to detect objects and object tracks, then utilize SPELL for the node classification problem. On average, we achieve ~90% sparse graphs, a key difference compared to visual transformer-based methods, which rely on dense General Matrix Multiply (GEMM) operations. Our sparse GNNs allow us to:

- Achieve slightly better performance than transformer-based models.

- Aggregate temporal context over 10x longer windows compared to transformer-based models (100s vs 10s).

- Achieve 2-5X compute savings compared to transformer-based methods.

Figure 4. SPELL for Action Detection. Our GNN-based solution reaches near-SOTA results using a 90% sparse GNN and can extract temporal features over >100 seconds, compared to <10 seconds for visual transformers. SPELL uses a SlowFast feature extractor and a lightweight GNN head for larger temporal context. MeMViT uses additional data from Kinetics700.

When a video is modeled as a spatio-temporal graph, many video understanding problems can be formulated as either node classification or graph classification problems. In addition to Active Speaker Detection and Action Detection, we utilize a SPELL-like framework for other tasks in video understanding such as Action Boundary Detection and Temporal Action Segmentation.

The work on spatio-temporal graphs for active speaker detection has been accepted for publication at ECCV 2022. The manuscript can be found here and the code for Active Speaker Detection can be found here.

In Intel’s AI Lab, we are extremely interested in video representation learning. This work is just one thread within a broader research effort around video understanding, which includes forecasting in egocentric videos (Figure 5, CVPR 2022), dynamic video scene graph generation (Figure 6, WACV 2023), and ongoing work on temporal learning of video-text sparse transformers. Our video-text sparse transformers are equipped with improved temporal reasoning capability without hitting the memory and compute bottlenecks.

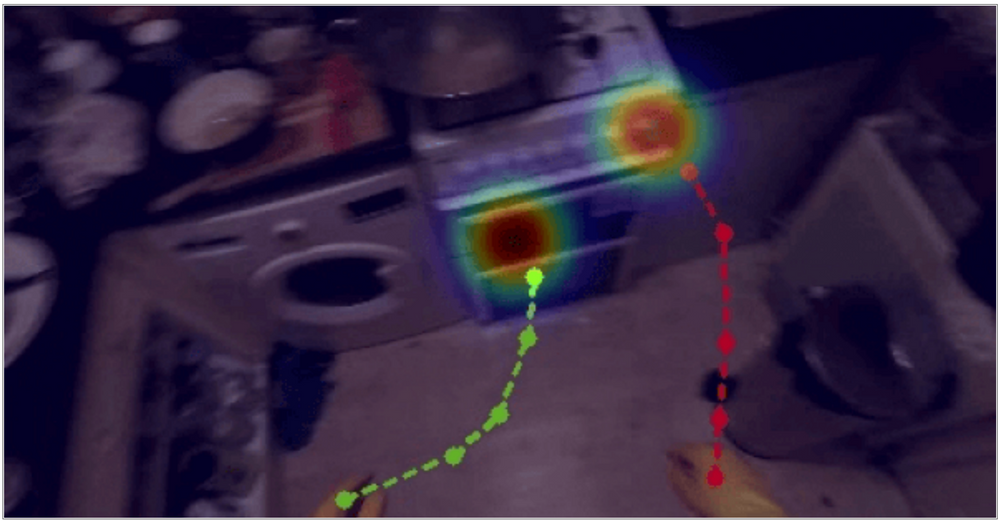

Video 1. Play the video to see a demonstration of the forecasting problem we tackle. Given observation frames of the past, we predict future hand trajectories (green and red lines) and object interaction hotspots (heatmaps).

Figure 5. Given observation frames of the past, we predict future hand trajectories (green and red lines) and object interaction hotspots (heatmaps). Details of this CVPR 2022 paper can be found at https://stevenlsw.github.io/hoi-forecast/

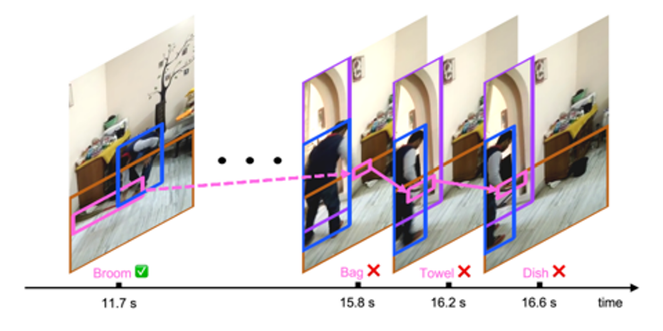

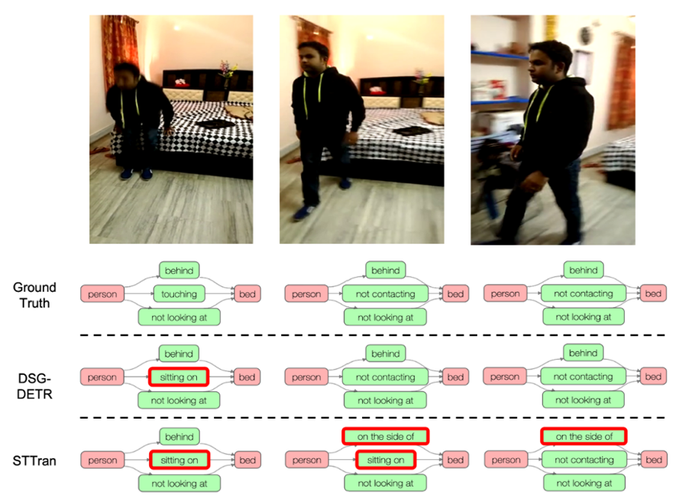

Figure 6: Dynamic scene graph generation exploiting long-term dependencies. Top: an example where modeling the short-term temporal dependency fails. The broom, bounded by the pink bounding boxes, is quite challenging to recognize on the rightmost three frames. Previous methods capturing only the short-term dependencies (indicated by the solid pink arrows) fail to make the correct prediction, while our method, DSG-DETR, capturing the long-term dependencies (over more than 4 seconds), can recognize the broom and predict the human-broom relationship. Bottom: Scene graphs generated by DSG-DETR and STTran (current SOTA) for three key frames sampled from an action which embeds long-term dependencies.
