Using Red Hat Advanced Cluster Management (ACM) for Kubernetes

Hybrid and multicloud infrastructures are becoming a major part of data center deployments, whether for databases, AI, machine learning, or telecommunications workloads. Today’s cloud-native infrastructures increasingly combine the two in a hybrid multicloud approach, with some workloads running in private clouds, some in public clouds, and others on-premises. Distributing workloads this way is especially important for edge applications that must run on-premises and for customers who want to control where their applications and sensitive data are stored.

In response to customer interest in multicloud, we want to show how Intel hardware combined with Red Hat OpenShift forms the backbone of an effective hybrid multicloud architecture, and how customers benefit from its flexibility. To this end, Red Hat has made substantial progress in enabling OpenShift service offerings across multiple cloud providers as well as on-premises, on bare-metal or virtual infrastructure platforms such as VMware and OpenStack.

Red Hat’s hybrid multicloud architecture is powered by Intel technologies that are highly optimized for third-party workloads. For edge and private cloud solutions, the architecture can be tailored to specific compute, network, memory, and accelerator resource needs. For public cloud deployments at scale, the Intel Xeon Scalable platform offers compelling price-performance on industry-standard infrastructure and is available in multiple configurations from different cloud service providers, giving you choice and flexibility as you scale.

Red Hat and Intel have a long history of collaboration, and together we drive open source innovation to accelerate digital transformation across the industry. With Intel providing the infrastructure hardware and Red Hat the infrastructure software, we enable solutions that transition workloads from VMs to containers, build hybrid or multicloud infrastructures, and take advantage of microservices and Kubernetes. By deploying Red Hat OpenShift on the Intel Xeon Scalable platform across all cloud instances, we can offer a true hybrid multicloud solution, one that provides flexibility and application portability across many different footprints.

Key to this hybrid multicloud technology is Red Hat Advanced Cluster Management (ACM) for Kubernetes. ACM manages a complete Kubernetes infrastructure from a single console and can be deployed across different footprints. It extends the value of Red Hat OpenShift by deploying applications, managing multiple clusters, and enforcing policies across those clusters at scale.

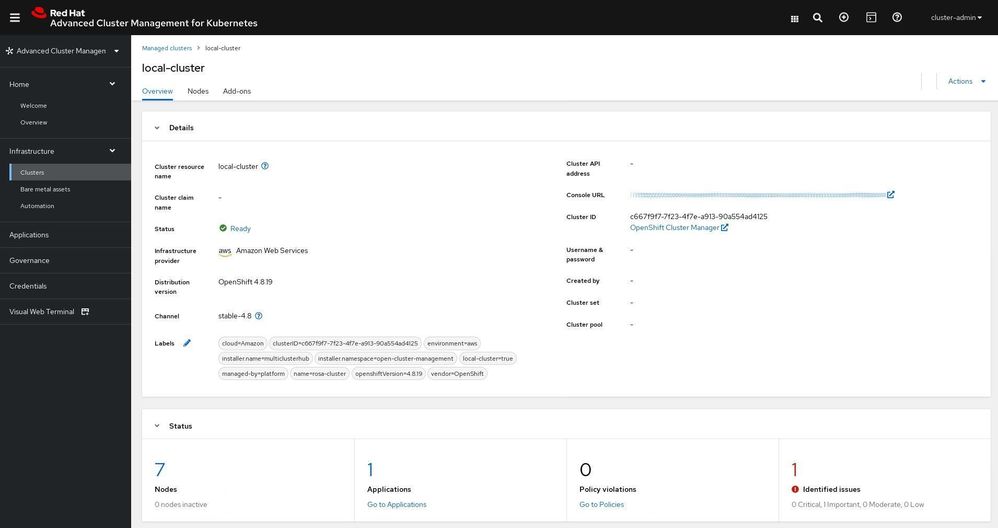

For this exercise, we installed ACM on a Red Hat OpenShift Service on AWS (ROSA) cluster (in our demo, the AWS region was eu-central-1, Frankfurt, Germany). ACM is deployed in a hub-and-spoke model, with the ROSA cluster acting as the hub that manages the spoke OpenShift clusters located elsewhere.

The ROSA Cluster

First, install the ROSA cluster by following the ROSA getting-started documentation:

https://docs.openshift.com/rosa/rosa_getting_started/rosa-getting-started-workflow.html
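The guide above drives installation through the `rosa` CLI. As a minimal sketch, assuming you have already completed the account prerequisites it describes (logging in with `rosa login` and preparing your AWS account), the hypothetical Python helper below assembles a `rosa create cluster` command that sets only a cluster name and a region and leaves everything else to the CLI's defaults. The cluster name `acm-hub` is a placeholder.

```python
import shlex
from typing import List


def rosa_create_cluster_cmd(cluster_name: str, region: str) -> List[str]:
    """Build the argv for `rosa create cluster` (hypothetical helper).

    Only --cluster-name and --region are set; instance types, node counts,
    and the OpenShift version are left to the rosa CLI defaults/prompts.
    """
    return ["rosa", "create", "cluster",
            "--cluster-name", cluster_name,
            "--region", region]


# "acm-hub" is a placeholder name; eu-central-1 is the region used in this demo.
cmd = rosa_create_cluster_cmd("acm-hub", "eu-central-1")
print(shlex.join(cmd))  # rosa create cluster --cluster-name acm-hub --region eu-central-1
```

Keeping the command as an argument list rather than one string means it can be handed directly to `subprocess.run` without shell-quoting issues.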

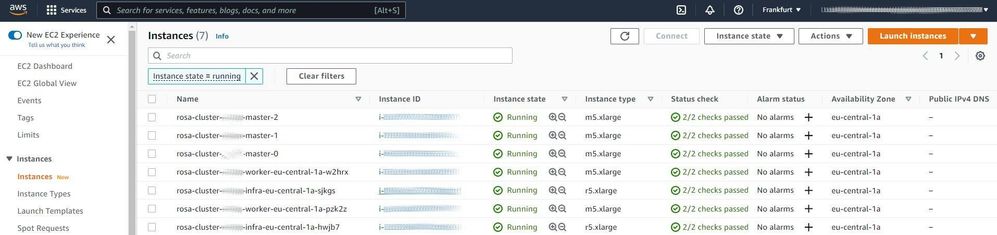

After the ROSA cluster is installed, the AWS console shows that seven EC2 instances were created as part of the cluster:

- 3 masters: m5.xlarge

- 2 infra nodes: r5.xlarge

- 2 worker nodes: m5.xlarge

The following screenshot shows the instances created on the AWS console.

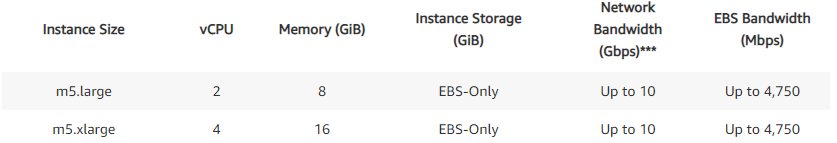

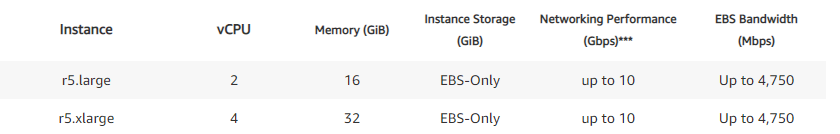

These screens show the details of the instance types used for the master, infra, and worker nodes (see https://aws.amazon.com/ec2/instance-types/).

These instances are based on 1st and 2nd Generation Intel Xeon Scalable processors, and other Intel-based instance types can be selected depending on workload needs.

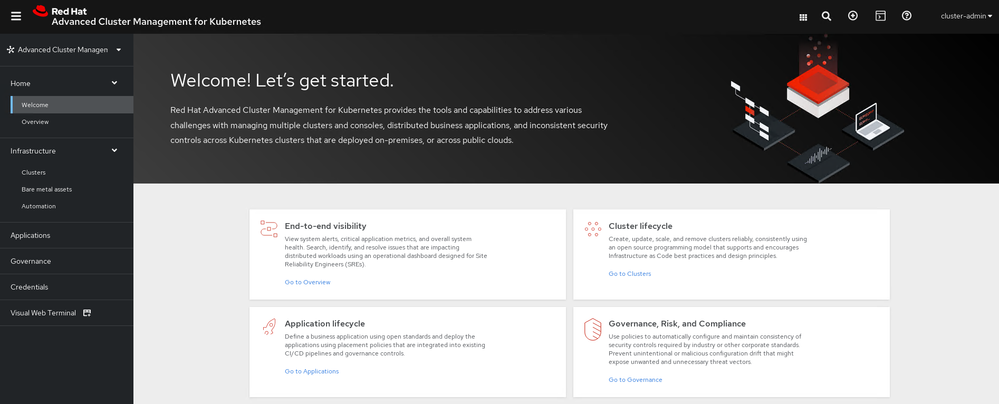

The ROSA cluster runs OpenShift 4.8.19, and the ACM version is 2.3.3.

Once you log in to the ACM console, you will see the ROSA cluster listed as local-cluster.

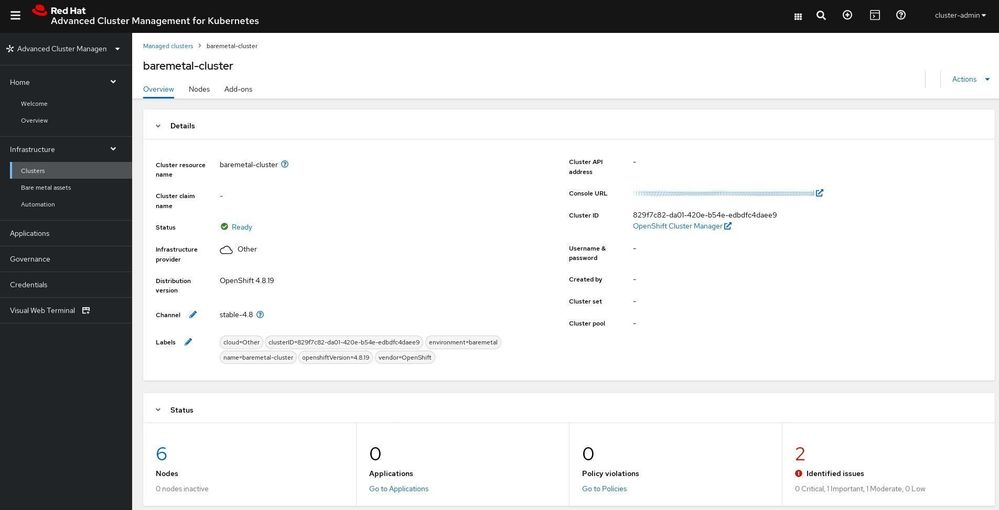

The Bare-Metal Cluster

The first spoke cluster for this exercise is a bare-metal OpenShift cluster deployed in an Intel data center in Russia. The cluster was installed following the OpenShift documentation.

The bare-metal cluster is running OpenShift version 4.8.19, and the hardware configuration used for the nodes is based on 3rd Generation Intel® Xeon® Scalable processors.

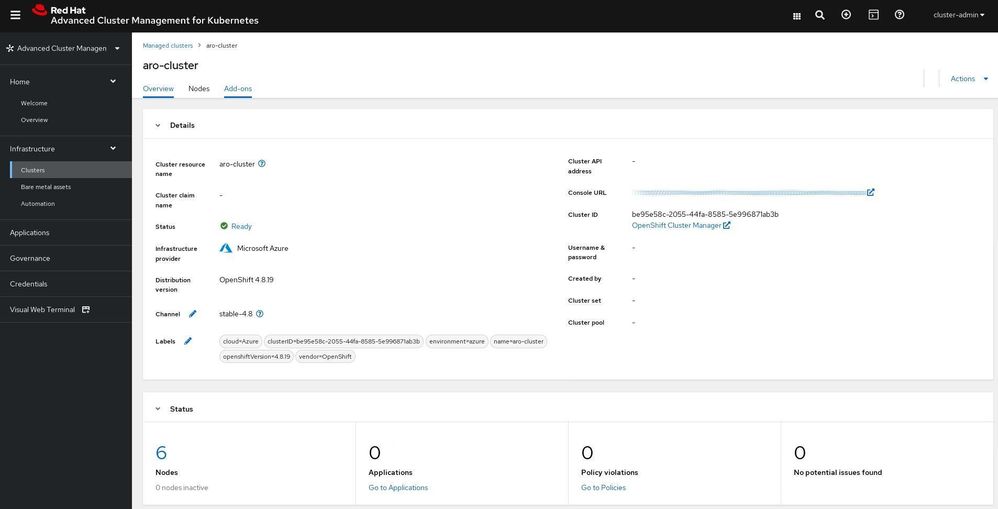

The Azure Cluster

The second spoke cluster is an Azure Red Hat OpenShift (ARO) cluster on Microsoft Azure (in our demo, the cluster ran in the West Europe region). The cluster was installed following the OpenShift documentation. Azure account configuration details can be found here: https://docs.openshift.com/container-platform/4.8/installing/installing_azure/installing-azure-account.html. ARO cluster installation instructions can be found here: https://docs.microsoft.com/en-us/azure/openshift/tutorial-create-cluster.
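The linked Microsoft tutorial creates the cluster with the Azure CLI's `az aro create` command, after first creating a resource group, a virtual network, and separate master and worker subnets. As a minimal sketch, assuming those resources already exist, the hypothetical Python helper below assembles that command line. All resource names in the example are placeholders, and optional settings such as a pull secret are omitted.

```python
import shlex
from typing import List


def az_aro_create_cmd(resource_group: str, name: str, vnet: str,
                      master_subnet: str, worker_subnet: str) -> List[str]:
    """Build the argv for `az aro create` (hypothetical helper).

    Assumes the resource group, VNet, and both subnets were created beforehand.
    """
    return ["az", "aro", "create",
            "--resource-group", resource_group,
            "--name", name,
            "--vnet", vnet,
            "--master-subnet", master_subnet,
            "--worker-subnet", worker_subnet]


# Placeholder names for illustration only.
cmd = az_aro_create_cmd("aro-rg", "aro-cluster", "aro-vnet", "master-subnet", "worker-subnet")
print(shlex.join(cmd))
```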

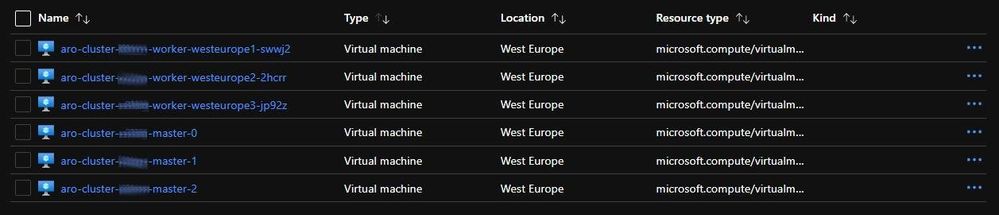

After the ARO cluster is installed, the Azure console shows that six VM instances were created as part of the cluster:

- 3 masters: Standard D8s v3

- 3 worker nodes: Standard D4s v3

Dsv3-series sizes run on Intel® Xeon® Scalable (1st and 2nd generation) or Intel Xeon E5 v3/v4 processors (https://azure.microsoft.com/en-us/pricing/details/virtual-machines/series). As on AWS, other Intel-based instance types can be selected depending on workload needs.
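To compare the two cloud clusters at a glance, the sketch below tallies node count, vCPUs, and memory from the instance lists above, using the published sizes of each instance type (m5.xlarge: 4 vCPU/16 GiB, r5.xlarge: 4 vCPU/32 GiB, Standard_D8s_v3: 8 vCPU/32 GiB, Standard_D4s_v3: 4 vCPU/16 GiB). The totals are raw instance capacity including control plane and infra nodes, not the allocatable capacity available to workloads.

```python
from typing import Dict, Tuple

# Published instance sizes: type -> (vCPU, memory in GiB).
INSTANCE_SIZES: Dict[str, Tuple[int, int]] = {
    "m5.xlarge": (4, 16),
    "r5.xlarge": (4, 32),
    "Standard_D8s_v3": (8, 32),
    "Standard_D4s_v3": (4, 16),
}

# role -> (node count, instance type), taken from the ROSA and ARO lists above.
ROSA_NODES = {"master": (3, "m5.xlarge"), "infra": (2, "r5.xlarge"), "worker": (2, "m5.xlarge")}
ARO_NODES = {"master": (3, "Standard_D8s_v3"), "worker": (3, "Standard_D4s_v3")}


def cluster_totals(nodes: Dict[str, Tuple[int, str]]) -> Tuple[int, int, int]:
    """Return (node count, total vCPU, total memory GiB) for a cluster layout."""
    count = vcpu = mem = 0
    for n, itype in nodes.values():
        cpus, gib = INSTANCE_SIZES[itype]
        count += n
        vcpu += n * cpus
        mem += n * gib
    return count, vcpu, mem


print("ROSA:", cluster_totals(ROSA_NODES))  # ROSA: (7, 28, 144)
print("ARO:", cluster_totals(ARO_NODES))    # ARO: (6, 36, 144)
```

Both clusters end up with the same raw memory, but ARO puts more of its vCPUs on the control plane (24 of 36), while ROSA dedicates two extra memory-optimized nodes to infrastructure services.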

The following screenshot shows the instances created by ARO on the Azure console.

Managing the Clusters with ACM

ACM can manage the full lifecycle of OpenShift clusters: it can deploy new clusters, import existing clusters, and delete clusters.

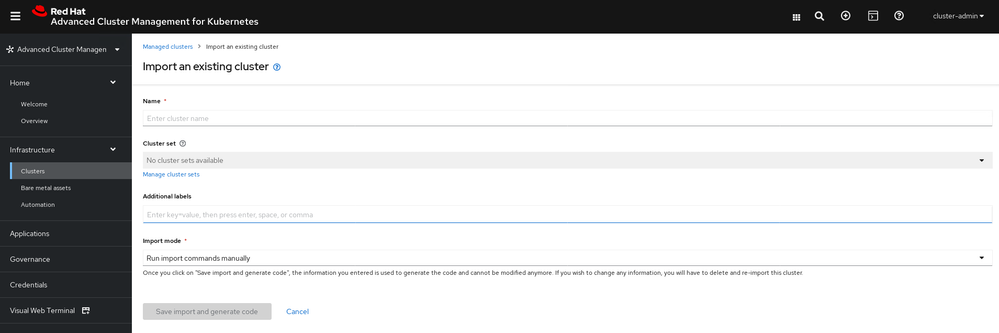

For this demo, we imported a previously deployed bare-metal OpenShift cluster and an ARO cluster. To do this, click the “Import cluster” button.

This takes us to the Importing an existing cluster page.

On this page, copy the generated import command and run it against the cluster being imported into ACM (for example, from a terminal logged in to that cluster with oc).

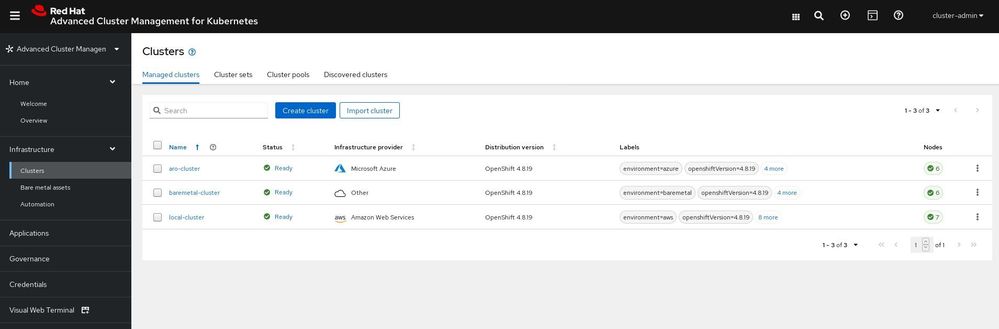
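The console's import command is the simplest route. For scripted imports, the ACM 2.x documentation also describes a CLI flow in which a `ManagedCluster` resource is created on the hub first; the hub then generates import manifests that are applied on the spoke (the full flow also involves a `KlusterletAddonConfig`, which this sketch does not cover). The sketch below only builds the hub-side `ManagedCluster` as JSON, which `oc apply -f -` accepts. The cluster name `aro-cluster` is hypothetical, and setting the `environment` label at import time is our assumption about how the demo's placement label was assigned.

```python
import json
from typing import Dict


def managed_cluster_manifest(name: str, labels: Dict[str, str]) -> dict:
    """Build a hub-side ManagedCluster resource (cluster.open-cluster-management.io/v1)."""
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1",
        "kind": "ManagedCluster",
        "metadata": {"name": name, "labels": dict(labels)},
        # hubAcceptsClient: true lets the hub accept the registration
        # request from this cluster's klusterlet agent.
        "spec": {"hubAcceptsClient": True},
    }


# Hypothetical cluster name; "environment" is the label the demo's placement keys on.
mc = managed_cluster_manifest("aro-cluster", {"environment": "azure"})
print(json.dumps(mc, indent=2))
```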

After the two spoke clusters (bare-metal and ARO) are imported, the cluster management console looks like this:

The Workload

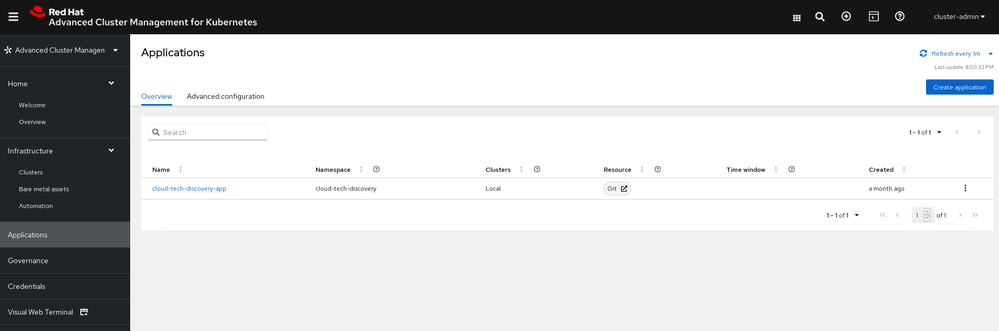

As part of this exercise, we want to demonstrate migrating an application between the on-premises cluster and the cloud OpenShift clusters managed by ACM. For testing, we use an application that discovers infrastructure platforms and environment technologies that can accelerate data processing for different use cases. It can serve as a starting point for understanding the technologies currently available across different clouds.

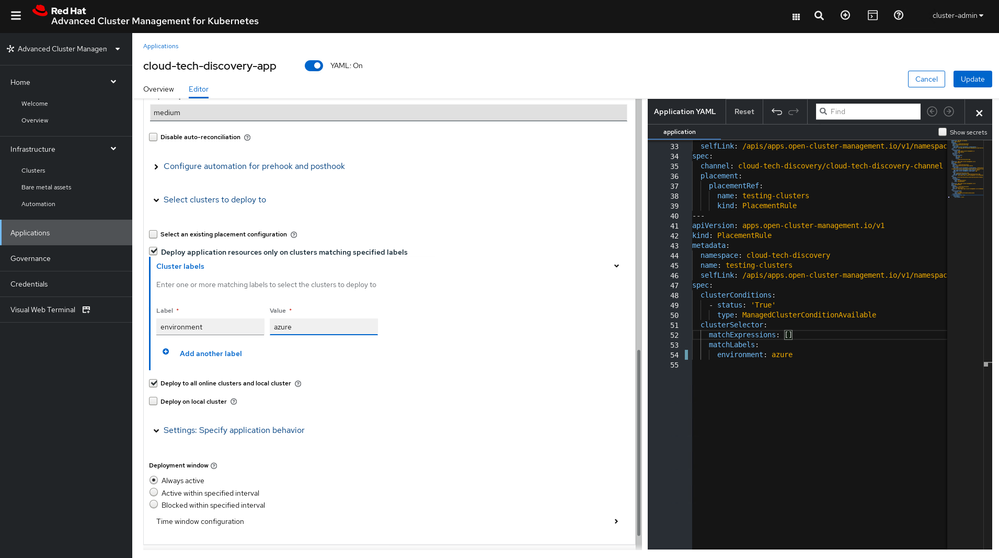

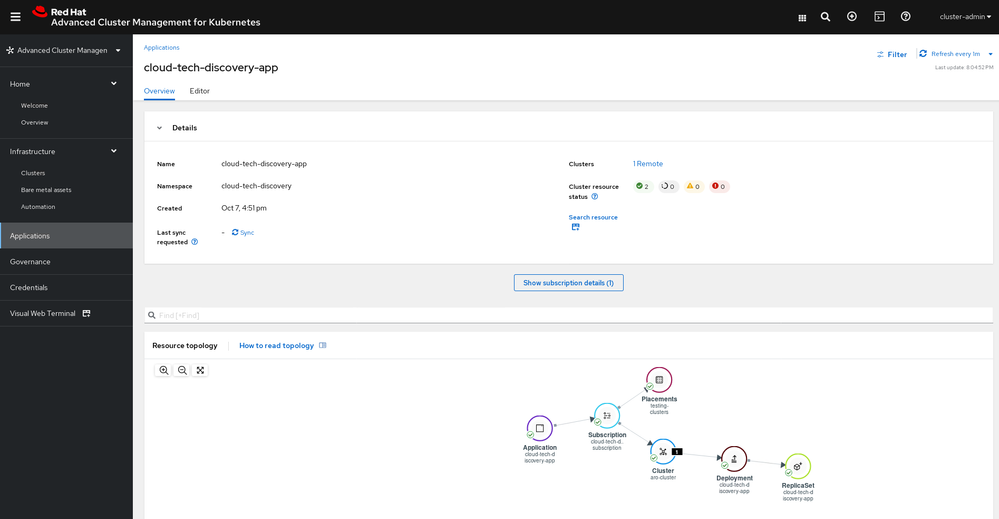

The application consists of a single deployment that runs one test pod in the target cluster. Once created, the application appears in the Applications section of ACM. Its placement rule is based on a label called “environment”, whose value can be “aws” for the ROSA cluster, “azure” for the ARO cluster, or “baremetal” for the on-premises bare-metal cluster.
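To make the placement logic concrete, the sketch below models how a `matchLabels` selector on the `environment` label picks a target cluster: a cluster qualifies only if it carries every listed key with the same value. Only `local-cluster` comes from the ACM console; the other cluster names are hypothetical, and this is a plain-Python model of the selection rule, not ACM's own placement code.

```python
from typing import Dict, List

# Cluster -> labels. Only "local-cluster" (the ROSA hub) is a real name from the console;
# the other two names are hypothetical.
CLUSTERS: Dict[str, Dict[str, str]] = {
    "local-cluster": {"environment": "aws"},
    "aro-cluster": {"environment": "azure"},
    "baremetal-cluster": {"environment": "baremetal"},
}


def select_clusters(clusters: Dict[str, Dict[str, str]],
                    match_labels: Dict[str, str]) -> List[str]:
    """Return (sorted) clusters whose labels include every key/value in match_labels.

    Like Kubernetes matchLabels, an empty selector matches every cluster.
    """
    return sorted(
        name for name, labels in clusters.items()
        if all(labels.get(k) == v for k, v in match_labels.items())
    )


print(select_clusters(CLUSTERS, {"environment": "aws"}))    # ['local-cluster']
print(select_clusters(CLUSTERS, {"environment": "azure"}))  # ['aro-cluster']
```

Because each cluster carries a distinct `environment` value, changing the one selector value is enough to move the application to exactly one different cluster.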

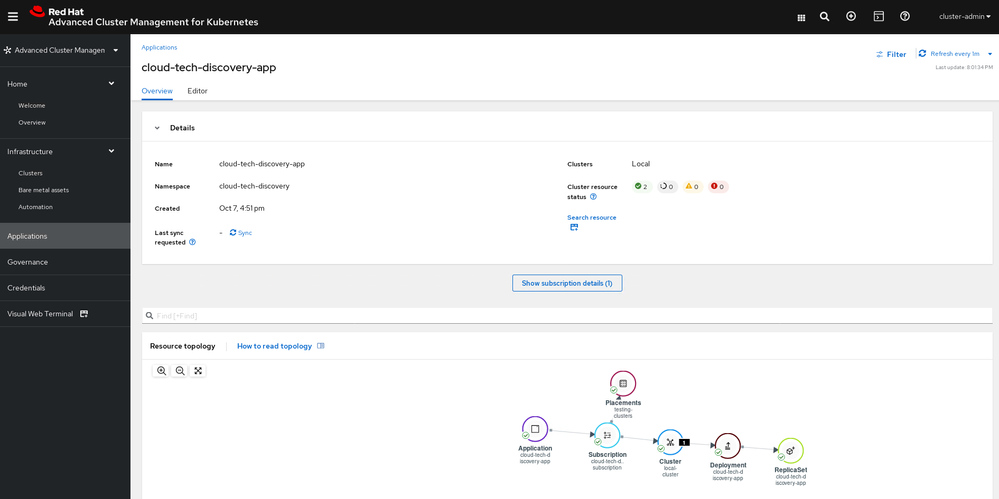

This screenshot shows an application running on the ROSA cluster.

Because ACM is installed on the ROSA cluster, the UI shows that cluster as local-cluster.

As an example, let us move the application from ROSA to ARO. To do this, we use the editor to change the value of the environment label from “aws” to “azure”.

When we save the application, its pod is terminated on the ROSA cluster and scheduled on the ARO cluster.

Moving the application to the on-premises cluster works the same way, except that the value is set to “baremetal”.
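The demo makes this change in the console editor. Assuming the application's placement is expressed as an ACM `PlacementRule` whose `clusterSelector` matches the `environment` label, the same move could be scripted with a JSON merge patch. In the sketch below, the names `demo-placement` and `demo-app` are hypothetical, and `clusterReplicas: 1` is our assumption, chosen to match the single test pod.

```python
import json
import shlex
from typing import List


def placement_rule(name: str, namespace: str, environment: str) -> dict:
    """PlacementRule (apps.open-cluster-management.io/v1) selecting clusters by the environment label."""
    return {
        "apiVersion": "apps.open-cluster-management.io/v1",
        "kind": "PlacementRule",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "clusterReplicas": 1,  # assumption: place on at most one cluster
            "clusterSelector": {"matchLabels": {"environment": environment}},
        },
    }


def move_patch_cmd(name: str, namespace: str, environment: str) -> List[str]:
    """Build an `oc patch` command that repoints the rule via a JSON merge patch."""
    patch = {"spec": {"clusterSelector": {"matchLabels": {"environment": environment}}}}
    return ["oc", "patch", "placementrules.apps.open-cluster-management.io", name,
            "-n", namespace, "--type", "merge", "-p", json.dumps(patch)]


# Initial rule targets ROSA; the patch moves the app to ARO (names are hypothetical).
print(json.dumps(placement_rule("demo-placement", "demo-app", "aws"), indent=2))
print(shlex.join(move_patch_cmd("demo-placement", "demo-app", "azure")))
```

A merge patch only replaces the `environment` value, so any other fields in the rule stay as they were; moving to the bare-metal cluster is the same call with `"baremetal"`.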

Conclusion

The ability to move workloads easily between on-premises infrastructure and multiple cloud providers offers:

Flexibility

- Application portability

- Capability and agility to optimize deployments across any footprint

Efficiencies

- Payment flexibility

- Automation of application deployment

- A best-of-both-worlds model that combines the scale of the cloud with the capabilities of on-premises infrastructure

Strategic value

- Write an application once and deploy it anywhere

- Low barrier to entry for new technology adoption

In this article, we discussed how to migrate an application between an on-premises bare-metal OpenShift cluster and managed OpenShift clusters on ROSA and ARO. In the next blog post, we will walk you through migrating data between the OpenShift clusters and managing data storage.

For a video demo of the material we have discussed in this blog, click here.
