Author: bing.zhu@intel.com

Deep learning models are built from large amounts of training data and computing power, which gives them very high commercial value. Trained models can be deployed on virtual nodes that cloud service providers (CSPs) offer in the public cloud.

How can these models be protected from theft once they are deployed on a public cloud, and how can they remain usable while staying invisible to adversaries? Both model owners and cloud service providers face these concerns. The solution described here addresses them by protecting model confidentiality with Intel® Software Guard Extensions (Intel® SGX), a hardware-based security technology.

Intel® SGX is an exclusive security feature of Intel CPUs. It is a set of instructions that increase the security of application code and data, offering them more protection from disclosure or modification. Developers can partition sensitive information into a hardware-based trusted execution environment (TEE) or enclave — an area of memory with a higher level of security protection. The technology helps ensure the root of trust is limited to a small portion of the central processing unit’s hardware, better protecting the confidentiality and integrity of code and data.

Framework

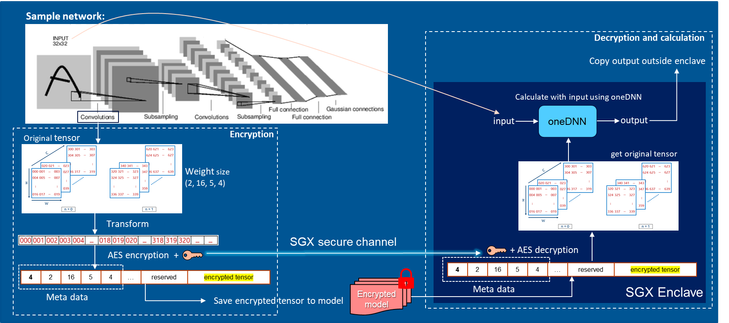

This solution protects model parameters during inference. At the deployment stage, the model owner encrypts the parameters and they are stored as ciphertext; inference is then performed inside an Intel® SGX enclave. The parameters are decrypted only inside the Intel® SGX enclave deployed on the CSP’s node, and the decryption key is transmitted through a secure channel established by Intel® SGX remote attestation.

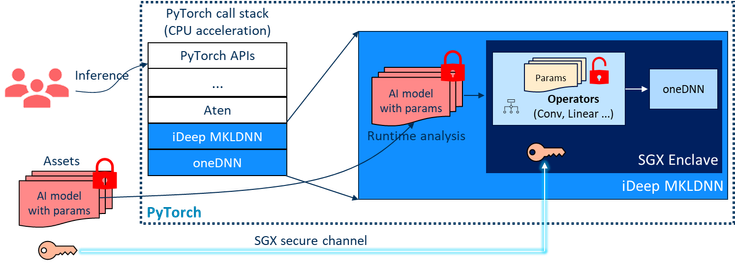

Figure 1: Architecture Overview

Specifically, the parameters of the trained model are encrypted on the user’s side, and the encrypted model is then transmitted to and deployed on the CSP. When the model is used for inference, it obtains the key through the Intel® SGX secure channel and decrypts the parameters inside the Intel® SGX enclave. Only the output of an operator is visible outside the enclave; the parameters themselves remain protected by Intel® SGX.
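The encrypt-then-decrypt flow described above can be sketched in a few lines of Python. This is a minimal illustration, not the solution's actual implementation: the function names `encrypt_params` and `decrypt_params` are hypothetical, the parameters are treated as an opaque byte string, and the cipher is a standard-library stand-in (an HMAC-SHA256 keystream plus an integrity tag) chosen only so the example runs without extra packages. A real deployment would use a vetted authenticated cipher such as AES-GCM, and the decryption step would run only inside the enclave.

```python
import hashlib
import hmac
import json
import secrets

NONCE_LEN = 16
TAG_LEN = 32  # SHA-256 digest size


def _subkeys(model_key: bytes) -> tuple:
    """Derive separate encryption and MAC keys from the model key."""
    enc_key = hmac.new(model_key, b"enc", hashlib.sha256).digest()
    mac_key = hmac.new(model_key, b"mac", hashlib.sha256).digest()
    return enc_key, mac_key


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Stand-in keystream: HMAC-SHA256 over nonce || counter (not AES)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        block = nonce + counter.to_bytes(8, "big")
        out += hmac.new(key, block, hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


def encrypt_params(model_key: bytes, plaintext: bytes) -> bytes:
    """Owner side: return nonce || ciphertext || tag."""
    enc_key, mac_key = _subkeys(model_key)
    nonce = secrets.token_bytes(NONCE_LEN)
    stream = _keystream(enc_key, nonce, len(plaintext))
    body = bytes(p ^ s for p, s in zip(plaintext, stream))
    tag = hmac.new(mac_key, nonce + body, hashlib.sha256).digest()
    return nonce + body + tag


def decrypt_params(model_key: bytes, blob: bytes) -> bytes:
    """Enclave side: verify the tag, then recover the plaintext."""
    enc_key, mac_key = _subkeys(model_key)
    nonce, body, tag = blob[:NONCE_LEN], blob[NONCE_LEN:-TAG_LEN], blob[-TAG_LEN:]
    expected = hmac.new(mac_key, nonce + body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("ciphertext failed integrity check")
    stream = _keystream(enc_key, nonce, len(body))
    return bytes(c ^ s for c, s in zip(body, stream))


# Owner side: generate a key and encrypt the serialized parameters before upload.
# The parameter names and values here are made up for illustration.
model_key = secrets.token_bytes(32)
params = json.dumps({"fc.weight": [0.12, -0.5], "fc.bias": [0.01]}).encode()
encrypted_model = encrypt_params(model_key, params)

# Enclave side: decrypt after the key arrives over the attested channel.
restored = decrypt_params(model_key, encrypted_model)
```

The point of the sketch is the split of responsibilities: only ciphertext leaves the owner's machine, and the plaintext parameters exist only where the key is available.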

Figure 2: Encryption and Decryption Process

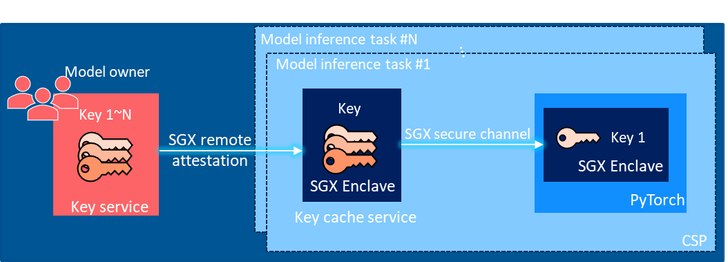

The key retrieval process is shown in Figure 3. The key distribution service manages all model keys and model IDs. It runs in an Intel® SGX enclave and provides the key to the model's enclave when necessary.
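As a rough illustration of what such a service keeps track of, the sketch below stores one key per model ID and releases it only to a caller whose enclave measurement is on an allow-list. Everything here is an assumption made for the example: the class name `KeyDistributionService`, its methods, the model ID, and the measurement string are hypothetical, and a plain string comparison stands in for verifying a real Intel® SGX attestation quote. The actual service also runs inside an enclave, which this in-memory sketch does not model.

```python
import secrets


class KeyDistributionService:
    """Toy in-memory key service: one 256-bit key per model ID."""

    def __init__(self, trusted_measurements):
        # Enclave measurements (hex strings) that are allowed to receive keys.
        # In a real system these would be checked against a verified quote.
        self._trusted = set(trusted_measurements)
        self._keys = {}  # model ID -> model key

    def register_model(self, model_id: str) -> bytes:
        """Create and store a fresh key for a new model ID."""
        if model_id in self._keys:
            raise ValueError(f"model {model_id!r} is already registered")
        key = secrets.token_bytes(32)
        self._keys[model_id] = key
        return key

    def release_key(self, model_id: str, enclave_measurement: str) -> bytes:
        """Return the model key only to an enclave with a trusted measurement."""
        if enclave_measurement not in self._trusted:
            raise PermissionError("enclave measurement is not trusted")
        if model_id not in self._keys:
            raise KeyError(model_id)
        return self._keys[model_id]


# Hypothetical usage: the owner registers a model, a trusted enclave fetches its key.
service = KeyDistributionService(trusted_measurements={"a1b2c3"})
owner_key = service.register_model("resnet50-v1")
enclave_key = service.release_key("resnet50-v1", "a1b2c3")
```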

Figure 3: Key Transfer Process

When the model is used for inference in the public cloud, the PyTorch enclave automatically requests the model key from the local key cache service. The key is encrypted and sent to the enclave over the Intel® SGX secure channel. The enclave started by PyTorch uses the key to decrypt the model parameters and perform the calculations. Because the model parameters stay under Intel® SGX-based hardware protection throughout the entire process, the model is available for use and invisible to adversaries at the same time.
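The request path can be sketched as a small cache that fetches a model key once and hands it to the enclave wrapped under a session key. This is a simplified sketch under several assumptions that the article does not spell out: the names `LocalKeyCache`, `wrap_key`, and `unwrap_key` are hypothetical, the cache is assumed to forward misses to an upstream key source, the session key is assumed to have been agreed during remote attestation, and the wrapping uses a standard-library HMAC-SHA256 construction as a stand-in for the real secure channel.

```python
import hashlib
import hmac
import secrets
from typing import Callable


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def wrap_key(session_key: bytes, model_key: bytes) -> bytes:
    """Encrypt a 32-byte model key for transport: nonce || wrapped || tag."""
    if len(model_key) != 32:
        raise ValueError("this sketch only wraps 32-byte keys")
    nonce = secrets.token_bytes(16)
    mask = hmac.new(session_key, b"mask" + nonce, hashlib.sha256).digest()
    wrapped = _xor(model_key, mask)
    tag = hmac.new(session_key, b"tag" + nonce + wrapped, hashlib.sha256).digest()
    return nonce + wrapped + tag


def unwrap_key(session_key: bytes, message: bytes) -> bytes:
    """Enclave side: check the tag, then recover the model key."""
    nonce, wrapped, tag = message[:16], message[16:48], message[48:]
    expected = hmac.new(session_key, b"tag" + nonce + wrapped, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrapped key failed integrity check")
    mask = hmac.new(session_key, b"mask" + nonce, hashlib.sha256).digest()
    return _xor(wrapped, mask)


class LocalKeyCache:
    """Fetches each model key from upstream once, then serves it from memory."""

    def __init__(self, fetch_upstream: Callable[[str], bytes]) -> None:
        self._fetch_upstream = fetch_upstream
        self._cache = {}  # model ID -> model key
        self.upstream_calls = 0

    def get_wrapped_key(self, model_id: str, session_key: bytes) -> bytes:
        if model_id not in self._cache:
            self._cache[model_id] = self._fetch_upstream(model_id)
            self.upstream_calls += 1
        return wrap_key(session_key, self._cache[model_id])


# Hypothetical usage: a dict plays the role of the upstream key source.
upstream_keys = {"resnet50-v1": secrets.token_bytes(32)}
cache = LocalKeyCache(upstream_keys.__getitem__)
session_key = secrets.token_bytes(32)  # assumed output of remote attestation
message = cache.get_wrapped_key("resnet50-v1", session_key)
received_key = unwrap_key(session_key, message)
```

Wrapping with a fresh nonce on every request means two responses for the same model never look alike on the wire, even though the cached key is unchanged.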

Conclusion

This article introduces an Intel® SGX-based solution for protecting PyTorch model parameters during inference. With this solution, the Intel® SGX enclave keeps the model confidential while it runs inference in public clouds. The solution has been successfully verified on Alibaba Cloud ECS instances. You can refer to the Alibaba best practice here. More details can also be found on GitHub.

Acknowledgement

This post reflects the work of many people at Intel, including Yang Huang, Hanyu Long, Jiong Gong, Shaopu Yan, Bing Zhu, Kai Wang, and Zhenlong Ji, as well as our partner, the Alibaba Cloud Security team.

Contact bing.zhu@intel.com for more information.
