Greetings, I am considering buying an NCS2 for my Raspberry Pi 4B. I have a VGG16 model that uses a sigmoid layer for binary classification, and I use Grad-CAM with TensorFlow to produce class activation maps. Is it possible to run a VGG16 binary classifier together with Grad-CAM on my Raspberry Pi with the NCS2 device? I read the compatible layers PDF and did not find anything regarding the sigmoid layer.

Hi Publio,

Two conditions must be met for your custom model to run on the Intel® Neural Compute Stick 2:

1) Model Optimizer can convert your custom model to IR format files.

2) The Inference Engine MYRIAD plugin can run inference on those IR format files.
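The second condition can be checked locally once the IR files exist. The following is only a minimal sketch, assuming the 2021-era OpenVINO Python API (`openvino.inference_engine.IECore`) and an NCS2 plugged into the machine; the helper name `check_myriad_support` and the file names `model.xml` / `model.bin` are placeholders, not part of any Intel tool.

```python
def check_myriad_support(xml_path, bin_path, device="MYRIAD"):
    """Return (ok, message); ok is True only if the IR loads on the device."""
    try:
        # Imported lazily so the helper still reports cleanly without OpenVINO.
        from openvino.inference_engine import IECore
    except ImportError as exc:
        return False, f"OpenVINO is not available: {exc}"

    try:
        ie = IECore()
        net = ie.read_network(model=xml_path, weights=bin_path)
        # Loading raises if the plugin rejects a layer, the IR files are
        # missing, or no matching device is attached.
        ie.load_network(network=net, device_name=device)
    except Exception as exc:
        return False, f"Could not load the IR on {device}: {exc}"
    return True, f"IR loaded on {device}"


# Placeholder paths: replace with the files produced by Model Optimizer.
ok, message = check_myriad_support("model.xml", "model.bin")
print(ok, message)
```

A `False` result does not say which condition failed on its own, so the returned message is worth reading: an unsupported layer, a missing file, and an unplugged stick all end up in the same branch.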

You can check the list of supported framework layers against the layers used in your custom model.

You can also verify your custom model by running Deep Learning Workbench in the Intel® DevCloud for the Edge, where you can select Intel® Neural Compute Stick 2 as the device with nothing more than an Internet connection. If you can create a project by importing your converted IR format files, select MYRIAD as the device, and generate a performance graph at the end, then your custom model can run on Intel® Neural Compute Stick 2.

In the Class Activation Map methods implemented in PyTorch, a target layer must be chosen from the selected PyTorch model in order to compute the CAM. Inference on Intel® Neural Compute Stick 2, however, requires the model to be converted to IR. Hence, it is not possible to employ Grad-CAM with Intel® Neural Compute Stick 2.
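To make the dependency on the original framework concrete, here is a rough sketch of the Grad-CAM arithmetic itself in plain Python. It assumes you already have the target layer's feature maps and the gradients of the class score with respect to them (for example from backpropagation in TensorFlow or PyTorch); the function name `grad_cam` and the toy numbers are illustrative only.

```python
def grad_cam(feature_maps, gradients):
    """Compute a Grad-CAM heat map.

    feature_maps and gradients each hold K channels, every channel an
    H x W nested list. gradients[k][i][j] is the derivative of the class
    score with respect to feature_maps[k][i][j].
    """
    height, width = len(feature_maps[0]), len(feature_maps[0][0])
    # Channel weight = global average of that channel's gradients.
    weights = [sum(map(sum, g)) / (height * width) for g in gradients]
    # Weighted sum over channels, then ReLU to keep positive evidence only.
    return [
        [
            max(0.0, sum(w * fm[i][j] for w, fm in zip(weights, feature_maps)))
            for j in range(width)
        ]
        for i in range(height)
    ]


# Toy example: two 2x2 feature maps with constant gradients.
maps = [[[1.0, 2.0], [3.0, 4.0]], [[4.0, 3.0], [2.0, 1.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
heatmap = grad_cam(maps, grads)
print(heatmap)
```

The `gradients` argument is the part that comes from backpropagation in the training framework, which is why the heat map is normally computed there and then resized and overlaid on the input image.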

Regards,

Yu Chern

Hi Yu,

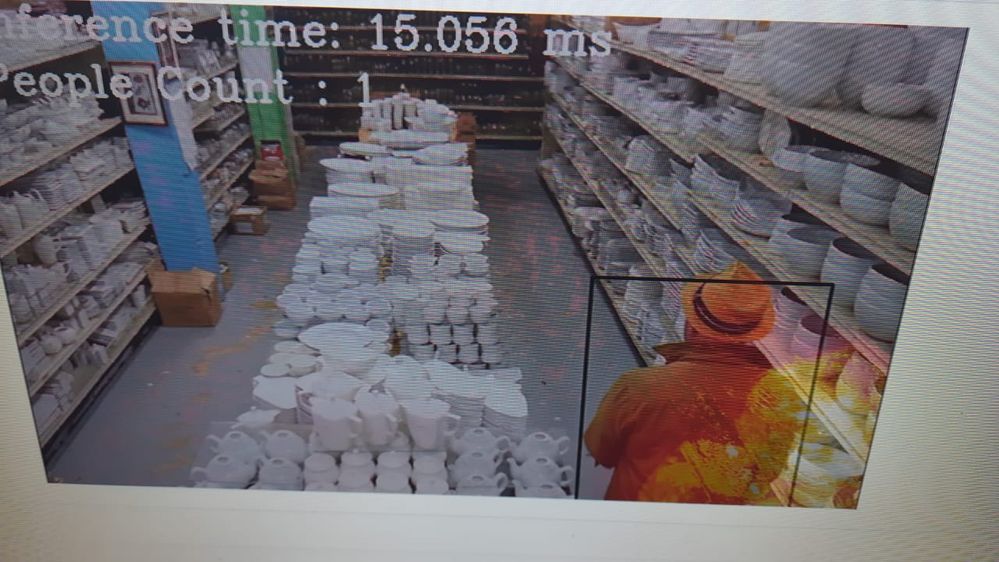

Thank you for your reply. I could not access the notebook from the Optimize button in the Edge section of the website, so I was not able to test my model. Additionally, does converting a model to an intermediate representation (IR) affect its accuracy? Also, do I need to run two models to execute Grad-CAM, not necessarily using PyTorch? I'd like to run inference and Grad-CAM together to visualize the heat map. Two days ago I found information about some users who applied Grad-CAM to their model using C++. I was not able to find that support question again, but I did save a picture. I'd like to do the same for image classification, in Python.

Hi Publio,

You have to sign in to your account and enroll it in Intel DevCloud. After you receive a welcome email from Intel, you will be able to access Deep Learning Workbench in Intel DevCloud.

Model Optimizer performs a few optimizations to remove excess layers and group operations when possible into simpler, faster graphs. Often this performance boost is achieved at the cost of a small accuracy reduction.
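One way to see how large that reduction is for a particular model is to run the same inputs through the original model and the converted IR and compare the sigmoid outputs. The sketch below assumes both sets of outputs have already been collected into plain lists; `compare_outputs` is a hypothetical helper and the numbers are made up for illustration, not measured results.

```python
def compare_outputs(original_probs, ir_probs, threshold=0.5):
    """Compare sigmoid outputs of the original model and the converted IR.

    Returns (max_abs_diff, agreement), where agreement is the fraction of
    samples on which both models land on the same side of the threshold.
    """
    if len(original_probs) != len(ir_probs) or not original_probs:
        raise ValueError("Both lists must be non-empty and of equal length")
    pairs = list(zip(original_probs, ir_probs))
    max_abs_diff = max(abs(a - b) for a, b in pairs)
    agree = sum((a >= threshold) == (b >= threshold) for a, b in pairs)
    return max_abs_diff, agree / len(pairs)


# Made-up numbers for illustration only.
framework_out = [0.91, 0.12, 0.49, 0.75]
ir_out = [0.90, 0.13, 0.51, 0.75]
max_diff, agreement = compare_outputs(framework_out, ir_out)
print(max_diff, agreement)
```

For a binary classifier, small numeric differences matter mostly for samples close to the decision threshold, so the agreement rate is usually the more informative of the two numbers.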

Regarding Grad-CAM, you might be interested in this tutorial.

The Store Aisle Monitor Demo is also available in Python. The motion heatmap in this demo is generated with OpenCV in Python.

Regards,

Yu Chern

Hi Publio,

This thread will no longer be monitored since we have provided a solution. If you need any additional information from Intel, please submit a new question.

Regards,

Yu Chern
