I'm trying to run the Human Pose Estimation Demo with the RealSense Depth Camera D435 on OS X, but it fails to start:

    sudo ./human_pose_estimation_demo -m /Users/mars/intel/openvino_2019.3.376/deployment_tools/open_model_zoo/tools/downloader/intel/human-pose-estimation-0001/FP32/human-pose-estimation-0001.xml -i /dev/bus/usb/020/017
    Password:
    InferenceEngine:
        API version ............ 2.1
        Build .................. 32974
        Description ....... API
    Parsing input parameters
    [ ERROR ] Failed to create plugin /Users/mars/intel/openvino_2019.3.376/deployment_tools/inference_engine/lib/intel64/libMKLDNNPlugin.dylib for device CPU
    Please, check your environment
    Cannot load library '/Users/mars/intel/openvino_2019.3.376/deployment_tools/inference_engine/lib/intel64/libMKLDNNPlugin.dylib': dlopen(/Users/mars/intel/openvino_2019.3.376/deployment_tools/inference_engine/lib/intel64/libMKLDNNPlugin.dylib, 1): Library not loaded: @rpath/libmkl_tiny_tbb.dylib
      Referenced from: /Users/mars/intel/openvino_2019.3.376/deployment_tools/inference_engine/lib/intel64/libMKLDNNPlugin.dylib
      Reason: image not found

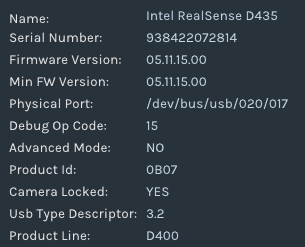

The input device name I found via the realviewer:

How can I use the camera as the input device?

1 Reply

This happens because the loader cannot find libmkl_tiny_tbb.dylib, which libMKLDNNPlugin.dylib depends on. I believe the easiest fix is to copy that file next to the other libraries. Hopefully, the command below will fix the problem.

Remember to run it after initializing the environment variables, since it relies on $INTEL_OPENVINO_DIR:

sudo cp $INTEL_OPENVINO_DIR/deployment_tools/inference_engine/external/mkltiny_mac/lib/libmkl_tiny_tbb.dylib $INTEL_OPENVINO_DIR/deployment_tools/inference_engine/lib/intel64/
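If you would rather check things before copying, the same step can be wrapped in a small guard. This is only a sketch: `copy_mkl_tiny` is a hypothetical helper name, not something shipped with OpenVINO, and it assumes the directory layout from the command above. It refuses to run when the install root is empty (for example, because the environment was not initialized, which is typically done by sourcing `bin/setupvars.sh` from the install directory) or when the source library is missing.

```shell
#!/bin/sh
# Sketch only: copy libmkl_tiny_tbb.dylib next to libMKLDNNPlugin.dylib.
# copy_mkl_tiny is a hypothetical helper, not part of OpenVINO.
copy_mkl_tiny() {
    root="$1"

    # An empty root usually means the environment variables were not initialized.
    if [ -z "$root" ]; then
        echo "install root is empty; initialize the environment first" >&2
        return 1
    fi

    # Paths taken from the cp command above.
    src="$root/deployment_tools/inference_engine/external/mkltiny_mac/lib/libmkl_tiny_tbb.dylib"
    dst="$root/deployment_tools/inference_engine/lib/intel64"

    if [ ! -f "$src" ]; then
        echo "source library not found: $src" >&2
        return 1
    fi
    if [ ! -d "$dst" ]; then
        echo "destination directory not found: $dst" >&2
        return 1
    fi

    cp "$src" "$dst/" && echo "copied libmkl_tiny_tbb.dylib to $dst"
}

# Usage, after initializing the environment (run as root if the
# install directory is not writable by your user):
#   copy_mkl_tiny "$INTEL_OPENVINO_DIR"
```

Either error message points at the likely cause: an unset variable, or an install that does not contain the library at the expected path.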
