+ Description:

IR inference produces very different results in ‘GNA_SW_FP32’ mode and ‘GNA_HW’ mode.

+ Question:

*Q1: Is the result described above ‘reasonable’?

*Q2: If the result is not reasonable, is there a way to make it reasonable?

+ Sample code: attached file

* Required packages : VEN_requirement_date20211129.txt

* Script 1 (GNA_HW) : Issue00_GNAPrecisionIssue_Infer_GNA_HW.ipynb

* Script 2 (GNA_SW_FP32) : Issue00_GNAPrecisionIssue_Infer_GNA_SW_FP32.ipynb

* LSTM (PyTorch) : Export_Main00\CPU_TraceONNX_LSTM_CellTEST

* LSTM (ONNX from PyTorch) : Export_Main00\CPU_TraceONNX_LSTM_CellTEST.onnx

* LSTM(IR) : CPU_TraceONNX_LSTM_CellTEST.xml

* LSTM(IR) : CPU_TraceONNX_LSTM_CellTEST.bin

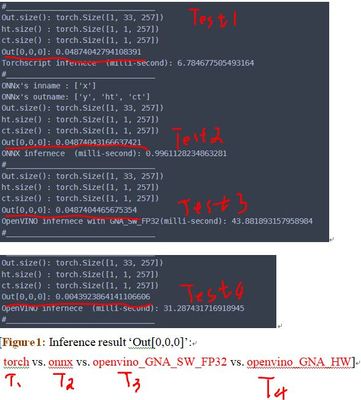

+ Example & Test:

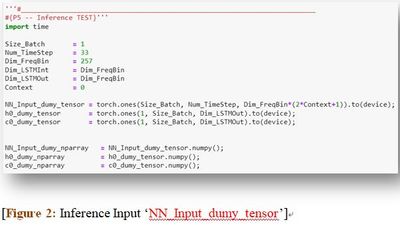

* Input: A tensor with the shape [batch size: 1, time steps: 33, feature bins: 257].

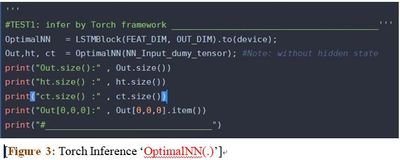

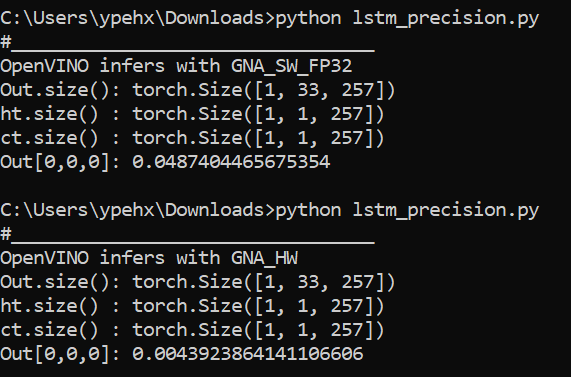

* Test 1: Run inference on LSTM_Torch with FP32 precision; the result is 0.0487.

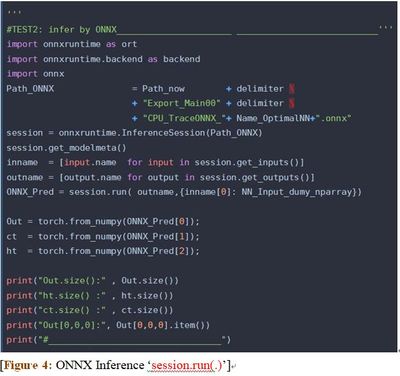

* Test 2: Run inference on LSTM_Onnx with FP32 precision; the result is 0.0487.

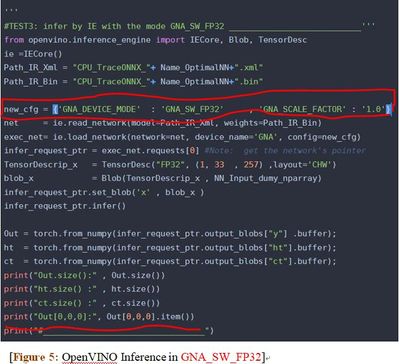

* Test 3: Run inference on LSTM_IR in ‘GNA_SW_FP32’ mode; the result is 0.0487.

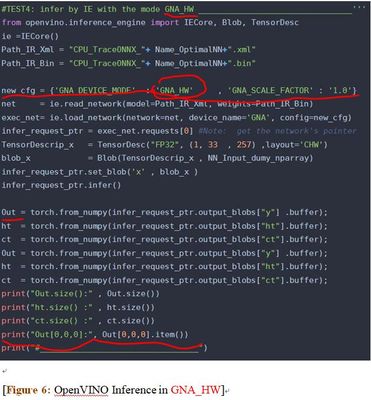

* Test 4: Run inference on LSTM_IR in ‘GNA_HW’ mode; the result is 0.0043.
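
To put a number on the gap between Test 3 and Test 4, a small sketch like the following can help. It uses only the rounded values reported above; the function name `relative_error` is illustrative and is not part of the attached notebooks.

```python
def relative_error(reference: float, measured: float) -> float:
    """Return |measured - reference| / |reference|."""
    if reference == 0.0:
        raise ValueError("reference must be non-zero")
    return abs(measured - reference) / abs(reference)


# Rounded results quoted in Test 3 (GNA_SW_FP32) and Test 4 (GNA_HW).
gna_sw_fp32 = 0.0487
gna_hw = 0.0043

rel = relative_error(gna_sw_fp32, gna_hw)
print(f"absolute difference: {abs(gna_sw_fp32 - gna_hw):.4f}")
print(f"relative error: {rel:.1%}")
```

With these values the GNA_HW result is off from the FP32 reference by roughly 91%, which is why the difference looks like more than ordinary rounding noise.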

Hi tea6329714,

Thanks for reaching out and sharing your findings with us.

I got the same results as you:

GNA_SW_FP32 = 0.0487404465675354

GNA_HW = 0.0043923864141106606

I also tried the other execution modes (GNA_AUTO, GNA_SW_EXACT, GNA_HW_WITH_SW_FBACK), and all of them gave the same result, 0.0043923864141106606.
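
A comparison across modes like this one can be organized as in the sketch below. The mode names are those mentioned in this thread, and the key name `GNA_DEVICE_MODE` is assumed from the GNA plugin's configuration parameters. `run_inference` is a hypothetical callable (config dict in, scalar result out) standing in for whatever the notebooks do to load the IR and run it; it is not an OpenVINO function.

```python
from typing import Callable, Dict, List

# Execution modes mentioned in this thread.
MODES: List[str] = [
    "GNA_SW_FP32",
    "GNA_HW",
    "GNA_AUTO",
    "GNA_SW_EXACT",
    "GNA_HW_WITH_SW_FBACK",
]


def build_configs(modes: List[str]) -> List[Dict[str, str]]:
    """Build one plugin config dict per execution mode.

    The key name "GNA_DEVICE_MODE" is an assumption based on the
    GNA plugin's configuration parameters.
    """
    return [{"GNA_DEVICE_MODE": mode} for mode in modes]


def compare_modes(
    run_inference: Callable[[Dict[str, str]], float],
    modes: List[str] = MODES,
) -> Dict[str, float]:
    """Run the (hypothetical) inference callable once per mode."""
    return {
        cfg["GNA_DEVICE_MODE"]: run_inference(cfg)
        for cfg in build_configs(modes)
    }


def deviating_modes(results: Dict[str, float], reference_mode: str,
                    tolerance: float = 1e-3) -> List[str]:
    """List the modes whose result differs from the reference mode's."""
    ref = results[reference_mode]
    return [m for m, r in results.items() if abs(r - ref) > tolerance]
```

Passing the results quoted in this thread to `deviating_modes` with `GNA_SW_FP32` as the reference would flag every other mode.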

According to the description of Execution Modes, this might be reasonable: in GNA_SW_FP32 mode, the GNA-compiled graph is executed on the CPU, but parameters and calculations are substituted from low precision to floating point (FP32). In the other modes, as listed under Supported Configuration Parameters, GNA_PRECISION is I16 (the default value).
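
To illustrate why 16-bit integer arithmetic can drift away from FP32, here is a minimal sketch of generic symmetric int16 quantization with a scale factor. It is a textbook illustration only, not the GNA plugin's actual algorithm, and the function names and sample values are chosen for the example.

```python
INT16_MIN, INT16_MAX = -32768, 32767


def quantize_i16(x: float, scale: float) -> int:
    """Scale a float, round to the nearest integer, and clamp to int16."""
    q = round(x * scale)
    return max(INT16_MIN, min(INT16_MAX, q))


def dequantize_i16(q: int, scale: float) -> float:
    """Map an int16 value back to a float using the same scale."""
    return q / scale


def roundtrip_error(x: float, scale: float) -> float:
    """Absolute error after quantizing and dequantizing x."""
    return abs(dequantize_i16(quantize_i16(x, scale), scale) - x)


# Illustrative values and scale factors (assumptions, not taken from the model).
values = [0.0487, 0.5, 3.0]
for scale in (10.0, 1000.0, 100000.0):
    errors = [roundtrip_error(v, scale) for v in values]
    print(f"scale={scale:>9}: errors={errors}")
```

A scale factor that is too small rounds small values to zero, and one that is too large saturates large values at the int16 limits. Errors of either kind can accumulate across the time steps of an LSTM. The GNA plugin documentation lists a scale factor parameter (GNA_SCALE_FACTOR); whether tuning it narrows the gap for this model has not been verified here.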

Regards,

Peh

Hi tea6329714,

This thread will no longer be monitored since we have provided an answer. If you need any additional information from Intel, please submit a new question.

Regards,

Peh
