Hello,

When I run mpitune on my cluster, some errors occur.

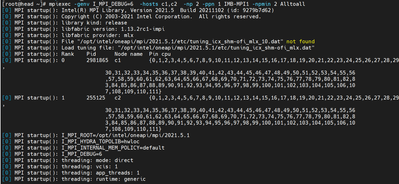

#mpitune command

```shell
mpitune -genv I_MPI_DEBUG=6 -np 2 -ppn 1 -hosts c1,c2 -m collect -c /opt/intel/oneapi/mpi/latest/etc/tune_cfg/sample_alltoall.cfg
```

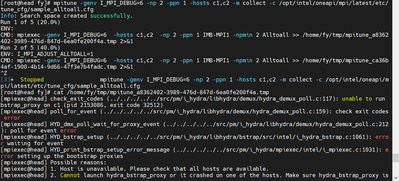

#Error

```text
[mpiexec@c1] check_exit_codes (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:117): unable to run bstrap_proxy on c2 (pid 2567391, exit code 32512)
[mpiexec@c1] poll_for_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:159): check exit codes error
[mpiexec@c1] HYD_dmx_poll_wait_for_proxy_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:212): poll for event error
[mpiexec@c1] HYD_bstrap_setup (../../../../../src/pm/i_hydra/libhydra/bstrap/src/intel/i_hydra_bstrap.c:1061): error waiting for event
[mpiexec@c1] HYD_print_bstrap_setup_error_message (../../../../../src/pm/i_hydra/mpiexec/intel/i_mpiexec.c:1031): error setting up the bootstrap proxies
[mpiexec@c1] Possible reasons:
[mpiexec@c1] 1. Host is unavailable. Please check that all hosts are available.
[mpiexec@c1] 2. Cannot launch hydra_bstrap_proxy or it crashed on one of the hosts. Make sure hydra_bstrap_proxy is available on all hosts and it has right permissions.
[mpiexec@c1] 3. Firewall refused connection. Check that enough ports are allowed in the firewall and specify them with the I_MPI_PORT_RANGE variable.
[mpiexec@c1] 4. Ssh bootstrap cannot launch processes on remote host. Make sure that passwordless ssh connection is established across compute hosts.
[mpiexec@c1] You may try using -bootstrap option to select alternative launcher.
```
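One way to read the `exit code 32512` in the first log line, offered as a hedged sketch rather than a diagnosis: a POSIX process exit status is only 8 bits, so 32512 looks like a raw wait status whose high byte holds the real exit code. 32512 >> 8 is 127, which shells use for "command not found", so the bootstrap proxy may simply not have been found on c2. The helper names `decode_wait_status` and `check_remote_host` are hypothetical, and the remote check assumes hydra_bstrap_proxy is installed at the same path on every node.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for reading the bootstrap error above.

# Assumption: hydra reports a raw wait status; the exit status is its high byte.
decode_wait_status() {
  local status=$1
  echo $(( (status >> 8) & 255 ))
}

# Check reasons 2 and 4 from the message for one remote host.
# Assumption: hydra_bstrap_proxy lives at the same path on every node.
check_remote_host() {
  local host=$1 proxy
  # Reason 4: ssh must work without a password prompt.
  if ! ssh -o BatchMode=yes "$host" true; then
    echo "$host: passwordless ssh failed" >&2
    return 1
  fi
  # Locate the proxy in the local environment (after sourcing setvars.sh).
  proxy=$(command -v hydra_bstrap_proxy) || {
    echo "hydra_bstrap_proxy not found locally; source setvars.sh first" >&2
    return 1
  }
  # Reason 2: the same file must exist and be executable on the remote host.
  if ! ssh -o BatchMode=yes "$host" test -x "$proxy"; then
    echo "$host: $proxy missing or not executable" >&2
    return 1
  fi
  echo "$host: ok"
}
```

On c1, after sourcing setvars.sh, `decode_wait_status 32512` prints 127 and `check_remote_host c2` reports which of the two checks fails, if any.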

However, when I run the mpiexec command from sample_alltoall.cfg directly, it executes successfully:

```shell
mpiexec -genvlist I_MPI_DEBUG=6 -hosts c1,c2 -np 2 -ppn 1 IMB-MPI1 -npmin 2 Alltoall
```

Could you provide any suggestions?

Thanks.


Hi,

Thanks for reaching out to us.

Could you please provide the following details so we can investigate your issue further?

- Operating system & its version.

- The version of the Intel MPI Library or of the Intel oneAPI HPC Toolkit.

Could you also confirm whether these errors appear in the temporary files (mpitune*.tmp) generated by the mpitune command?
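A minimal sketch for checking those temporary files. It assumes the mpitune*.tmp files are written to the directory where mpitune was launched (pass another directory otherwise); the function name `scan_mpitune_tmp` is hypothetical, and the search patterns are simply taken from the log in the original post.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print lines in mpitune*.tmp files that match the
# bootstrap errors reported in the original post.
scan_mpitune_tmp() {
  local dir=${1:-.}
  local found=0 f
  for f in "$dir"/mpitune*.tmp; do
    # An unmatched glob stays literal, so skip it.
    [ -e "$f" ] || continue
    found=1
    # -H prefixes each match with the file name, -n with the line number.
    grep -Hn -e 'unable to run bstrap_proxy' \
             -e 'error setting up the bootstrap proxies' "$f"
  done
  if [ "$found" -eq 0 ]; then
    echo "no mpitune*.tmp files in $dir" >&2
    return 1
  fi
}
```

Running `scan_mpitune_tmp` with no argument scans the current directory; a non-zero status with the "no mpitune*.tmp files" message means the files are elsewhere.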

Thanks & Regards,

Santosh

Hi,

We haven't heard back from you. Could you please provide the details requested above?

Also, could you please let us know your use case for running the mpitune command on your cluster?

Thanks & Regards,

Santosh

Hi,

Sorry for my late reply.

1. Operating system & its version.

My system is CentOS 8.3.

2. The version of the Intel MPI Library or of the Intel oneAPI HPC Toolkit.

The Intel oneAPI HPC Toolkit version is 2022.1.2.

Also, I can run the mpiexec command successfully after setting the environment variable with `export UCX_TLS=tcp`.

Steps:

1. `source /opt/intel/oneapi/setvars.sh`
2. `export UCX_TLS=tcp`
3. Run the mpiexec command and the mpitune command.
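The steps above, collected into one script as a sketch. It assumes the default /opt/intel/oneapi install prefix, reuses the host names and config path from the original post, and assumes mpiexec forwards the exported UCX_TLS to the remote rank on c2. Each step is skipped if its file or command is missing, so the script can be dry-run on a machine without oneAPI; only the mpitune call from step 3 is shown.

```shell
#!/usr/bin/env bash
# Sketch of the UCX_TLS=tcp workaround; paths and hosts are from the post.
SETVARS=/opt/intel/oneapi/setvars.sh
CFG=/opt/intel/oneapi/mpi/latest/etc/tune_cfg/sample_alltoall.cfg

# Step 1: load the oneAPI environment, if it is installed here.
if [ -f "$SETVARS" ]; then
  . "$SETVARS" >/dev/null
fi

# Step 2: restrict UCX to its TCP transport (assumed to be forwarded to c2).
export UCX_TLS=tcp

# Step 3: run the tuning command from the original post, if available.
if command -v mpitune >/dev/null 2>&1; then
  mpitune -genv I_MPI_DEBUG=6 -np 2 -ppn 1 -hosts c1,c2 -m collect -c "$CFG"
else
  echo "mpitune not found; skipping step 3" >&2
fi
```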

Hi,

Intel® MPI Library provides the following tuning utilities:

- Autotuner

- mpitune_fast

- mpitune

The following scenarios show when to use each tuning utility:

- Autotuner is the recommended utility for application-specific tuning.

- mpitune_fast is the recommended easy-to-use utility for cluster-wide tuning.

- mpitune can be used for both application-specific and cluster-wide tuning.

For more information regarding the Intel MPI tuning utilities, please refer to the below link:

We were able to reproduce your issue on our end and have reported it to the relevant development team, who are looking into it.

For application-specific tuning, you can use Autotuner for the time being.

For cluster-wide tuning, you can use mpitune_fast.
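For the Autotuner route, a two-pass sketch. It relies on the I_MPI_TUNING_MODE, I_MPI_TUNING_BIN_DUMP, and I_MPI_TUNING_BIN variables as described in the Intel MPI Library Developer Reference; please check them against your library version. The results file name tuning_results.dat is an arbitrary choice, and the IMB-MPI1 Alltoall run from this thread stands in for a real application. mpitune_fast is not shown.

```shell
#!/usr/bin/env bash
# Two-pass Autotuner sketch; file name and benchmark are placeholders.
RESULTS=./tuning_results.dat
APP=(IMB-MPI1 -npmin 2 Alltoall)

run_app() {
  # Launch the workload on both nodes, if mpiexec is available.
  if command -v mpiexec >/dev/null 2>&1; then
    mpiexec -hosts c1,c2 -np 2 -ppn 1 "${APP[@]}"
  else
    echo "mpiexec not found; skipping run" >&2
  fi
}

# Pass 1: let the Autotuner try collective algorithms and save its choices.
export I_MPI_TUNING_MODE=auto
export I_MPI_TUNING_BIN_DUMP="$RESULTS"
run_app

# Pass 2: turn autotuning off and load the saved choices instead.
unset I_MPI_TUNING_MODE I_MPI_TUNING_BIN_DUMP
export I_MPI_TUNING_BIN="$RESULTS"
run_app
```

Separating the passes keeps the tuning overhead out of production runs: only the first run explores algorithms, and later runs just read the saved file.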

Thanks & Regards,

Santosh

Yes, I have tested it with the latest version and the error no longer occurs. Thanks.