
I calculate the max FLOPS on Skylake as CPU frequency * 16.

Is it CPU frequency * 32 on the Gold version of Skylake?



If I recall correctly, all of the Gold 6000 processors have two AVX512 units, so they are capable of 32 DP FLOPS/cycle. The Gold 5000 processors have one AVX512 unit (except for the Gold 5122, which has two), so they are capable of 16 DP FLOPS/cycle.

The frequency that you will get when running AVX512 instructions will be lower than the nominal frequency in most cases. The minimum and maximum values for each processor model are included in the Xeon Scalable Processor Specification Update (document 336065).


Gold 5000 processors: 16 DP = 512 / 64 * 2

How should I understand the "2"?

Gold 6000 processors: 32 DP = 512 / 64 * 2 * 2

How should I understand the two "2"s?

Does that mean 2 compute units or 4 compute units?


There are FP arith 128-bit and 256-bit on Skylake.

There are FP arith 128-bit, 256-bit, and 512-bit on Skylakex.

Does that mean only the E3 v5 is Skylake, and the Scalable processors are Skylakex?


GHui, the *2 is due to the peak FLOPS being achieved with FMA instructions that do 2 flops (multiply and add) together in 1 instruction.


Starting with the Xeon E5 v3 (Haswell) core, each floating-point vector unit supports the Fused Multiply-Add instructions, which perform two operations on each element. So for AVX512, each unit performs 2 operations on each of 8 elements in one cycle.

The use of the "Skylake" label is quite confusing.

- When used by itself, "Skylake" refers to the "Skylake client" core. This uses the new core architecture, but does not support the AVX512 instruction set. The AVX2/FMA instruction set provides the highest FP operation rate, with 2 256-bit functional units providing a total of: 2 functional units * 4 64-bit elements/functional unit * 2 operations/element = 16 FP ops/cycle.

- "Skylake Xeon" refers to cores that include support for the AVX512 instruction set. These may have either one or two 512-bit functional units for AVX512 instructions, depending on the model.

- Server processors (i.e., "Xeon" processors) can be built using either the "Skylake client" core or the "Skylake Xeon" core.

  - Xeon D-21xx processors appear to use the "Skylake Xeon" core with one AVX512 unit.

  - Xeon E3-12xx v5 processors use the "Skylake client" core and do not support AVX512.

  - Xeon E3-15xx v5 processors use the "Skylake client" core, and all include integrated graphics engines.

  - Xeon W-21xx processors use the "Skylake Xeon" core with either one or two AVX512 units.

  - Xeon Scalable processors (Bronze, Silver, Gold, Platinum) use the "Skylake Xeon" core with either one or two AVX512 units.

No doubt things will become even more confusing in the future....

The information above comes from https://en.wikipedia.org/wiki/List_of_Intel_Xeon_microprocessors and from checking out the links to specific processor information pages on https://ark.intel.com/
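The per-cycle arithmetic used throughout this thread can be written as a small sketch. The function name `dp_flops_per_cycle` and its parameters are illustrative only (they do not come from any Intel tool), and the sketch assumes 64-bit double-precision elements and one FMA issued per unit per cycle.

```python
def dp_flops_per_cycle(vector_bits: int, fma_units: int) -> int:
    """Peak double-precision FLOPs per cycle for one core (illustrative helper)."""
    elements = vector_bits // 64   # number of 64-bit elements in one vector
    ops_per_element = 2            # an FMA counts as one multiply plus one add
    return fma_units * elements * ops_per_element


# Cases discussed in the thread
print(dp_flops_per_cycle(256, 2))  # "Skylake client": two 256-bit AVX2/FMA units
print(dp_flops_per_cycle(512, 1))  # "Skylake Xeon" with one AVX512 unit
print(dp_flops_per_cycle(512, 2))  # "Skylake Xeon" with two AVX512 units
```

Multiplying the result by the sustained core frequency and the number of cores gives the peak rate for the whole chip.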


I ran "cat /proc/cpuinfo" to get the model.

On a Xeon Gold 6133 I get model 85 and model name "Intel(R) Xeon(R) Gold 6133 CPU @ 2.50GHz".

Do the Xeon D-21xx, Xeon E3-12xx, Xeon E3-15xx, Xeon W-21xx, Gold 6000, Gold 5000, and so on all have the same model number?

Can I use the model to tell whether a CPU has one or two AVX512 units?


The only way I know to obtain this information is to look at the specific processor product page under https://ark.intel.com/#@Processors

Examples:

- https://ark.intel.com/products/136436/Intel-Xeon-D-2177NT-Processor-19_25M-Cache-1_90-GHz

  - "# of AVX-512 FMA Units 1"

- https://ark.intel.com/products/97463/Intel-Xeon-Processor-E3-1505M-v6-8M-Cache-3_00-GHz

  - "Instruction Set Extensions" does not include AVX-512

- https://ark.intel.com/products/125036/Intel-Xeon-W-2123-Processor-8_25M-Cache-3_60-GHz

  - "# of AVX-512 FMA Units 2"


I got the CPU model name "Intel(R) Xeon(R) Gold 6133 CPU @ 2.50GHz", but I'm not sure whether the 4th word is the "Processor Number".


The Xeon Gold 6133 processor does not appear in Intel's list of Xeon Scalable Processors at https://ark.intel.com/products/series/125191/Intel-Xeon-Scalable-Processors, but it may be a special "OEM" version. There are a number of these listed in the section on "Skylake SP" processors at https://en.wikipedia.org/wiki/List_of_Intel_Xeon_microprocessors -- they either say "OEM" or have a blank in the "Release Price" column.

The only way to be sure of the number of AVX-512 units is to run a benchmark test -- it does not look like the number of AVX-512 units is available from the CPUID instruction or through any other hardware reference.

Given that every other Xeon Gold 6000 processor has 2 AVX-512 FMA units, I would guess that this one does as well, but if it is an OEM part it could have been specially requested to only have one AVX-512 FMA unit.


What benchmark test can do that? How can I get it?


If there is no AVX512 (as on the E3-1585 v5) and I program the AVX512 performance counter, what will happen?


On a Linux system, the command "cat /proc/cpuinfo" will include a list of "flags" that show which features the processor supports. If AVX512 is supported, then the AVX512 subsets that are supported will be listed. On a Xeon Platinum 8160, for example, I get:

# head -26 /proc/cpuinfo | grep 512

flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc art arch_perfmon pebs bts rep_good nopl xtopology nonstop_tsc aperfmperf eagerfpu pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 fma cx16 xtpr pdcm pcid dca sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand lahf_lm abm 3dnowprefetch epb cat_l3 cdp_l3 invpcid_single intel_pt tpr_shadow vnmi flexpriority ept vpid fsgsbase tsc_adjust bmi1 hle avx2 smep bmi2 erms invpcid rtm cqm mpx rdt_a avx512f avx512dq rdseed adx smap clflushopt clwb avx512cd avx512bw avx512vl xsaveopt xsavec xgetbv1 cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local dtherm ida arat pln pts hwp hwp_act_window hwp_epp hwp_pkg_req

This shows that the processor supports the AVX-512F (Foundation) instruction set, as well as the "DQ", "CD", "BW", and "VL" subsets. This is the expected set for a Skylake Xeon processor.
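The same check can be scripted. The following is a minimal sketch, assuming the usual /proc/cpuinfo layout in which a line beginning with "flags" lists the feature names separated by spaces; the helper name `avx512_flags` and the shortened sample text are made up for illustration.

```python
def avx512_flags(cpuinfo_text: str) -> list[str]:
    """Return the sorted AVX-512 feature flags found in /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # Everything after the first colon is the space-separated flag list.
            _, _, value = line.partition(":")
            return sorted(f for f in value.split() if f.startswith("avx512"))
    return []  # no "flags" line found


# Shortened sample in the style of the Xeon Platinum 8160 output above
sample = "flags : fpu fma avx avx2 avx512f avx512dq clwb avx512cd avx512bw avx512vl"
print(avx512_flags(sample))
```

On a Linux system the function can be fed the real file contents, for example `avx512_flags(open("/proc/cpuinfo").read())`. An empty list means no AVX512 subsets are reported. The list says nothing about how many AVX-512 FMA units the processor has.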

Assuming that the processor supports AVX512, the performance of Intel's optimized LINPACK benchmark should make it very clear whether the processor has 1 or 2 AVX-512 FMA units. For Linux, the description of the benchmark is at https://software.intel.com/en-us/mkl-linux-developer-guide-intel-optimized-linpack-benchmark-for-linux. There is also a Windows version of the benchmark that is easy to find.


I have run runme_xeon64, and its output is as follows. Which part shows the AVX-512 FMA units?

[root@centos71611 linpack]# ./runme_xeon64

This is a SAMPLE run script for running a shared-memory version of Intel(R) Distribution for LINPACK* Benchmark. Change it to reflect the correct number of CPUs/threads, problem input files, etc..

*Other names and brands may be claimed as the property of others.

Fri Jun 1 15:06:49 CST 2018

Sample data file lininput_xeon64.

Current date/time: Fri Jun 1 15:06:49 2018

CPU frequency: 2.493 GHz

Number of CPUs: 2

Number of cores: 12

Number of threads: 12

Parameters are set to:

Number of tests: 15

Number of equations to solve (problem size) : 1000 2000 5000 10000 15000 18000 20000 22000 25000 26000 27000 30000 35000 40000 45000

Leading dimension of array : 1000 2000 5008 10000 15000 18008 20016 22008 25000 26000 27000 30000 35000 40000 45000

Number of trials to run : 4 2 2 2 2 2 2 2 2 2 1 1 1 1 1

Data alignment value (in Kbytes) : 4 4 4 4 4 4 4 4 4 4 4 1 1 1 1

Maximum memory requested that can be used=16200901024, at the size=45000

=================== Timing linear equation system solver ===================

| Size | LDA | Align. | Time(s) | GFlops | Residual | Residual(norm) | Check |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1000 | 1000 | 4 | 0.010 | 69.5946 | 8.724688e-13 | 2.975343e-02 | pass |
| 1000 | 1000 | 4 | 0.008 | 88.2280 | 8.724688e-13 | 2.975343e-02 | pass |
| 1000 | 1000 | 4 | 0.009 | 78.1615 | 8.724688e-13 | 2.975343e-02 | pass |
| 1000 | 1000 | 4 | 0.008 | 88.9556 | 8.724688e-13 | 2.975343e-02 | pass |
| 2000 | 2000 | 4 | 0.045 | 119.8063 | 4.565348e-12 | 3.971294e-02 | pass |
| 2000 | 2000 | 4 | 0.041 | 131.0556 | 4.565348e-12 | 3.971294e-02 | pass |
| 5000 | 5008 | 4 | 0.506 | 164.7312 | 2.416245e-11 | 3.369259e-02 | pass |
| 5000 | 5008 | 4 | 0.499 | 167.1883 | 2.416245e-11 | 3.369259e-02 | pass |
| 10000 | 10000 | 4 | 3.743 | 178.1716 | 8.700884e-11 | 3.068020e-02 | pass |


None of the sizes shown in this output are big enough to see asymptotic performance on this system....

The "runme_xeon64" script launches the "xlinpack_xeon64" binary. Running "xlinpack_xeon64 -e" prints out the extended help for the benchmark.

If you set OMP_NUM_THREADS to 1 before running the test, it will limit the execution to a single core and you should get close to asymptotic performance for the problem sizes in the 10000 to 15000 range. Since we don't know what the minimum AVX512 or maximum AVX512 frequencies are for this processor, I would wrap the command in "perf stat" to get the average frequency.

On my Xeon Platinum 8160, modifying the input file to run a problem size of 10000 four times, I get

Performance Summary (GFlops)

| Size | LDA | Align. | Average | Maximal |
| --- | --- | --- | --- | --- |
| 10000 | 10000 | 4 | 79.0453 | 79.4813 |

The output of "perf stat" showed an average of 3.36 GHz.

Dividing 79 GFLOPS by 3.36 GHz gives 23.5 FP operations per cycle, which is much higher than the peak of 16 FP operations per cycle that would be appropriate for a processor with only one AVX512 FMA unit.
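The division above can be turned into a quick check. This is only a sketch: the function names are made up, and the threshold of 16 FP operations per cycle assumes the single-core DP peak for one AVX512 FMA unit discussed earlier in the thread. A measured rate above that threshold implies a second unit; a rate below it does not prove there is only one, since the run may simply have been inefficient.

```python
def flops_per_cycle(gflops: float, avg_ghz: float) -> float:
    """Measured FP operations per cycle from a single-core LINPACK run."""
    return gflops / avg_ghz


def likely_fma_units(gflops: float, avg_ghz: float, per_unit_peak: float = 16.0) -> int:
    """Return 2 if the measured rate exceeds what one AVX512 FMA unit can deliver.

    A return value of 1 only means "not distinguishable from one unit".
    """
    return 2 if flops_per_cycle(gflops, avg_ghz) > per_unit_peak else 1


# Values from the Xeon Platinum 8160 run above: 79.0453 GFlops at 3.36 GHz average
rate = flops_per_cycle(79.0453, 3.36)
print(f"{rate:.1f} FP ops/cycle, likely AVX512 FMA units: {likely_fma_units(79.0453, 3.36)}")
```

The average frequency must come from a measurement taken during the run (for example from "perf stat"), not from the nominal frequency in the model name.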


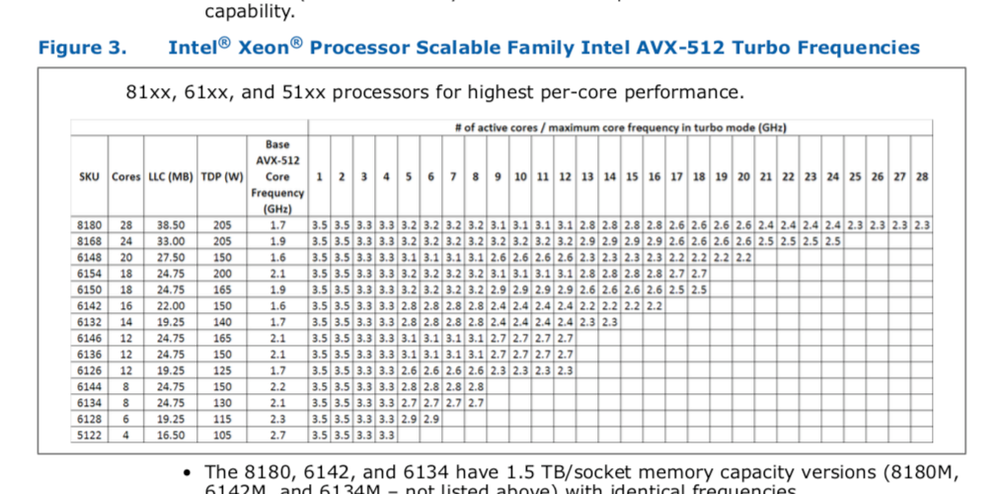

How should I understand the "Base AVX-512 Core Frequency (GHz)"? Does it affect the max GFLOPS?


Where can I find this document for the Platinum 8360Y? I need to know the frequency table. Thanks.


For whatever reason, Intel quit putting this information into the specification update document for Ice Lake Xeon, and instead produced a separate document for the Turbo frequencies. The version of the document that I have (dated 2021-04-22) is not marked "Confidential", but I have been unable to find it on the Intel web site (or anywhere else). There is no document number in the document or its metadata, which makes searching more challenging.

For the Xeon Platinum 8360Y, the values in this version of the table are included in the table below -- NOTE that this is from the document dated 2021-04-22 and may not reflect later updates to the document (if any).

Columns 1-36: Number of Active Cores

| Xeon Platinum 8360Y | Base Frequency (GHz) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 | 21 | 22 | 23 | 24 | 25 | 26 | 27 | 28 | 29 | 30 | 31 | 32 | 33 | 34 | 35 | 36 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Non-AVX | 2.4 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.5 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.3 | 3.3 | 3.1 | 3.1 | 3.1 | 3.1 | 3.1 | 3.1 |
| AVX 2.0 | 2.1 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.3 | 3.1 | 3.1 | 3.1 | 3.1 | 3.1 | 3.1 |
| AVX-512 | 1.8 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.4 | 3.0 | 3.0 | 3.0 | 3.0 | 3.0 | 3.0 | 2.8 | 2.8 | 2.8 | 2.8 | 2.7 | 2.7 | 2.6 | 2.6 | 2.6 | 2.6 | 2.6 | 2.6 |


Thanks a lot.

At the moment, on a cluster of Lenovo nodes with 2x Platinum 8360Y (72 cores, no hyper-threading) and 512 GB of RAM per node, single-node HPL drops the frequency to 1.8 or 1.89 GHz, starting from 3.1 GHz in turbo mode.

At the end of HPL, we get 3.9 TF per node.

Is it strange to get 1.8 GHz when I use 36 cores with AVX-512 (HPL with AVX-512)? According to the table you posted I should get 2.6 GHz (and therefore more TF). Is there any explanation, or a way to increase the performance?

Thanks,

Giuseppe.


The way to read these tables is:

(1) 1.8 GHz is the base ("guaranteed" minimum) frequency when running AVX512 code.

(2) If Turbo mode is enabled, the processor will run as fast as possible subject to limitations on package power and package temperature, but no higher than 2.6 GHz (for AVX-512 mode and all cores active).

Your observed values of 1.8 to 1.9 GHz are consistent with the specifications. With SKX and CLX processors, we typically see average frequencies when running HPL that are a bit higher (relative to the "base"), but part of that is due to our fairly aggressive cooling system.

The average frequency that will be sustained for a particular code will depend on the arithmetic intensity of the code, the temperature of the processor package, and the ratio of active power to leakage power for that particular piece of silicon.

In a cluster of 1736 2-socket Xeon Platinum 8160 nodes, we saw single-node HPL values that varied by node, with a range of about 13% between the fastest and slowest nodes.


One more comment....

At 1.8 GHz the peak performance of a 2s system is 1.8*72*32 = 4.1472 TF, increasing to 1.9*72*32 = 4.3776 TF at 1.9 GHz.

Your observed HPL performance of 3.9 TF/node is therefore in the range of 89% to 94% of peak. This is exactly the range that I expect for recent Intel architectures -- typically 92%.

The value will drop a bit if the average frequency is different in the two sockets (due to differences in the intrinsic leakage current of the processors or differences in the effectiveness of the cooling system).
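The peak and efficiency figures in this comment can be reproduced with a few lines. This is a sketch with made-up names; it assumes 32 DP FLOPs per cycle per core (two AVX512 FMA units) and the same average frequency on all 72 cores.

```python
def peak_tflops(ghz: float, cores: int, flops_per_cycle: int = 32) -> float:
    """Peak DP TFLOPS for a node: frequency (GHz) * cores * FLOPs/cycle / 1000."""
    return ghz * cores * flops_per_cycle / 1000.0


measured_tf = 3.9  # single-node HPL result reported above
for ghz in (1.8, 1.9):
    peak = peak_tflops(ghz, cores=72)
    print(f"{ghz} GHz: peak {peak:.4f} TF, HPL efficiency {measured_tf / peak:.1%}")
```

If the two sockets sustain different average frequencies, the node peak is better estimated by computing each socket separately (36 cores each) and adding the results.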
