Hi,

I have a question based on what is described in this thread: https://community.intel.com/t5/Intel-Moderncode-for-Parallel/How-does-WC-buffer-relate-to-LFB/m-p/1174771#M8085

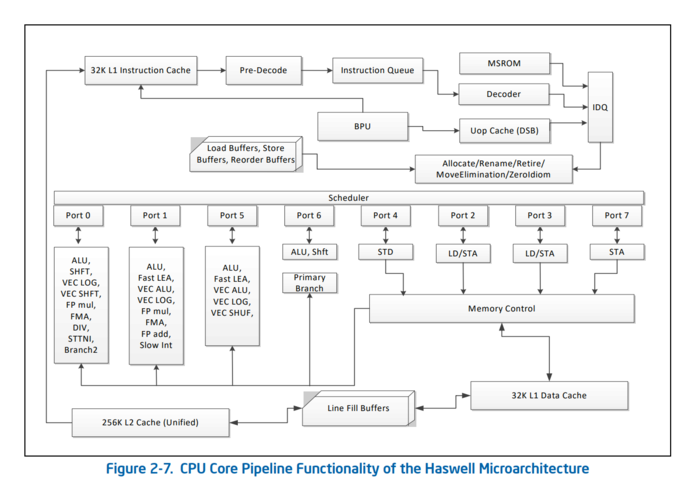

To fix ideas, consider the Haswell pipeline and a loop of 64 independent regular (non-NT) stores to WB memory, each targeting a different byte of the same 64-byte cache line.

My understanding of the store execution is as follows:

- Store instructions are decoded in order by the Front End

- Store uops enter the Reorder Buffer, and each is assigned a store buffer

Store buffers (aka write buffers) hold each store's data until the store uop retires and then accesses the L1D cache. If the store misses in L1D, an RFO request is issued to bring the requested cache line in with the right coherence state. In that case, as explained in the aforementioned thread, an LFB is allocated to track the outstanding RFO request.

Coming back to the loop of 64 independent regular stores: since they are independent of each other, they can be dispatched from the Scheduler (RS) to the STA ports as soon as those are available, and write their values into their associated store buffers (i.e. the stores execute).

Now, when the first store in the loop retires, the L1D cache does not hold the corresponding cache line, so it starts an RFO request to fetch the line from the memory hierarchy. The DRAM memory controller will eventually serve the request, say after about 200 clock cycles. Meanwhile, the other 63 stores in the loop retire.

My understanding is that the LFB tracking the first L1D store miss is actually "shared": when the LFB eventually receives the "current" copy of the cache line from memory, all 64 stores are "combined/merged" in the cacheline-sized LFB before the line is sent to the L1D cache.

If the above is correct, then the L1D cache write latency is hidden and the loop is actually optimized.

Does this make sense? Thank you.

The best overview I have seen is at https://stackoverflow.com/questions/54876208/size-of-store-buffers-on-intel-hardware-what-exactly-is-a-store-buffer and its linked references.

If each store requires its own store buffer, the processor will run out of store buffers before all 64 stores have been allocated (Haswell has 42 store buffers), causing a stall. Once the RFO completes and the L1D has write permission on the line, the store buffers can drain their payloads and free themselves for the remaining stores. If this is the case, executing 64 single-byte stores should be significantly slower than executing 32 two-byte stores (for which there are enough store buffers).
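The two cases being compared could be written as the store kernels below. This is only a minimal sketch: the function names are hypothetical, `volatile` is used on the assumption that it keeps the compiler from merging the narrow stores into wider ones, and the timing harness (evicting the line beforehand, reading a cycle counter) is deliberately left out.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum { CACHE_LINE_BYTES = 64 };

/* 64 single-byte stores, one to each byte of a cache line.
 * The caller is expected to pass a 64-byte-aligned buffer. */
static void store_line_1byte(volatile uint8_t *line, uint8_t value)
{
    assert(line != NULL);
    for (size_t i = 0; i < CACHE_LINE_BYTES; i++)
        line[i] = value;            /* one store uop per byte */
}

/* 32 two-byte stores covering the same 64 bytes. */
static void store_line_2byte(volatile uint16_t *line, uint16_t value)
{
    assert(line != NULL);
    for (size_t i = 0; i < CACHE_LINE_BYTES / sizeof(uint16_t); i++)
        line[i] = value;            /* one store uop per 16-bit word */
}
```

Timing each kernel on a line that is not L1-resident and again on an L1-resident line would separate the store-buffer effect from the plain issue-rate difference.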

Interpreting the results will require considering several interacting factors:

- 64 single-byte stores will require 64 cycles to issue, vs 32 cycles for the 32 two-byte stores.

- For L1-resident data, the cycle counts should track these values.

- For contiguous accesses, the number of store buffers interacts with the width of the data to determine how many cache lines have concurrent operations in progress.

- My first guess at the behavior as a function of store size is:

  - 1 byte : 64 stores per cache line : store-buffer limited : ~1 LFB in use at a time

  - 2 bytes : 32 stores per cache line : store-buffer limited : ~2 LFBs in use at a time (42/32 = 1.3125)

  - 4 bytes : 16 stores per cache line : store-buffer limited : ~3 LFBs in use at a time (42/16 = 2.625)

  - 8 bytes : 8 stores per cache line : store-buffer limited : 5-6 LFBs in use at a time (42/8 = 5.25)

  - 16 bytes : 4 stores per cache line : LFB-limited : 42/4 = 10.5 lines' worth of store buffers, more than the 10 LFBs available

  - 32 bytes : 2 stores per cache line : LFB-limited : 42/2 = 21 lines' worth of store buffers, more than the 10 LFBs available

- As mentioned in the Stack Overflow thread, I don't think we have a clear idea of how many cycles it will take to process the "backlog" of retired stores once the RFO completes.

- I have tested the throughput of partial-cacheline writes on Haswell (?), but only to confirm that writes into two different fields of the same cache line do not slow down the write rate of 1 per cycle.
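The first-guess arithmetic in the list above can be sketched in C. This is a rough model, not a description of the hardware: the function names are hypothetical, the 42 store buffers come from the figure quoted for Haswell, and the 10 line fill buffers per core is an assumption that is not stated in this thread.

```c
#include <assert.h>

enum {
    CACHE_LINE_BYTES  = 64,
    STORE_BUFFERS     = 42,  /* Haswell store-buffer entries, as quoted above */
    LINE_FILL_BUFFERS = 10   /* assumption: LFBs per core on Haswell */
};

/* Number of contiguous stores of the given width needed to cover one line. */
static unsigned stores_per_line(unsigned store_bytes)
{
    assert(store_bytes > 0 && store_bytes <= CACHE_LINE_BYTES);
    assert(CACHE_LINE_BYTES % store_bytes == 0);
    return CACHE_LINE_BYTES / store_bytes;
}

/* How many cache lines the full set of store buffers can span. */
static double lines_covered_by_store_buffers(unsigned store_bytes)
{
    return (double)STORE_BUFFERS / stores_per_line(store_bytes);
}

/* 1 if the store buffers span more lines than there are LFBs
 * (LFB-limited), 0 if the store buffers run out first. */
static int lfb_limited(unsigned store_bytes)
{
    return lines_covered_by_store_buffers(store_bytes) > LINE_FILL_BUFFERS;
}
```

Under these assumptions, `lfb_limited` flips from 0 to 1 between 8-byte and 16-byte stores, which matches the crossover in the list.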
