- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I would like to profile a particular function in a program, and especially on one specific core.

My problem is that the first time this function is executed, it lasts 70µs on one core that was busy just before, and more than 120 µs on other cores. This function is called almost at the same time on all cores

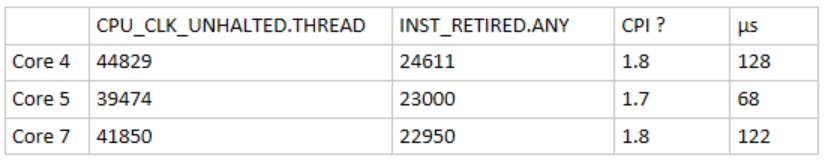

I have read these two counters before / after the function to calculate the Cycle Per Instruction like vTune, but is not very different.

I also read MEM_LOAD_UOPS_RETIRED.L3_MISS and MEM_LOAD_UOPS_RETIRED.L2_MISS, there aren't much either.

my questions are :

- Does vTune could be any help here ? This happening only once in the program execution so I guess not

- Why does the timing are so different when changing cores ? In that function, all is longer on the cores 4 & 7. For example a simple local array filling of 8 bits lasts 6µs, and on core 5 it is less than 0.5µs.

- And which performance counters could i check ?

Sorry if i was not clear i am not very familiar with processor low level architecture.

Thanks in advance for the help

Link Copied

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

How can 44829 core cycles be equivalent to 128 microseconds? This means that the average frequency is about 0.35GHz, which is very unlikely.

The difference between core 4 and core 5 is only about 5,000 cycles. This could be the amount of time it takes to handle one or two minor page faults. Also the number of L2 or L3 misses doesn't have to be much to explain the difference. For example, if core 4 incurs about 200-300 L3 misses more than core 5, then it's not unreasonable to be slower by several thousand cycles. Anyway, it's impossible to tell for sure without seeing the code and understanding exactly how the code is compiled and how performance counters are measured.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Measurement of performance on code segments that are this short can be challenging.

My notes at http://sites.utexas.edu/jdm4372/2018/07/23/comments-on-timing-short-code-sections-on-intel-processors/ are primary targeted at measurements for even shorter code segments, but the methodology and computation of derived metrics may be helpful.

At these scales (40,000 cycles), many different overheads can be significant:

- Reading performance counters can take several thousand cycles each if you are using PAPI or perf_events or the MSR interfaces.

- Scheduler interrupts can take between 5,000-10,000 cycles.

- Cores can be halted for voltage and/or frequency changes for periods of a few microseconds to tens of microseconds.

- System Management Interrupts can take away cores to service firmware requests.

All of these are difficult to predict and/or control, but most can at least be observed. It is usually good to start with the computed metrics in the post above -- particularly the core utilization, average frequency, and user/kernel cycle split. For Xeon Scalable Processors, I often have to add counters for the "power licence levels" to see if changes in SIMD width are causing frequency & voltage changes (each of which "halts" the core(s) for the transition).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

thanks for your answers !

I used rdpmc instruction (so very few overhead) to read performance counters before and after the function. The time spent in that function is calculated with rdtsc. I guess sudden change in frequency is not taken into account. But in our real time sensitive case, having cores at different speed would not be great.

There is no scheduler interrupts on these cores, only core 0 can be interrupt.

All of this is highly reproductible. Another thing : If the function is called and all the code is executed before (like 100ms before) the "real" call, then time spent is significantly lower for the real call.

I used the fixed-function to check whether it differs from the two registers I used, but i have inconsistent results (same duration as above) :

core 5 : 54939 Instructions / 8658 Cycles

core 4 : 32822 Inst. / 48808 Cycles

core 7 : 37449 Inst. / 47329 Cycles

I will check your blog post, thanks

Simon

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

For code sections executed a single time, Instruction Cache misses serviced by memory can cause significant delays.... If the code has run on another core associated with the same (inclusive) L3, then the overhead for the cache-to-cache transfers should be minimal. For Xeon Scalable Processors, the L3 is fully exclusive, so there will not be a duplicate of the instructions in the L3. It is possible that the cache lines containing the instructions could be provided by another L2, but I have not looked at the details of coherence for instructions on Intel processors.

Core C-states can also cause significant overhead for short functions. The details vary by processor family and model, and depend on how the software is configured, but in the default configuration of Xeon Scalable processors, the core drops to 1.0 GHz whenever it leaves the C0 (active) state. In order to "wake up" an idle core, the OS writes to the address used by the MONITOR/MWAIT instructions, waits for a C-state-dependent amount of time, then generates an InterProcessor Interrupt to start a process on the newly-awakened core. The core starts at 1.0 GHz with only the bottom 128-bits of the SSE/AVX/AVX2 SIMD pipelines active. Depending on what SIMD width you are using, there may be 1 or 2 processor stalls while the other SIMD lanes are activated. Then there is another stall for the core to be transitioned to full frequency. The entire process takes something like 100 microseconds, so short functions can be heavily impacted.

In some processors, the automatic frequency drop can be overridden with an MSR setting, but I have not found a way to do this with the Xeon Scalable Processors. Instead, the only way to ensure full frequency is to boot the operating system with "idle=poll", so that idle cores remain in a full-frequency spin wait instead of using C-states. Having all the cores "active" reduces the Turbo frequencies significantly, but can make the timings more predictable for short functions and for interrupt handlers.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Which counter could i check to check the Instruction Cache misses ? I use Broadwell Xeon that has 2GHz

For the C-state, is there a thresold to leave this state ? Because theses cores are busy for few micro seconds every 200 µs at least 500ms before the problem occurs.

I will try the idle=poll.

Anyway from your blog post what i can say is that when every thing is fine (like just after the long first call of the function and before for other functions), the number of unhalted cycles matches the tsc

For the problematic case above, there is a lot of unhalted cycles. Even on core 5 the tsc value is 6 times higher than the number of unhalted cpu cycle ! And every time the reference_cycle and actual_cycles are the same.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

According to Section 19.6 of Volume 3 of the Intel Architectures Software Developer's Manual, the Broadwell core can measure instruction cache misses using:

- ICACHE.MISSES -- Event 0x80, Umask 0x02 -- should count all L1 instruction cache misses

- L2_TRANS.CODE_RD -- Event 0xF0, Umask 0x04 -- L2 cache accesses when fetching instructions (may overcount if some accesses are rejected and retried)

C-states are controlled by the operating system. The infrastructure in Linux is fairly complex, with both scheduler history and pending high-resolution timer expirations used to determine which C-state to use for idle logical processors. On a Xeon E5-2680 v4 (Broadwell), the information about the each of the C-states is in /sys/devices/system/cpu*/cpuidle/state*/*. The name of each state and the wakeup latency in microseconds for this processor are

$ cat /sys/devices/system/cpu/cpu0/cpuidle/state*/name

POLL

C1-BDW

C1E-BDW

C3-BDW

C6-BDW$ cat /sys/devices/system/cpu/cpu0/cpuidle/state*/latency

0

2

10

40

133

The "POLL" state leaves the core in C0 (active), and just spins until work is available.

The C1 and C1E states leave much of the processor core powered up, so they have short wakeup times (2 microseconds and 10 microseconds). Both of these states turn off the core clocks, but leave the core power enabled. Both states leave the L1 & L2 caches operational. C1E drops the core's voltage (and cache frequency) to the "maximum efficiency frequency", which is probably 1.2 GHz on Broadwell processors.

The C3 and C6 states power down much of the processor core, so they have longer wakeup times.

You can limit C-states by opening /dev/cpu_dma_latency and writing a signed 32-bit integer to the file. The integer value is the longest allowable "wakeup" latency (in microseconds). The OS will honor that limit as long as the user process that wrote the signed 32-bit integer keeps the file descriptor open. As soon as the user process closes the file (or exits), the latency limit request is dropped. When we have needed to do this, we have typically picked a number that would allow the C1 states, but prohibit the higher-numbered states (e.g., 20 microseconds). I have not used this feature recently....

You don't need to mess with /dev/cpu_dma_latency if you boot with "idle=poll" -- this will prevent use of any state other than C0 (active).

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page