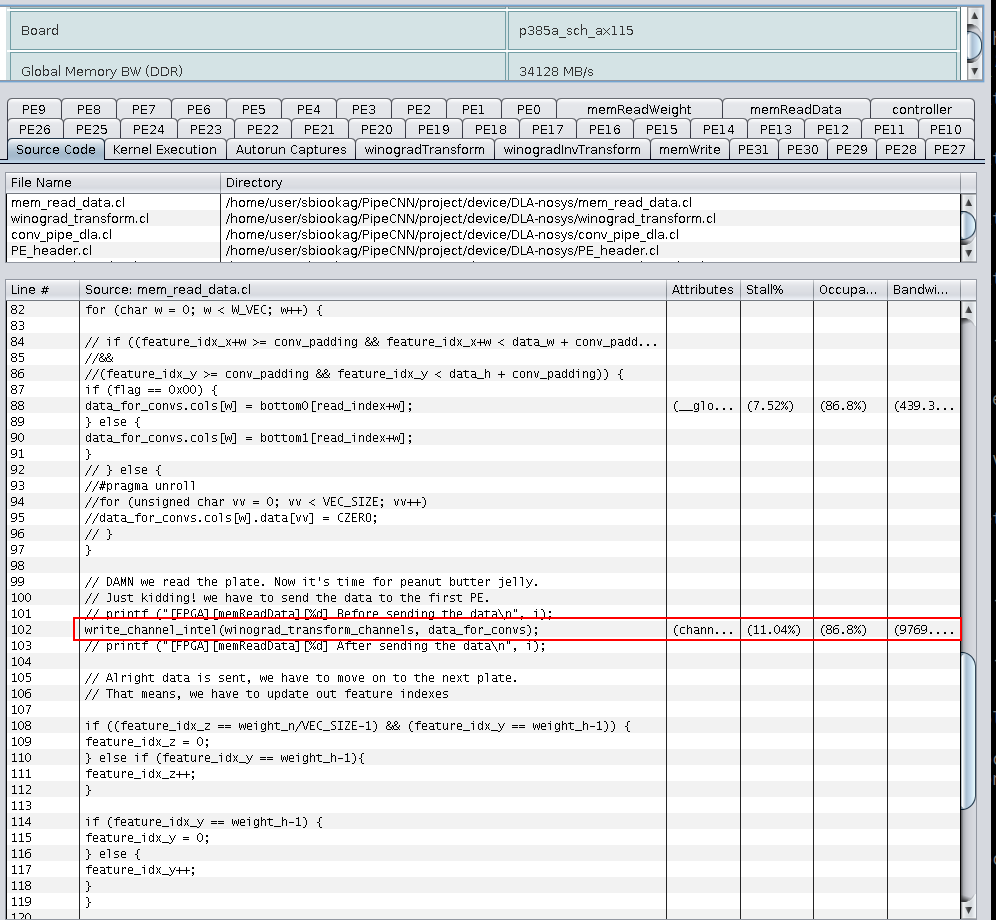

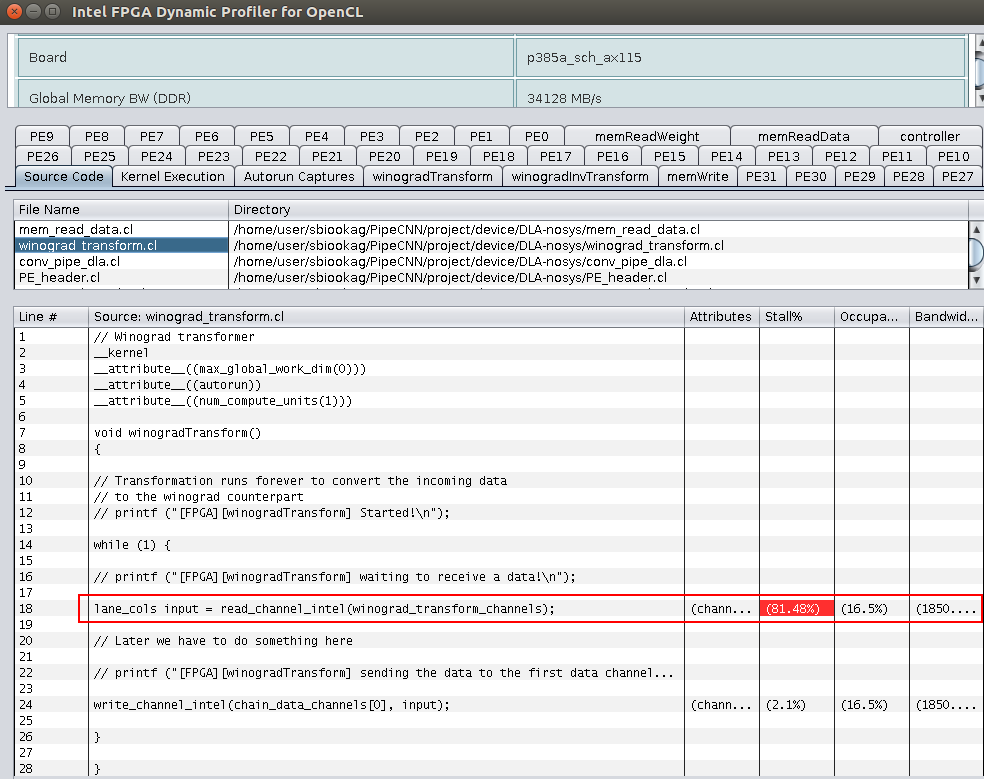

I have a design in which one kernel sends data through a channel to another, autorun kernel. When I profile the kernels, I see mismatched bandwidth results on the two sides of the channel. Here are screenshots of the bandwidths:

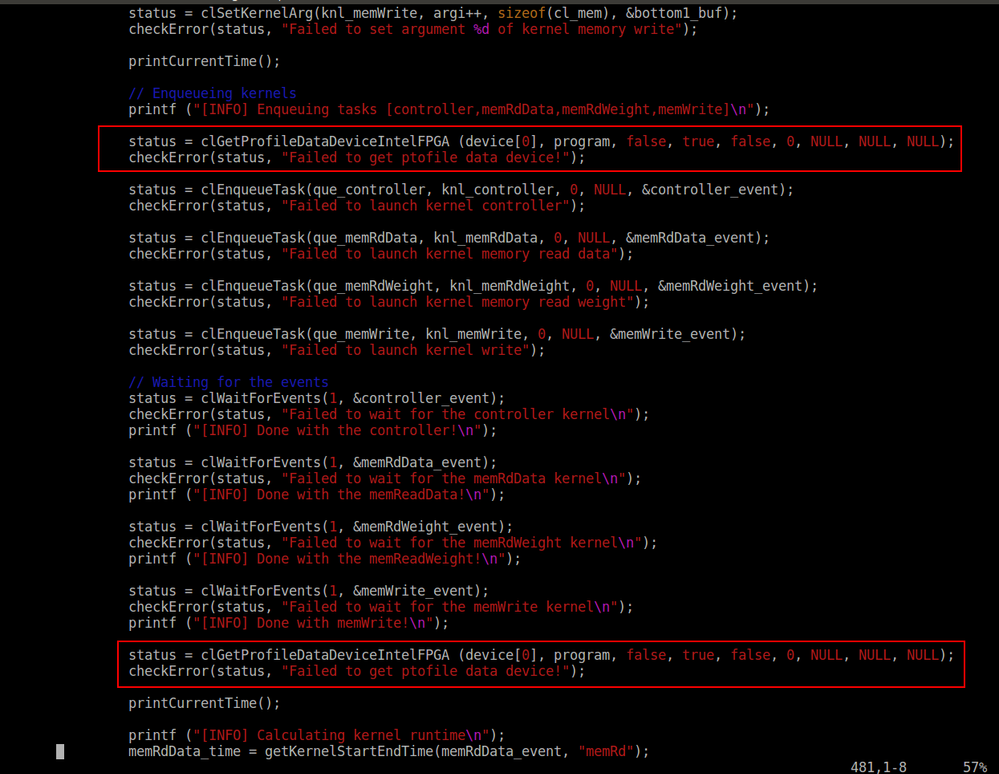

As you can see, "mem_read_data.cl" sends data to "winograd_transform_channels" at 9769 MB/s. On the other hand, "winograd_transform.cl" (autorun) receives the data at 1850 MB/s, and the stall percentage on this side seems to be 81%. I cannot understand how this is possible. For more information, this is how I reset the autorun profile counters on the host side:

I assume that I'm resetting the counters in the right spots.

Any idea what is going on here?

Thanks

- Tags:

- OpenCL™

Have you checked further down the pipeline? Especially the memory write at the very end? The stalls could be propagating from there all the way up the pipeline. Also, are the IIs (initiation intervals) of all your loops the same?

P.S. Fantastic comments in your code. :D

I have checked the other PEs and also the final stage, which receives the final output and writes it back to memory. They suffer from the same stall.

I'm kinda afraid that I'm not capturing the PEs' counter values properly.

BTW, about the comments, that's how a software engineer survives FPGA programming :D

Then it sounds like the stalls are propagating from the bottom of the pipeline. I am afraid I have never profiled autorun kernels, so I cannot comment on whether you are capturing the counters correctly. However, I find it very unlikely for regular compute PEs or on-chip channels to become a performance bottleneck. As a test, you can remove all your PEs from the kernel and keep just the memory read and write kernels, connected directly to each other through a channel. If you get similar stalling on this simplified design, the problem is coming from memory. Note that if you are exhausting the external memory bandwidth, seeing such stalls on the channels is completely normal.
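To illustrate how a slow stage at the bottom of a pipeline can show up as stalls upstream, here is a minimal toy model in Python. It is only a sketch under stated assumptions: a producer that tries to write one item per cycle, a bounded FIFO standing in for the channel, and a sink that accepts one item every `sink_interval` cycles (a stand-in for a loop with a higher II or a saturated memory write). The function name, parameters, and numbers are hypothetical; this does not model the actual hardware or how the profiler computes its figures.

```python
from collections import deque


def simulate(cycles, depth, sink_interval):
    """Toy cycle-level model: producer -> bounded FIFO -> sink.

    The producer tries to write one item per cycle. The sink can accept
    one item every `sink_interval` cycles. Returns a tuple of
    (items_written, items_read, producer_stall_fraction).
    """
    fifo = deque()
    written = read = stalled = 0
    for cycle in range(cycles):
        # Sink side: drain one item on the cycles where the sink is ready.
        if cycle % sink_interval == 0 and fifo:
            fifo.popleft()
            read += 1
        # Producer side: write if there is room, otherwise count a stall.
        if len(fifo) < depth:
            fifo.append(cycle)
            written += 1
        else:
            stalled += 1
    return written, read, stalled / cycles


# Sink five times slower than the producer, FIFO depth of 8 (all hypothetical).
print(simulate(1000, 8, 5))  # (207, 199, 0.793)
# Sink as fast as the producer: no stalls on the producer side.
print(simulate(1000, 8, 1))  # (1000, 999, 0.0)
```

In the first run the producer is stalled for roughly 79% of the cycles even though neither the producer nor the FIFO is the slow part. Once the FIFO fills, the producer can only move data as fast as the sink drains it, which is why simplifying the design down to the read and write kernels can help locate where the backpressure originates.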

Alright,

Thanks much. Will try the new approach and see how things will change. Will update you here :)
