Authors: Raghu Yeluri, Sr. Principal Engineer and Lead Security Architect in the Office of the CTO/Security Architecture and Technology Group, and Haidong Xia, Principal Engineer, Cloud Software Security Engineer Manager at Intel Corporation.

Application containers are a very popular software packaging and delivery model for microservices and applications in the cloud and at the edge, and they facilitate seamless migration of workloads between on-prem and cloud environments. At the same time, confidential computing continues to gain momentum as an effective way to protect data and code in use by executing them in Trusted Execution Environments (TEEs). Combining the two approaches, with encrypted containers and zero-trust, independently verified attestation of their trustworthiness, enables powerful new end-to-end (E2E) protections for containers across all data protection modalities (at rest, in transit and in execution). For enterprises, this makes it possible to scale and move containers securely across environments and cloud providers.

In this blog, we’ll look at what confidential containers are, how container confidential computing works, and how Intel’s Project Amber provides independent verification of their trustworthiness. We’ll then highlight two critical use cases the industry is focusing on as the community builds out the enabling technologies for broader deployment of confidential containers.

Understanding Container Confidential Computing

Confidential computing is an emerging paradigm that seeks to enhance data security by performing execution in a safe, isolated enclave. Encrypted data and code are loaded into the trusted execution environment, then decrypted for in-memory processing. The aim is to protect data and code against unauthorized access, modification, theft and tampering by malicious actors. Financial services, government, retail, healthcare, cloud service providers (CSPs) and many others, especially in regulated industries, are piloting and adopting this new approach.

Container confidential computing applies confidential computing concepts, and many of the same technologies and architectural patterns, to containers, a fast-growing environment for cross-platform development and deployment. An open-source community project called Confidential Containers is working to enable cloud-native confidential computing. These are some of the project’s key goals:

- Allow cloud-native application owners to enforce application security requirements

- Provide transparent deployment of unmodified containers

- Support multiple TEE and hardware platforms

- Supply a trust model which separates Cloud Service Providers (CSPs) from guest applications

- Apply least-privilege principles to Kubernetes cluster administration capabilities that could affect confidential computing for guest applications or data inside the TEE.

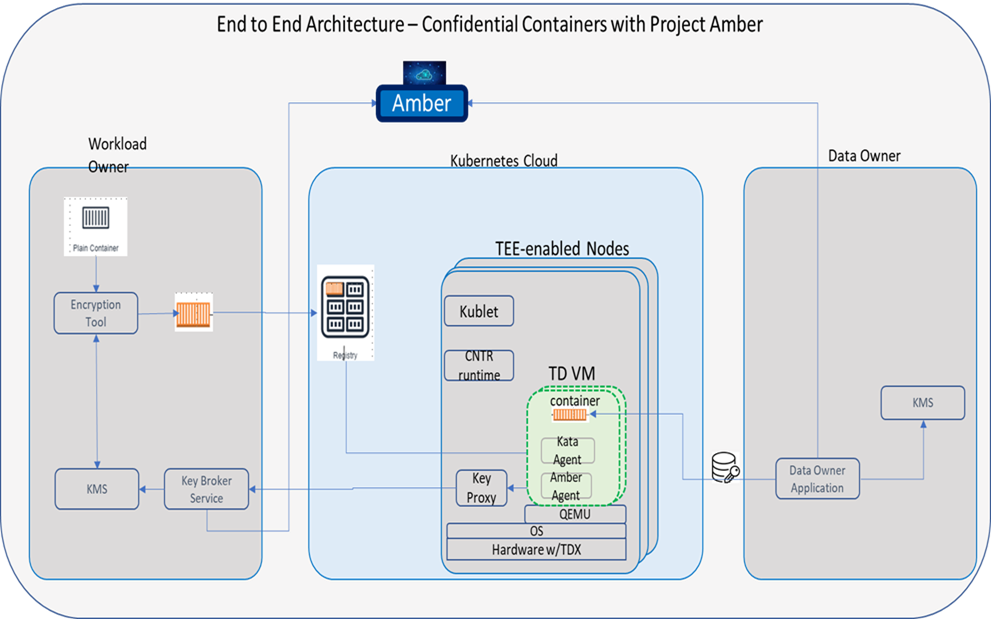

To these ends, the Confidential Containers project is defining many of the elements required to seamlessly run OCI-compliant containerized applications inside trusted execution environments. These elements target a critical use case: deploying encrypted container images in TEEs. Figure 1 provides a high-level architectural view of the components required for seamless instantiation.

For containers at rest, encrypted images reside in public or private registries such as Docker Hub. For containers in transit, images remain encrypted as they are pushed to and pulled from registries. At runtime, containers are encrypted in memory and isolated by TEEs. Confidential containers are instantiated as Kata Containers, which are essentially lightweight VMs running on the KVM hypervisor. To isolate these VMs at runtime, the Kata architecture is extended so that containers can run inside a trusted execution environment such as Intel TDX.

A crucial element is attestation, the mechanism that verifies the trustworthiness of the TEE and the code running inside it. This verification is the ground truth that determines how sensitive information and key material are provided to the container so it can do useful work. In this case, attestation lets the workload owner verify that the Kata Containers guest is running in a genuine TEE. The attestation agent (the Amber agent in Figure 1) obtains a decryption key from the Key Broker Service only after its quote has been attested in background-check mode, verifying that it is safe to release the secret and supporting auditing.
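To make the background-check flow more concrete, here is a minimal Python sketch under heavily simplified assumptions. Every name in it (`PLATFORM_KEY`, `Quote`, `generate_quote`, `Verifier`, `KeyBroker`) is hypothetical and is not part of Project Amber, Kata Containers or any Key Broker Service API. A shared HMAC key stands in for the hardware-rooted signature on a real Intel TDX quote, and a set of reference measurements stands in for the policy a real verifier would evaluate. In the IETF RATS terminology, this arrangement, where the relying party (the key broker) forwards the attester’s evidence to the verifier, is the background-check model.

```python
from __future__ import annotations

import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Optional

# HYPOTHETICAL: a shared HMAC key simulates the hardware-rooted key that signs a
# real TDX quote. Real quotes are signed by the platform, not with a shared secret.
PLATFORM_KEY = secrets.token_bytes(32)


@dataclass(frozen=True)
class Quote:
    measurement: bytes  # simulated hash of the guest's initial contents
    nonce: bytes        # freshness challenge issued by the key broker
    signature: bytes    # simulated platform signature over measurement + nonce


def generate_quote(measurement: bytes, nonce: bytes) -> Quote:
    """Attester: the agent inside the TEE produces evidence bound to the nonce."""
    sig = hmac.new(PLATFORM_KEY, measurement + nonce, hashlib.sha256).digest()
    return Quote(measurement, nonce, sig)


class Verifier:
    """Independent verifier (the role Project Amber plays); toy appraisal logic."""

    def __init__(self, reference_measurements: set[bytes]) -> None:
        self.reference = reference_measurements

    def appraise(self, quote: Quote, expected_nonce: bytes) -> bool:
        expected_sig = hmac.new(
            PLATFORM_KEY, quote.measurement + quote.nonce, hashlib.sha256
        ).digest()
        return (
            hmac.compare_digest(expected_sig, quote.signature)    # evidence is authentic
            and hmac.compare_digest(quote.nonce, expected_nonce)  # evidence is fresh
            and quote.measurement in self.reference               # guest matches policy
        )


class KeyBroker:
    """Relying party: forwards evidence to the verifier, releases keys on success."""

    def __init__(self, verifier: Verifier, keys: dict[str, bytes]) -> None:
        self.verifier = verifier
        self.keys = keys
        self.pending: dict[str, bytes] = {}  # outstanding nonce per key ID

    def challenge(self, key_id: str) -> bytes:
        nonce = secrets.token_bytes(16)
        self.pending[key_id] = nonce
        return nonce

    def release(self, key_id: str, quote: Quote) -> Optional[bytes]:
        nonce = self.pending.pop(key_id, None)  # each nonce can be used only once
        if nonce is None or not self.verifier.appraise(quote, nonce):
            return None
        return self.keys.get(key_id)


if __name__ == "__main__":
    good = hashlib.sha256(b"trusted-kata-guest").digest()
    kbs = KeyBroker(Verifier({good}), {"image-key": secrets.token_bytes(32)})

    nonce = kbs.challenge("image-key")
    print(kbs.release("image-key", generate_quote(good, nonce)) is not None)  # True

    nonce = kbs.challenge("image-key")
    bad = hashlib.sha256(b"tampered-guest").digest()
    print(kbs.release("image-key", generate_quote(bad, nonce)) is not None)  # False
```

Binding each quote to a broker-issued, single-use nonce is what keeps an old quote from being replayed later to obtain the key.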

Why Project Amber Is Best for Container Security

Project Amber is an Intel code name for a project to deliver an independent, transparent and auditable service that verifies the trustworthiness of execution environments for confidential computing workloads. It’s a key step toward zero-trust, multi-cloud, multi-TEE attestation for public, private and hybrid clouds, and the edge.

The strength of Intel’s approach lies in its ability to offer neutral, third-party attestation and policy verification, combined with open-source Kata Containers that are extended to run inside trusted execution environments such as Intel TDX.

Decoupling attestation verification from infrastructure vendors allows assurance to be provided directly to the container owner or user. This is analogous to a certificate authority, which asserts an identity regardless of where the application runs.

Uniform, portable, independent attestation of container workloads offers numerous benefits to enterprises and industry vendors: it eases expansion across multi-cloud and hybrid cloud environments and vendors, and it enables verification of workload-specific policies for auditing and compliance.

Intel® Trust Domain Extensions (Intel® TDX)

Another key element in Intel’s confidential computing direction is Intel® Trust Domain Extensions (Intel® TDX). Intel TDX brings new architectural elements to help deploy hardware-isolated virtual machines (VMs) called trust domains (TDs). Intel TDX is designed to isolate VMs from the virtual-machine manager (VMM)/hypervisor and any other non-TD software on the platform, protecting TDs from a broad range of software-based threats. Check out Intel’s website for more information on Intel TDX.

Kata Containers

Kata Containers is an open-source community project that provides a container runtime built on lightweight virtual machines. These VMs feel and perform like containers but provide stronger workload isolation, using hardware virtualization technology as a second layer of defense. Kata Containers are designed to be as light and fast as containers, and they integrate with container management layers, including popular tools such as Docker and Kubernetes (k8s), while also delivering the security advantages of VMs.

The Confidential Containers project is an industry effort, with players like Intel, Apple, IBM and Alibaba contributing to and driving a set of primitives and components, and defining the architecture for end-to-end protection of containers.

Two Important Use Cases

The Confidential Containers project is heavily focused on end-to-end encryption of container images, that is, encryption at rest, in transit and during execution. To see how this works in an everyday application, let’s take a brief look at two common use cases, as illustrated in Figure 1.

Launch Time Verification of Attestation

A fully encrypted container image is deployed by the user into a Kubernetes environment in the cloud. A built-in workflow launches the container image inside an Intel TDX trusted execution environment. The container then negotiates over a secure channel with a back-end key broker service to fetch a decryption key. If Project Amber verifies that the environment is a genuine Intel TDX TEE, the broker releases the key directly into the TEE. The container image is then decrypted at runtime and executes just like any other application.
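The launch-time sequence can be sketched as follows, again as a hypothetical Python model rather than real Kata Containers or Confidential Containers code. `fetch_key` stands in for the attested key-broker exchange and is assumed to return `None` when verification fails. `toy_keystream_xor` is a deliberately insecure stand-in for the vetted cipher a real encrypted image would use; it exists only so the example runs with the standard library, and the plaintext digest check is an illustrative integrity step, not a description of the OCI format.

```python
from __future__ import annotations

import hashlib
import secrets
from typing import Callable, Optional


def toy_keystream_xor(key: bytes, data: bytes) -> bytes:
    """TOY cipher (SHA-256 in counter mode, XORed with the data). NOT secure.
    XOR is its own inverse, so the same call encrypts and decrypts."""
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


def encrypt_layer(key: bytes, layer: bytes) -> tuple[bytes, str]:
    """Owner side: encrypt a layer and record its plaintext digest for later checks."""
    return toy_keystream_xor(key, layer), hashlib.sha256(layer).hexdigest()


def launch(
    encrypted_layer: bytes,
    expected_digest: str,
    fetch_key: Callable[[], Optional[bytes]],
) -> bytes:
    """TEE side: obtain the key via attestation, decrypt, and verify before running."""
    key = fetch_key()  # hypothetical: returns None if the verifier rejects the TEE
    if key is None:
        raise PermissionError("key broker refused: TEE not verified")
    layer = toy_keystream_xor(key, encrypted_layer)
    if hashlib.sha256(layer).hexdigest() != expected_digest:
        raise ValueError("decrypted layer does not match the recorded digest")
    return layer  # a real runtime would now unpack the layer and start the workload


if __name__ == "__main__":
    key = secrets.token_bytes(32)
    blob, digest = encrypt_layer(key, b"#!/bin/sh\necho hello from the TEE\n")
    print(launch(blob, digest, lambda: key).decode(), end="")
    try:
        launch(blob, digest, lambda: None)
    except PermissionError as err:
        print("refused:", err)
```

The key point the sketch captures is ordering: nothing is decrypted until the attested key release succeeds, so an unverified environment never sees plaintext.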

Runtime Verification of Attestation

Now let’s say the container is running. In the launch-time use case, the broker service releases the decryption key on behalf of the “owner” who encrypted and deployed the image. In this case, however, the user is not the image owner but the data owner, who wants to run an encrypted AI model inside the container. How does the data owner provide the decryption key, and how can it trust the confidential computing environment?

Following zero-trust principles, the data owner interfaces with Project Amber to verify that the container running the AI model is indeed in a trusted environment. If so, the key is released directly into the container, so the data set can be decrypted there. The attestation verification is similar, but it is requested by a completely different party. In both cases, the attestation is appropriate for the use case and the requestor’s role.
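The idea that one attestation result can be appraised differently by different requestors can be sketched as two owner policies evaluated over the same set of verifier claims. The claim names (`tee_type`, `measurement`, `debug_enabled`, `workload_id`), the `OwnerPolicy` class and the `release_key` helper are hypothetical illustrations, not the Project Amber token format or API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AttestationResult:
    """HYPOTHETICAL claims a verifier might return after appraising a TEE quote."""
    tee_type: str
    measurement: str
    debug_enabled: bool
    workload_id: str


@dataclass(frozen=True)
class OwnerPolicy:
    """Conditions one key owner (image owner or data owner) places on key release."""
    allowed_tee_types: frozenset[str]
    allowed_measurements: frozenset[str]
    allowed_workloads: Optional[frozenset[str]] = None  # None means any workload
    allow_debug: bool = False

    def permits(self, result: AttestationResult) -> bool:
        return (
            result.tee_type in self.allowed_tee_types
            and result.measurement in self.allowed_measurements
            and (self.allow_debug or not result.debug_enabled)
            and (self.allowed_workloads is None
                 or result.workload_id in self.allowed_workloads)
        )


def release_key(policy: OwnerPolicy, result: AttestationResult,
                key: bytes) -> Optional[bytes]:
    """Release the owner's key only if that owner's own policy accepts the claims."""
    return key if policy.permits(result) else None


if __name__ == "__main__":
    result = AttestationResult("TDX", "abc123", False, "generic-web-app")

    # The image owner only requires a genuine, non-debug TD with a known measurement.
    image_owner = OwnerPolicy(frozenset({"TDX"}), frozenset({"abc123"}))
    # The data owner additionally insists on a specific model-serving workload.
    data_owner = OwnerPolicy(frozenset({"TDX"}), frozenset({"abc123"}),
                             allowed_workloads=frozenset({"ai-inference"}))

    print(release_key(image_owner, result, b"image-key") is not None)  # True
    print(release_key(data_owner, result, b"model-key") is not None)   # False
```

Keeping each policy with its key owner, rather than with the infrastructure provider, mirrors the zero-trust separation between CSPs and guest workloads described earlier.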

Complete Turnkey Protection for Containers

Extending confidential computing to containers promises to greatly reduce the threat landscape for containers and to enable a new and interesting set of use cases. The Confidential Containers project is making great strides in defining the primitives and the software components needed for end-to-end protection of containers. With Project Amber, Intel is pioneering the industry’s first turnkey solution for verifying the trustworthiness of TEEs using zero-trust policies and models.

Learn more

You can find more information about confidential computing at: https://www.intel.com/content/www/us/en/security/confidential-computing.html.

You can learn more about Confidential Containers at:

https://github.com/confidential-containers

You can learn more about Project Amber at:

https://www.intel.com/content/www/us/en/security/project-amber.html

Notices & Disclaimers:

Intel technologies may require enabled hardware, software or service activation.

No product or component can be absolutely secure.

Code names are used by Intel to identify products, technologies, or services that are in development and not publicly available. These are not "commercial" names and not intended to function as trademarks.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
