Julia* is a high-performance, dynamically typed programming language that excels at numerical and scientific computing. The Julia computing language was conceived in 2009 in a project born at MIT, open sourced, and announced to the world in 2012. It has become one of the most loved programming languages, adopted by many of the world's top scientific organizations including NASA*, Climate Modeling Alliance, and CERN.

The language is designed for speed, simplicity, and flexibility. Julia's type system, multiple dispatch mechanism, and sophisticated package management make it a powerful and extensible language that is easy to learn. Its comprehensive documentation and supportive community help developers get up to speed quickly and have fun while doing it!
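To make the multiple dispatch point concrete, here is a minimal sketch in plain Julia (standard language features only, no packages). The `Shape`, `Circle`, and `Rectangle` types and the `area` and `describe` functions are hypothetical names invented for this illustration; they do not come from any library.

```julia
# Hypothetical types, defined here purely for illustration.
abstract type Shape end

struct Circle <: Shape
    radius::Float64
end

struct Rectangle <: Shape
    width::Float64
    height::Float64
end

# One generic function, one method per concrete type.
area(c::Circle) = pi * c.radius^2
area(r::Rectangle) = r.width * r.height

# Multiple dispatch: the method is chosen from the types of *all* arguments.
describe(a::Circle, b::Circle) = "two circles"
describe(a::Shape, b::Shape) = "two shapes"

shapes = Shape[Circle(1.0), Rectangle(2.0, 3.0)]
total_area = sum(area, shapes)   # calls the matching `area` method per element
```

Calling `describe(Circle(1.0), Rectangle(1.0, 1.0))` falls back to the more general `Shape, Shape` method, while two circles select the more specific one. New types and methods can be added later without touching the existing definitions, which is one reason the language is often described as extensible.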

Julia is also the “Ju” in Jupyter (as in Jupyter Notebooks), a name that refers to Julia, Python, and R.

The creators of Julia partnered with others to found JuliaHub* (formerly Julia Computing) as a secure, software-as-a-service platform for developing, deploying, and scaling Julia programs to thousands of nodes. Consider it a cloud platform for high-performance, distributed computing that makes supercomputing more accessible to data scientists and engineers.

As Viral Shah, one of the co-inventors of Julia and Co-founder and CEO of JuliaHub, noted at Intel Innovation, Julia “started out with this promise of … the ease of Python but the speed of C. Can you have your cake and eat it too? It turns out it is possible.”

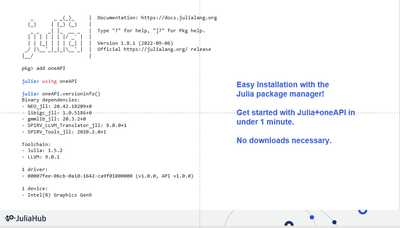

Shah continued: “The language evolved with support for [hardware] accelerators. Like, that was not part of the original language, but we were able to refactor the compiler to the point now that all our GPU backends, whether it's oneAPI, whether it's CUDA, whether it's ROCm, they're all external to the language, so we refactored the compiler APIs to make that possible.”

In November 2020, JuliaLang.org announced the first version of oneAPI.jl, a Julia package for programming accelerators with oneAPI, the open, standards-based, multiarchitecture programming model that gives developers the freedom to choose the best hardware (CPUs, GPUs, FPGAs, and other accelerators) for accelerated computing. The later oneAPI.jl 1.0 release “adds integration with the oneAPI Math Kernel Library (oneMKL) to accelerate linear algebra operations on Intel GPUs. It also brings support for Julia 1.9 and Intel Arc GPUs.”

Recently, Intel and JuliaHub conducted a workshop to educate developers on Julia for computational thinking and scientific computing, delivered by Alan Edelman, one of the co-creators of Julia, Co-founder and Chief Scientist at JuliaHub, and professor of applied mathematics at Massachusetts Institute of Technology (MIT). Check out The Scientific Computing Power of Julia Revealed (intel.com) to learn about real-world applications, including:

- Applying data analysis and computational and mathematical modeling effectively

- Using the Intel® oneAPI Math Kernel Library (oneMKL) in Julia to achieve accelerated performance for vector, vector-matrix, and matrix-vector operations

- Discovering programming advantages of Julia when used with oneAPI
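As a rough illustration of the vector, vector-matrix, and matrix-vector operations mentioned above, the sketch below uses only Julia's standard `LinearAlgebra` library and runs on the CPU. The function name `demo_ops` is hypothetical. The function is written against `AbstractMatrix` and `AbstractVector`, so in principle the same code could be called with GPU arrays such as oneAPI.jl's `oneArray`; whether each call is then routed to oneMKL depends on the installed oneAPI.jl version and the available Intel GPU, and is an assumption not verified here.

```julia
using LinearAlgebra

# Hypothetical helper: generic over array types, so it is not tied to CPU arrays.
function demo_ops(A::AbstractMatrix, x::AbstractVector, y::AbstractVector)
    s = dot(x, y)   # vector-vector: inner product
    r = x' * A      # vector-matrix: row vector times matrix
    c = A * x       # matrix-vector product
    return s, r, c
end

# Small CPU example with single-precision data.
A = Float32[1 2; 3 4]
x = Float32[1, 1]
y = Float32[2, 3]

s, r, c = demo_ops(A, x, y)
```

Because dispatch selects the implementation from the argument types, the calling code would not need to change when a different array type is passed in; only the construction of `A`, `x`, and `y` would differ.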
