Published July 22nd, 2021

Sairam Sundaresan is a deep learning researcher at Intel Labs, where he focuses on developing artificial intelligence (AI) solutions and exploring efficient AI algorithms for various modalities such as vision and language.

Highlights:

- Transformers and self-attention, in general, have shown great promise in replacing convolutional neural networks for a variety of tasks.

- Intel Labs has created a novel framework for producing a class of parameter- and compute-efficient models called AttentionLite, which leverages recent advances in self-attention as a substitute for convolutions. AttentionLite can simultaneously distill knowledge from a compute-heavy teacher while also pruning the student model in a single pass of training, resulting in considerably reduced training and fine-tuning times. In addition, AttentionLite models can achieve up to 30x parameter efficiency and 2x computation efficiency with no significant accuracy drop compared to their teacher.

Convolutional neural networks (CNNs) have been the backbone for several computer vision tasks, including image recognition, object detection, and image segmentation. Despite their strengths, convolutional networks suffer from a few limitations. First, they are content-agnostic, meaning that the same weights are applied at all locations of an input feature map. Second, both parameter count and floating-point operations (FLOPs) scale poorly as the receptive field grows, and a large receptive field is essential for capturing long-range interactions between pixels. To reduce these costs, prior work has turned to knowledge distillation and pruning.

At the Intel AI Lab, we wondered if we could take advantage of self-attention mechanisms, which recent work has used to completely replace convolutions in vision models. Why? Because they typically consume fewer parameters and FLOPs, scale much better with larger receptive fields, are highly parallelizable, and can be accelerated on suitable hardware. To build a class of compact models for vision tasks, we explored the possibility of combining self-attention with distillation and pruning.
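To make the scaling argument concrete, here is a rough back-of-the-envelope sketch of how weight counts grow with window size. It is an illustration rather than the exact accounting used for AttentionLite: the function names are hypothetical, biases are ignored, and the attention layer is assumed to consist of three 1x1 projections (query, key, value) plus row and column relative-position embeddings of half the output channels each.

```python
def conv_params(k, c_in, c_out):
    """Weights in a k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def local_attention_params(k, c_in, c_out):
    """Weights in a local self-attention layer over a k x k window.

    Assumption: three 1x1 projections (query, key, value) plus one row and
    one column relative-position embedding table, each with c_out // 2
    channels per offset.
    """
    projections = 3 * c_in * c_out
    relative_positions = 2 * k * (c_out // 2)
    return projections + relative_positions


# Compare the two layer types as the window (receptive field) grows.
for k in (3, 5, 7):
    print(k, conv_params(k, 64, 64), local_attention_params(k, 64, 64))
```

Under these assumptions the convolution's weight count grows quadratically with the window size, while the attention layer's projections stay fixed and only the small positional term grows linearly.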

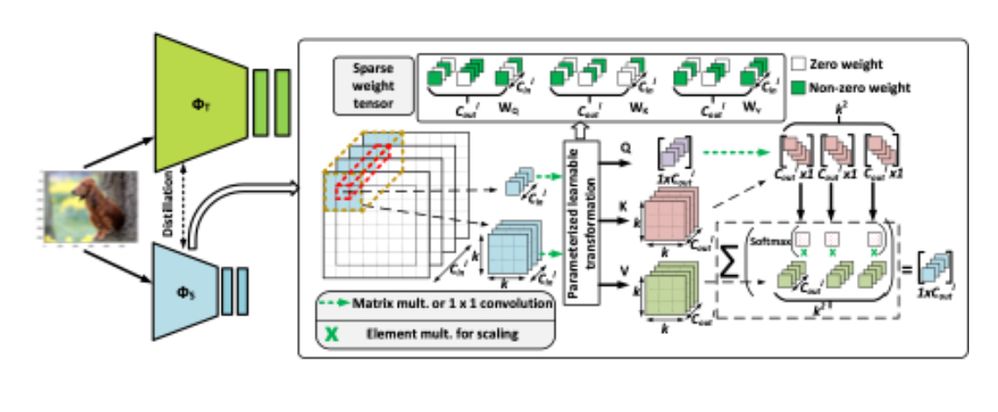

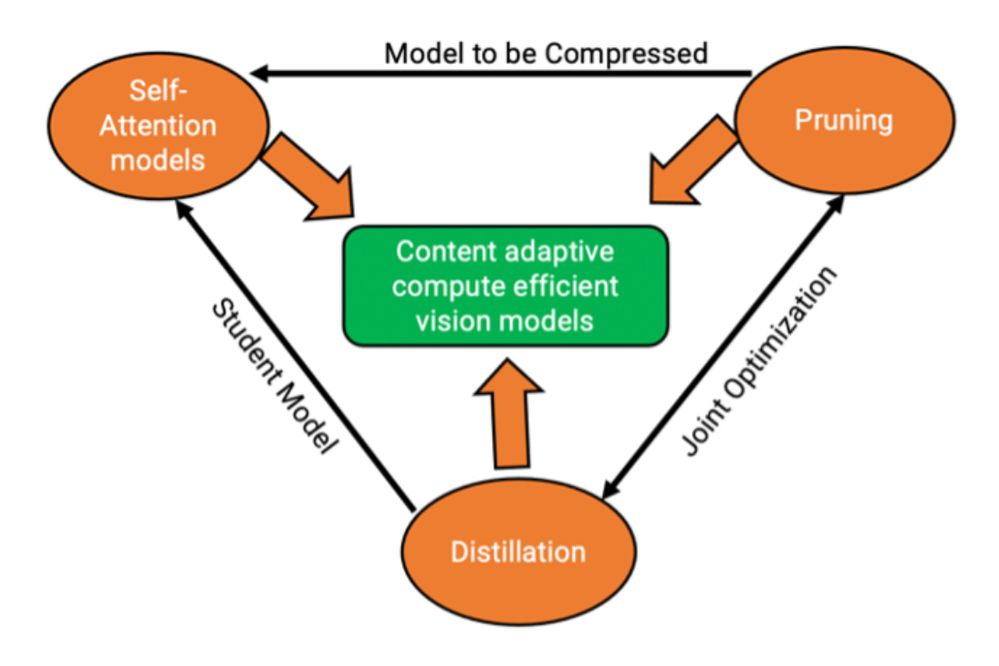

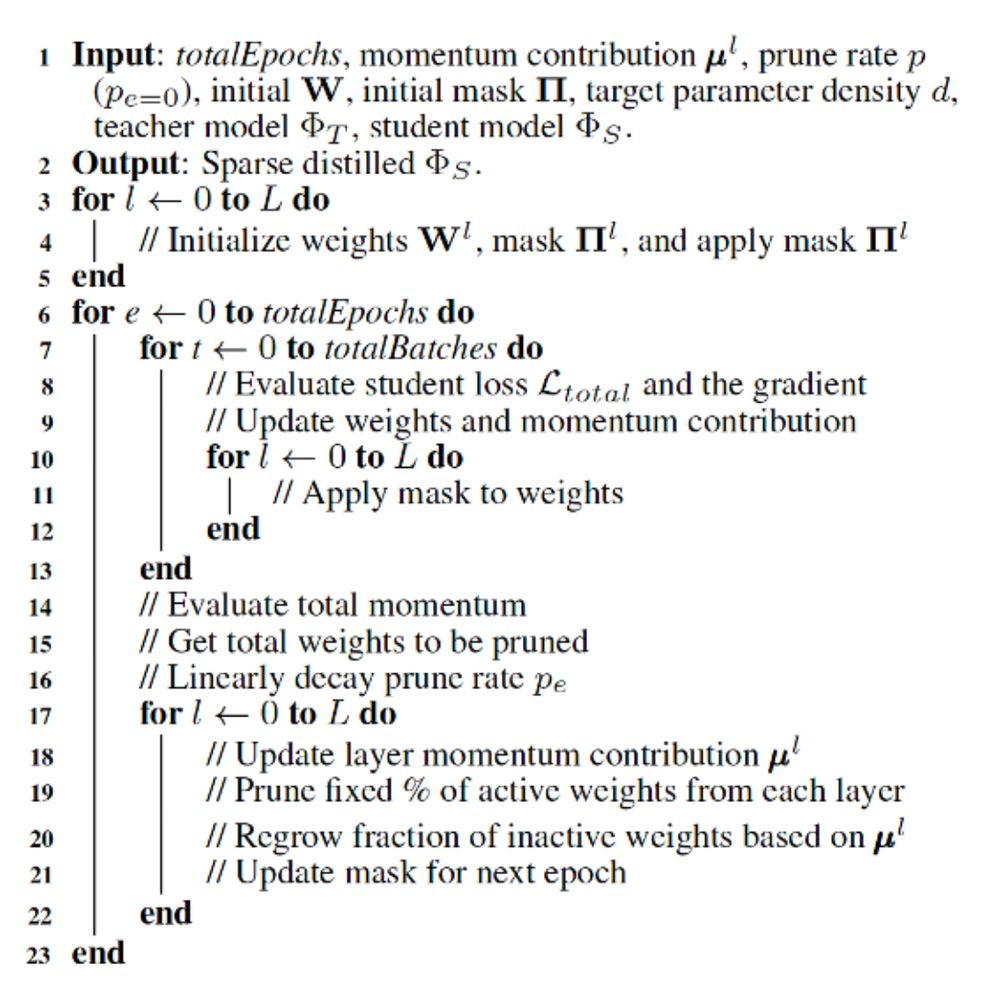

Figure 1

This led to our joint model optimization framework called sparse distillation, which combines the benefits of pruning, distillation, and self-attention mechanisms. Using a compute-heavy CNN with rich positional information as a teacher, we distill knowledge into a self-attention-based student model. Simultaneously, we apply sparse learning to the student to yield a pruned self-attention model, as detailed in Figure 1 above.

Additionally, our framework supports structured column pruning to yield models that run inference faster and use fewer parameters. Experiments on multiple datasets show that our framework can perform remarkably well compared to unpruned baselines and convolutional counterparts while requiring a fraction of the parameters and FLOPs.

Figure 2

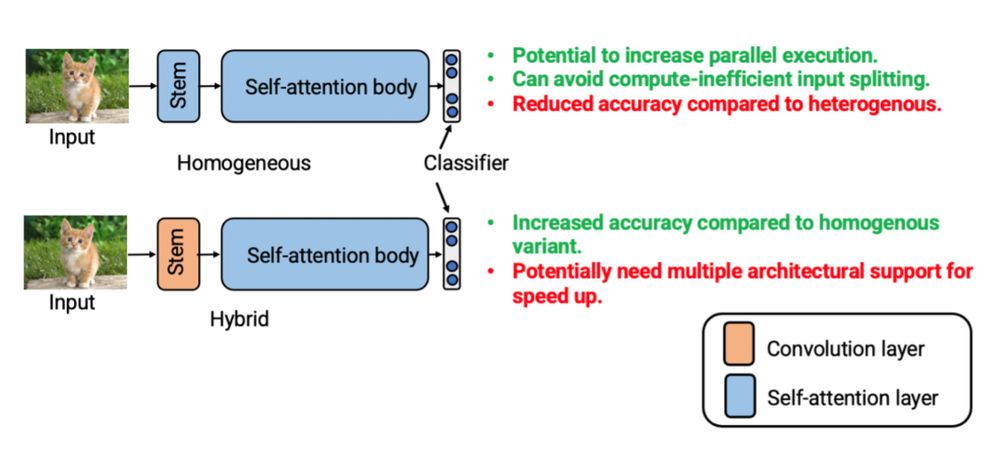

We began by replacing the basic convolution operator with the dot-product self-attention mechanism as used in Ramachandran et al. For the distillation process, our student models come in two flavors, namely the homogeneous and hybrid variants. The key difference between the two variants is that while the former employs self-attention throughout the architecture, the latter uses convolution only in the stem, as shown below in Figure 3.

Figure 3

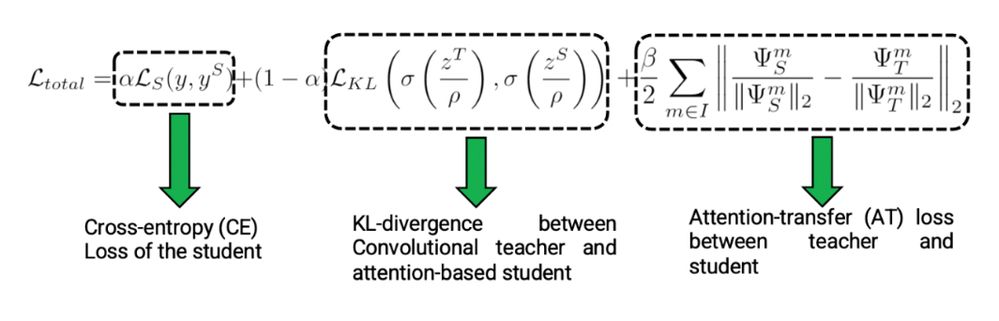
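As a minimal sketch of the dot-product self-attention operator that stands in for convolution, the snippet below computes the output for one pixel over a k x k neighborhood. It is a simplification under stated assumptions: a single head, no relative-position terms, plain Python lists instead of tensors, and hypothetical names (`local_self_attention`, `w_q`, `w_k`, `w_v`) that do not come from the AttentionLite code.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def local_self_attention(fmap, w_q, w_k, w_v, i, j, k=3):
    """Attention output for pixel (i, j) over its k x k neighborhood.

    fmap is a height x width x channels nested list. Positional terms are
    omitted here for brevity (an assumption of this sketch).
    """
    height, width = len(fmap), len(fmap[0])
    radius = k // 2
    query = matvec(w_q, fmap[i][j])
    logits, values = [], []
    # Visit every in-bounds neighbor inside the window.
    for a in range(max(0, i - radius), min(height, i + radius + 1)):
        for b in range(max(0, j - radius), min(width, j + radius + 1)):
            key = matvec(w_k, fmap[a][b])
            logits.append(sum(q * c for q, c in zip(query, key)))
            values.append(matvec(w_v, fmap[a][b]))
    # Numerically stable softmax over the neighborhood.
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    weights = [e / total for e in exps]
    channels = len(values[0])
    return [sum(wt * v[c] for wt, v in zip(weights, values))
            for c in range(channels)]


identity = [[1.0, 0.0], [0.0, 1.0]]
fmap = [[[1.0, 2.0] for _ in range(3)] for _ in range(3)]
out = local_self_attention(fmap, identity, identity, identity, 1, 1)
```

Unlike a convolution, the mixing weights here are computed from the content of the window, and the learned matrices do not grow with `k`.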

ResNet-50 was used as the teacher model for all the experiments. As part of our optimization process, the loss function we use (shown in Equation 1) combines multiple factors, including classification loss (task loss), distillation loss, and attention transfer loss. These factors help the model learn both from examples and from the teacher.

Equation 1
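One way such a combined objective could look in code is sketched below. This is not a reproduction of Equation 1: the exact form of each term and the weights are assumptions. Here the task loss is cross-entropy, the distillation loss is a temperature-scaled KL divergence between teacher and student predictions, and the attention transfer loss is the distance between normalized spatial attention maps. The names `alpha`, `beta`, and `temperature` are hypothetical hyperparameters.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax with an optional temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in scaled))
    return [z - log_sum for z in scaled]


def task_loss(student_logits, label):
    """Cross-entropy of the student prediction against the true label."""
    return -log_softmax(student_logits)[label]


def distillation_loss(student_logits, teacher_logits, temperature):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    log_t = log_softmax(teacher_logits, temperature)
    log_s = log_softmax(student_logits, temperature)
    kl = sum(math.exp(lt) * (lt - ls) for lt, ls in zip(log_t, log_s))
    return temperature ** 2 * kl


def attention_map(features):
    """Collapse channels into an L2-normalized spatial energy map.

    features is a list of channels, each a flat list of activations.
    """
    positions = len(features[0])
    energy = [sum(ch[p] ** 2 for ch in features) for p in range(positions)]
    norm = math.sqrt(sum(e * e for e in energy)) or 1.0
    return [e / norm for e in energy]


def attention_transfer_loss(student_feats, teacher_feats):
    """L2 distance between student and teacher attention maps."""
    a_s = attention_map(student_feats)
    a_t = attention_map(teacher_feats)
    return math.sqrt(sum((s - t) ** 2 for s, t in zip(a_s, a_t)))


def total_loss(student_logits, teacher_logits, label,
               student_feats, teacher_feats,
               alpha=1.0, beta=1.0, temperature=4.0):
    """Weighted sum of the three terms (weights are illustrative)."""
    return (task_loss(student_logits, label)
            + alpha * distillation_loss(student_logits, teacher_logits,
                                        temperature)
            + beta * attention_transfer_loss(student_feats, teacher_feats))
```

Because the attention map sums over channels, the student and teacher feature maps only need to share spatial size, not channel count, which is convenient when the teacher is a CNN and the student is attention-based.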

To prune the student model while simultaneously distilling knowledge from the teacher, we first update the total trainable parameters for a given layer and then use a mask to forcibly set a fraction of these parameters to zero. Inspired by the idea of sparse learning, we evaluate a layer’s importance by computing the normalized momentum contributed by its non-zero weights during an epoch. This lets us decide which layers should have more non-zero weights under the given parameter budget, and the pruning mask is updated accordingly (for both structured and column pruning). Details of the sparse distillation training are presented below in Figure 5:

Figure 5

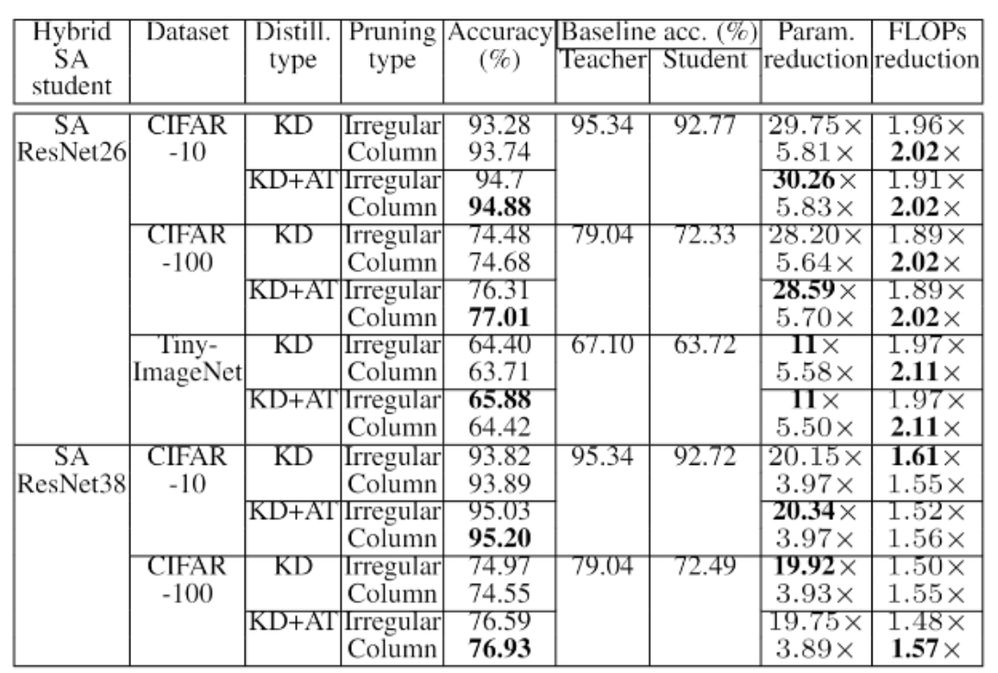

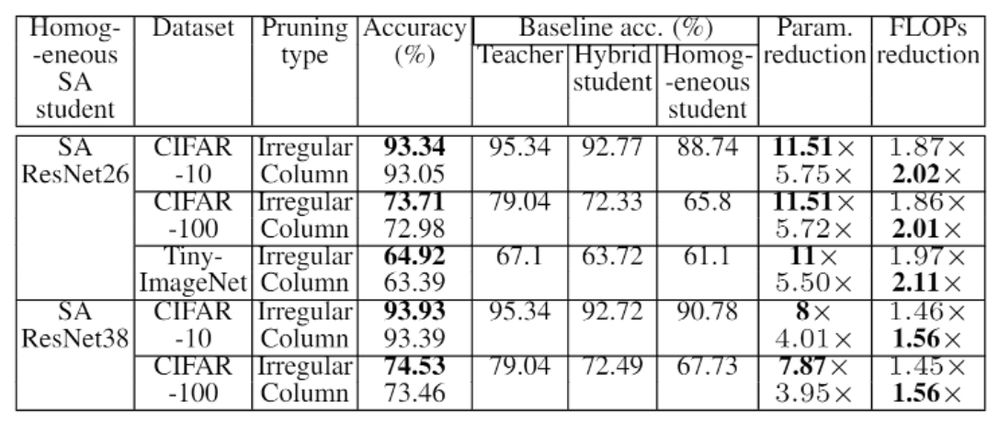

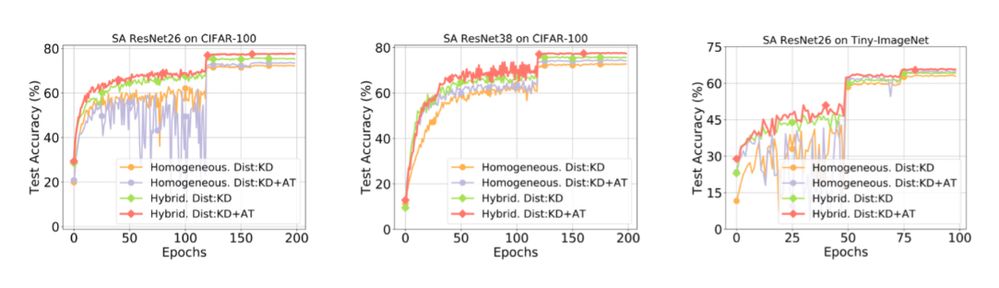

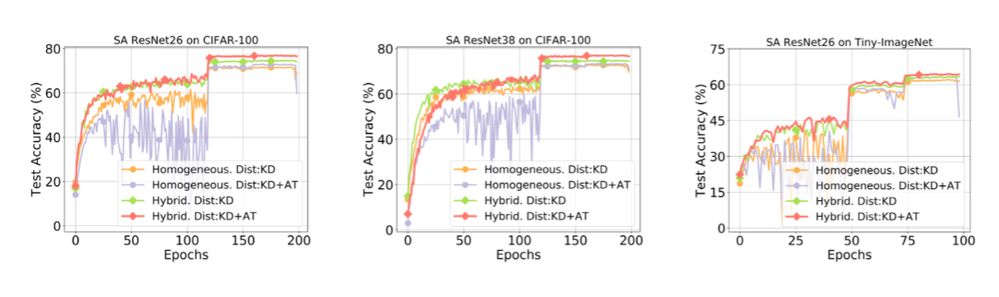
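The mask update described for sparse distillation can be sketched roughly as follows. This is a simplified illustration under assumptions, not the training procedure itself: each layer is a flat list of weights, layer importance is the mean absolute momentum of its unmasked weights normalized across layers, the budget is split in proportion to importance, and the surviving weights in each layer are chosen by magnitude. All function names are hypothetical, and structured column pruning is not shown.

```python
def layer_importance(momenta, masks):
    """Mean |momentum| over each layer's non-zero weights, normalized."""
    raw = []
    for momentum, mask in zip(momenta, masks):
        active = [abs(m) for m, keep in zip(momentum, mask) if keep]
        raw.append(sum(active) / len(active) if active else 0.0)
    total = sum(raw)
    if total == 0:
        return [1.0 / len(raw)] * len(raw)
    return [r / total for r in raw]


def allocate_budget(importance, layer_sizes, budget):
    """Non-zero weights per layer, proportional to importance."""
    return [min(size, int(round(share * budget)))
            for share, size in zip(importance, layer_sizes)]


def magnitude_mask(weights, keep):
    """Binary mask that keeps the `keep` largest-magnitude weights."""
    order = sorted(range(len(weights)),
                   key=lambda idx: abs(weights[idx]), reverse=True)
    kept = set(order[:keep])
    return [1 if idx in kept else 0 for idx in range(len(weights))]


def update_masks(weights, momenta, masks, budget):
    """Recompute masks under the budget and zero out pruned weights."""
    importance = layer_importance(momenta, masks)
    sizes = [len(w) for w in weights]
    keep_counts = allocate_budget(importance, sizes, budget)
    new_masks = [magnitude_mask(w, keep)
                 for w, keep in zip(weights, keep_counts)]
    pruned = [[wi * mi for wi, mi in zip(w, m)]
              for w, m in zip(weights, new_masks)]
    return pruned, new_masks


weights = [[0.5, -0.1, 0.3, 0.2], [0.05, -0.4, 0.1, 0.0]]
momenta = [[0.75] * 4, [0.25] * 4]
masks = [[1] * 4, [1] * 4]
pruned, new_masks = update_masks(weights, momenta, masks, budget=4)
```

In this toy run the first layer carries three times the momentum of the second, so it receives three of the four non-zero weights allowed by the budget.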

When we evaluated our idea on three widely used datasets, namely CIFAR-10, CIFAR-100, and Tiny-ImageNet, we obtained very promising results. Across the board, AttentionLite models used far fewer parameters than their baselines or convolutional counterparts while also surpassing their accuracy (see Tables 1 and 2). What’s also encouraging is that training converges without any hitches despite the model being pruned and distilled simultaneously (see Figures 6 and 7).

Table 1

Table 2

Figure 6

Figure 7

Our experiments show that it is possible to produce models that perform remarkably well compared to complex, compute-heavy CNNs while consuming only a fraction of the parameters and FLOPs. Hybrid AttentionLite models offer better accuracy, while homogeneous variants are better suited to parallel execution because they use the same set of operations throughout the network. Our framework is complementary to existing schemes for producing efficient models and can work well with convolution-based students.

There are several potential directions we're excited to explore further. While we have shown the efficacy of our approach on image classification, other tasks such as object detection, image segmentation, and captioning could also benefit from it. Given the recent research in using Transformer-based architectures for vision tasks, applying sparse distillation to a Transformer model to produce an efficient yet accurate network is an exciting direction. Benchmarking the parallelism-friendly AttentionLite models on custom hardware and quantifying the gains could inspire the industry to move towards optimized hardware support for attention mechanisms.

Sairam brings technical expertise and innovation to solve challenging problems in Computer Vision and Machine Learning. He has 11 years of work experience in a wide variety of topics in Computer Vision, Image Processing, and Machine Learning, including object tracking, real-time 3D reconstruction, gesture recognition, low-level computer vision functionalities, mixed-precision deep network training, and efficient deep learning algorithms for custom accelerators. He also has a proven track record of taking ideas from R&D prototypes to product, successfully delivering on multiple projects. Additionally, Sairam holds multiple patents and has authored peer-reviewed publications.
