TensorFlow* is a widely used open-source AI and machine learning platform that continues to support production AI development and deployment in a fast-moving field. Such cutting-edge work often involves complicated deep neural networks and large data sets, however, which can strain computational resources. The Intel® Optimization for TensorFlow*, a product of an ongoing collaboration between Intel® and Google, is one solution to that problem. TensorFlow 1.0 was released in 2017, and later that same year Intel introduced its integration of the Intel® oneAPI Deep Neural Network Library (oneDNN) into TensorFlow.

Fast-forward to 2022, and the Intel® Extension for TensorFlow*, which is open source on GitHub, emerged from the co-architecting of a pluggable device mechanism for AI development with TensorFlow*. This mechanism allows existing TensorFlow programs to run on a new device without the user changing any code. Instead, a user only needs to install the extension to a specified directory; TensorFlow then discovers it through the device mechanism and exposes the functionality the extension provides. Intel® Advanced Matrix Extensions (Intel® AMX) was also released in 2022. This built-in AI accelerator on 4th Gen Intel® Xeon® Scalable processors significantly speeds up AI workflows, including those built on TensorFlow.
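To make the "no code changes" point concrete, here is a minimal sketch of how a script might check whether the plug-in registered a device. It assumes the extension exposes its devices under the device type name "XPU"; `list_xpu_devices` is a hypothetical helper name, and the function simply returns an empty list when TensorFlow is not installed.

```python
import importlib.util


def list_xpu_devices():
    """Return the XPU devices TensorFlow can see, or [] if TensorFlow is absent."""
    if importlib.util.find_spec("tensorflow") is None:
        return []  # TensorFlow is not installed in this environment
    import tensorflow as tf

    # Plug-in devices are registered when TensorFlow is imported,
    # so the model code itself does not need to change.
    # Assumption: the extension registers its devices as type "XPU".
    return list(tf.config.list_physical_devices("XPU"))


if __name__ == "__main__":
    print(list_xpu_devices())
```

An empty result means either that the extension is not installed or that no supported device was found, so existing programs simply keep running on the default device.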

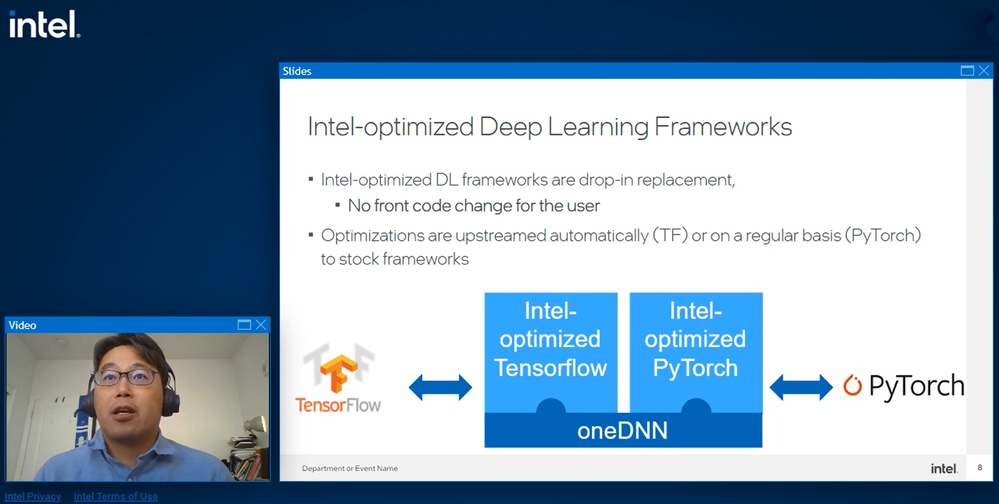

The Intel® Extension for TensorFlow* provides optimizations that benefit developers on both the GPU and CPU sides, using an Intel® XPU engine implementation strategy to identify the best compute architecture for the application's needs. (Note that CPU optimizations are in the experimental phase and will release with product quality in Q2’23.) Eventually, the Intel® Extension for TensorFlow* will take over as Intel's main optimization product for TensorFlow, and the other major product, the Intel® Optimization for TensorFlow*, will be phased out. That handover will happen once the CPU optimizations have stabilized in a future release. The extension will then be positioned as the only Intel TensorFlow product that provides aggressive performance optimizations for customers with especially sensitive performance or benchmarking needs. Intel will also continue to upstream CPU optimizations to the official TensorFlow codebase as improvements are made. Intel optimizations are likewise upstreamed into many other AI frameworks, including PyTorch, Scikit-learn, and XGBoost.

The Intel® Extension for TensorFlow* uses three main model optimization methods: operator optimizations, graph optimizations, and mixed precision (different data types) optimizations. For operator optimizations, the public API for extended XPU operators is provided by the itex.ops namespace. These extended operators are designed to perform better than their default TensorFlow counterparts. Refer to the extension's documentation for specific examples of the itex.ops operator optimizations.
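As a rough illustration of what opting into an extended operator could look like, the sketch below prefers `itex.ops.gelu` when the extension is importable and otherwise falls back to a plain-Python reference. The helper names `pick_gelu` and `reference_gelu` are hypothetical, and the availability and signature of `itex.ops.gelu` should be checked against the extension's documentation for your version.

```python
import importlib.util
import math


def reference_gelu(x: float) -> float:
    """Exact (erf-based) GELU on a Python float, used only as a stand-in."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def pick_gelu():
    """Prefer the extension's operator when it is installed, else the stand-in."""
    if importlib.util.find_spec("intel_extension_for_tensorflow") is not None:
        import intel_extension_for_tensorflow as itex

        # Assumption: itex.ops exposes a gelu operator that works on tensors.
        return itex.ops.gelu
    return reference_gelu  # works on plain floats only


if __name__ == "__main__":
    print(pick_gelu().__name__)
    print(reference_gelu(1.0))
```

Note that the two callables are not interchangeable: the extension operator works on tensors, while the fallback only exists to keep the sketch runnable without the extension.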

With graph optimizations, the goal is to fuse specified operator patterns into a single new operator to achieve better performance. This fusion can be done via basic fusion, whereby the input and output are expected to be the same data type, or via mixed data type fusion, whereby operators with different data types can still be fused by removing the data type conversions at the graph level. Mixed data type fusion is not supported by stock TensorFlow and is thus an advantage provided only through the Intel® Extension for TensorFlow*.
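The difference between the two fusion modes can be sketched with a toy graph rewriter. This is not the extension's implementation: the `Op` class, the pattern table, and the fused operator names are all hypothetical, and the "graph" is just a linear list of operators.

```python
from dataclasses import dataclass


@dataclass
class Op:
    kind: str
    dtype: str = "float32"


# Hypothetical fusion patterns, for illustration only.
PATTERNS = {
    ("MatMul", "BiasAdd", "Relu"): "FusedMatMulBiasRelu",
    ("Conv2D", "BiasAdd"): "FusedConv2DBias",
}


def fuse(ops, allow_mixed_dtypes=False):
    """Replace matching operator runs with a single fused operator.

    Basic fusion requires every operator in the run to share one dtype.
    Mixed data type fusion first removes Cast nodes, then fuses regardless
    of the dtypes involved.
    """
    if allow_mixed_dtypes:
        ops = [op for op in ops if op.kind != "Cast"]
    ordered = sorted(PATTERNS, key=len, reverse=True)  # try longest first
    fused, i = [], 0
    while i < len(ops):
        for pattern in ordered:
            window = ops[i:i + len(pattern)]
            kinds = tuple(op.kind for op in window)
            same_dtype = len({op.dtype for op in window}) == 1
            if kinds == pattern and (same_dtype or allow_mixed_dtypes):
                # The fused operator produces the last operator's dtype.
                fused.append(Op(PATTERNS[pattern], window[-1].dtype))
                i += len(pattern)
                break
        else:
            fused.append(ops[i])  # no pattern starts here; keep the op
            i += 1
    return fused


if __name__ == "__main__":
    graph = [Op("MatMul", "bfloat16"), Op("Cast", "float32"),
             Op("BiasAdd", "float32"), Op("Relu", "float32")]
    print([op.kind for op in fuse(graph)])
    print([op.kind for op in fuse(graph, allow_mixed_dtypes=True)])
```

In this toy, the Cast node blocks basic fusion, while the mixed data type mode drops it and collapses the chain into one operator.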

The third optimization type, Auto Mixed Precision (AMP), improves upon standard mixed precision by using lower-precision data types (such as FP16 or BF16) to make models run faster and use less memory during training and inference. AMP divides operators into three categories. First, the lower_precision_fp operators are compute-bound operators that gain the most performance from running in bfloat16 (a floating-point format that, as the name implies, occupies 16 bits of computer memory). Second, Fallthrough operators can run in both float32 and bfloat16 but see a smaller performance boost from bfloat16 than the first category. Lastly, FP32 consists of operators not yet enabled with bfloat16 support; currently, their inputs are cast to float32 prior to execution. The Intel® Extension for TensorFlow* takes AMP one step further with Advanced AMP, which features greater performance gains (on Intel® CPU and GPU) than stock TensorFlow* AMP. It provides more aggressive sub-graph fusion, such as LayerNorm and InstanceNorm fusion, as well as mixed precision in fused operations.
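The three categories can be pictured as a simple rule for choosing the data type each operator runs in. The sketch below is a toy model, not the extension's logic: the category membership is illustrative only, and `plan_dtypes` and `to_bfloat16` are hypothetical helpers. The second helper shows what keeping only 16 bits of a float32 value does to its precision (NaN handling is ignored).

```python
import struct

# Illustrative membership only; the real lists live in the extension.
LOWER_PRECISION_FP = {"MatMul", "Conv2D"}
FALLTHROUGH = {"Relu", "Reshape"}
# Every other operator is treated as FP32-only in this sketch.


def plan_dtypes(op_kinds, input_dtype="float32"):
    """Assign a dtype to each operator in a linear chain."""
    plan, current = [], input_dtype
    for kind in op_kinds:
        if kind in LOWER_PRECISION_FP:
            current = "bfloat16"  # compute-bound: run in bfloat16
        elif kind not in FALLTHROUGH:
            current = "float32"   # FP32-only: inputs are cast to float32
        # Fallthrough operators keep whatever dtype reaches them.
        plan.append((kind, current))
    return plan


def to_bfloat16(x: float) -> float:
    """Round a value to bfloat16 precision (round to nearest, ties to even)."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits = (bits + 0x7FFF + ((bits >> 16) & 1)) & 0xFFFF0000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


if __name__ == "__main__":
    print(plan_dtypes(["MatMul", "Relu", "Softmax", "Relu"]))
    print(to_bfloat16(3.14159))
```

The same Relu operator ends up in bfloat16 after MatMul and in float32 after the FP32-only operator, which is the sense in which it "falls through".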

GPU profiling is also critical for identifying performance issues. The Intel® Extension for TensorFlow* therefore supports the TensorFlow* Profiler, which can be enabled by exporting three environment variables. See the Intel® Extension for TensorFlow* documentation for further details on this feature.
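As a sketch of how those variables might be set from Python before TensorFlow is imported: the three names below are recalled from the extension's GPU profiler documentation and should be treated as assumptions to verify against the version you use. `enable_gpu_profiler` is a hypothetical helper.

```python
import os

# Assumed variable names; confirm them in the extension's profiler docs.
PROFILER_ENV = {
    "ZE_ENABLE_TRACING_LAYER": "1",
    "UseCyclesPerSecondTimer": "1",
    "ENABLE_TF_PROFILER": "1",
}


def enable_gpu_profiler(env=os.environ):
    """Set the profiler variables unless the user has already set them."""
    for name, value in PROFILER_ENV.items():
        env.setdefault(name, value)
    return {name: env[name] for name in PROFILER_ENV}


if __name__ == "__main__":
    # Call this before importing TensorFlow so the settings take effect.
    print(enable_gpu_profiler())
```

Using `setdefault` keeps any value the user exported in the shell, so the helper never silently overrides an existing setting.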

Because AI modeling is often computationally expensive and resource heavy, any improvement that mitigates these costs is valuable. The goal of the Intel® Extension for TensorFlow* is therefore to tackle both the CPU and GPU sides of the resource “coin” and deliver the aggressive gains developers are looking for. AI innovation requires continuous effort and support from developers on all fronts, and the Intel® Extension for TensorFlow* is shaping up to be a robust new extension of the powerful open-source software library that is TensorFlow*.

See the webinar replay: Get Better TensorFlow* Performance on CPUs and GPUs

About our experts

Louie Tsai

AI Software Solutions Engineer, Intel

Louie is responsible for driving customer engagements with and adoption of Intel® Performance Libraries, leveraging the synergies between Intel® Optimization for TensorFlow* and the Intel® oneAPI AI Analytics Toolkit. Louie holds a master’s degree in Computer Science and Information Engineering from National Chiao Tung University.

Aaryan Kothapalli

AI Software Solutions Engineer, Intel

Aaryan enables customers in building and optimizing their AI and analytics workflows using optimizations from Intel® architectures. He holds a bachelor’s degree in Computer Science Engineering from the University of Texas at Dallas and a master’s degree in Computer Science with a specialization in Machine Learning and Data Mining from Texas A&M University.
