Cloud native deployment with Kubernetes orchestration has enabled the “Write Once, Deploy Anywhere” paradigm for applications. This application development and deployment model enables scale and agility in today’s hybrid and multi-cloud environments. Applications or services packaged as containers can be deployed and managed with the same Kubernetes-based ecosystem tools in the public cloud, on premises, or at edge locations. Microsoft Azure Arc-enabled Kubernetes (Reference 1) is one such ecosystem tool: it enables central management, from the Azure public cloud, of heterogeneous and geographically separate Kubernetes clusters deployed on premises or across different public clouds. Kubernetes offerings from different vendors are supported, and they need not be based on Azure Kubernetes Service (AKS) (Reference 2).

Intel OpenVINO™ is an open-source software toolkit for optimizing and deploying AI inference across a variety of Intel CPU and accelerator devices (Reference 3). The OpenVINO toolkit includes pre-trained and optimized models to enable inference applications. It also includes OpenVINO Model Server (OVMS) for serving high-performance machine learning models as a service (Reference 4). OVMS is based on the same architecture as TensorFlow Serving and exposes inference as a service over gRPC or REST APIs, making it easy to consume from applications that require AI model inference.

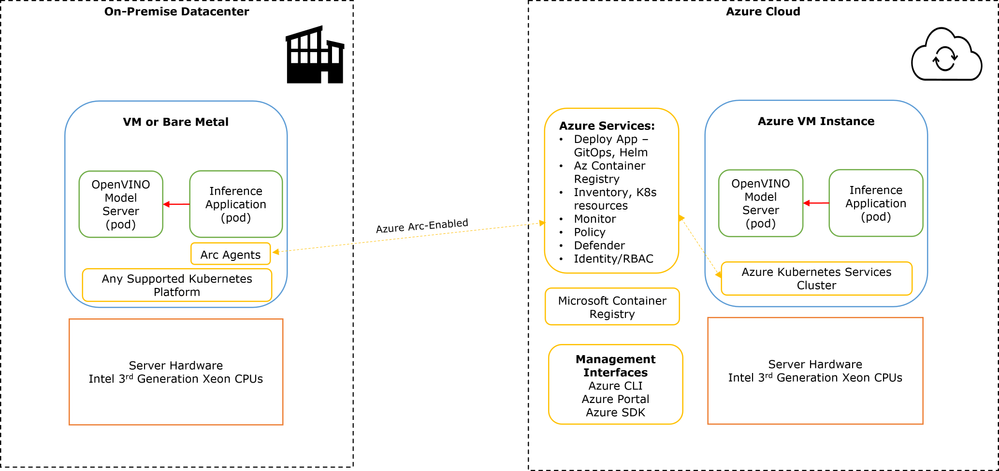

This article covers a proof of concept (PoC) that deploys OpenVINO Model Server and a demo inference application on both an Azure cloud and an on-premises Kubernetes cluster, using the same tools and application images. It also covers centrally monitoring the two deployments from the Azure public cloud. This capability gives enterprises the choice to deploy optimized Intel AI inferencing applications in the cloud, on premises, or both, based on their business needs and available capacity. The key benefit is deploying Intel OpenVINO software, which is highly optimized for Intel Xeon processors, at on-premises, cloud, or edge locations in a consistent manner. This benefit comes along with a “single pane” in Azure cloud for management and application deployment across dispersed Kubernetes clusters, as shown in the Solution Overview, Figure 1 below.

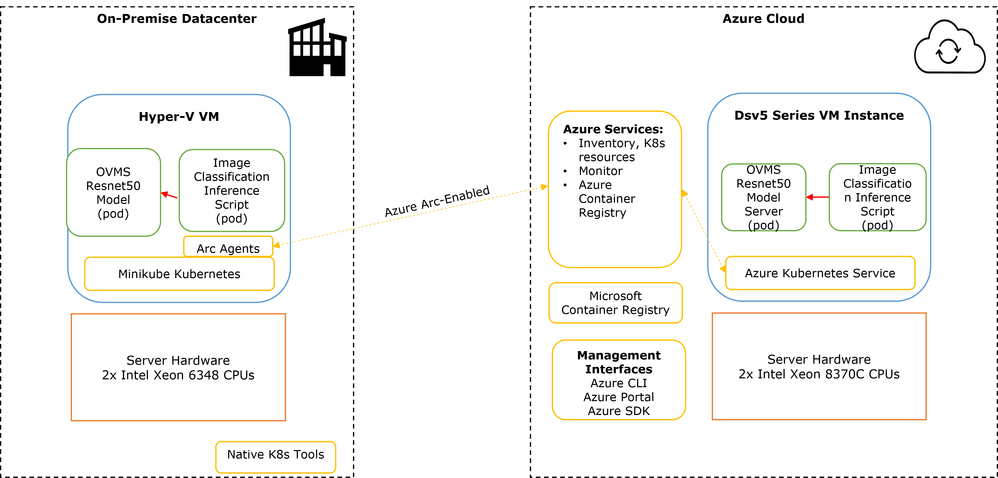

The PoC configuration used for demonstration is shown in Figure 2 below.

1 ON-PREMISE DEPLOYMENT

As a proof of concept, a Kubernetes deployment was set up at one of the Intel onsite lab locations. A dual-socket server configured with 2 x Intel(R) Xeon(R) Gold 6348 CPUs (3rd generation Xeon Scalable processors, codename Ice Lake) was set up with Windows Server 2022 Hyper-V. A virtual machine was created and installed with a single-node Kubernetes deployment using minikube (Reference 5). For an on-premises production Kubernetes deployment, this could instead be Azure Kubernetes Service deployed on Azure Stack HCI, VMware Tanzu on VMware vSphere, Red Hat OpenShift, or another supported Kubernetes platform.

1.1 AZURE ARC CONNECTION

The local minikube based Kubernetes deployment was Azure Arc enabled via a connection procedure to the Azure cloud (Reference 6). Key steps for the connection are identified below, using Azure CLI on the local lab machine:

- Ensure kubeconfig is configured on local machine to enable kubectl to administer the local Kubernetes deployment

- Install Azure CLI extension on local machine: az extension add --name connectedk8s

- Register Azure Arc Providers:

az provider register --namespace Microsoft.Kubernetes

az provider register --namespace Microsoft.KubernetesConfiguration

az provider register --namespace Microsoft.ExtendedLocation

- Create cloud resource group to host minikube cluster: az group create --name ArcK8s --location CentralUS

- Connect the local minikube Kubernetes deployment to Azure Arc:

az connectedk8s connect --name minikube --resource-group ArcK8s --proxy-https http://xxxx --proxy-http xxxx --proxy-skip-range xxxx

Proxy settings were used since the lab machine hosting minikube was connected via a proxy server to the Internet.

- Verify connection of local minikube to Azure Arc: az connectedk8s list --resource-group ArcK8s

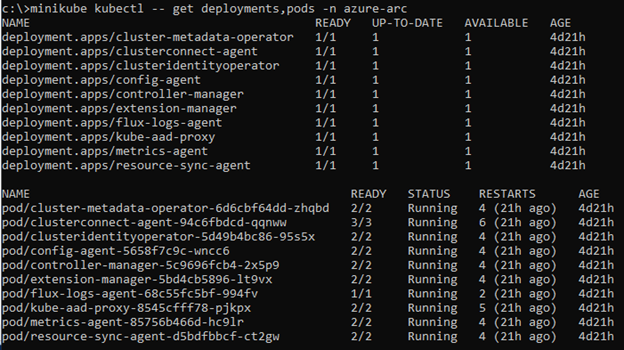

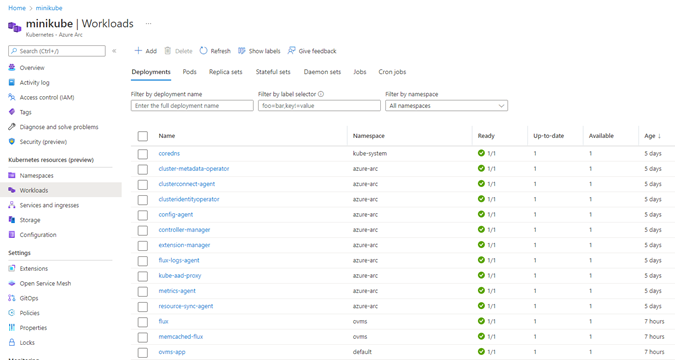

- Azure Arc enablement installs agents via pods on local Kubernetes in a new Kubernetes namespace called “azure-arc”. These agents (deployments and pods) are shown in figure below.

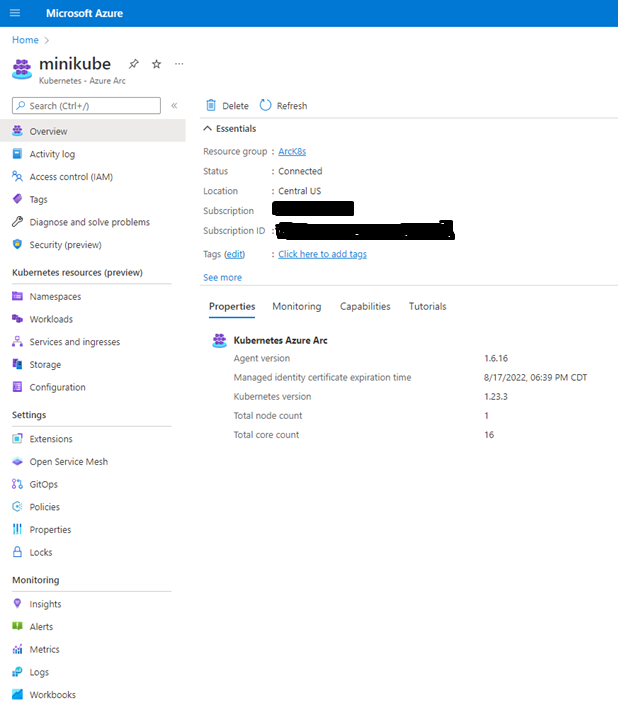

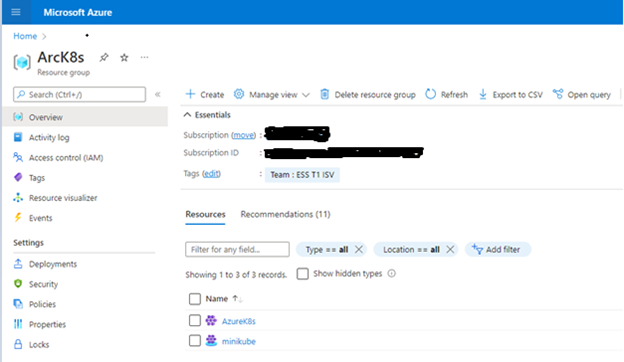
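The connection steps in this section can be collected into a small dry-run script. The sketch below only assembles and prints the Azure CLI invocations in the order described; the function name and the `proxy_args` parameter are illustrative and not part of the PoC. The proxy flags used in the lab would be passed through `proxy_args`, and their values are intentionally left out here.

```python
import shlex


def arc_connect_commands(cluster, resource_group, location, proxy_args=()):
    """Return the Azure CLI invocations from the connection steps, in order."""
    providers = [
        "Microsoft.Kubernetes",
        "Microsoft.KubernetesConfiguration",
        "Microsoft.ExtendedLocation",
    ]
    # Step 1: install the CLI extension for Arc-connected clusters.
    commands = [["az", "extension", "add", "--name", "connectedk8s"]]
    # Step 2: register the three resource providers.
    commands += [
        ["az", "provider", "register", "--namespace", ns] for ns in providers
    ]
    # Step 3: create the resource group that will hold the cluster resource.
    commands.append(
        ["az", "group", "create", "--name", resource_group, "--location", location]
    )
    # Step 4: connect the cluster (proxy flags, if any, are appended as given).
    commands.append(
        ["az", "connectedk8s", "connect", "--name", cluster,
         "--resource-group", resource_group, *proxy_args]
    )
    # Step 5: list connected clusters to verify the connection.
    commands.append(
        ["az", "connectedk8s", "list", "--resource-group", resource_group]
    )
    return commands


commands = arc_connect_commands("minikube", "ArcK8s", "CentralUS")
for argv in commands:
    print(shlex.join(argv))  # dry run: print each command instead of executing it
```

To execute the steps rather than print them, each list could be handed to `subprocess.run(argv, check=True)` on a machine where the Azure CLI is installed and logged in.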

On the Azure portal, the minikube Kubernetes deployment (single-node cluster) can be managed under the created resource group “ArcK8s”, as shown in the screenshot figures below.

1.2 OPENVINO MODEL SERVER (OVMS) & INFERENCE APPLICATION DEPLOYMENT

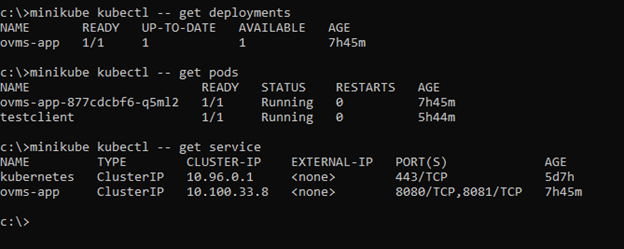

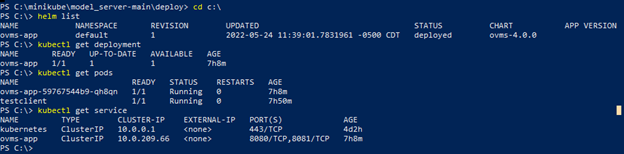

OVMS can be deployed on the local minikube Kubernetes cluster using the Intel-provided sample Helm chart at https://github.com/openvinotoolkit/model_server/tree/main/deploy. OVMS requires model data in a specific directory and file format to support inference requests for the models (Reference 7). The model data can be provided to the OVMS pod in a Kubernetes environment via different pod storage provisioning options: cloud storage, local host node storage, or external storage solutions via Kubernetes persistent volumes. For simplicity, we used local host VM storage to host the model data and attached it to the deployed OVMS pod via the Kubernetes “hostPath” mechanism. A trained Resnet50 model’s data was hosted in the OVMS pod. The model data was provided in OpenVINO optimized format (.bin and .xml files). The steps for installing OVMS in the minikube environment are provided in the Appendix (below). The deployed state of OVMS in Kubernetes is shown in the figure below.

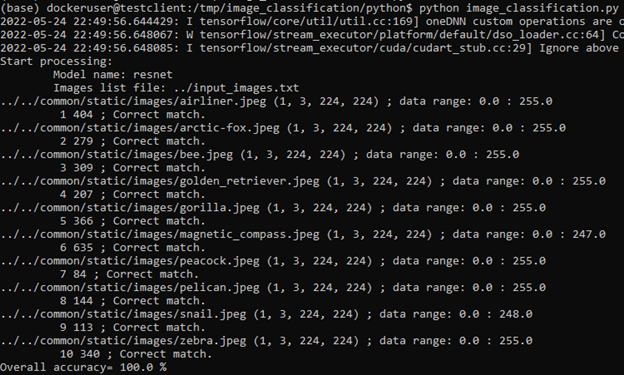

The OVMS deployment can be verified with a demo inference application. Demo inference scripts are provided at https://github.com/openvinotoolkit/model_server/tree/main/demos. We used the image classification script, which submits a list of images one at a time to OVMS for inference. Each inference request specifies the Resnet50 model name to be used for inference. A test client container pod was used to launch the demo inference script. The steps for launching the test client pod and executing the demo inference script are provided in the Appendix. The output of the inference script is shown below in figure 7.
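The demo script talks to OVMS over gRPC. As a dependency-free alternative for a quick check, the sketch below builds (but does not send) a request for the TensorFlow Serving style REST predict endpoint. It assumes the OVMS REST port has been enabled, which the PoC does not state; the port number 8081, the helper name, and the tiny placeholder input are hypothetical and only illustrate the shape of the URL and JSON body.

```python
import json
import urllib.request


def build_predict_request(host, rest_port, model_name, instances, version=None):
    """Build (without sending) a TensorFlow Serving style REST predict request."""
    path = f"/v1/models/{model_name}"
    if version is not None:
        # Pin a specific model version; otherwise the server picks its default.
        path += f"/versions/{version}"
    url = f"http://{host}:{rest_port}{path}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical values: the article documents only the gRPC port, so the REST
# port and the placeholder input below are assumptions for illustration.
request = build_predict_request("10.100.33.8", 8081, "resnet", [[0.0, 0.0, 0.0]])
print(request.get_method(), request.full_url)
```

Sending the request would be `urllib.request.urlopen(request).read()` from a pod that can reach the service. A real Resnet50 request needs an input tensor of the shape the model expects rather than the placeholder used here.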

2 AZURE CLOUD DEPLOYMENT

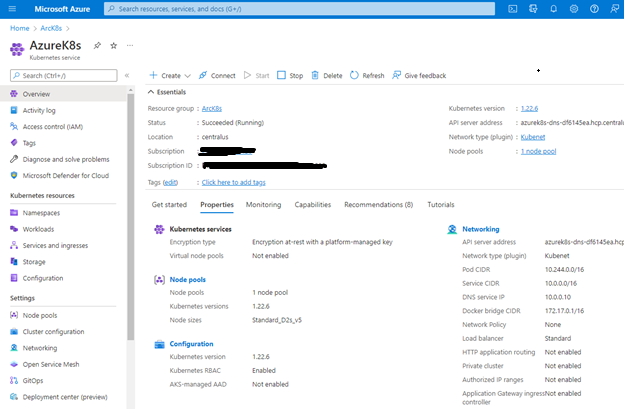

A Kubernetes cluster was deployed in Azure cloud using Azure Kubernetes Service. A single Azure VM (node pool with 1 node) was used for this deployment. The VM instance type chosen was “Standard_D2s_v5”, which includes vCPUs based on 2 x Intel(R) Xeon(R) Platinum 8370C (3rd generation Xeon Scalable processors, codename Ice Lake). The Azure Kubernetes instance was deployed under the same Azure resource group as the earlier local minikube Kubernetes instance. This is shown in the Azure portal figure below (the resource group is “ArcK8s” and the Kubernetes instances are “AzureK8s” and “minikube”).

The Azure Kubernetes instance details are shown in figure below.

2.1 OVMS AND INFERENCE APPLICATION DEPLOYMENT

The same steps used for the on-premises minikube instance were used for the OVMS deployment. Instead of the local VM node, the steps were performed on the Azure cloud VM node and the Azure cloud Kubernetes instance. A client machine was installed with Azure CLI and configured to operate on the Azure cloud Kubernetes cluster with kubectl (Reference 10). The same steps were then performed to deploy the inference demo application container pod to verify the deployment.
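One way to picture "the same steps on both clusters" is a single Helm invocation that differs only in the kubeconfig context it targets. The sketch below prints such a plan without executing it. It assumes the context names are `minikube` and `AzureK8s` (the names a default minikube setup and `az aks get-credentials` would typically create); the helper name is illustrative, and the PoC itself does not state that `--kube-context` was used.

```python
import shlex

# Chart arguments taken from the Appendix; identical for both clusters.
OVMS_CHART_ARGS = [
    "install", "ovms-app", "ovms",
    "--set", "model_name=resnet",
    "--set", "model_path=/models/resnet",
]


def helm_install_for(context):
    """Same chart and values, pointed at a specific kubeconfig context."""
    return ["helm", "--kube-context", context, *OVMS_CHART_ARGS]


# Merges the AKS cluster's credentials into the local kubeconfig so that
# kubectl and helm can reach it from the client machine.
get_credentials = [
    "az", "aks", "get-credentials",
    "--resource-group", "ArcK8s", "--name", "AzureK8s",
]

# Assumed context names; adjust to whatever `kubectl config get-contexts` shows.
plan = [get_credentials] + [helm_install_for(c) for c in ("minikube", "AzureK8s")]
for argv in plan:
    print(shlex.join(argv))  # dry run: print instead of executing
```

The Helm commands would be run from the `model_server/deploy` folder, and the model data must already be present under `/models` on whichever node each cluster schedules the pod to.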

The figure below shows the OVMS deployed state details in Azure cloud Kubernetes instance:

3 SUMMARY

In this article we demonstrated Intel OpenVINO based inference deployment on 3rd Generation Intel Xeon processors, both on premises and in Azure cloud with Kubernetes. These processors include Intel® Deep Learning Boost Vector Neural Network Instructions (VNNI), based on Intel Advanced Vector Extensions 512 (AVX-512), for optimized and improved inference performance. Further, both deployments used the same tools and install methods and were monitored centrally from Azure cloud. This demonstrates the consistency and mobility of deploying inference applications using OpenVINO in a hybrid, Kubernetes-based cloud environment.
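Whether a given Linux node actually exposes VNNI to its workloads can be checked from the CPU flags the kernel reports. The sketch below is a minimal illustration, not part of the PoC: it scans `/proc/cpuinfo`-style text for a flag such as `avx512_vnni`. The sample text is shortened and made up; on a real node the text would come from reading `/proc/cpuinfo`.

```python
def has_cpu_flag(cpuinfo_text, flag):
    """True if any 'flags' line in /proc/cpuinfo-style text lists the flag."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        # Compare whole tokens so that "avx512f" does not match "avx512_vnni".
        if key.strip() == "flags" and flag in value.split():
            return True
    return False


# Shortened, made-up sample; on a Linux node use open("/proc/cpuinfo").read().
sample = "processor\t: 0\nflags\t\t: fpu sse4_2 avx2 avx512f avx512_vnni\n"
print(has_cpu_flag(sample, "avx512_vnni"))
```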

4 APPENDIX

4.1 OVMS DEPLOYMENT ON MINIKUBE

Azure GitOps with Flux enables deploying applications on Arc-enabled Kubernetes clusters with Helm charts (Reference 11). This method was not used for this deployment because the minikube Kubernetes version was incompatible with the resource types required by the Azure GitOps agents. Instead, Helm was used locally to deploy.

The following installation steps were performed on the local machine managing minikube with kubectl:

- Copy model data to minikube host node under /models folder. Pre-trained Resnet50 model data in OpenVINO format (Reference 8) was downloaded and posted under folder structure /models/resnet/1 on minikube host node. The folder convention is /models/model name/version number.

- Run: git clone https://github.com/openvinotoolkit/model_server

- Run: cd model_server/deploy

- Modify ovms/values.yaml file for deployment. In our case, the following values were changed:

service_type: "ClusterIP"

models_host_path: "/models"

- Execute helm chart installation: helm install ovms-app ovms --set model_name=resnet --set model_path=/models/resnet

The above installation pulls the image openvino/model_server:latest from Docker Hub and deploys it on the minikube cluster. The host node /models folder is mapped to the /models folder within the deployed container pod. The model path in helm install is set to match the host folder /models/resnet created in step 1 above. Instead of using Docker Hub as the source of the container image, a private Azure container registry can be created and the image pulled into it. The private registry can then be associated with the local Kubernetes cluster to pull images from (Reference 9).
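Because OVMS depends on the folder convention described in step 1, it can help to check the layout on the host node before installing the chart. The sketch below is one possible check under the assumptions stated in this section: a numeric version folder under the model folder, containing at least one .xml and one .bin file. The function name is illustrative and it is not part of OVMS or the Helm chart.

```python
from pathlib import Path


def check_model_repository(root, model_name):
    """Return a list of problems found under <root>/<model_name>/<version>/."""
    model_dir = Path(root) / model_name
    if not model_dir.is_dir():
        return [f"missing model directory: {model_dir}"]
    # Version folders are expected to be named with a number, for example "1".
    versions = [d for d in model_dir.iterdir() if d.is_dir() and d.name.isdigit()]
    if not versions:
        return [f"no numeric version directory under {model_dir}"]
    problems = []
    for version_dir in sorted(versions, key=lambda d: int(d.name)):
        suffixes = {f.suffix for f in version_dir.iterdir() if f.is_file()}
        # OpenVINO format needs both the topology (.xml) and weights (.bin).
        for required in (".xml", ".bin"):
            if required not in suffixes:
                problems.append(f"{version_dir} has no {required} file")
    return problems
```

On the minikube host node, `check_model_repository("/models", "resnet")` would return an empty list when the layout matches the convention, and a list of human-readable problems otherwise.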

NOTE - When using the recommended GitOps method, a private Azure container registry can be set up to pull the image from instead of Docker Hub. The OpenVINO Model Server image can be pulled into the private Azure container registry from Azure Marketplace - https://azuremarketplace.microsoft.com/en-hk/marketplace/apps/intel_corporation.openvino?tab=PlansAndPrice

4.2 DEMO INFERENCE SCRIPT EXECUTION ON MINIKUBE

The following installation steps were performed on the local machine managing minikube with kubectl:

- Launch an OS container pod on local minikube as test client. In our case we used an image from Microsoft container registry: minikube kubectl -- run testclient --image=mcr.microsoft.com/azureml/tensorflow-2.4-ubuntu18.04-py37-cpu-inference:latest

- Log in to the pod interactively: minikube kubectl -- exec -it testclient -- /bin/bash

- Copy or git clone https://github.com/openvinotoolkit/model_server. Go to model_server/demos/image_classification/python folder.

- Install any missing python packages in this list: grpcio, numpy, tensorflow, tensorflow-serving-api, opencv-python, opencv-python-headless

- Execute demo script: python image_classification.py --grpc_address 10.100.33.8 --grpc_port 8080 --input_name 0 --output_name 1463 --images_list ../input_images.txt --model_name resnet

The script uses the gRPC API to make inference requests to the OVMS container pod. The IP address is the Kubernetes cluster service IP assigned to the OVMS service. The inference request is a sequence of 10 images, sent one at a time, to classify the objects in the images using the resnet model uploaded earlier to OVMS.
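The address and port passed to the demo script come from the Kubernetes Service created for OVMS. As a sketch of where those values live, the function below extracts them from the JSON that `kubectl get service <name> -o json` prints. The service name `ovms-app`, the port name `grpc`, and the trimmed sample document are assumptions for illustration; the actual names depend on the Helm chart.

```python
import json


def grpc_endpoint(service_json, port_name="grpc"):
    """Extract (clusterIP, port) from `kubectl get service <name> -o json` text."""
    spec = json.loads(service_json)["spec"]
    ports = spec.get("ports", [])
    if not ports:
        raise ValueError("service exposes no ports")
    # Prefer the port with the expected name; fall back to the first port.
    chosen = next((p for p in ports if p.get("name") == port_name), ports[0])
    return spec["clusterIP"], chosen["port"]


# Trimmed, hypothetical sample shaped like a Service object; real output
# contains many more fields.
sample = """
{"kind": "Service",
 "metadata": {"name": "ovms-app"},
 "spec": {"type": "ClusterIP", "clusterIP": "10.100.33.8",
          "ports": [{"name": "grpc", "port": 8080, "targetPort": 8080}]}}
"""
address, port = grpc_endpoint(sample)
print(f"--grpc_address {address} --grpc_port {port}")
```

A ClusterIP address is reachable only from inside the cluster, which is why the demo script is run from a test client pod rather than from the managing workstation.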

5 REFERENCES

1. https://docs.microsoft.com/en-us/azure/azure-arc/kubernetes/overview

2. https://docs.microsoft.com/en-us/azure/azure-arc/kubernetes/validation-program

3. https://docs.openvino.ai/latest/index.html, https://github.com/openvinotoolkit/openvino

4. https://docs.openvino.ai/latest/ovms_what_is_openvino_model_server.html, https://github.com/openvinotoolkit/model_server

5. https://minikube.sigs.k8s.io/docs/start/

6. https://docs.microsoft.com/en-us/azure/azure-arc/kubernetes/quickstart-connect-cluster?tabs=azure-cli

7. https://docs.openvino.ai/latest/ovms_docs_models_repository.html

8. http://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.1/models_bin/2/resnet50-binary-0001/FP32-INT1/

9. https://docs.microsoft.com/en-us/azure/container-registry/container-registry-get-started-docker-cli?tabs=azure-cli, https://docs.microsoft.com/en-us/azure/container-registry/container-registry-auth-kubernetes

10. https://docs.microsoft.com/en-us/azure/aks/learn/quick-kubernetes-deploy-cli

11. https://docs.microsoft.com/en-us/azure/azure-arc/kubernetes/use-gitops-with-helm

8/2/22 UPDATE: Blog Reposted under Original Author's name/Intel Community Profile.
