Published January 12, 2022

Gadi Singer is Vice President and Director of Emergent AI Research at Intel Labs leading the development of the third wave of AI capabilities.

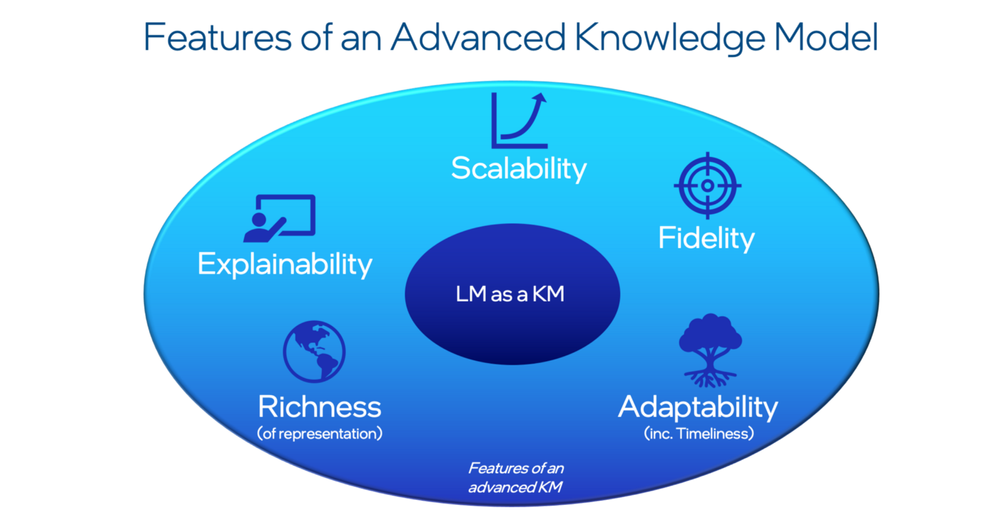

Large language models (LMs) have demonstrated that they can serve as relatively good knowledge models (KMs). But do they excel at performing all the functions required for enabling truly intelligent, cognitive AI systems? The answer, I believe, is no. This post will discuss the five capabilities that make up an advanced KM and how these areas cannot be easily addressed by LMs in their present form. These capabilities are Scalability, Fidelity, Adaptability, Richness and Explainability.

The next wave of AI will shift towards the higher functions of cognition such as commonsense reasoning, insights through abstractions, acquisition of new skills, and novel use of complex information. Rich, deeply structured, diverse, continuously evolving knowledge will be the foundation. Knowledge constructs that allow an AI system to organize its view of the world, comprehend meaning, and demonstrate understanding of events and tasks will likely be at the center of higher levels of machine intelligence. It is therefore valuable to understand the required advanced knowledge model (KM) characteristics and assess the suitability of contemporary fully encapsulated end-to-end generative Language Models (LMs) for the fulfilment of these requirements.

LMs like GPT-3 and T5 are gaining popularity amongst researchers as potential sources of knowledge, often with considerable success. For example, Yejin Choi’s group has pioneered approaches like Generated Knowledge Prompting [1] and Symbolic Knowledge Distillation [2], successfully extracting high-quality commonsense relations by querying a large LM like GPT-3. Clearly, value can be gained from such approaches because they allow for automated database creation without schema engineering, pre-set relations, or human supervision.

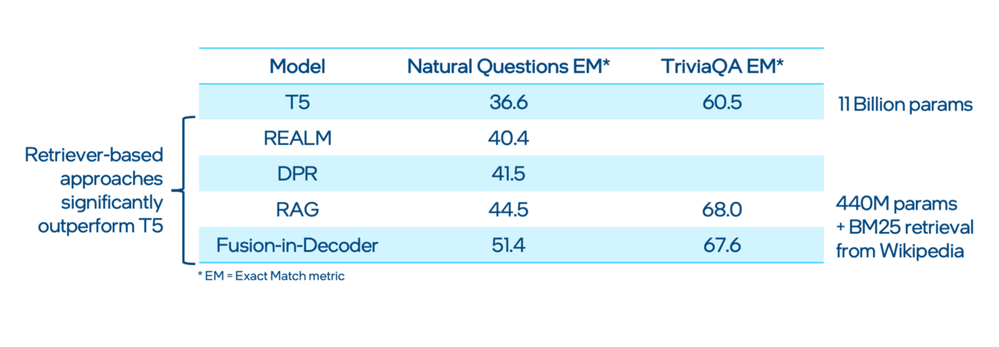

It is tempting to hope that LMs could evolve incrementally to provide the full scope of capabilities required from knowledge bases. However, despite their many advantages and valuable uses, LMs have several structural limitations that make it difficult for them to become a superior, more functional repository of and interface to knowledge. This can be seen, for example, in open-domain question answering benchmarks, where retriever-based approaches significantly outperform generative LMs.

Figure 1. Retriever-based approaches outperform generative LMs for open domain question answering. Source of data: [3].

Image credit: Gadi Singer.

LMs were designed for next-word prediction rather than for the specific purpose of storing knowledge. Therefore, even though they might do well in some cases, their architecture is not an optimal way of representing knowledge, it differs from human memory, and their performance is not streamlined for knowledge-related tasks.

To better understand these shortcomings, consider what an advanced KM could be capable of. I propose five main capabilities: Scalability, Fidelity, Adaptability, Richness, and Explainability.

Image credit: Gadi Singer.

Let’s look at each of them in more detail.

Scalability

Obviously, there is a tremendous amount of information in the world. Large LMs store as much relevant information as their capacity allows in parametric memory and offer fast access at the cost of training efficiency. Such models typically require a very large corpus of data and a significant time investment to achieve performance improvements, and thus are limited in the scope of information they can access once deployed. Retrieval-based approaches such as those referenced in Figure 1 expand the scope of reliably accessible information well beyond what is encoded in the LM. A further impressive demonstration of the essential value of retrieval for accommodating scale came in December 2021 with DeepMind’s RETRO. By separating language skills (embedded in the model) from external knowledge (retrieved from a database of trillions of tokens), DeepMind demonstrated substantial improvement in the results of its 7B-parameter RETRO compared with the 280B-parameter Gopher and the 178B-parameter Jurassic-1.
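The separation between language skill and external knowledge can be illustrated with a minimal sketch. This is not RETRO's actual mechanism: the token-overlap scoring, the `retrieve` function, and the three-sentence `knowledge_base` are all hypothetical stand-ins chosen to keep the example self-contained. The point is only that new knowledge can be added to the external store without retraining anything.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, passages, k=1):
    """Return the k passages sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        passages,
        key=lambda passage: len(query_tokens & tokenize(passage)),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical external knowledge base, kept outside any model weights.
knowledge_base = [
    "Paris is the capital of France.",
    "The Nile is a river in Africa.",
    "Mount Everest is the highest mountain on Earth.",
]

question = "What is the capital of France?"
context = retrieve(question, knowledge_base)

# In a retrieval-augmented system the retrieved text would be passed to the LM
# together with the question; here we only build that combined prompt.
prompt = f"Context: {context[0]}\nQuestion: {question}"
print(prompt)

# Extending the system's knowledge is an append, not a training run.
knowledge_base.append("Canberra is the capital of Australia.")
updated = retrieve("What is the capital of Australia?", knowledge_base)
print(updated[0])
```

A production retriever would typically use learned dense embeddings and an approximate nearest-neighbor index rather than token overlap, but the division of labor stays the same.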

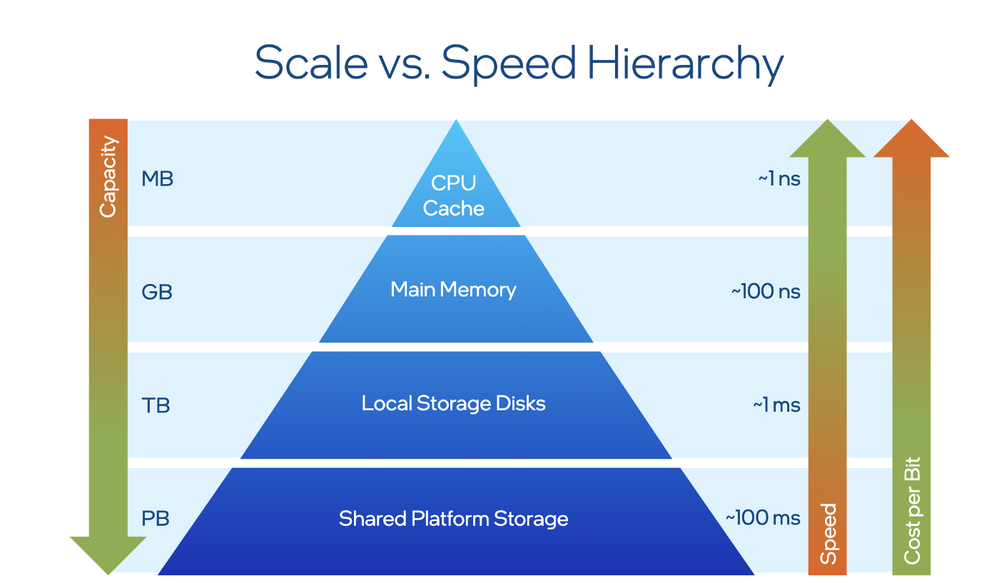

However, retrieval systems might lack the nuance that can be achieved with the help of integrated knowledge. Generally, designing systems that are optimized for accessing relevant information at high speeds creates an inherent tension between scale and expediency, and future systems are expected to have sophisticated layered access architectures. As detailed in the blog post “No One Rung to Rule Them All”, cost-effective access to information necessitates a tiered architecture, which means that a KM must include specialized modules for dealing with each tier.

For example, we may want to differentiate between data items that are accessed once every ten time steps and items that need to be accessed only once every ten trillion time steps. Providing an access hierarchy, as in a computer system, makes it possible to separate data by how frequently it needs to be accessed and to design a different hardware architecture for each frequency tier (think caches and registers vs. hard drives in a computer).

The most frequently used knowledge (or the knowledge that requires the most expediency) should also be the most easily accessible, which requires more expensive architectures and limits the maximum economically feasible capacity of this tier. For example, as the storage capacity in a computer architecture goes up, the system must sacrifice some of the speed at which it is accessed (Figure 3).

Figure 3. The scale vs. speed hierarchy.

Image credit: Gadi Singer.
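The access hierarchy described above can be sketched as a two-tier store. This is a minimal illustration under stated assumptions, not the architecture from the referenced blog post: the class name `TieredKnowledgeStore`, the least-recently-used eviction policy, and the capacity of two items are all hypothetical choices.

```python
from collections import OrderedDict


class TieredKnowledgeStore:
    """Toy two-tier store: a small, fast tier in front of a large, slow tier."""

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # small tier, kept in least-recently-used order
        self.slow = {}             # large tier, stands in for a database or disk
        self.fast_hits = 0
        self.slow_hits = 0

    def put(self, key, value):
        # New knowledge always lands in the large tier.
        self.slow[key] = value
        # Keep any copy already held in the fast tier consistent.
        if key in self.fast:
            self.fast[key] = value

    def get(self, key):
        if key in self.fast:
            self.fast.move_to_end(key)  # mark as most recently used
            self.fast_hits += 1
            return self.fast[key]
        value = self.slow[key]          # raises KeyError for unknown keys
        self.slow_hits += 1
        self._promote(key, value)
        return value

    def _promote(self, key, value):
        self.fast[key] = value
        if len(self.fast) > self.fast_capacity:
            self.fast.popitem(last=False)  # evict the least recently used item


store = TieredKnowledgeStore(fast_capacity=2)
for key, value in [("sky", "blue"), ("grass", "green"), ("snow", "white")]:
    store.put(key, value)

store.get("sky")  # first access comes from the slow tier and is promoted
store.get("sky")  # second access is served by the fast tier
print(store.fast_hits, store.slow_hits)
```

The fast tier's capacity limit is what forces the trade-off: frequently used items stay close at hand, while everything else remains reachable at a higher access cost.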

An ideal KM must be scalable and include the entirety of wisdom that has been accumulated by human civilization (to the extent that might be needed by the AI as it performs and evolves). The system must also be expedient in executing the most critical and time-sensitive tasks and will likely require a tiered architecture like the one described above. While LMs may pick up knowledge about the world through training on large corpora, they have no specific architecture for storing knowledge in an optimized fashion.

Fidelity

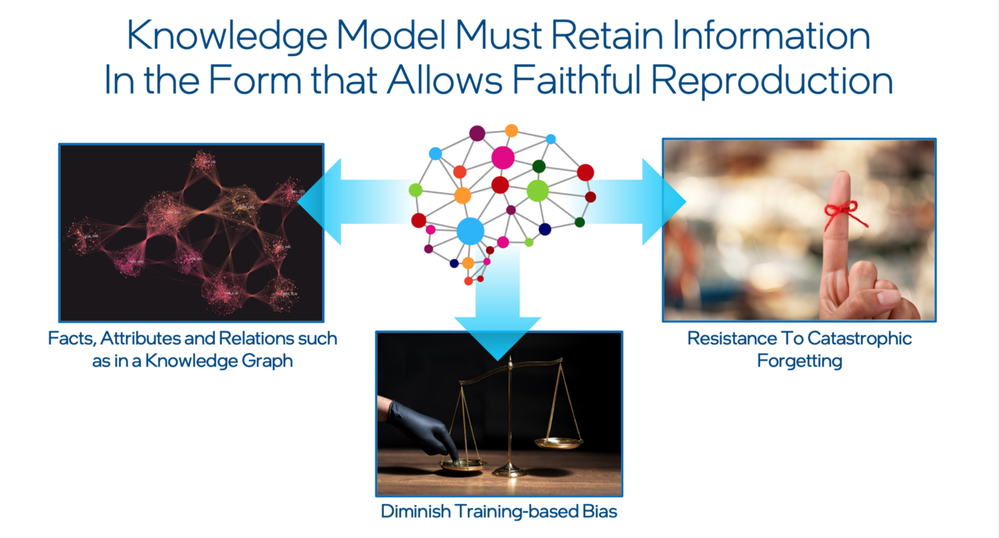

A KM must have high fidelity; that is, it must allow facts, attributes, and relationships to be reproduced in a way that is faithful to their origin and not dependent on the statistics of their occurrence. The precision of the record for a single observation must be equivalent to that of a well-tested multi-observation theory, even if the confidence bounds differ between the two. A high-fidelity KM is less prone to catastrophic forgetting or other types of information decay and allows for explicit protections against the various types of training-based bias.

Figure 4. KMs must retain information that allows for faithful reproduction.

Image credit: Gadi Singer.

In general, LMs, and machine learning (ML) models more broadly, are somewhat weak along this dimension. While there are many approaches to mitigating this problem, none of them expects the LM to rid itself of the bias. The LM must rely on specialized modules and external processes instead.
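One way to picture the fidelity requirement is an explicit store in which every fact is kept verbatim, and the number of observations influences only the confidence attached to it, never the content. The sketch below is a hypothetical illustration: the `Fact` and `FactStore` names, the fields, and the sample entries are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str
    relation: str
    value: str
    source: str        # where the fact came from
    observations: int  # how often it was observed; affects confidence only


class FactStore:
    """Keeps each fact verbatim, keyed by (subject, relation)."""

    def __init__(self):
        self._facts = {}

    def add(self, fact):
        self._facts[(fact.subject, fact.relation)] = fact

    def lookup(self, subject, relation):
        return self._facts[(subject, relation)]


store = FactStore()
store.add(Fact("water", "boils_at_sea_level", "100 C", "textbook", 10_000))
store.add(Fact("patient_17", "allergy", "penicillin", "intake form", 1))

# The fact seen once is reproduced as exactly as the fact seen 10,000 times.
rare = store.lookup("patient_17", "allergy")
common = store.lookup("water", "boils_at_sea_level")
print(rare.value, rare.observations)
print(common.value, common.observations)
```

In a purely statistical model, by contrast, a fact observed once competes with everything else in the training data and may not be recoverable at all.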

Adaptability

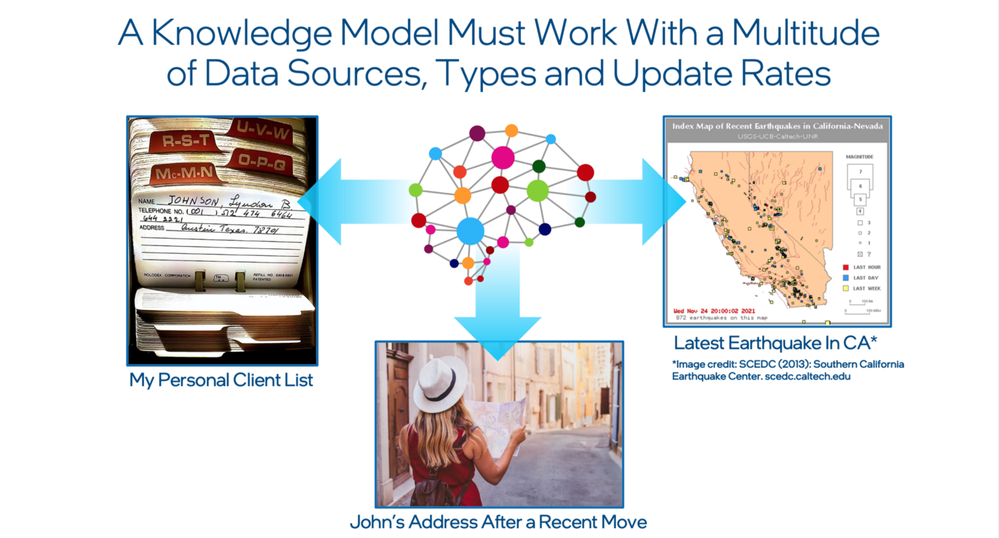

A KM must work with many data sources, types, and update rates. A dentist in a small office needs to work with patient information, update it as often as it changes, and associate different data types with the same patient (diagnosis in a text file, X-ray images). Each patient record is updated on a per-visit basis. On the other hand, a financial institution deals with a completely different type of information profile with much faster update rates and much higher volumes. This type of system is existentially dependent on how quickly it can process new information to detect fraud and errors.

Image credit: Gadi Singer and SCEDC.

The LMs of today, even though they may have been trained on very recent data, are not effective at locally altering the network to reflect the addition or alteration of an isolated data point. Any fine-tuning requires a full or at least a substantial update that goes well beyond the scope of the data point being added or altered and risks corrupting unrelated knowledge stored in the same system. An advanced KM cannot have this limitation. It needs to handle various sources of information with diverse data types, and it must allow for economical and timely updates that match the pace of change of the relevant knowledge.
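For contrast, a local update in an explicit store touches only the record being changed. The following sketch uses a plain dictionary as a stand-in for a knowledge store; the record layout, the file names, and the `update_record` helper are hypothetical and loosely follow the dentist example above.

```python
import copy

# Hypothetical patient records mixing data types (text fields, image file names).
records = {
    "patient_1": {"diagnosis": "cavity", "xray_file": "p1_2021.png"},
    "patient_2": {"diagnosis": "healthy", "xray_file": "p2_2021.png"},
    "patient_3": {"diagnosis": "gingivitis", "xray_file": "p3_2021.png"},
}


def update_record(store, record_id, field, value):
    """Change one field of one record and leave everything else alone."""
    store[record_id][field] = value


snapshot = copy.deepcopy(records)
update_record(records, "patient_2", "diagnosis", "cavity")

# Compare against the snapshot to see which records were affected.
changed = [rid for rid in records if records[rid] != snapshot[rid]]
print(changed)
```

Only the updated record differs from the snapshot, which is the isolation property that fine-tuning a shared set of weights does not provide.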

Richness

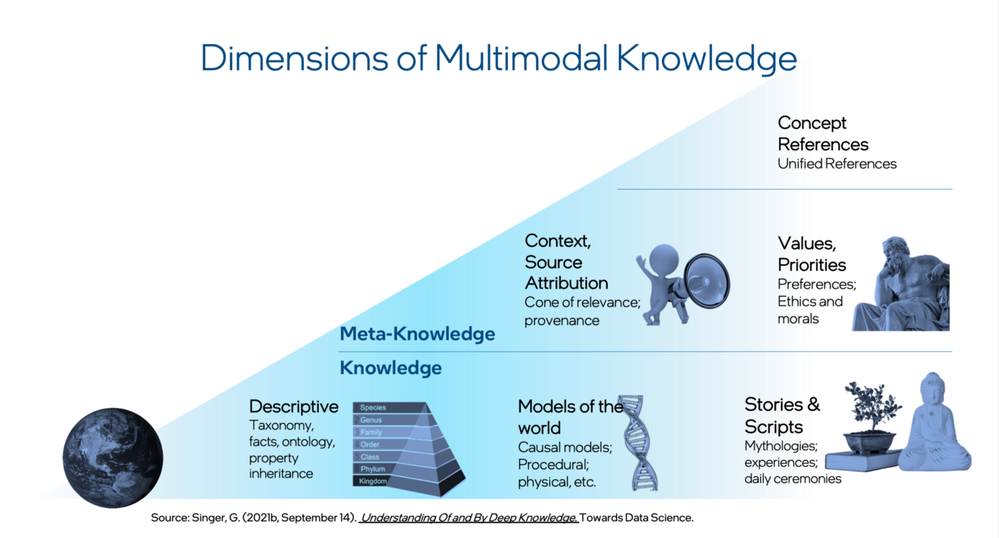

Another capability is deciphering the richness of knowledge, which is the ability to capture all the complexities of the relations within the knowledge expressed through language and other modalities. As discussed in the article on dimensions of knowledge, knowledge can be separated into direct knowledge and meta-knowledge. Direct knowledge can be descriptive, as in taxonomies, ontologies, or property inheritance. This type of knowledge can be stored in many different modalities: language, 3D point cloud, sound, image, etc. It could also represent models of the world, such as causal, procedural, or physical models. Finally, it could include stories and scripts, such as mythologies, experience descriptions, daily ceremonies, etc.

Figure 6. Dimensions of multimodal knowledge.

Image credit: Gadi Singer.

The meta-knowledge dimensions and constructs include source attribution, values and priorities, and concepts. A human being can discriminate between sources of information based on their trustworthiness. For example, one cannot expect to treat the average clickbait in the yellow press as being as credible as an article from the Nature science journal.

Humans also assess information in the context of their value systems and preferences and encapsulate it into conceptual structures for long-term storage and reasoning. While LMs reflect some of these knowledge dimensions (GPT-3 seems to be quite aware of the typical joke script, for example), they appear to represent only a subset of them. It is likely that a different architecture is needed to capture the full richness of human knowledge.

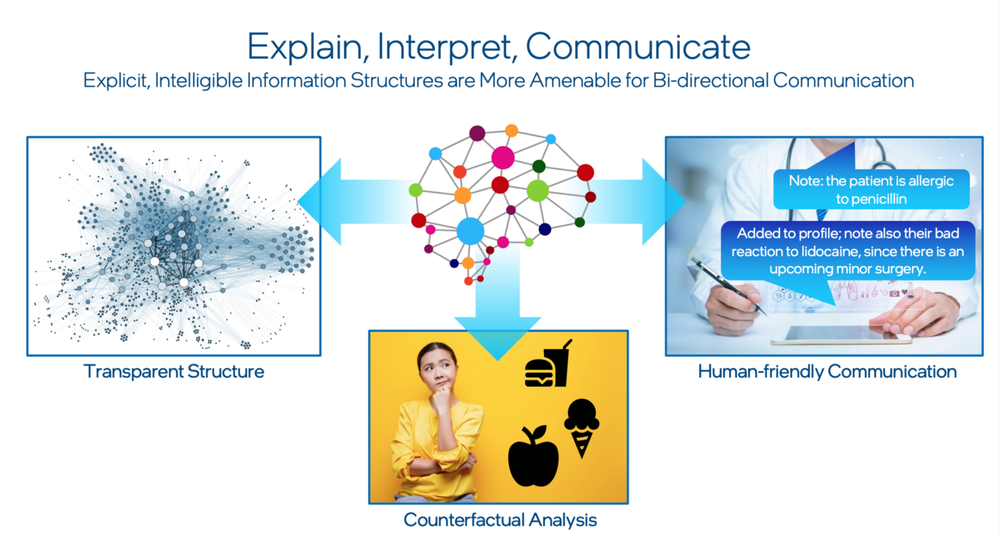

Explainability

When AI interacts with humans, especially on high-stakes tasks such as medical, financial, or law enforcement applications, its ability to explain its choices and communicate naturally becomes very important. Such explainability and interpretability are much easier to achieve with the help of an explicit, intelligible source of knowledge. Explicit knowledge allows for a transparent structure that can be inspected, edited, and integrated into causal inference or counterfactual reasoning modules. It provides a better foundation for communication between AI and the human user, where information can be exchanged, adopted, assimilated, and communicated back.

Image credit: Gadi Singer.
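As a minimal sketch of how explicit knowledge supports explanation, the example below stores knowledge as subject-relation-object triples and returns the chain of triples that links a question to its answer. The triples, the relation names, and the `explain` function are hypothetical; a real system would use a much larger knowledge graph and richer inference than a breadth-first search.

```python
from collections import deque

# Toy knowledge graph; every edge can be inspected and edited directly.
TRIPLES = [
    ("aspirin", "is_a", "nsaid"),
    ("ibuprofen", "is_a", "nsaid"),
    ("nsaid", "may_cause", "stomach irritation"),
]


def explain(start, goal, triples):
    """Return the chain of triples linking start to goal, or None if absent."""
    outgoing = {}
    for subject, relation, obj in triples:
        outgoing.setdefault(subject, []).append((relation, obj))

    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, obj in outgoing.get(node, []):
            if obj not in visited:
                visited.add(obj)
                queue.append((obj, path + [(node, relation, obj)]))
    return None


chain = explain("aspirin", "stomach irritation", TRIPLES)
for subject, relation, obj in chain:
    print(subject, relation, obj)
```

Because the answer is derived from named edges, a human reviewer can see which facts were used, challenge any one of them, and correct it in place.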

Conclusion

An end-to-end LM, however large or expensive, cannot perform all the functions required of a more advanced KM to support the next generation of AI capabilities. But what does this indicate about the actual architecture that can support the next generation of AI systems? Some preliminary progress has already been made in areas like reasoning and automatic expansion of knowledge graphs, which I have discussed in depth when describing the Three Levels of Knowledge architectural blueprint.

Whatever the future holds, it will require an industry effort to develop AI systems with KMs that cover the five capabilities so that we can achieve truly intelligent cognitive AI. In future blogs we will discuss some potential approaches to developing these capabilities in future AI systems.

References

1. Liu, J., Liu, A., Lu, X., Welleck, S., West, P., Bras, R. L., … & Hajishirzi, H. (2021). Generated knowledge prompting for commonsense reasoning. arXiv preprint arXiv:2110.08387.

2. West, P., Bhagavatula, C., Hessel, J., Hwang, J. D., Jiang, L., Bras, R. L., … & Choi, Y. (2021). Symbolic knowledge distillation: from general language models to commonsense models. arXiv preprint arXiv:2110.07178.

3. Izacard, G., & Grave, E. (2020). Leveraging passage retrieval with generative models for open domain question answering. arXiv preprint arXiv:2007.01282.

4. Merrill, W., Goldberg, Y., Schwartz, R., & Smith, N. A. (2021). Provable Limitations of Acquiring Meaning from Ungrounded Form: What will Future Language Models Understand?. arXiv preprint arXiv:2104.10809.

5. Nematzadeh, A., Ruder, S., & Yogatama, D. (2020). On memory in human and artificial language processing systems. In Proceedings of ICLR Workshop on Bridging AI and Cognitive Science.

6. Singer, G. (2021, December 21). No One Rung to Rule Them All: Addressing Scale and Expediency in Knowledge-Based AI. Medium. https://towardsdatascience.com/no-one-rung-to-rule-them-all-208a178df594

7. Kirkpatrick, J., Pascanu, R., Rabinowitz, N., Veness, J., Desjardins, G., Rusu, A. A., … & Hadsell, R. (2017). Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences, 114(13), 3521–3526.

8. Singer, G. (2021a, December 19). Understanding of and by Deep Knowledge — Towards Data Science. Medium. https://towardsdatascience.com/understanding-of-and-by-deep-knowledge-aac5ede75169

9. Branwen, G. (2020, June 19). GPT-3 Creative Fiction. https://www.gwern.net/GPT-3#humor

10. Singer, G. (2021b, December 20). Thrill-K: A Blueprint for The Next Generation of Machine Intelligence. Medium. https://towardsdatascience.com/thrill-k-a-blueprint-for-the-next-generation-of-machine-intelligence-7ddacddfa0fe#e6a1-20df55d5621c

11. Borgeaud, S., Mensch, A., Hoffmann, J., Cai, T., Rutherford, E., Millican, K., … & Sifre, L. (2021). Improving language models by retrieving from trillions of tokens. arXiv preprint arXiv:2112.04426.

12. Alammar, J. (2022, January 3). The Illustrated Retrieval Transformer. http://jalammar.github.io/illustrated-retrieval-transformer/
