Authors: Corey Heath and Ojas Sawant

Introduction

The 11th Gen Intel® Core™ Processor family, formerly known by the code name Tiger Lake, introduced new processor features that improve performance on AI workloads by taking advantage of lower-precision data representations to produce faster results on inference tasks. Our goal is to walk through how you can run your own benchmarking experiments on Intel® DevCloud for the Edge using the Deep Learning Workbench provided in the OpenVINO™ toolkit.

For this tutorial, we’re going to run our experiments on three systems hosted on the DevCloud and compare their performance on AI workloads using FP16 and INT8 data representations. If you’re interested in more formal performance benchmarks, they can be found on the OpenVINO benchmark results page.

System Comparison

For our experiments, we want to select hardware with similar attributes from three different generations of the systems available on the DevCloud. We’ll be examining the performance of the Intel® Core™ i7-1185G7E, Intel® Core™ i7-10710U, and Intel® Core™ i7-8665UE processors. A comparison of the selected processors can be found on the Intel product specification page. In Table 1, we can see that the i7-10710U system, formerly known as Comet Lake, has a higher max turbo frequency and more CPU cores and threads. Ideally, we would use systems with the same configuration; however, we can still observe trends in the systems’ performance when running our experiments with different data representations.

Table 1: At-a-glance comparison of Intel® Core™ systems used in the experiments.

| Generation | 8th Gen Intel® Core™ | 10th Gen Intel® Core™ | 11th Gen Intel® Core™ |
|---|---|---|---|
| Processor | i7-8665UE (formerly Whiskey Lake) | i7-10710U (formerly Comet Lake) | i7-1185G7E (formerly Tiger Lake) |
| Max Turbo Frequency (GHz) | 4.4 | 4.7 | 4.4 |
| CPU Cores | 4 | 6 | 4 |
| CPU Threads | 8 | 12 | 8 |
| TDP | 15 W | 15 W | 15 W |
| RAM | 16 GB | 16 GB | 16 GB |
| Memory Channels | 2 | 2 | 2 |
| Memory Type | DDR4-2400 | DDR4-2666 | DDR4-3200 |

A key differentiator between the i7-1185G7E and the older-generation processors is the inclusion of Intel® AVX-512 and Intel® Deep Learning Boost (Intel DL Boost), which adds Vector Neural Network Instructions (VNNI). These features enable additional INT8 optimizations that aren’t available on the previous generations.
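Whether a given machine exposes these instructions can be checked from the operating system. On Linux, the kernel lists CPU feature flags in `/proc/cpuinfo`; the sketch below assumes the flag names `avx512f` (AVX-512 Foundation) and `avx512_vnni` (AVX-512 VNNI), as reported by recent kernels. Of the three systems above, we would expect only the i7-1185G7E to report both.

```python
from typing import Set

# Linux /proc/cpuinfo flag names (assumed, as reported by recent kernels):
# avx512f is the AVX-512 foundation, avx512_vnni the VNNI extension used
# by Intel DL Boost for INT8 dot products.
REQUIRED_FLAGS = {"avx512f", "avx512_vnni"}


def cpu_flags(cpuinfo_text: str) -> Set[str]:
    """Return the feature flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def supports_avx512_vnni(cpuinfo_text: str) -> bool:
    """True if both AVX-512 Foundation and AVX-512 VNNI are advertised."""
    return REQUIRED_FLAGS <= cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or /proc is unavailable
    print("AVX-512 VNNI available:", supports_avx512_vnni(text))
```

Parsing the text rather than calling a CPUID library keeps the sketch dependency-free; the same check works on a saved copy of `/proc/cpuinfo` from a remote node.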

Experiments

We’re going to use the Deep Learning Workbench (DL Workbench) hosted on Intel® DevCloud to conduct our experiments. DL Workbench is a graphical user interface for working with model execution, optimization, and telemetry tools. Hosting DL Workbench on the DevCloud lets you download and optimize models, then run experiments on a range of Intel hardware platforms. On DevCloud, experiments run directly on the selected hardware platform, not in a virtual machine. To access DL Workbench, create an Intel® Developer account and open JupyterLab from the Intel® DevCloud homepage.

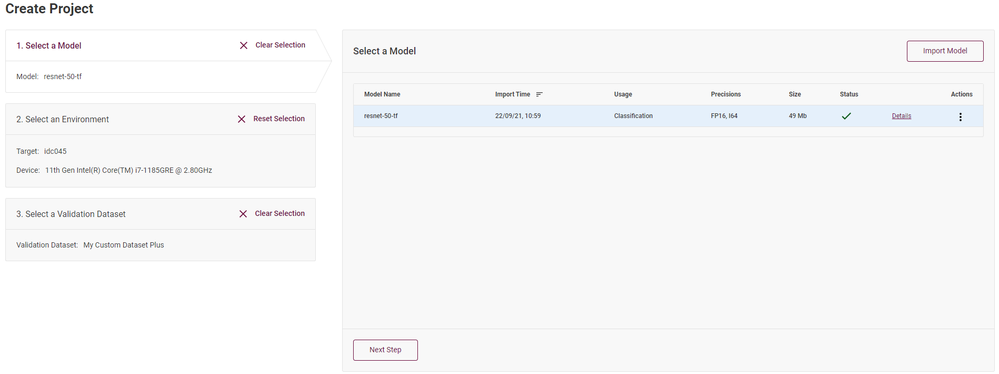

Model

For the comparison experiments, we’ll use the ResNet-50 TensorFlow model, a 50-layer convolutional neural network trained on the ImageNet dataset. We can download the model in DL Workbench through the OpenVINO Open Model Zoo interface. With the ‘1. Select a Model’ menu selected (Figure 1, above), clicking the ‘Import’ button in the top right guides you through downloading a pre-trained model or uploading a custom model of your own. After downloading ResNet-50, DL Workbench converts it to OpenVINO’s intermediate representation (IR) at the chosen precision, either FP16 or FP32. To start, we’ll use FP16.

Dataset

We’ll use the images provided in the DL Workbench to create a dataset for our experiments. In the menu in Figure 1, clicking ‘3. Select a Validation Dataset’ will display any previous datasets that have been uploaded. To import or create a new dataset, click the button in the top right to access the ‘Import Dataset’ menu. Under the ‘Import Dataset’ menu, there are 14 base images we can select to use as a dataset. Additionally, we can enlarge the dataset using image augmentations (including image flips, noise creation, and color transformations) provided by the interface. For our experiments, we’ll use the base images and augmentations of these images to increase the dataset to 336 samples.
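DL Workbench applies the augmentations for us, but the arithmetic is worth spelling out: 336 samples from 14 base images is 24 samples per image if spread evenly, that is, each original plus 23 augmented variants. The sketch below illustrates the three kinds of transformations on grayscale images stored as nested lists. How DL Workbench actually mixes and parameterizes its augmentations isn’t described here, so the random choices, ranges, and helper names are assumptions for illustration.

```python
import random
from typing import List

Image = List[List[int]]  # grayscale pixel rows, values 0-255


def clip(p: float) -> int:
    """Round to the nearest integer and clamp to the valid pixel range."""
    return min(255, max(0, round(p)))


def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def add_noise(img: Image, sigma: float, rng: random.Random) -> Image:
    """Add zero-mean Gaussian noise to every pixel."""
    return [[clip(p + rng.gauss(0.0, sigma)) for p in row] for row in img]


def scale_brightness(img: Image, factor: float) -> Image:
    """A simple intensity transform standing in for a color transformation."""
    return [[clip(p * factor) for p in row] for row in img]


def augment(base_images: List[Image], samples_per_image: int, seed: int = 0) -> List[Image]:
    """Keep each base image and add (samples_per_image - 1) random variants."""
    rng = random.Random(seed)
    dataset: List[Image] = []
    for img in base_images:
        dataset.append(img)
        for _ in range(samples_per_image - 1):
            out = hflip(img) if rng.random() < 0.5 else img
            out = add_noise(out, sigma=rng.uniform(1.0, 10.0), rng=rng)
            dataset.append(scale_brightness(out, rng.uniform(0.8, 1.2)))
    return dataset


if __name__ == "__main__":
    # 14 tiny placeholder images stand in for the 14 base images.
    base = [[[10 * i, 128], [64, 200]] for i in range(14)]
    print(len(augment(base, samples_per_image=24)))  # 336
```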

Alternatively, you could upload your own dataset by using the ‘Upload Dataset’ tab provided in the ‘Import Dataset’ menu.

FP16 Experiments

Now that we’ve imported ResNet-50 and created the dataset, we’re ready to select the platforms and run our experiments. The available platforms are listed in the center of the page, as shown in Figure 1. A combo box below the platform list lets you switch between GPU and CPU. Our experiments will focus on the CPU.

DL Workbench uses a platform ID to identify available systems. To run on the same systems in DevCloud, select the platform IDs listed in Table 2.

Table 2: DL Workbench platform IDs for the three systems.

| Generation | Processor | Platform ID |
|---|---|---|
| 8th Gen Intel® Core™ | i7-8665UE | Idc016ai7 |
| 10th Gen Intel® Core™ | i7-10710U | Idc022 |
| 11th Gen Intel® Core™ | i7-1185G7E | Idc045 |

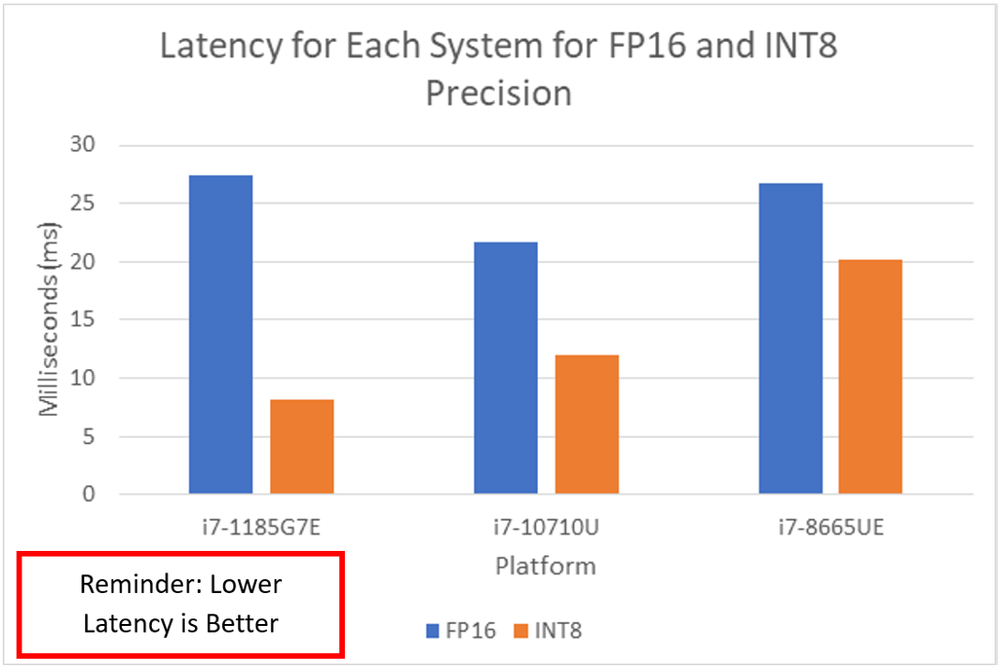

Our experiments were run with the default settings: a batch size of one and a single stream, so a single inference request processes the dataset one image at a time. After running the three experiments, the display shows the throughput the model achieved on our dataset, as seen in Figure 2. Throughput is the rate, in frames per second (FPS), at which inference is performed on the dataset. The model’s latency, the average time in milliseconds to perform inference on one sample from the dataset, can be viewed in the ‘Selected Experiment’ section of the project page after the experiment completes.
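The two metrics are related but not identical. The sketch below shows how they could be measured when inference runs one sample at a time, as in the default single-stream, batch-size-1 setup. `infer` is a hypothetical stand-in for a call into an inference engine; DL Workbench measures these numbers itself, and its exact timing method may differ.

```python
import time
from statistics import mean
from typing import Any, Callable, Iterable, Tuple


def benchmark(infer: Callable[[Any], Any], samples: Iterable[Any]) -> Tuple[float, float]:
    """Run `infer` on each sample sequentially (batch size 1, one request in flight).

    Returns (throughput in frames per second, mean latency in milliseconds).
    """
    latencies = []
    start = time.perf_counter()
    for sample in samples:
        t0 = time.perf_counter()
        infer(sample)  # hypothetical inference call
        latencies.append(time.perf_counter() - t0)
    total_seconds = time.perf_counter() - start
    if not latencies:
        raise ValueError("no samples to benchmark")
    throughput_fps = len(latencies) / total_seconds
    mean_latency_ms = mean(latencies) * 1000.0
    return throughput_fps, mean_latency_ms


if __name__ == "__main__":
    # Dummy workload standing in for a model: about 5 ms per "inference".
    fps, latency = benchmark(lambda _: time.sleep(0.005), range(20))
    print(f"throughput: {fps:.1f} FPS, mean latency: {latency:.2f} ms")
```

Because the total wall time is at least the sum of the per-sample latencies, throughput in this sequential setup is at most 1000 / latency_ms. With more streams or larger batches, requests overlap and throughput can exceed that bound.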

The results show that the i7-10710U system had the best FP16 performance on the dataset, likely because of its higher max turbo frequency and additional cores and threads (Table 1). The i7-8665UE and i7-1185G7E systems have the same max turbo frequency and core count; despite this similarity, the i7-1185G7E system performed better, benefiting from its newer architecture design.

INT8 Optimizations

We can optimize the model to INT8 precision from the experiment result view. This view is displayed immediately after an experiment completes, or it can be opened for a previously run experiment by clicking the ‘Open’ button in the model view (Figure 3). The model view shows the details of the experiments run with the model on different platforms. The accuracy column isn’t currently enabled in the DevCloud version of DL Workbench, and because we used an unannotated dataset, accuracy wouldn’t have been reported anyway. The runtime precision column shows the precision used during execution. We imported our model with FP16 precision; however, the CPU executes it in 32-bit floating point (FP32), so the FP16 values are converted to FP32 for execution.
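Widening FP16 to FP32 is a format conversion rather than a simple padding of the bits: FP16 uses a 5-bit exponent with a bias of 15, while FP32 uses an 8-bit exponent with a bias of 127, so the exponent field has to be re-encoded. The sketch below uses Python’s `struct` module, whose `e` format code packs IEEE 754 half-precision values, to compare a proper conversion with naive zero padding.

```python
import struct


def fp16_bits(x: float) -> int:
    """IEEE 754 half-precision (FP16) bit pattern of x."""
    return struct.unpack("<H", struct.pack("<e", x))[0]


def fp32_bits(x: float) -> int:
    """IEEE 754 single-precision (FP32) bit pattern of x."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def widen_fp16_to_fp32(bits16: int) -> float:
    """Proper conversion: decode the FP16 value, then store it as FP32.

    Every FP16 value is exactly representable in FP32, so nothing is lost.
    """
    value = struct.unpack("<e", struct.pack("<H", bits16))[0]
    return struct.unpack("<f", struct.pack("<f", value))[0]


def naive_zero_pad(bits16: int) -> float:
    """Incorrect 'padding': append 16 zero bits and read the result as FP32."""
    return struct.unpack("<f", struct.pack("<I", bits16 << 16))[0]


if __name__ == "__main__":
    one16 = fp16_bits(1.0)
    print(hex(one16))                 # 0x3c00
    print(hex(fp32_bits(1.0)))        # 0x3f800000
    print(widen_fp16_to_fp32(one16))  # 1.0
    print(naive_zero_pad(one16))      # 0.0078125
```

The same value 1.0 has different bit patterns in the two formats, and reading zero-padded FP16 bits as FP32 yields 2^-7 instead of 1.0.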

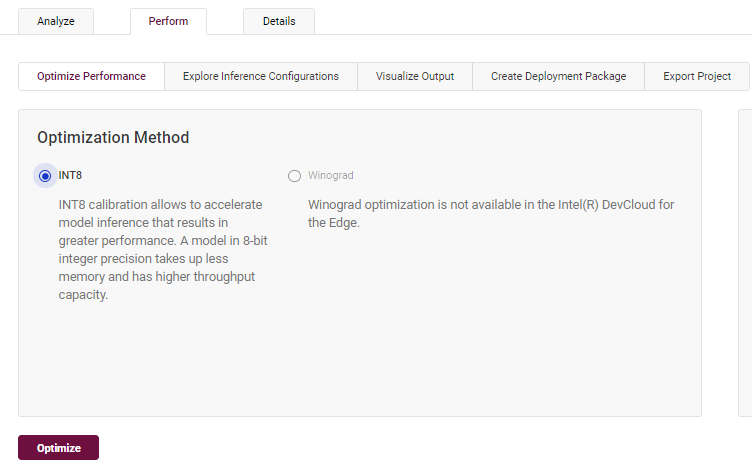

After clicking ‘Open’ in the model view, the INT8 optimization options are located under the ‘Perform’ tab, as shown in Figure 4. Selecting ‘INT8’ and clicking ‘Optimize’ opens an options menu that provides different calibration methods. Because our dataset is unannotated, we select the options that maximize the model’s performance.

This performance optimization comes at the cost of model accuracy. INT8 optimization reduces the number of bits used to represent numeric values in the model. Handling a smaller amount of data allows better use of cache and memory and reduces data transfer and computation times. However, the reduced representation also loses precision: lower-precision values can discard details the model uses to discriminate between classes, reducing inference accuracy. The loss is generally small, but some tasks can’t afford a significant drop in accuracy.
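To make the precision loss concrete, here is a generic symmetric, per-tensor INT8 quantization scheme: choose a scale so the largest magnitude maps to 127, round each value to the nearest step, and multiply back to recover an approximation. This is an illustrative sketch with made-up weights; the algorithms POT actually applies are more involved.

```python
from typing import List, Sequence, Tuple


def quantize_int8(values: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric, per-tensor linear quantization to signed 8-bit codes.

    The scale maps the largest magnitude onto 127; each value is rounded
    to the nearest step and clipped to [-127, 127].
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: Sequence[int], scale: float) -> List[float]:
    """Map INT8 codes back to approximate real values."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.31, -1.27, 0.004, 0.8888, -0.05]  # made-up FP32 weights
    codes, scale = quantize_int8(weights)
    for w, r in zip(weights, dequantize(codes, scale)):
        print(f"{w:+.4f} -> {r:+.4f}  (error {abs(w - r):.4f})")
    print("FP32 bytes:", 4 * len(weights), " INT8 bytes:", len(weights))
```

Each value shrinks from 4 bytes to 1 byte, and the rounding error per value is at most half a quantization step (`scale / 2`). A small value such as 0.004 collapses to zero here, which is the kind of lost detail described above.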

Quantization isn’t an all-or-nothing option. Calibration can take accuracy into account and find a balance between improved computational performance and an acceptable loss of inference accuracy. In DL Workbench, with an annotated dataset, you can select options and thresholds that balance accuracy degradation against increased performance.
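The balancing act can be sketched as a greedy loop, similar in spirit to accuracy-aware quantization: start with every layer in INT8 and, while the accuracy drop exceeds a threshold, move the layer whose reversion helps most back to floating point. The layer names, accuracy figures, and the `evaluate` callback are hypothetical; a real run would score each candidate model on an annotated dataset, and POT’s implementation differs in its details.

```python
from typing import Callable, Dict, Iterable, Set


def accuracy_aware_fallback(
    layers: Iterable[str],
    evaluate: Callable[[Set[str]], float],
    float_accuracy: float,
    max_drop: float,
) -> Set[str]:
    """Return the set of layers that can stay in INT8 within `max_drop`.

    `evaluate(int8_layers)` scores the model when exactly those layers are
    quantized and the rest run in floating point.
    """
    int8 = set(layers)
    while int8 and float_accuracy - evaluate(int8) > max_drop:
        # Try reverting each remaining layer; keep the revert that helps most.
        # Iterate in sorted order so ties are broken deterministically.
        best = max(sorted(int8), key=lambda name: evaluate(int8 - {name}))
        int8.remove(best)
    return int8


if __name__ == "__main__":
    # Hypothetical accuracy penalty incurred when each layer is quantized.
    penalty: Dict[str, float] = {"conv1": 0.010, "res2": 0.002, "res3": 0.001, "fc": 0.008}
    fp32_acc = 0.760

    def evaluate(int8_layers: Set[str]) -> float:
        return fp32_acc - sum(penalty[name] for name in int8_layers)

    kept = accuracy_aware_fallback(penalty, evaluate, fp32_acc, max_drop=0.005)
    print(sorted(kept))  # ['res2', 'res3']
```

A looser threshold keeps more layers in INT8 (faster); a tighter one reverts more layers (more accurate).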

The INT8 optimizations are performed on the selected system node using the Post-Training Optimization Tool (POT) included in the OpenVINO toolkit.
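DL Workbench drives POT for you. If you later want to run a similar optimization outside the GUI, POT 2021.x is configured with a JSON file and launched from the command line (for example, `pot -c pot_config.json`). The sketch below builds such a config in Python for DefaultQuantization on an unannotated image folder. The field names reflect our reading of the POT 2021.4 documentation, and the model name and paths are hypothetical, so check them against the documentation for your toolkit version; this is not the configuration DL Workbench generated for our experiments.

```python
import json


def make_pot_config(model_xml: str, model_bin: str, images_dir: str) -> dict:
    """Build a POT-style config for DefaultQuantization on unannotated images.

    The "simplified" engine reads raw images from a folder and needs no
    annotations, matching the unannotated dataset used in this article.
    """
    return {
        "model": {
            "model_name": "resnet-50-tf",  # hypothetical name
            "model": model_xml,  # IR topology (.xml)
            "weights": model_bin,  # IR weights (.bin)
        },
        "engine": {
            "type": "simplified",
            "data_source": images_dir,
        },
        "compression": {
            "target_device": "CPU",
            "algorithms": [
                {
                    "name": "DefaultQuantization",
                    "params": {
                        # "performance" favors speed; "mixed" trades some
                        # speed for accuracy.
                        "preset": "performance",
                        # Number of samples used to collect statistics.
                        "stat_subset_size": 300,
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    cfg = make_pot_config("ir/resnet-50-tf.xml", "ir/resnet-50-tf.bin", "images/")
    print(json.dumps(cfg, indent=4))
```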

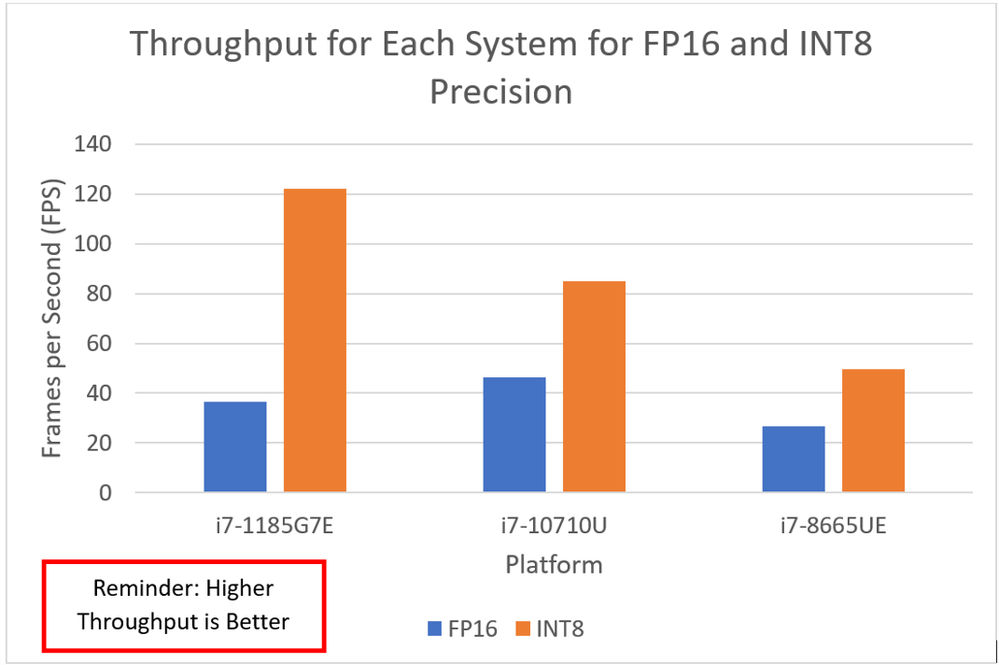

Running the INT8 optimization also executes an experiment with a batch size of one and a single stream. These results, presented in Figure 5, show a significant increase in throughput. The i7-1185G7E system now has a much higher throughput than the i7-10710U system, processing over 37 more samples per second.

Results Recap

The throughput bar graph in Figure 6 illustrates the generational differences between the three systems in handling the INT8-optimized model. The i7-8665UE system had a minor performance gain with INT8, nearly matching the FP16 throughput of the i7-10710U system. The i7-10710U system nearly doubled its throughput after the optimization. The i7-1185G7E system nearly tripled its throughput compared to the FP16 experiment, illustrating the advances made by incorporating Intel DL Boost and VNNI in the latest hardware.

As with throughput, the i7-1185G7E system benefits the most from the INT8 optimization, with the largest latency reduction of the three systems, as shown in Figure 7.
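The comparisons in Figures 6 and 7 come down to two ratios. At a batch size of one with a single stream, latency is roughly the inverse of throughput, so nearly doubling throughput cuts latency roughly in half, and nearly tripling it removes about two-thirds of the latency. The helpers below make that explicit; plug in the values from the figures, since the numbers in the example are illustrative arithmetic rather than our measurements.

```python
def speedup(new_fps: float, baseline_fps: float) -> float:
    """How many times higher the new throughput is (e.g. INT8 vs FP16)."""
    return new_fps / baseline_fps


def latency_reduction_pct(new_ms: float, baseline_ms: float) -> float:
    """Percentage of per-sample latency removed relative to the baseline."""
    return 100.0 * (1.0 - new_ms / baseline_ms)


def expected_latency_ms(fps: float) -> float:
    """Latency implied by throughput when one sample is processed at a time."""
    return 1000.0 / fps


if __name__ == "__main__":
    # Illustrative numbers only: a 3x throughput gain at batch size 1.
    fp16_fps, int8_fps = 10.0, 30.0
    print(speedup(int8_fps, fp16_fps))  # 3.0
    print(round(latency_reduction_pct(expected_latency_ms(int8_fps),
                                      expected_latency_ms(fp16_fps)), 1))  # 66.7
```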

INT8 On the Edge

These experiments show why INT8 optimization on the latest hardware is an attractive choice for AI applications, particularly for solutions at the edge. Efficient INT8 optimization reduces the memory cost and computational complexity of inference tasks. Based on its single-image throughput, an application using the optimized model on the i7-1185G7E system could potentially process video at over 120 frames per second. This makes it possible to build applications that perform AI decision-making on complex data in near real time on an edge system. Real-time solutions are important for a variety of applications, from autonomous vehicles to smart security systems and beyond.
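Whether a model keeps up with a video stream is a question of per-frame budget. The sketch below assumes frames are processed one at a time, so per-frame inference time is 1000 / throughput milliseconds; `other_work_ms` is a hypothetical allowance for decoding and pre- and post-processing, which a real application also has to fit into each frame.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame to keep up with a video stream."""
    return 1000.0 / target_fps


def keeps_up(model_fps: float, target_fps: float, other_work_ms: float = 0.0) -> bool:
    """Check whether inference plus other per-frame work fits the frame budget.

    Assumes frames are processed one at a time, so per-frame inference
    time is 1000 / model_fps milliseconds.
    """
    inference_ms = 1000.0 / model_fps
    return inference_ms + other_work_ms <= frame_budget_ms(target_fps)


if __name__ == "__main__":
    # A model sustaining 120 FPS spends about 8.3 ms per frame, leaving about
    # 25 ms of a 30 FPS budget (33.3 ms) for the rest of the pipeline.
    print(round(frame_budget_ms(30), 1))         # 33.3
    print(keeps_up(120, 30, other_work_ms=20.0))  # True
    print(keeps_up(120, 60, other_work_ms=10.0))  # False
```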

Disclaimers

The testing for this article was conducted by Intel® employees using the Intel® DevCloud for the Edge platform on October 4, 2021. The Deep Learning Workbench and OpenVINO toolkit version was 2021.4.1. The operating system for the i7-1185G7E platform was Ubuntu 20.04; the i7-8665UE and i7-10710U systems used Ubuntu 18.04.

Comprehensive processor information is available at the following sites: Intel® Core™ i7-1185G7E, Intel® Core™ i7-10710U, and Intel® Core™ i7-8665UE.

Performance varies by use, configuration, and other factors. Learn more at www.Intel.com/PerformanceIndex

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See above for details.

No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

Some results may have been estimated or simulated.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
