You can now watch a recording of the recent webinar Optimize Transformer Models with Tools from Intel and Hugging Face*, presented by Julien Simon, Chief Evangelist at Hugging Face, and Ke Ding, Principal AI Engineer at Intel.

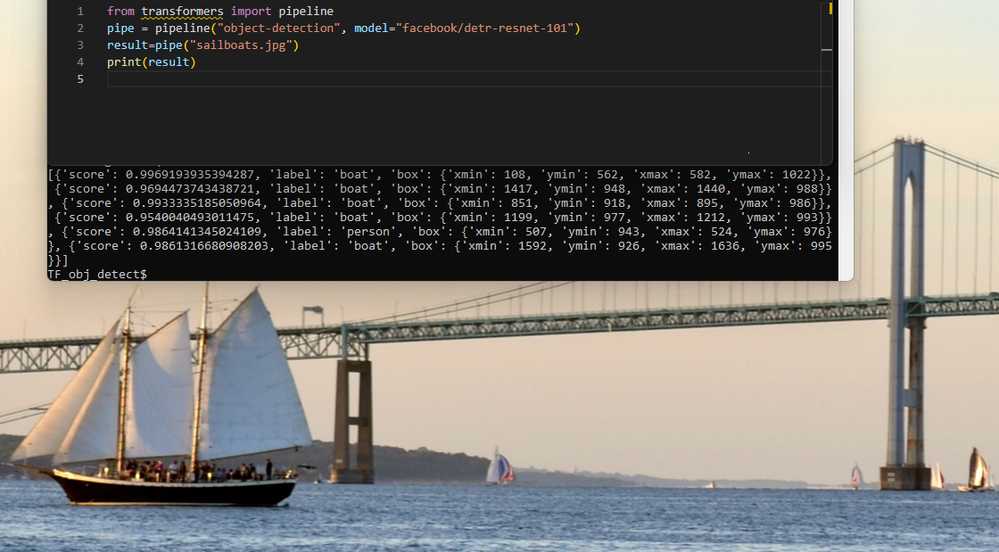

The Hugging Face Hub is a collaboration platform for machine learning, analogous to GitHub. It’s growing rapidly: as of the webinar airing (February 2023), it hosts over 130 thousand models and 20 thousand datasets, serves 100 thousand daily users, and sees over 1 million daily downloads. Its simplicity enables this rapid growth, since you can access its pipelines directly from your Python* scripts with a couple of additional lines of code.

The example above uses the Hugging Face Transformers library, which is best known for natural language processing (NLP) applications. However, as you can see, this is an object detection pipeline. According to Julien, even though NLP still represents 41% of transformer applications, transformers are being used for all kinds of pipelines, including recommendation systems and vision tasks. He cited a survey showing that over 60% of machine learning practitioners already use transformers.
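To give a sense of how little code such a pipeline needs, here is a minimal sketch. It is not the exact code shown in the webinar. It assumes the `transformers` package is installed along with whatever the chosen detection model depends on; `detect_objects` and `keep_confident` are hypothetical helper names, and the 0.9 threshold is an arbitrary choice.

```python
def detect_objects(image, model_name=None):
    """Run an object detection pipeline on an image path, URL, or PIL image.

    The import is deferred so keep_confident() works without the library.
    model_name=None lets the library fall back to its default checkpoint.
    """
    from transformers import pipeline  # assumption: transformers is installed

    detector = pipeline("object-detection", model=model_name)
    return detector(image)


def keep_confident(detections, min_score=0.9):
    """Keep detections whose confidence score is at least min_score.

    Each detection is expected to be a dict with "label", "score", and "box"
    keys, which is the shape the object detection pipeline returns.
    """
    return [d for d in detections if d["score"] >= min_score]
```

A typical call would be `keep_confident(detect_objects("street.jpg"))`, where `street.jpg` stands in for any local image file.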

He then moved on to the main topic of the webinar: the work that Hugging Face has been doing with Intel to democratize machine learning hardware acceleration.

Specifically for transformers, Optimum Intel interfaces Intel AI software with the Hugging Face Transformers library. The Intel software tools and frameworks take advantage of the latest Intel hardware features that can accelerate AI workloads. For instance, 4th Gen Intel® Xeon® Scalable processors add new Intel® Advanced Matrix Extensions (Intel® AMX) specifically for the matrix multiply-accumulate operations that tend to dominate AI workloads.
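If you want to check whether a given machine exposes Intel AMX before running these workloads, the following sketch looks for the AMX feature flags that Linux reports in `/proc/cpuinfo`. It is Linux-only and was not part of the webinar; `amx_features` and `read_cpuinfo` are hypothetical helper names.

```python
# Feature flags the Linux kernel reports for Intel AMX support.
AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8")


def amx_features(cpuinfo_text):
    """Return the AMX-related flags found in /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            present = set(value.split())
            return [flag for flag in AMX_FLAGS if flag in present]
    return []  # no "flags" line found (for example, not an x86 Linux host)


def read_cpuinfo(path="/proc/cpuinfo"):
    """Read the cpuinfo file, returning an empty string if it is unavailable."""
    try:
        with open(path, encoding="utf-8") as handle:
            return handle.read()
    except OSError:
        return ""
```

Calling `amx_features(read_cpuinfo())` returns an empty list on hardware or operating systems that do not report these flags.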

The bulk of the webinar walks through a variety of example workloads running on an AWS r7iz instance, which features 4th Gen Intel Xeon Scalable processors and DDR5 memory. For full end-to-end AI workloads, optimizing memory bandwidth and keeping compute on the CPU can often have significant benefits, as you will see across these use cases:

- Text classification inference, which compares the latency running on 4th Generation vs 3rd Generation Xeon processors, then adds software optimizations from Intel® Extension for PyTorch*. See the Hugging Face blog post for more details on this comparison.

- Stable Diffusion inference, which takes 5 seconds on a CPU! Access the demo on Hugging Face.

- Few-shot learning for text classification, which lets you fine-tune a model with just a few samples. Learn more with this tutorial.

- Distributed training, fine-tuning a question-answering transformer on 4 nodes. Julien also went into more depth on this example in a blog post.
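For the text classification comparison in the first use case, a latency measurement might look roughly like the sketch below. This is an assumption about the general approach, not the webinar's benchmark code: `median_latency_ms` and `optimize_for_cpu` are hypothetical helpers, the warmup and run counts are arbitrary, and the second function assumes `torch` and `intel_extension_for_pytorch` are installed.

```python
import statistics
import time


def median_latency_ms(fn, warmup=3, runs=20):
    """Call fn repeatedly and return the median wall-clock latency in ms."""
    for _ in range(warmup):  # warmup calls are not timed
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def optimize_for_cpu(model):
    """Apply Intel Extension for PyTorch optimizations to a PyTorch model.

    Imports are deferred so the timing helper works without these packages.
    Using bfloat16 here is an assumption; other data types are possible.
    """
    import torch
    import intel_extension_for_pytorch as ipex

    return ipex.optimize(model.eval(), dtype=torch.bfloat16)
```

You would time the same inference call before and after `optimize_for_cpu`, running the optimized model inside `torch.autocast(device_type="cpu", dtype=torch.bfloat16)`, and compare the two medians.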

These examples show that you can run many different types of AI workloads on CPUs with optimized software. Being able to run on a CPU, combined with the ability to start from pre-trained models with a couple of lines of Python, makes AI much more accessible.

If you would like to learn more about the collaboration between Intel and Hugging Face, each company has a landing page for this effort.
