Published December 14, 2021

Moshe Wasserblat is currently the research manager for Natural Language Processing (NLP) at Intel Labs.

Outperforming GPT-3 on few-shot Text-Classification while being 1600 times smaller

The GPT-n series shows very promising results for few-shot NLP classification tasks and keeps improving as model size increases (GPT-3 175B). However, those models require massive computational resources and are sensitive to the choice of prompts for training. In this work, we demonstrate Sentence Transformer Fine-tuning (SetFit), a simple and efficient alternative for few-shot text classification. The method is based on fine-tuning a Sentence Transformer with task-specific data and can easily be implemented with the sentence-transformers library. We validated SetFit by applying it to the RAFT (Real-World Few-Shot Text Classification) benchmark and show that it surprisingly outperforms models like GPT-3 while being 1600 times smaller, and while alleviating the need for both prompt training and unlabeled data. In addition, our method contributes to narrowing the gap between machine and human performance when provided with only 50 instances for training.

Introduction

Supervised neural networks (NNs) show remarkable performance in many tasks across various fields and domains, but they require a large amount of labeled training data. The data labeling process is labor-intensive and costly, and it hinders the deployment of AI systems in industry. Transfer learning (TL) in AI achieves substantial robustness in low-resource scenarios. TL in NLP (Ruder et al. 2019) involves two steps: pre-training a language model (PLM) on unlabeled data, and then fine-tuning it for a specific task. In standard fine-tuning setups such as BERT (Devlin et al. 2019), a generic classification head is placed on top of the PLM and uses its output representations to generate the final predictions. Recent advances in LM fine-tuning based on the text-to-text paradigm[1] achieved SOTA few-shot performance and include in-context learning and task-specific prompt learning.

In-context learning models directly generate the answers based on input-to-output training examples provided as prompts without changing their parameters. GPT-3 (Brown et al., 2020) utilized in-context learning to demonstrate superior few-shot capabilities in many NLP tasks. Its major disadvantages are that it requires a huge model, relies only on the pre-trained knowledge, and necessitates extensive prompt engineering.

Task specific prompt learning converts the downstream task into a masked language model problem, whereby upon provision of a prompt, defined by a task specific template, the model generates textual responses which are predictions of the masked tokens. The pioneering Pattern-Exploiting Training (PET) approach (Schick & Schutze 2021a,b) incorporates such patterns and is demonstrated to outperform GPT-3 in few-shot learning using models that are three orders of magnitude smaller. This setting, however, has several limitations: it requires multi-step training including adapting and an ensemble of several PLMs; it utilizes task-specific unlabeled data; and it necessitates hand-crafting of prompts. ADAPET (Tam et al. 2021) outperforms PET on few-shot SuperGLUE without any unlabeled data by leveraging more supervision to train the model. LM-BFF (Gao et al. 2021) provides improved few-shot fine-tuning performance by combining prompt-based fine tuning (FT) with automated prompt generation, and dynamically selecting task examples as part of the input context.

Recently, several benchmarks have emerged that target few-shot learning in NLP, such as RAFT (Alex et al. 2021), FLEX (Bragg et al. 2021), and CLUES (Mukherjee et al. 2021). RAFT is a real-world few-shot text-classification benchmark, which provides only 50 samples for training and no validation sets. It includes 11 practical real-world tasks such as medical case report analysis and hate speech detection, where better performance translates directly into higher business value for organizations. The benchmark includes results produced by GPT-3 that became a standard baseline for comparisons. In a related paper (Schick & Schutze 2021c), it was shown that prompt-based learners like PET excel on the RAFT benchmark and perform close to human level for 7 out of the 11 tasks without using any validation data.

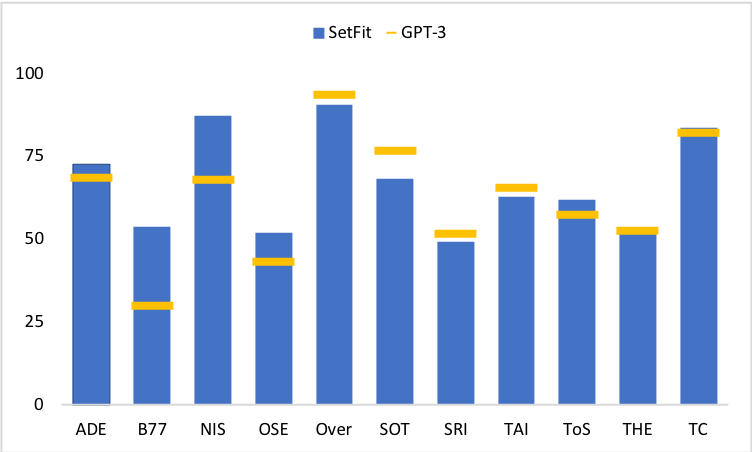

In this blog, we present SetFit, an adapted Sentence Transformer model that is fine-tuned with a small amount of data to solve real-world text classification challenges such as RAFT. That said, validating SetFit as a general solution for text classification was outside the scope of this work. The blog is organized as follows: we start with background on Sentence Transformers and then provide a detailed description of the adaptation method. Since the targeted benchmark lacks any validation data, we had to search for the best set of parameters based on an external related task. We chose SST-2 as our validation dataset and conducted an evaluation to choose the best parameters for the RAFT experiment. The next step was to fine-tune SetFit for the RAFT tasks and run prediction on RAFT’s test data while exploiting several heuristics and best practices. Our performance per task is reported on RAFT’s leaderboard website. Upon publication[2], SetFit ranked 2nd, immediately after the human baseline, and outperformed GPT-3 in 7 out of 11 tasks even though it is 1600 times smaller (see Figure 1).

Method

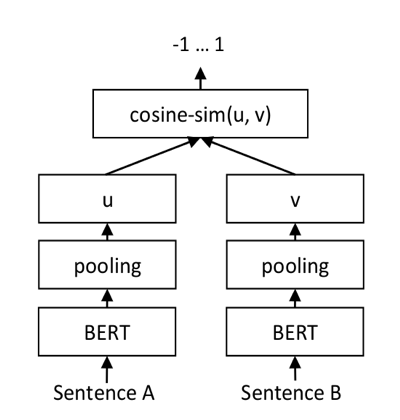

Sentence Transformer (ST) is a very popular approach deployed for semantic search, semantic similarity and clustering. The idea is to encode a unique vector representation of a sentence based on its semantic signature. The representation is built during contrastive training by adapting a transformer model in a Siamese architecture (Reimers & Gurevych 2019), as shown in Figure 2, aiming to minimize the distance between semantically similar sentences and maximize the distance between sentences that are semantically distant.

Figure 2: Siamese BERT architecture

Sentence Transformers yield very effective representations when applied to large scale comparisons between sentence-pairs, which is a very common scenario in information retrieval tasks. STs can also be effective for other popular NLP tasks such as sentiment analysis and named entity recognition because they convert text into a structure of numeric values which is then very easy to scale, manipulate, and process with off-the-shelf Machine Learning (ML) toolkits and popular NLP production frameworks (e.g. SparkNLP[3]).

The sentence-transformers library[4] is a model hub for ST representations, including an abstract API and code examples for training, fine-tuning and inferencing ST in production.

ST for Text Classification

The idea of using ST for text classification is not new and includes an encoding step and a classification step (e.g. Logistic Regression). ST outperforms other embedding representations but is not comparable to cross-encoder (e.g. BERT) classification (Reimers & Gurevych 2019). Surprisingly, we did not find any work that performed end-to-end ST fine-tuning for text classification in a Siamese manner. Perhaps the reason is that STs were pre-trained on semantic similarity tasks (i.e. sentence-pairs convey the same/distant meaning) and it is intuitive to further adapt them for the same objective. In addition, it is only recently that new ST models with a significant performance boost were released as part of sentence-transformers (e.g. MPNet-based models trained on large datasets) and elsewhere (e.g. SimCSE), and they are probably still being explored for non-similarity tasks.

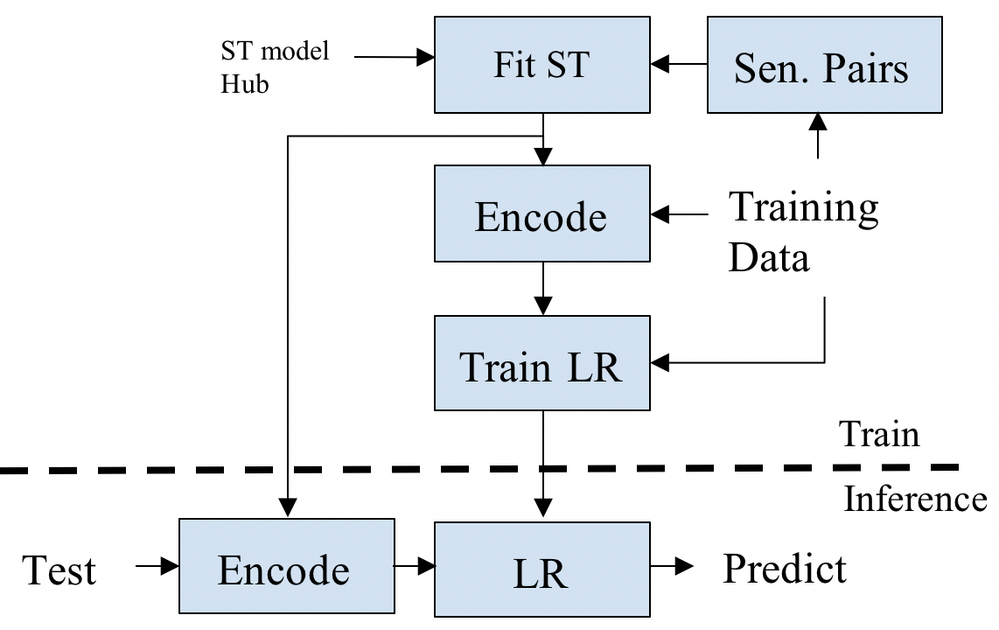

SetFit: Sentence Transformer Fine-Tuning

Figure 3 is a block diagram of SetFit’s training and inference phases. An interactive code example can be found here[5].

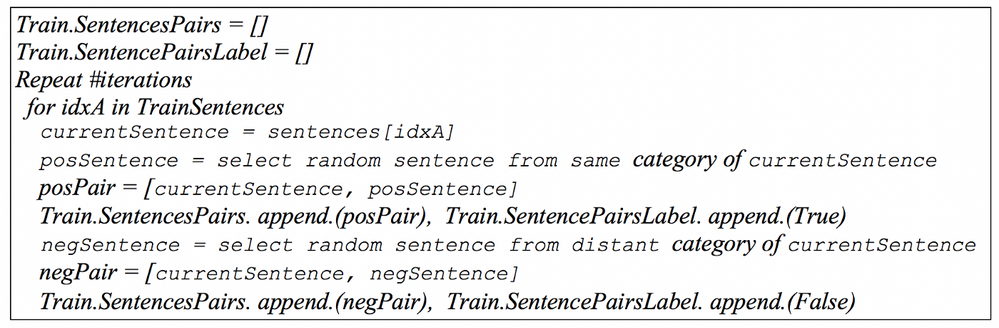

The first step of the training phase is choosing an ST model from the sentence-transformers[6] model hub. The following steps are setting the training class, populating the train dataloader and performing fine-tuning. In order to better tackle the limited amount of labeled training data in the few-shot scenario, we adopt a contrastive training approach that is often used for image similarity (Koch et al. 2015): the training data comprises positive and negative sentence-pairs, where positive pairs are two sentences chosen randomly from the same class and negative pairs are two sentences chosen randomly from different classes. Pseudo-code for sentence-pairs generation is shown in Figure 4. In each sentence-pairs generation iteration we generate 2xN training pairs, where N is the total number of training samples per task. The generated sentence pairs are utilized for FineTuning (Fit) the ST model.
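The pair generation described above can be sketched in a few lines of plain Python. This is an illustrative sketch and not a transcription of the pseudo-code in Figure 4: the function name `generate_sentence_pairs`, the tuple layout and the toy sentences are assumptions, and the sketch assumes at least two classes are present.

```python
import random

def generate_sentence_pairs(sentences, labels, rng):
    """Return 2*N (sentence_a, sentence_b, target) triples for N sentences.

    Each sentence gets one positive partner drawn from its own class
    (target 1.0) and one negative partner drawn from another class
    (target 0.0). A sentence may be paired with itself as a positive.
    """
    pairs = []
    for sentence, label in zip(sentences, labels):
        same = [s for s, l in zip(sentences, labels) if l == label]
        diff = [s for s, l in zip(sentences, labels) if l != label]
        pairs.append((sentence, rng.choice(same), 1.0))
        pairs.append((sentence, rng.choice(diff), 0.0))
    return pairs

# Toy data (hypothetical): N = 4 sentences, two classes.
sentences = ["great movie", "loved it", "terrible plot", "boring film"]
labels = [1, 1, 0, 0]

rng = random.Random(0)
train_pairs = []
for _ in range(5):  # five sentence-pairs generation iterations
    train_pairs.extend(generate_sentence_pairs(sentences, labels, rng))

print(len(train_pairs))  # 2 * 4 sentences * 5 iterations
```

Because partners are re-drawn at random in every iteration, repeating the generation step yields different pairs each time, which is how a handful of labeled sentences can be expanded into a larger contrastive training set.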

At the end of the FineTuning step, an adapted ST is produced. Next, the training sentences are encoded using the adapted ST, and the encoded data is used to train a classifier; we use Logistic Regression (LR) for simplicity. In the inference phase, each test sentence is encoded with the adapted ST, and then its category is predicted by the LR model.
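The encode-then-classify flow can be illustrated with a self-contained toy example. Everything here is a stand-in chosen so the sketch runs without external libraries: `toy_encode` is a hypothetical keyword counter that takes the place of the adapted ST, and the small gradient-descent routine takes the place of an off-the-shelf LR implementation (for instance, scikit-learn's `LogisticRegression`).

```python
import math

POSITIVE = {"good", "great"}
NEGATIVE = {"bad", "awful"}

def toy_encode(texts):
    """Hypothetical stand-in for the adapted ST: 2-D keyword counts."""
    vectors = []
    for text in texts:
        words = text.lower().split()
        vectors.append([
            float(sum(w in POSITIVE for w in words)),
            float(sum(w in NEGATIVE for w in words)),
        ])
    return vectors

def train_logistic_regression(X, y, lr=0.5, epochs=200):
    """Binary logistic regression trained by batch gradient descent."""
    dim, n = len(X[0]), len(X)
    weights, bias = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for xi, yi in zip(X, y):
            z = sum(w * x for w, x in zip(weights, xi)) + bias
            err = 1.0 / (1.0 + math.exp(-z)) - yi
            grad_w = [g + err * x for g, x in zip(grad_w, xi)]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def predict(weights, bias, X):
    """Assign class 1 when the linear score is positive, else class 0."""
    return [1 if sum(w * x for w, x in zip(weights, xi)) + bias > 0 else 0
            for xi in X]

# Training phase: encode the labeled sentences, then fit the classifier.
train_texts = ["a great film", "good acting", "a bad plot", "awful pacing"]
train_labels = [1, 1, 0, 0]
weights, bias = train_logistic_regression(toy_encode(train_texts), train_labels)

# Inference phase: encode unseen sentences with the same encoder, then predict.
predictions = predict(weights, bias, toy_encode(["great and good", "bad and awful"]))
print(predictions)
```

The point of the sketch is the separation of concerns: the encoder and the classifier are trained and applied as two independent stages, so either one can be swapped without touching the other.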

Figure 3: SetFit’s diagram

Figure 4: Sentence-pairs generation pseudo-code

Experiments

RAFT (Alex et al. 2021) is a few-shot classification benchmark designed to match real-world scenarios by restricting the number of training samples to 50 labeled examples per task and not providing validation sets. The latter is difficult for practitioners because, without validation data, it is challenging to find the best set of training hyperparameters. The prominent characteristics of SetFit that impact its performance include:

- The type of ST: there are many types of STs in the sentence-transformers model hub, and the question is how to choose the best ST for a given task.

- Input data selection: in some of the RAFT tasks, several data fields are provided as input, for example title, abstract, author name, ID, date, etc. This gives rise to the question: which data fields should be used as input, and how should they be combined?

- Choice of hyperparameters: how does one choose the best fine-tuning hyperparameter set (e.g. #epochs, number of sentence-pairs generation iterations)?

Eventually, we applied simple heuristics and common sense to choose the ST model, input data fields, hyperparameters and sequence length. In the following sections we describe our best practice for choosing those parameters.

ST models

The sentence-transformers library contains a wide range of sentence embedding models from which to choose. The model names correspond to the type of transformer they are based on, and the training dataset. For example, the ‘paraphrase-mpnet-base-v2’ model was trained with the MPNet model using the paraphrase similarity dataset.

There are no explicit guidelines for how to choose the best model or the training dataset for a downstream NLP task, although some rules of thumb exist given a similarity task. Since RAFT is a text classification dataset, we hypothesized that it would benefit from an embedding model that was trained to detect semantic similarity between sentence-pairs, because that somewhat resembles similarity between classes in a text classification setup. We therefore considered embedding models that were trained using “all” or “paraphrase” training datasets and were designed as general-purpose models, and we excluded embedding models that were trained using NLI or Q&A datasets which are very distinct from text classification tasks.

We targeted only models with sizes that are comparable to BERT-110M. We also tested distilled versions of STs, such as TinyBERT-80M and MiniLM-80M, but found that their performance was not comparable with the original teacher model (see Annex A).
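As a rough check on the "1600 times smaller" figure quoted earlier, the ratio can be worked out from the parameter counts mentioned in this post. The 110M value is an assumption for a BERT-base-sized ST; the exact parameter count of a given model may differ slightly.

```python
GPT3_PARAMS = 175e9  # GPT-3 175B, as cited in this post
ST_PARAMS = 110e6    # assumption: a BERT-base-sized ST (~110M parameters)

size_ratio = GPT3_PARAMS / ST_PARAMS
print(f"GPT-3 is roughly {size_ratio:.0f} times larger")
```

The ratio lands just under 1600, which is consistent with the rounded figure used in the text.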

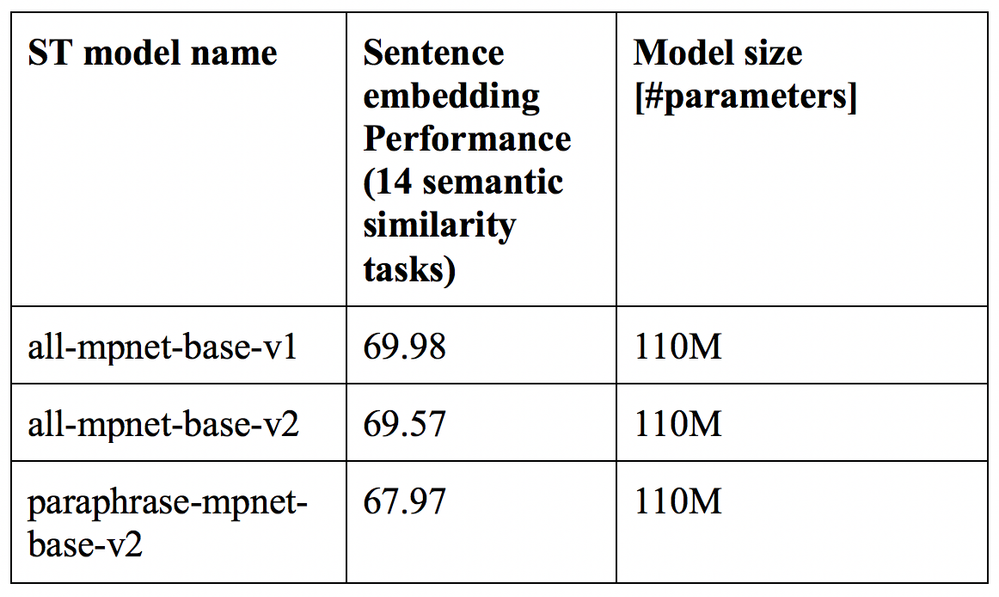

Taking all the above into consideration, we selected the following three candidate models for the validation step. These models were ranked highest in sentence-transformers’ sentence embedding performance, as seen in Table 1.

Using SST-2 as a validation set

We validated SetFit on the SST-2 dataset, which is part of the widely used GLUE benchmark. SST-2 was heuristically chosen because it shares several qualities with the majority of the RAFT benchmark tasks such as:

● Relatively short sequence length (<64)

● Rich in semantic and syntactic structure, as suggested by the high accuracy that Transformers achieve on it compared with other classifiers

● Represents real life scenarios

The choice of SST-2 is not optimal for RAFT but can serve as an alternative validation set, given that RAFT provides neither validation data nor gold labels for the test data.

The few-shot setup is simulated by randomly selecting a small number of training samples (16 or 50) per category from the full SST-2 training data, for adapting and training the ST. The training process is repeated five times using a different random seed each time. The final accuracy is produced by averaging the inference results across the five iterations.
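The sampling-and-averaging protocol above could look roughly like the following sketch. The helper names are hypothetical, and `train_and_score` is a placeholder callback that would, in a real run, fine-tune the ST on the sampled subset and return test accuracy; here a dummy scorer is used so the example runs on its own.

```python
import random
from collections import defaultdict
from statistics import mean

def sample_few_shot(texts, labels, k, seed):
    """Pick k examples per class, reproducibly for a given seed."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for text, label in zip(texts, labels):
        by_class[label].append(text)
    subset = []
    for label in sorted(by_class):
        for text in rng.sample(by_class[label], k):
            subset.append((text, label))
    return subset

def average_over_seeds(texts, labels, k, train_and_score, seeds=(0, 1, 2, 3, 4)):
    """Run the train_and_score callback once per seed and average the scores."""
    scores = [train_and_score(sample_few_shot(texts, labels, k, s)) for s in seeds]
    return mean(scores)

# Toy stand-in for the full SST-2 training data (hypothetical contents).
texts = [f"positive review {i}" for i in range(100)] + \
        [f"negative review {i}" for i in range(100)]
labels = [1] * 100 + [0] * 100

subset = sample_few_shot(texts, labels, k=16, seed=0)
# Dummy scorer: a real one would train SetFit on the subset and evaluate it.
average = average_over_seeds(texts, labels, 16, train_and_score=lambda s: len(s) / 32)
print(len(subset), average)
```

Fixing the seed per run keeps each of the five subsets reproducible, while averaging smooths out the variance that comes from which few examples happen to be drawn.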

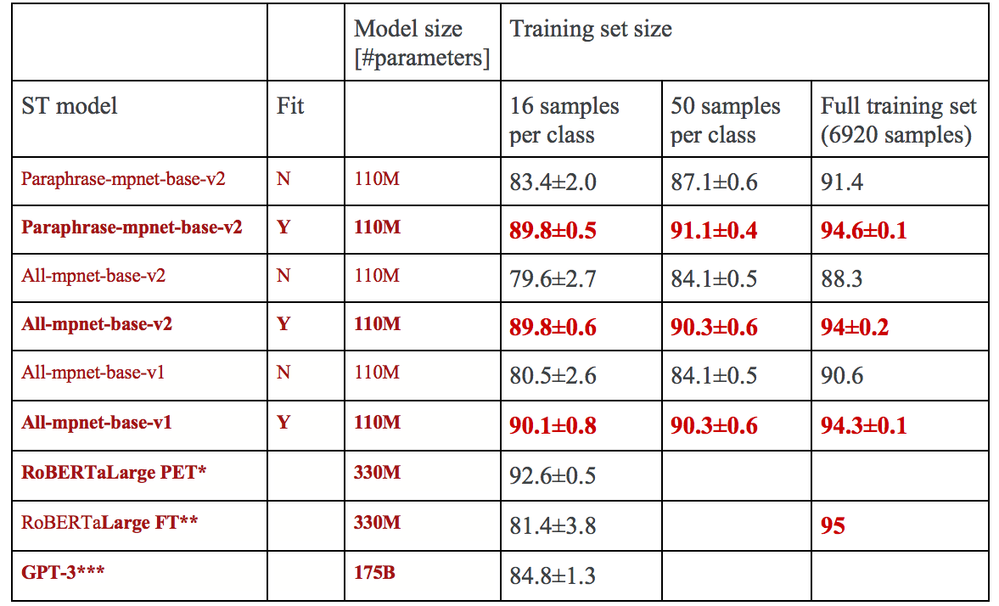

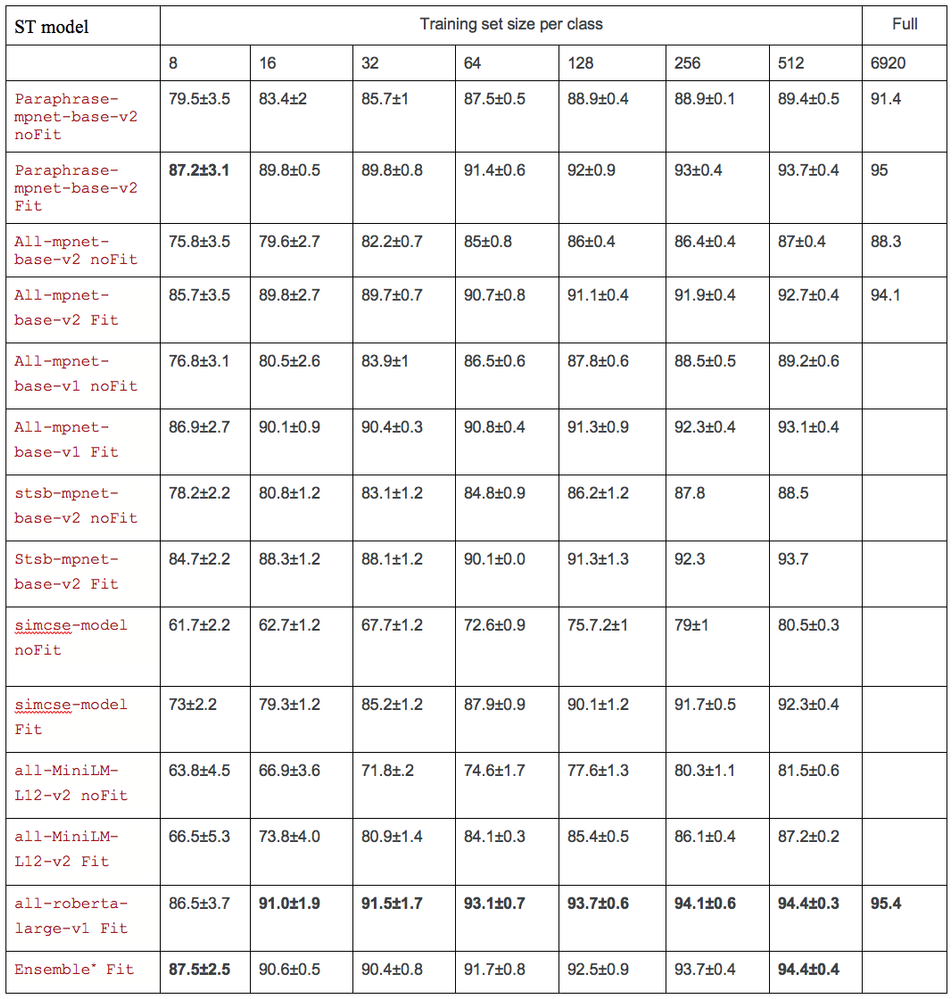

ST Model selection

Table 2 shows the performance of the three candidate models for few-shot SST-2 with and without FineTuning (Fit) of the ST model, compared to the performance of PET, standard fine-tuning using RoBERTa, and GPT-3, as reported by Gao et al. (2021).

Table 2: Few-shot and full training set performance of different ST models vs. baselines on the SST-2 dataset. *Task specific prompt. **Standard fine-tuning. *** In-context fine-tuning. As reported by (Gao et al. 2021).

As shown in Table 2, the three candidate models are quite comparable, with slightly better performance evidenced by the “paraphrase-mpnet-base-v2” model with 50 training samples. We therefore chose this model for evaluating our method’s performance on the RAFT benchmark.

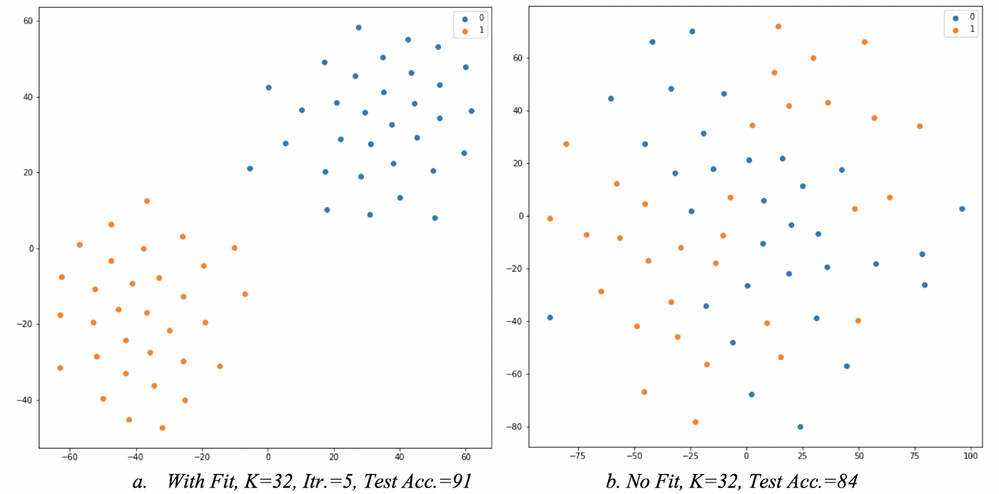

Figure 5: 2D representations of the training vectors of a binary classification task at the output of paraphrase-mpnet-base-v2 ST after fine tuning (left) and before fine tuning (right). K represents the number of training samples per class. The blue dots represent the class ‘0’, whereas the orange dots represent the class ‘1’.

A small number of epochs, together with five iterations of sentence-pairs generation and ST fine-tuning, was sufficient to reach convergence and resulted in good separability in the 2D vector space (built using t-SNE). Figure 5 shows the 2D projection of the training data, with and without ST fine-tuning. As can be seen, the fine-tuning step significantly improved the separability of the few-shot training data, and this resulted in higher accuracy on the SST-2 test set compared to the same model without fine-tuning.

Hyperparameters selection

Following are the parameters that were selected based on the SST-2 dataset experiments. We used the same set of parameters for all of the RAFT benchmark tests, except for task 4 (see Table 3) for which we increased the number of iterations to 10 in order to achieve acceptable separation.

● ST model = ‘paraphrase-mpnet-base-v2’

● train_loss = CosineSimilarityLoss

● #epochs = 3

● number of sentence-pairs generation iterations = 5

● batch size = 16

● warmup steps = 10
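To make the settings above concrete, the sketch below collects them in a dictionary, spells out what a cosine-similarity loss computes for a single pair (the squared error between the cosine similarity of the two embeddings and the pair target), and shows how they might be passed to the `fit` interface of sentence-transformers as it existed around the time of writing. The dictionary keys and the `fine_tune` helper are illustrative names, and `fine_tune` is defined but not run here because it downloads a model.

```python
import math

# Values from the list above; the key names are illustrative.
HPARAMS = {
    "model_name": "paraphrase-mpnet-base-v2",
    "epochs": 3,
    "pair_iterations": 5,
    "batch_size": 16,
    "warmup_steps": 10,
}

def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def cosine_similarity_loss(u, v, target):
    """Squared error between cos(u, v) and the pair target (1.0 or 0.0)."""
    return (cosine_similarity(u, v) - target) ** 2

def fine_tune(pairs, hparams=HPARAMS):
    """Fine-tune an ST on (sentence_a, sentence_b, target) triples.

    Imports are local so this file loads without the libraries installed.
    """
    from sentence_transformers import InputExample, SentenceTransformer, losses
    from torch.utils.data import DataLoader

    model = SentenceTransformer(hparams["model_name"])
    examples = [InputExample(texts=[a, b], label=t) for a, b, t in pairs]
    loader = DataLoader(examples, shuffle=True, batch_size=hparams["batch_size"])
    model.fit(
        train_objectives=[(loader, losses.CosineSimilarityLoss(model))],
        epochs=hparams["epochs"],
        warmup_steps=hparams["warmup_steps"],
    )
    return model

# Identical directions with a positive target incur no loss;
# orthogonal directions with a positive target incur the full penalty.
print(cosine_similarity_loss([1.0, 0.0], [1.0, 0.0], 1.0))
print(cosine_similarity_loss([1.0, 0.0], [0.0, 1.0], 1.0))
```

Under this loss, positive pairs are pulled toward a cosine similarity of 1 and negative pairs toward 0, which matches the separation effect visible in Figure 5.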

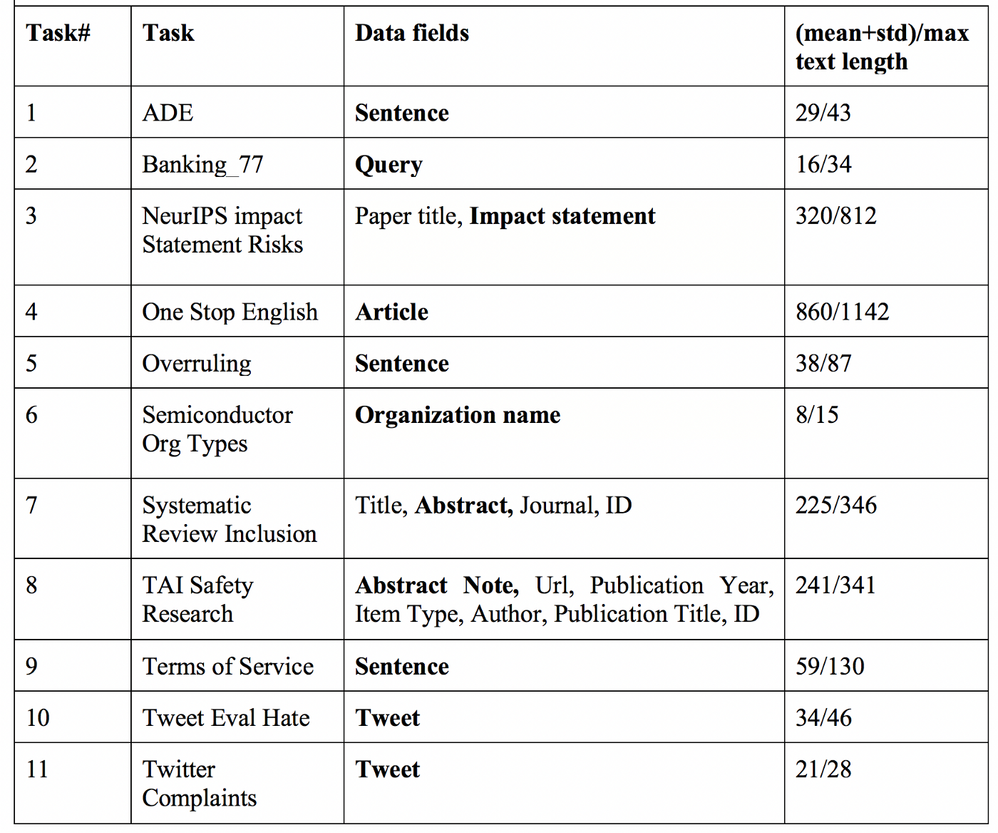

Data fields selection

Tasks 3, 7 and 8 in the RAFT benchmark contain multiple data fields as additional metadata (e.g. date, personal name and title). In those cases, we made sure to keep only one dominant textual feature that intuitively includes sufficient context for correctly classifying the sentence. Table 3 shows the available data fields per task, with the data fields that were selected (shown in bold), as well as the (mean+std)/max text length.

Sequence length selection

As seen in Table 3, tasks 3, 4, 7 and 8 include long text sequences (>256 tokens), which may cause unexpected results (SetFit was validated only on SST-2) and memory allocation errors during fine-tuning (depending on your hardware setup). In those cases, we limited the input text length to 128 tokens, as follows: for tasks 4, 7 and 8 we selected the first 128 tokens, but for task 3 we selected the last 128 tokens because we noticed that the relevant context for correct classification usually resides in the last section of the text input.
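The two truncation strategies can be expressed as a small helper. This is a sketch operating on an already tokenized list; the function name and the `keep` argument are assumptions, and in practice the tokens would come from the ST model's own tokenizer rather than the placeholder strings used here.

```python
def truncate_tokens(tokens, max_len=128, keep="first"):
    """Keep the first or the last max_len tokens of a sequence."""
    if keep == "first":
        return tokens[:max_len]
    if keep == "last":
        return tokens[-max_len:]
    raise ValueError(f"keep must be 'first' or 'last', got {keep!r}")

# Placeholder sequence of 300 tokens (hypothetical input).
tokens = [f"tok{i}" for i in range(300)]

head = truncate_tokens(tokens, keep="first")  # strategy used for tasks 4, 7 and 8
tail = truncate_tokens(tokens, keep="last")   # strategy used for task 3
print(head[0], head[-1], tail[0], tail[-1])
```

Sequences that are already shorter than the limit pass through unchanged with either strategy.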

Number of sentence-pairs generation iterations

An analysis of the SST-2 dataset experiments shows that five sentence-pairs generation iterations and ST fine-tuning were sufficient for achieving good separation between the 2D projections of the vectors representing training samples from different classes (see Figure 5). Accordingly, we used five iterations for all of the RAFT tasks, except for task 4 for which we increased the number of iterations to 10 in order to achieve acceptable separation.
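For a sense of scale, the amount of contrastive training data implied by these settings can be worked out directly. The sketch assumes that the pairs from all generation iterations are pooled into one training set and that the final partial batch is kept; the function name is hypothetical.

```python
import math

def training_schedule(n_samples, pair_iterations, batch_size, epochs):
    """Return (total pairs, steps per epoch, total optimizer steps)."""
    pairs = 2 * n_samples * pair_iterations  # 2xN pairs per iteration
    steps_per_epoch = math.ceil(pairs / batch_size)
    return pairs, steps_per_epoch, steps_per_epoch * epochs

# RAFT provides 50 labeled samples per task; batch size 16 and 3 epochs as above.
default_schedule = training_schedule(50, 5, 16, 3)
task4_schedule = training_schedule(50, 10, 16, 3)
print(default_schedule, task4_schedule)
```

Under these assumptions, five iterations turn 50 labeled samples into 500 training pairs, and doubling the iterations for task 4 doubles that to 1000.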

Other best practices

No pre-processing was performed on the textual inputs. In task 2 (Banking_77) of the RAFT dataset, since the labels were quite sparsely represented (only 36 of the 77 intent categories include at least one sample in the training data), we added the name of each label as an input sample for its category. This is a trivial addition, since in text classification tasks the names of the classes (or their descriptions) are given.
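The label-name trick amounts to appending one extra training sample per class. The sketch below shows the idea; the helper name is an assumption, and the three intent names and the example query are illustrative rather than taken from the RAFT training data.

```python
def add_label_names(texts, labels, label_names):
    """Append each class name as one extra training sample of that class."""
    aug_texts = list(texts)
    aug_labels = list(labels)
    for label_id, name in label_names.items():
        aug_texts.append(name)
        aug_labels.append(label_id)
    return aug_texts, aug_labels

# Illustrative subset of intents; only class 0 has a labeled example.
label_names = {0: "card_arrival", 1: "exchange_rate", 2: "lost_or_stolen_card"}
texts = ["When will my new card get here?"]
labels = [0]

aug_texts, aug_labels = add_label_names(texts, labels, label_names)
print(len(aug_texts), sorted(set(aug_labels)))
```

After augmentation every class has at least one sample, so both the pair generation step and the classifier see all categories even when the original few-shot data covers only some of them.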

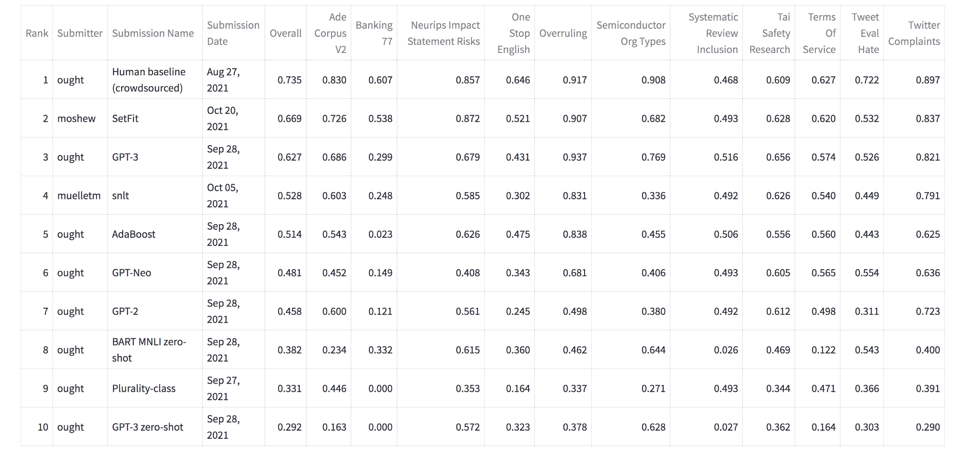

Results

Table 4 shows a snapshot (Nov. 2021) of the RAFT leaderboard. SetFit received a total score of 0.669, ranked 2nd after the human baseline, and topped GPT-3 overall by 0.042 points. Surprisingly, SetFit outperforms GPT-3 in 7 of the 11 tasks of the benchmark, and in the NIS task SetFit surpasses even the human baseline.

Table 4: Snapshot of the RAFT leaderboard (Nov. 2021)

Discussion

Following are some thoughts based on the results of applying SetFit to the RAFT benchmark:

● We expect that an ensemble of ST models, and ST models that are based on larger models such as RoBERTa large, will eventually achieve better overall performance on RAFT (as shown for SST-2 in Annex A).

● Limiting the text input length to the last 128 tokens for the NIS task seems like good practice and has a positive impact on performance.

● The addition of the category names to the Banking_77 task’s training data seems to be very effective, probably leading to a large accuracy edge (more than 0.2 points) over GPT-3.

● In an interesting anomaly, the table shows that, on the one hand, SetFit outperforms GPT-3 by a large margin in the NIS and OSE tasks and even outperforms the human baseline in the case of the NIS task. On the other hand, GPT-3 outperforms SetFit by a large margin in the SOT task; this may be related to the intuition that the SOT task requires more factual knowledge compared to other tasks.

● The significant accuracy/efficiency improvement achieved by decoupling the general problem of text classification into the two stages of sentence embedding generation and prediction may seem surprising at first, but it is not new for SOTA NLP tasks. It has been shown that retriever and reader architectures for Open Q&A (e.g. RAG by Lewis et al.) enable significantly smaller models to achieve on-par results with larger models such as T5-11B. Overall, the separation in SetFit and RAG leads to higher robustness (new data/category does not require re-training), explainability and scalability (higher level of parallelization).

● The results produced by SetFit for the SST-2 dataset (presented in Annex A), for example an accuracy of 95.4 using the full training set with the ‘all-roberta-large-v1’ model, would rank SetFit in the top 20 on the SST-2 leaderboard[7]. These high-quality results run counter to the common assumption that sentence representations convey only shallow information and therefore lead to degraded accuracy.

Conclusions and future work

We presented SetFit, an efficient approach to few-shot text classification that builds on the popular sentence-transformers library and combines pre-trained ST representations with fine-tuning. Experiments on the SST-2 dataset and submission results on the RAFT benchmark demonstrated the effectiveness of SetFit compared with models that are two to three orders of magnitude larger, such as GPT-3 and GPT-Neo, as well as the importance of contrastive training to further improve STs on downstream NLP tasks. In future work we intend to explore how to enable unsupervised learning with SetFit and how to expand it to other tasks, notably two-sentence text classification tasks such as NLI and Q&A.

Acknowledgments

We are grateful to Oren Pereg (Intel Labs), Nils Reimers (HuggingFace) and Luke Bates (UKP, TU Darmstadt) for their fruitful comments and corrections.

[1] https://ruder.io/recent-advances-lm-fine-tuning/

[2] More recently, PET entered the RAFT leaderboard and ranked 1st after the human baseline. PET is based on the ALBERT model (xxlarge, v2, 235M parameters), which is about twice as large as SetFit.

[3] https://nlp.johnsnowlabs.com/

[5] https://colab.research.google.com/github/MosheWasserb/SetFit/blob/main/SetFit_SST_2.ipynb

[6] sentence-transformers includes a fine-tuning option for ST object by default.

[7] https://paperswithcode.com/sota/sentiment-analysis-on-sst-2-binary

References

Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel Ziegler, Jeffrey Wu, Clemens Winter, Chris Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. Language models are few-shot learners. NeurIPS 2020.

Sebastian Ruder, Matthew E. Peters, Swabha Swayamdipta, Thomas Wolf. Transfer Learning in Natural Language Processing. NAACL 2019.

Jacob Devlin, Ming-Wei Chang, Kenton Lee, Kristina Toutanova. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. NAACL 2019.

Timo Schick and Hinrich Schutze. 2021a. Exploiting cloze questions for few-shot text classification and natural language inference. EACL 2021.

Timo Schick and Hinrich Schutze. 2021b. It’s not just size that matters: Small language models are also few-shot learners. NAACL 2021.

Derek Tam, Rakesh R Menon, Mohit Bansal, Shashank Srivastava, Colin Raffel. Improving and Simplifying Pattern Exploiting Training. EMNLP 2021.

Timo Schick and Hinrich Schutze. 2021c. True Few-Shot Learning with Prompts - A Real-World Perspective.

Nils Reimers and Iryna Gurevych. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. EMNLP 2019.

Gregory R. Koch, Richard Zemel, Ruslan Salakhutdinov. Siamese Neural Networks for One-Shot Image Recognition. 2015.

Tianyu Gao, Adam Fisch, Danqi Chen. Making Pre-trained Language Models Better Few-shot Learners. ACL 2021.

Neel Alex, Eli Lifland, Lewis Tunstall, Abhishek Thakur, Pegah Maham, C. Jess Riedel, Emmie Hine, Carolyn Ashurst, Paul Sedille, Alexis Carlier, Michael Noetel, Andreas Stuhlmüller. RAFT: A Real-World Few-Shot Text Classification Benchmark. NeurIPS 2021, Track on Datasets and Benchmarks.

Jonathan Bragg, Arman Cohan, Kyle Lo, Iz Beltagy. FLEX: Unifying Evaluation for Few-Shot NLP. NeurIPS 2021.

Subhabrata Mukherjee, Xiaodong Liu, Guoqing Zheng, Saghar Hosseini, Hao Cheng, Greg Yang, Christopher Meek, Ahmed Hassan Awadallah, Jianfeng Gao. CLUES: Few-Shot Learning Evaluation in Natural Language Understanding. NeurIPS 2021, Track on Datasets and Benchmarks.

Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, Sebastian Riedel, Douwe Kiela. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. NeurIPS 2020.

Annex A

Figure 6: SST-2 Evaluation on sentence-transformer models. *Ensemble of 3 models: Paraphrase-mpnet-base-v2, All-mpnet-base-v1 and Stsb-mpnet-base-v2
