Introduction to Distributed Communication

A Walk through the History of Distributed and Parallel Computing

Larry Stewart

Middleware Software Engineer, Intel Corp.

It Begins!

Welcome! This is the first post in what we hope will be an informative and useful series about distributed computing and the communications libraries that make it possible for developers and subject matter experts to write effective parallel and distributed applications.

I am Larry Stewart and I’ve been doing distributed computing since at least 1977. I have had a bit of a varied career, starting at Xerox* PARC, designing multiprocessor workstations at Digital Equipment Corporation*, developing system and communications software for supercomputers at SiCortex*, and independent consulting on distributed FPGAs doing molecular modeling.

Last year, I joined Intel as part of the Parallel Runtimes Engineering Team, and it has been a lot of fun.

In my current work I get to play with parts of the upcoming Argonne Labs* Aurora* exascale supercomputer as part of the collaboration between Intel and Argonne Labs on the frontier of high performance computing.

Why Distributed Computing and Distributed Communication?

Everyone knows about Moore’s law. We have seen computers get faster and cheaper for the last 50 years, and the race for ever higher compute density and compute power continues. However, while computers have gotten faster and cheaper, our appetite for computing has grown even faster.

- Today we have fairly reliable ten-day weather forecasts.

- We have fingertip access to all the knowledge of the Internet.

- We can design airplanes, spacecraft, bridges, and nuclear reactors to the point that we’re confident they will work right when built.

- We can optimize wind turbine blades, race yacht sails, and keels.

- We can even determine where to drill for oil, water, or rare minerals.

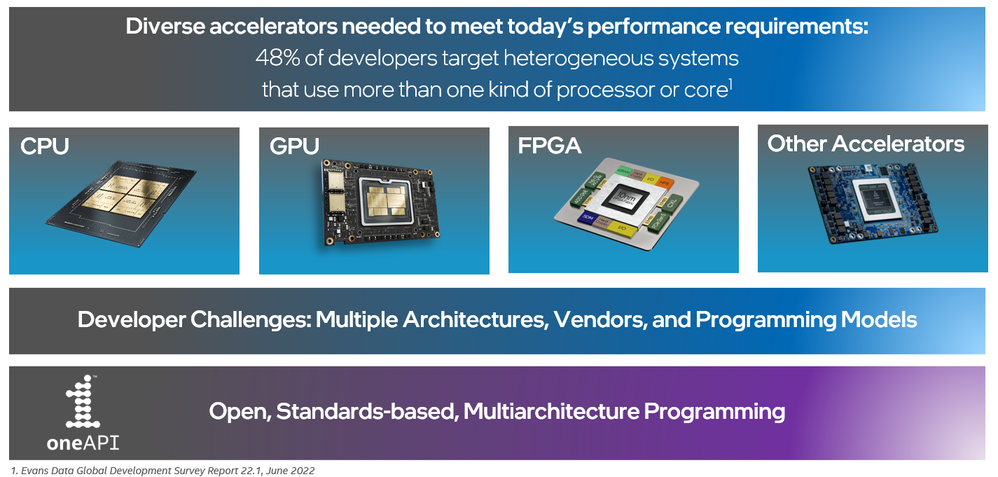

These sorts of problems are not beyond the ability of a single processor, but solving them serially would take far too long. So we’ve figured out ways to split up the work by vectorizing, parallelizing, and distributing computation. We have also learned how to enlist the aid of specialized engines like Graphics Processing Units (GPUs) and Field Programmable Gate Arrays (FPGAs).

My colleagues and I are going to provide a technical overview of the ecosystem of tools. We will introduce techniques that make distributed computing work, hopefully in a way accessible to a wide community.

The oneAPI open-standards-based industry initiative and the associated Intel® oneAPI Toolkits and products help to provide a unified approach to mixed-architecture offload computing. This approach also ensures interoperability with existing distributed computing standards.

This is, however, a topic for a future article. Let us step back and start with the fundamental concepts of distributed computing:

Parallelism and Offload

Some kinds of parallel computing work inside a single processor. Two examples are vectors and multicore. First, let’s look at vectors.

Vectors and Vectorization

Most machine instructions work on single items of information: a single fixed point or floating point number.

Early on, though, machine designers realized you could get more performance by applying the same operation to each element of a list of numbers, a vector. Most recent computer architectures, like Intel® Architecture, ARM*, PowerPC*, and the open-source RISC-V*, support vectors. Vector operations are often invisible to the programmer. Modern compilers can translate code such as

int A[16],B[16],C[16];

for (int i = 0; i < 16; i += 1) C[i] = A[i] + B[i];

into a single vector instruction, written here as the matching AVX-512 intrinsic

C = _mm512_add_epi32(A, B);

which will do all 16 additions at once. A current processor like the Intel® Xeon® Scalable Processor series can do two of these 16-way additions per machine cycle in each processing core.
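As a minimal sketch, here is the loop above in compilable form. The function name `add_vectors` and the use of `std::array` are illustrative choices, not something from the original snippet. Whether the compiler really emits a single vector instruction depends on the target processor and the optimization flags; the result is the same either way.

```cpp
#include <array>
#include <cstddef>

// Element-wise sum of two 16-element integer arrays.
// A vectorizing compiler may turn this loop into one or a few
// SIMD additions; that is an optimization, not a guarantee.
std::array<int, 16> add_vectors(const std::array<int, 16>& a,
                                const std::array<int, 16>& b) {
    std::array<int, 16> c{};
    for (std::size_t i = 0; i < c.size(); ++i) {
        c[i] = a[i] + b[i];
    }
    return c;
}
```

Because every iteration is independent of the others, the compiler is free to process several elements per instruction without changing the program's meaning.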

Multi-Core

Contemporary processors have multiple cores. The first computer systems with more than one CPU were developed in the early 1960s, but only in recent years have nearly all smartphone, laptop, desktop, and datacenter processors come to have multiple processing cores. The dominant way in which these cores access data is called “coherent shared memory”. Using this method, all the cores run out of a common main memory system.

Shared memory machines turn out to be remarkably hard to program at low levels, and these difficulties are dealt with by increasing levels of abstraction.

Quote: 1 Lampson, Butler, “Principles for Computer System Design”, Turing Award Lecture 2007

There are several ways to use multicore processors. Among them are processes, threads, parallel libraries, and parallel languages.

Processes

A process is what most of us understand as “a program.” A process has a processor, memory, files, and I/O. In many ways, a process is a virtual computer.

Let’s take a look at top(1) on Linux*, the Task Manager on Microsoft* Windows*, or the Activity Monitor on Apple* macOS*. The items these tools list are processes. Oversimplified, a four-core processor can run four processes simultaneously. In fact, a typical computer these days will have a few hundred processes active, but most of them are waiting for something and not truly running. Using processes to do parallel computing is entirely possible.

Linux and UNIX* have “pipelines”, which connect the output of one program to the input of another. Processes also communicate through files, through network connections, and through shared memory. For example, a developer can say “make -j 4” to tell make that it can run multiple compilations at once using different processes.
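To make the pipeline idea concrete, here is a minimal sketch of two processes communicating through a pipe, using the POSIX calls `pipe`, `fork`, `read`, `write`, and `waitpid`. The function name `send_through_pipe` is hypothetical, and the sketch assumes a Linux or UNIX system.

```cpp
#include <string>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

// Fork a child process that writes `message` into a pipe; the parent
// reads it back. Returns the text the parent received, or an empty
// string if pipe() or fork() fails.
std::string send_through_pipe(const std::string& message) {
    int fds[2];                       // fds[0] = read end, fds[1] = write end
    if (pipe(fds) != 0) return "";

    pid_t pid = fork();
    if (pid < 0) {                    // fork failed: clean up and give up
        close(fds[0]);
        close(fds[1]);
        return "";
    }
    if (pid == 0) {                   // child: the producer process
        close(fds[0]);
        ssize_t ignored = write(fds[1], message.data(), message.size());
        (void)ignored;
        close(fds[1]);
        _exit(0);                     // exit without running parent cleanup
    }

    close(fds[1]);                    // parent: the consumer process
    std::string received;
    char buf[256];
    ssize_t n;
    while ((n = read(fds[0], buf, sizeof buf)) > 0) {
        received.append(buf, static_cast<std::size_t>(n));
    }
    close(fds[0]);
    waitpid(pid, nullptr, 0);         // reap the child
    return received;
}
```

The two processes share nothing except the pipe, which is what makes this style robust: the only way they can affect each other is through the bytes they exchange.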

Threads

Threads are “threads of execution” or “threads of control” inside a single process. Threads have individual machine states like registers and stacks, but they share a common memory space. Threads can communicate with each other through their shared memory. Threads are available in most language environments now, but they are still hard to get right. The execution of multiple threads is logically interleaved at the level of individual machine instructions. Unfortunately, human intuition about what counts as a single unit of work is frequently wrong in this context.

For example, consider a banking application adding a deposit to a checking account balance. Somewhere inside there is a line of code

balance = balance + deposit;

Humans have no problem with this. Unfortunately, the real execution flow is more like this:

LOAD balance

ADD deposit

STORE balance

What happens if two threads try to do this at the same time? Their instructions can, and eventually will, execute in the following interleaved order:

LOAD balance

LOAD balance

ADD deposit 1

ADD deposit 2

STORE balance

STORE balance

The customer is going to be quite angry that deposit 1 got mysteriously lost.

Over the last half century, an enormous amount of machinery has been built up to solve problems like this. Locks, atomic operations, and a range of other thread control mechanisms have been introduced. Still, it is quite subtle and error prone to program at that level.
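As a minimal sketch of how a lock repairs the deposit example, the code below guards the balance with a `std::mutex` so that the load, add, and store of one deposit cannot interleave with those of another. The `Account` class and the `run_two_depositors` function are hypothetical names invented for illustration.

```cpp
#include <mutex>
#include <thread>

// Hypothetical account type, for illustration only.
class Account {
public:
    void deposit(long amount) {
        std::lock_guard<std::mutex> lock(mutex_);  // one thread at a time
        balance_ = balance_ + amount;              // LOAD, ADD, STORE as a unit
    }
    long balance() {
        std::lock_guard<std::mutex> lock(mutex_);
        return balance_;
    }
private:
    std::mutex mutex_;
    long balance_ = 0;
};

// Two threads each make `count` deposits of 1 into the same account.
long run_two_depositors(int count) {
    Account account;
    auto worker = [&account, count] {
        for (int i = 0; i < count; ++i) account.deposit(1);
    };
    std::thread t1(worker);
    std::thread t2(worker);
    t1.join();
    t2.join();
    return account.balance();
}
```

With the lock in place, the final balance is always twice `count`. Without the lock the program would contain a data race, and in practice some deposits could be lost.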

In addition to using raw low-level features like locks and atomics though, you can use prebuilt and tested libraries.

Parallel Libraries

As mentioned, getting thread level parallelism correct is quite subtle and difficult. Programs will appear to work fine on your development machine but fail mysteriously when run on a machine with slightly different timing. Often a much easier and more effective way to build parallel programs is to use prebuilt and pretested libraries.

At low levels, there are libraries like the Intel® oneAPI Threading Building Blocks (oneTBB). These make it straightforward to implement design patterns like processing pipelines, work queues, and data flow without having to deal with too many of the details.

At higher levels of abstraction, there are libraries that invoke parallelism for complex mathematics like linear algebra and Fourier transforms. The Intel® oneAPI Math Kernel Library (oneMKL) is a good example. A single function call to multiply two matrices can use all the compute resources available.

Parallel Languages

Parallel libraries can be called from non-parallel main programs written in a wide variety of languages such as C++ or FORTRAN. They do, however, apply parallelism only to the specific functionality packaged in the library.

Parallel languages can help apply parallelism to the entire program. They include some excellent but niche languages such as Erlang*, which is used, among other things, for programming some telephone switches, as well as Julia* and CILK*.

Learning a new language to get extra oomph out of your machine is always fun, but you can still stay with C and C++, by using language extensions for parallel programming. A great example is OpenMP*. OpenMP allows you to make minimal modifications to your code, such as

#pragma omp parallel for

for (i = 0; i < N; i++) {

c[i] = a[i] + b[i];

}

and the compiler will invoke the OpenMP runtime and automatically run your loop on multiple cores.
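Here is a minimal sketch of that loop as a complete function. The name `add_parallel` and the use of `std::vector` are illustrative assumptions, and the function assumes both inputs have the same length. It uses the combined `parallel for` construct, which both creates the team of threads and divides the loop iterations among them. OpenMP has to be enabled at compile time (for example with `-fopenmp` on GCC and Clang); without it the pragma is ignored and the loop simply runs serially with the same result.

```cpp
#include <vector>

// Element-wise vector addition. Iterations are independent of one
// another, so they can safely be divided among threads.
// Assumes a.size() == b.size().
std::vector<double> add_parallel(const std::vector<double>& a,
                                 const std::vector<double>& b) {
    const long n = static_cast<long>(a.size());
    std::vector<double> c(a.size());
    #pragma omp parallel for
    for (long i = 0; i < n; i++) {
        c[i] = a[i] + b[i];
    }
    return c;
}
```

Each thread writes to a distinct set of elements of `c`, so no locking is needed.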

Another style of parallel language is represented by SYCL*, CUDA*, and OpenCL*. Using these, you write specific functions in the parallel language and embed them in your main program. We’ll talk about these next, as they are used in the context of hardware accelerators.

Accelerators

Over the last 25 years, we have seen extraordinary improvements in computer graphics. Everyone has seen the change from the 8-bit graphics of early game consoles to today’s high-framerate gaming machines.

It wasn’t long before developers started to think: “I wonder if I can use this power to run my applications faster?”

Today most laptops and desktops, and even most supercomputers, have Graphics Processing Units (GPUs) attached to every processor. Many developers are familiar with proprietary language extensions for GPU offload like NVIDIA* CUDA*. In addition, the OpenCL* project provides a low-level framework for writing programs that execute across heterogeneous platforms and thus run compute-intensive portions of the application on the attached GPU.

We’re in the middle of a rapid evolution of the environment for supporting GPUs. Programming systems like SYCL* from the Khronos Group, together with the Intel® oneAPI DPC++ Compiler, let you write a program once and run it on CPUs, GPUs, or a combination of both.

Another style of coprocessor or accelerator is the Field Programmable Gate Array (FPGA), in which the very wiring of an integrated circuit can be changed to give high performance on specialized problems. It is now possible to write code for FPGAs in the same high level SYCL environment rather than deal with low level register transfer languages like Verilog* and VHDL*.

The Joint Power of Many Computers

Moore’s law and the diligent effort of generations of engineers and scientists have provided spectacular improvements in the performance of individual processors and let us add GPUs to each one. But our aspirations didn’t stop there. Distributed computing lets you apply the power of dozens to thousands of individual computers to a single problem.

History

Early supercomputers were individual massive machines like the early HPE* Cray* Y-MP. In the 1990s, a new idea arose: building supercomputers by connecting more or less ordinary servers with a high-speed network. The original form of this idea is called a Beowulf cluster. Almost all recent supercomputers follow the same model, as do the data center scale computing systems of cloud computing suppliers.

It would be fun to describe the evolution of the hardware for such machines, but let us focus on the software that enabled the usage of these high-performance clusters.

The early years of distributed computing tracked along with the development of networking. Early mechanisms like IBM*’s Remote Job Entry allowed you to submit batch computing jobs remotely and get the resulting printout. The early ARPANET* allowed terminal login to remote time-sharing machines, and file transfer between independent systems. This was followed by an era of client-server computing, electronic mail, and, well, the Internet. Technologies like name servers and remote procedure call and distributed file systems helped tie it all together.

Another line of development though was work on how to harness the efforts of a network of machines to run an individual program. The early years of supercomputers were an age of heroic feats of computer architecture like the work of Seymour Cray, but each successive design was brand new and hideously expensive. There was no way to apply economies of scale. The big idea was to use the new networking technology to connect hundreds or thousands of commodity processors with a fast network and write software to let them work together.

Message Passing

The first hugely successful software standard for distributed parallel computing was launched in May 1994: The Message Passing Interface, or MPI*.

In an MPI application, multiple instances of a program are launched on a cluster of computers, and interact by sending messages to each other. In MPI, each copy is called a rank. One rank can SEND a message to another, which can RECV it. In addition, MPI has collective operations, in which some or all ranks participate in a communications event. Some of the more interesting ones are

- BARRIER: No rank can proceed past this point in the program until all ranks have gotten here

- BROADCAST: One rank sends a message to everyone

- REDUCE: One rank can get, for example, the SUM of contributions from everyone
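To illustrate the semantics of SEND, RECV, and REDUCE, here is a toy in-process model. It is not MPI and does not use the MPI API: threads stand in for ranks, whereas real MPI ranks are separate processes, usually on different machines. The `Mailbox` class and the `reduce_sum_to_rank0` function are hypothetical names invented for this sketch.

```cpp
#include <condition_variable>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

// Hypothetical in-process mailbox standing in for a network endpoint.
class Mailbox {
public:
    void send(long value) {                 // SEND: deposit a message
        {
            std::lock_guard<std::mutex> lock(mutex_);
            messages_.push(value);
        }
        ready_.notify_one();
    }
    long recv() {                           // RECV: block until one arrives
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return !messages_.empty(); });
        long value = messages_.front();
        messages_.pop();
        return value;
    }
private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<long> messages_;
};

// REDUCE with SUM: every rank contributes (rank + 1); rank 0 collects
// its own value plus one message from each of the other ranks.
long reduce_sum_to_rank0(int num_ranks) {
    Mailbox root_inbox;                     // inbox belonging to rank 0
    long total = 0;                         // written only by rank 0
    std::vector<std::thread> ranks;
    for (int rank = 0; rank < num_ranks; ++rank) {
        ranks.emplace_back([rank, num_ranks, &root_inbox, &total] {
            long contribution = rank + 1;
            if (rank != 0) {
                root_inbox.send(contribution);
            } else {
                total = contribution;
                for (int i = 1; i < num_ranks; ++i) total += root_inbox.recv();
            }
        });
    }
    for (auto& t : ranks) t.join();
    return total;
}
```

The ranks share no data except the messages they exchange, which is the essential discipline of message passing. A real MPI library provides the same pattern as a single collective call and can choose a much more efficient communication schedule than this simple gather to rank 0.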

There is a lot to MPI, and future blog entries will have much more to say about it. The important point is that it works and it is very effective. By now we have had nearly 30 years of experience with MPI.

The single largest takeaway is that subject area experts, such as physicists or atmospheric scientists, can use MPI to write 1000-way distributed applications that are substantially faster than any possible single computer. They do not need to be computer communications experts to achieve those performance gains.

This is pretty much the highest tribute for a successful library. Ordinary mortals can use it to get their work done without having to understand all the internal details.

We will of course write more about MPI and the Intel® MPI Library in future blogs.

Partitioned Global Address Space

With MPI, the programmer knows that there are many independent computers cooperating to run a program.

Another approach is Partitioned Global Address Space (PGAS) programming, in which programmers know there are multiple computers, but think of them as multiple processors running in a single large memory space. As in MPI, multiple instances of a program are started, called Processing Elements (PE) for historical reasons. A PE can read and write its own part of global memory, but it can also, with lower performance, read and write any location in the global address space.

These remote operations are PUT and GET calls. There are PGAS languages, such as Unified Parallel C (UPC), which is C-like, and Titanium*, which is Java-like, but there is also a subroutine library for PGAS, called SHMEM* (for SHared MEMory). The SHMEM library lets you do PGAS-style programming in C or FORTRAN. In PGAS languages, in addition to PUT and GET, there are collectives like REDUCE and synchronization operations like BARRIER.

We will talk more about PGAS in SHMEM in our next blog.

Distributed Shared Memory

I’d also like to mention Distributed Shared Memory, in which software works to make multiple computers on a network appear to be a multi-threaded single address space, just as though it were running on a single multi-core server. Because the implementation has to deal with sharing at the level of virtual memory pages, it works best for applications in which threads do not do a lot of fine-grained synchronization.

Collective Communications Library

For both MPI and PGAS environments, collective operations play an important role. Collectives are also extremely important for distributing applications like neural networks, machine learning, and artificial intelligence. To that end, Intel has introduced the Intel® oneAPI Collective Communications Library (oneCCL) to make it easier for developers to use multiple GPU-equipped systems to run Neural Network (NN), Machine Learning (ML), and Artificial Intelligence (AI) applications.

Next Steps and Upcoming Articles

This first post has taken a winding path through parallel and distributed computing. Future entries will delve into individual topics and include code snippets to convey the foundations of each area.

My colleagues and I will also provide pointers to examples, tutorials, and documentation so that readers wishing for more will know where to look.

Please join me on this exciting journey through the wonders of Distributed Computing!

I am looking forward to your feedback and suggestions on additional topics as we go down this road together.
