I am trying to transfer (read and write) data with the mSGDMA cores in Quartus Pro 19.2 between the TSE core and the PCIe HIP (AVMM-DMA) core on an Arria 10 FPGA. In the Linux driver, we create an Ethernet node and allocate socket buffers, and we have managed to send and receive Ethernet packets through the mSGDMA engine to test its full-duplex performance.

In the Ethernet receive direction, data streams out of the TSE MAC core, and the mSGDMA (mm_Write) then moves it into the Linux PC's memory through the PCIe HIP (txs port). We tested this path, and Ethernet performance is good.

In the Ethernet transmit direction, the mSGDMA (mm_Read) moves Ethernet data from the Linux PC's memory (socket buffer) through the PCIe HIP (txs port) into the TSE MAC core. In this direction the measured Ethernet performance is poor, so the performance test failed.

After a lot of debugging, we found that the PCIe "txs_waitrequest" signal behaves very differently in the two directions, which may explain the good Ethernet receive performance versus the not-OK Ethernet transmit performance.

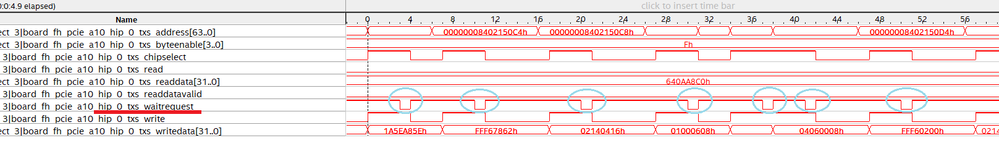

1) In the good Ethernet receive direction, where the mSGDMA (mm_Write) moves data from the TSE MAC into the PCIe HIP TXS port, "txs_waitrequest" is deasserted very quickly, which lets the mSGDMA (mm_Write) write into host memory. The interval is about 10 clock cycles. (See "mSGDMA_writedata.png".)

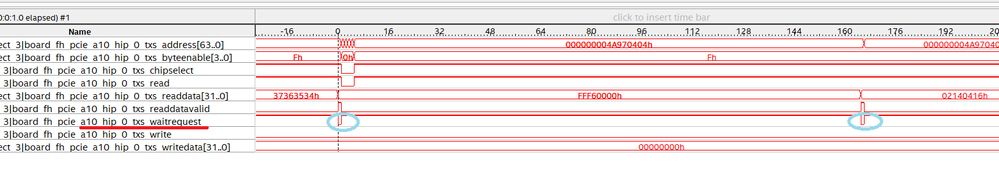

2) In the failing Ethernet transmit direction, where the mSGDMA (mm_Read) moves data from the PCIe HIP TXS port into the TSE MAC, "txs_waitrequest" is deasserted much more slowly, which delays the mSGDMA (mm_Read) read from host memory. The interval is about 160 clock cycles, which is much longer. (See "mSGDMA_readdata.png".)
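To put the two intervals in perspective, here is a rough back-of-the-envelope sketch of how a per-burst stall could limit throughput. It assumes a deliberately simple model in which every burst waits for the full stall before its data beats transfer, with nothing overlapped. The burst length, data width, and clock frequency are hypothetical placeholders, not values from our design; only the ~10 and ~160 cycle intervals come from the captures above.

```python
def effective_throughput_mbps(burst_beats, data_width_bits, stall_cycles, clk_mhz):
    """Estimate throughput when each burst of `burst_beats` data beats
    is preceded by `stall_cycles` cycles of waitrequest (no overlap)."""
    cycles_per_burst = burst_beats + stall_cycles
    bits_per_burst = burst_beats * data_width_bits
    # bits per cycle * million cycles per second = Mbit/s
    return bits_per_burst / cycles_per_burst * clk_mhz


# Hypothetical parameters, NOT taken from the design in this post.
BURST_BEATS = 16
DATA_WIDTH_BITS = 64
CLK_MHZ = 125.0

# Stall lengths as read from the two captures (~10 vs ~160 cycles).
write_path = effective_throughput_mbps(BURST_BEATS, DATA_WIDTH_BITS, 10, CLK_MHZ)
read_path = effective_throughput_mbps(BURST_BEATS, DATA_WIDTH_BITS, 160, CLK_MHZ)

print(f"~10-cycle stall:  {write_path:.0f} Mbit/s")
print(f"~160-cycle stall: {read_path:.0f} Mbit/s")
```

With real parameters substituted, this only gives an upper-level estimate. If the read master keeps several reads outstanding, the effective stall per burst would be smaller than this model suggests.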

The two pictures above show that the "txs_waitrequest" behaviour is very different. Has anyone seen this before, or does anyone have any clue what could be causing it?
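For anyone who wants to compare such captures numerically rather than by eye, the stall lengths could be pulled out of an exported trace. This is a minimal sketch, assuming "txs_waitrequest" has been exported as one 0/1 sample per clock cycle. The export format and the function names are hypothetical, not part of any Intel tool.

```python
from itertools import groupby


def stall_runs(waitrequest_samples):
    """Return the length of each consecutive run of asserted (1) samples."""
    return [
        sum(1 for _ in group)
        for level, group in groupby(waitrequest_samples)
        if level == 1
    ]


def mean_stall(waitrequest_samples):
    """Average stall length in clock cycles, or 0.0 if never asserted."""
    runs = stall_runs(waitrequest_samples)
    return sum(runs) / len(runs) if runs else 0.0


# Tiny made-up trace for illustration only (not real capture data).
example_trace = [0, 1, 1, 1, 0, 0, 1, 1, 0]
print(stall_runs(example_trace), mean_stall(example_trace))
```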

(Please note: we use PCIe AVMM-DMA, not AVMM.)

/best regards

Kindly reply, please.

Hi @EBERLAZARE_I_Intel,

I think you're the expert in the mSGDMA IP area. Could you help double-check my original question (or at least relay it to a more suitable person to reply)? Thanks very much.
