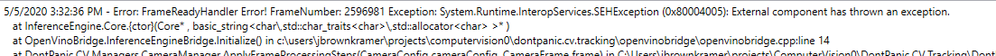

I am trying to embed a call to the OpenVINO Inference Engine in an existing system on Windows 10. I have a model, and I have successfully run inference with it on the GPU from a Python script. Now I want to run inference from C++. We don't want to go through the entire install process on every machine we deploy to, so ideally we would just ship the necessary DLLs. I have copied the contents of openvino\inference_engine\bin\intel64\Debug to the bin directory of my project. At first several DLLs were missing, including ngraphd.dll and tbb_debug.dll. I tracked these down (I think) and put them in the bin directory as well. I no longer get a missing-DLL exception, but I do get the following:

Below is my C++ code, which I am calling from C#. Any help would be great.

    #include <inference_engine.hpp>
    #include "pch.h"
    #include "OpenVinoBridge.h"
    #include <string>

    using namespace InferenceEngine;
    using namespace OpenVinoBridge;

    ExecutableNetwork executable_network;

    void InferenceEngineBridge::Initialize()
    {
        // Set up OpenVINO inference
        Core ie;
        std::string input_model_base = "resnet50-depthFrame7-048313-2365p0991-1402p3900";
        CNNNetwork network = ie.ReadNetwork(input_model_base + ".xml", input_model_base + ".bin");
        network.setBatchSize(1);
        executable_network = ie.LoadNetwork(network, "GPU");
    }


Are you able to run the C++ code on a system with OpenVINO installed? If so, you can use the Deployment Manager, which creates a deployment package by assembling the model, IR files, your application, and its dependencies into a runtime package for your target device.

Regards,

Ram prasad


I am trying to run the particular cpp code I pasted above on a system that has OpenVINO installed and it is not working. I have been able to run inference through a python script on this system.


Some more info: I have been able to build the cpp examples and run them (although on Windows, reading jpegs seems to be broken). I tried the Deployment Manager, which seems to make a copy of the inference engine and its dependencies as well as setupvars.bat. I am confused about what to do with that. I am trying to build a managed cpp project that compiles down to a DLL. Is there some general guidance about what files have to be pointed to and where in order for things to work?

Thanks for your help.

-Josh Brown Kramer


The required DLLs are copied to <path_to_openvino_deploy_package>/deployment_tools. Make sure the DLLs exist, then run the setupvars.bat file located in <path_to_openvino_deploy_package>/bin. You are then ready to run the application.

It is recommended to use one of the languages supported in OpenVINO 2020.2 (C, C++, Python).

Regards,

Ram prasad
