Hello, I have a question about the inference results of an OpenVINO IR model.

I downloaded ssd_mobilenet_v1_coco_2018_01_28 from https://software.intel.com/en-us/articles/OpenVINO-Using-TensorFlow

Then I converted the downloaded model to the OpenVINO IR format using the following command:

python mo_tf.py --input_model <frozen_inference_graph.pb model path> --tensorflow_use_custom_operations_config <openvino path>\deployment_tools\model_optimizer\extensions\front\tf\ssd_support.json --tensorflow_object_detection_api_pipeline_config <pipeline.config path> --input_shape [1,300,300,3]

The conversion completed successfully.

However, when I run inference on an image using the IR, the result is different from the TensorFlow result obtained with frozen_inference_graph.pb.

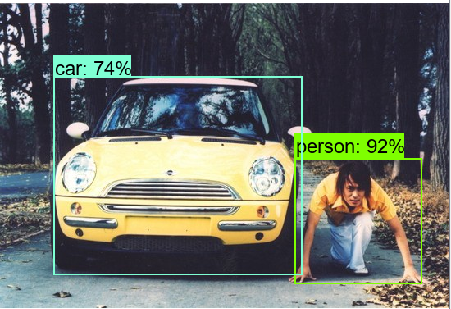

For example, when I run inference on an image using frozen_inference_graph.pb and keep all detected objects with a score above 30%, I get:

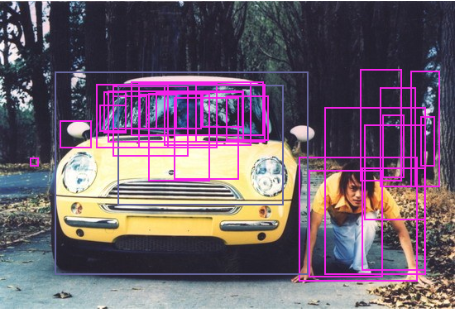

However, when I run inference on the same image using the OpenVINO IR and keep detected objects with a score above 30%, many unexpected boxes appear:

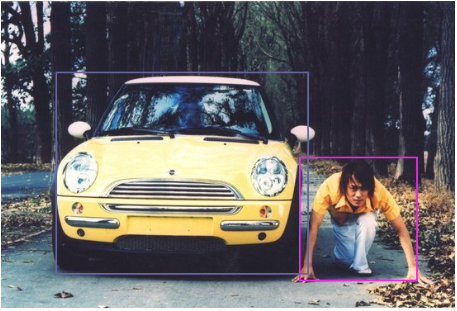

Although the two objects with the highest scores (70%, 62%) are the same as in the TensorFlow result (see the following picture), there are many unexpected boxes with scores of about 51% to 52%.

What I want to know is: is my result correct?

If I set a score threshold to filter the detected objects, I still get many unexpected results. How can I solve this?

Can anybody help? Thank you very much!
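For reference, the score filtering described above can be sketched as follows. This is only a minimal sketch: it assumes each detection row has the layout `[image_id, label, score, x_min, y_min, x_max, y_max]` and that a negative `image_id` marks the end of valid detections. The function name `filter_detections` and the sample rows are made up for illustration, not taken from the actual run.

```python
from typing import Iterable, List, Sequence, Tuple

# (label, score, x_min, y_min, x_max, y_max)
Detection = Tuple[int, float, float, float, float, float]


def filter_detections(rows: Iterable[Sequence[float]],
                      threshold: float = 0.3) -> List[Detection]:
    """Keep detections whose score is at least `threshold`.

    Assumed row layout (an assumption, check it against your model's output):
    [image_id, label, score, x_min, y_min, x_max, y_max]
    """
    kept: List[Detection] = []
    for image_id, label, score, x_min, y_min, x_max, y_max in rows:
        # Assumption: a negative image_id signals that the remaining rows are padding.
        if image_id < 0:
            break
        if score >= threshold:
            kept.append((int(label), float(score), float(x_min),
                         float(y_min), float(x_max), float(y_max)))
    return kept


# Hypothetical rows, loosely mirroring the scores mentioned in the post.
sample_rows = [
    [0, 1, 0.70, 0.10, 0.10, 0.40, 0.60],
    [0, 1, 0.62, 0.50, 0.20, 0.80, 0.70],
    [0, 3, 0.52, 0.00, 0.00, 0.20, 0.20],
    [0, 3, 0.10, 0.30, 0.30, 0.35, 0.35],
    [-1, 0, 0.00, 0.00, 0.00, 0.00, 0.00],
]

for det in filter_detections(sample_rows, threshold=0.3):
    print(det)
```

Running the same filter over the TensorFlow output and the IR output makes the two sets of boxes directly comparable. If boxes just above 50% only show up on the IR side, the threshold is probably not the problem and the input fed to the network is worth checking.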

Is there anyone who can give me some help?

Dear Liang, JianWei,

Most likely you did not pass the proper pre-processing switches to Model Optimizer. Please review this IDZ post. We also provide mean and scale values for the most popular models (including mobilenet) on this page:

Hope it helps!

Thanks,

Shubha
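To make the pre-processing point concrete, here is a minimal sketch of what mean and scale normalization does to an input image, with an optional channel reversal. Model Optimizer exposes this kind of pre-processing through switches such as `--mean_values`, `--scale_values`, and `--reverse_input_channels`. The function name `preprocess` and the value 127.5 are placeholders for illustration only; they are not confirmed as the correct values for this model, so take the real ones from the model's documentation.

```python
import numpy as np


def preprocess(image_hwc, mean, scale, reverse_channels=False):
    """Apply (pixel - mean) / scale per channel to an HxWxC image.

    `mean` and `scale` can be scalars or per-channel sequences.
    `reverse_channels=True` flips the channel order (e.g. BGR <-> RGB).
    """
    x = np.asarray(image_hwc, dtype=np.float32)
    if reverse_channels:
        # Flip the last axis so the channel order matches what the model expects.
        x = x[..., ::-1]
    mean_arr = np.asarray(mean, dtype=np.float32)
    scale_arr = np.asarray(scale, dtype=np.float32)
    return (x - mean_arr) / scale_arr


# A 1x1 "image" with three channel values; 127.5 is a placeholder, not a verified value.
pixel = np.array([[[0.0, 127.5, 255.0]]], dtype=np.float32)

print(preprocess(pixel, mean=127.5, scale=127.5))
print(preprocess(pixel, mean=127.5, scale=127.5, reverse_channels=True))
```

If the TensorFlow pipeline and the IR see differently normalized or differently ordered inputs, the strongest detections can still agree while weaker, spurious boxes appear, so it is worth confirming that both paths receive the same tensor.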
