
Thank you,

Bruce


Hi Bruce,

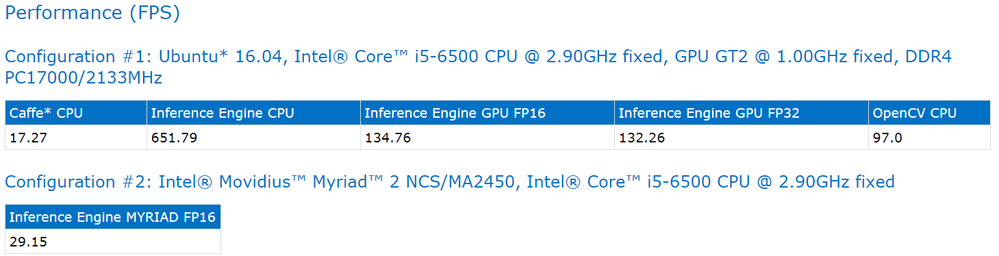

1. That's correct: the metric is frames per second (FPS), and that is just running the native Caffe framework without the Inference Engine.

2. GPUs don't speed up all computations, and assuming they do is a common misconception. It really depends on the type of operations running on the GPU, the amount of data, and whether or not there is data reuse. The biggest hit you normally see with GPUs is the cost of moving memory to and from the device, and that overhead alone can reduce performance enough that the CPU ends up with better numbers.

3. I can't fully comment on this, but note that MYRIAD has great performance for low-power use cases. I encourage you to take a look at the details here.
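To make points 1 and 2 a little more concrete, here is a minimal Python sketch, not taken from Caffe or the Inference Engine. `measure_fps` times any one-frame-per-call inference function and reports frames per second. `offload_time_s` is a hypothetical toy cost model for point 2: data is copied to the device, processed, and the same amount copied back. The numbers in the example are made up purely to show how a faster GPU kernel can still lose once transfers are counted.

```python
import time
from typing import Callable, Sequence


def measure_fps(infer: Callable[[object], object], frames: Sequence[object], warmup: int = 5) -> float:
    """Frames per second for a callable that processes one frame per call."""
    if not frames:
        raise ValueError("need at least one frame")
    # Warm-up calls are not timed, so one-time setup (allocation, lazy init) doesn't skew FPS.
    for frame in frames[:warmup]:
        infer(frame)
    start = time.perf_counter()
    for frame in frames:
        infer(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")


def offload_time_s(num_bytes: float, bandwidth_bytes_per_s: float, compute_s: float) -> float:
    """Hypothetical toy model: copy data to the device, compute, then copy the same amount back."""
    transfer_s = 2 * num_bytes / bandwidth_bytes_per_s
    return transfer_s + compute_s


# Illustrative numbers only: the GPU kernel is 4x faster (1 ms vs 4 ms), but moving
# 24 MB in each direction over a 12 GB/s link adds 4 ms, so the offloaded total loses.
cpu_s = 4e-3
gpu_s = offload_time_s(num_bytes=24e6, bandwidth_bytes_per_s=12e9, compute_s=1e-3)
print(f"CPU {cpu_s * 1e3:.1f} ms vs GPU incl. transfers {gpu_s * 1e3:.1f} ms")
```

Real workloads also overlap transfers with compute and reuse data already on the device, which is exactly why the answer is "it depends" rather than a fixed rule.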

Kind Regards,

Monique Jones


Hi all,

Can you give a sample command for just running the native Caffe framework without the Inference Engine?

Thank you very much,

Rachel


Hi Rachel,

You can run inference with native Caffe using the following Python scripts here.

There is a classify.py and a detect.py, depending on what type of model you'd like to use. I also recommend that you create a separate post for any further questions.
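If you would rather call pycaffe directly than go through those scripts, a minimal sketch might look like the following. It is not classify.py or detect.py. It only uses the standard pycaffe calls `caffe.set_mode_cpu()`, `caffe.Net(model_def, weights, caffe.TEST)`, and `net.forward()`. The function name, the input blob name `"data"`, and the assumption that `batch` is already preprocessed to that blob's exact shape are all hypothetical and need adjusting for your model.

```python
import time


def run_native_caffe(model_def, model_weights, batch, iterations=50, input_blob="data"):
    """Run plain pycaffe (no Inference Engine) on the CPU; return (outputs, forward passes/s).

    Hypothetical assumptions: `batch` already matches the shape of `input_blob`
    as declared in the deploy prototxt, and the blob is named "data".
    """
    import caffe  # imported lazily so this module loads even where pycaffe is absent

    caffe.set_mode_cpu()  # swap in caffe.set_mode_gpu() to compare against the GPU
    net = caffe.Net(model_def, model_weights, caffe.TEST)
    net.blobs[input_blob].data[...] = batch  # copy the input into the network's input blob

    outputs = net.forward()  # untimed warm-up pass; also provides the outputs to return
    start = time.perf_counter()
    for _ in range(iterations):
        net.forward()
    elapsed = time.perf_counter() - start
    # With a batch size of 1 this equals FPS; otherwise multiply by the batch size.
    passes_per_s = iterations / elapsed if elapsed > 0 else float("inf")
    return outputs, passes_per_s
```

A call would look like `run_native_caffe("deploy.prototxt", "model.caffemodel", preprocessed_image)`, where both file names are placeholders for your own model files.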

Thanks,

Monique Jones
