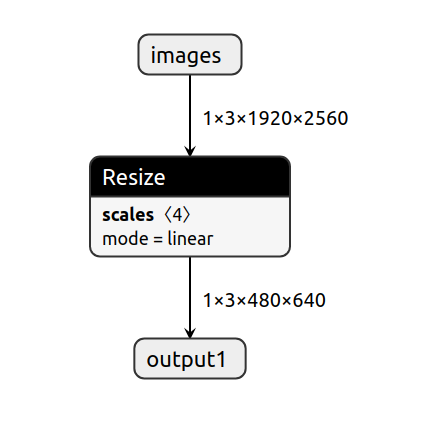

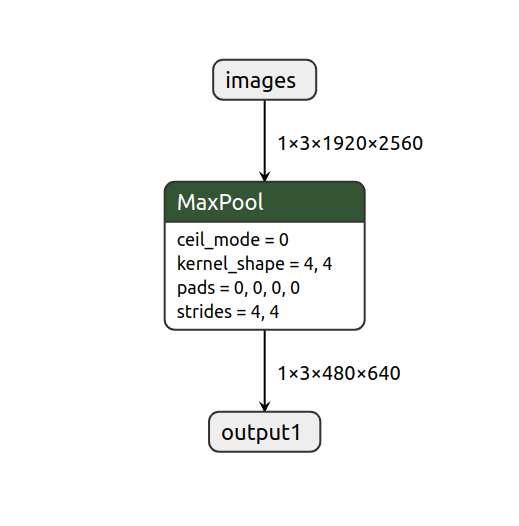

Hi, I wanted to see how resizing an input image affects inference speed, and I see a big difference between the CPU and the NCS2. I use the C++ benchmark app to measure throughput in asynchronous mode. I have two models that downsample an input image from 1920x2560 to 480x640: one uses max pooling and the other uses bilinear interpolation. I wrote the models in PyTorch, exported them to ONNX, and then converted them successfully with the model_optimizer tool. My CPU: Intel® Core™ i7-9750H CPU @ 2.60GHz × 12

F.interpolate(x, size=(480,640), mode="bilinear")

nn.MaxPool2d(kernel_size=(4,4), stride=(4,4))

Downsample by Interpolation: On MYRIAD: Throughput: 15.86 FPS; On CPU: Throughput: 1367.88 FPS

Downsample by MaxPool: On MYRIAD: Throughput: 15.82 FPS; On CPU: Throughput: 323.08 FPS

Why is there such a big drop while using the NCS2?
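As a quick sanity check on the setup described above, the shape arithmetic can be sketched in plain Python to confirm that both models produce the same 480x640 output. The helper name `pool_output_size` is hypothetical. It applies the standard floor-mode pooling output-size formula, assuming no padding and no dilation, since the post does not set either.

```python
def pool_output_size(size: int, kernel: int, stride: int,
                     padding: int = 0, dilation: int = 1) -> int:
    """Output length along one axis for a floor-mode pooling window.

    Hypothetical helper; padding=0 and dilation=1 are assumptions,
    since the post only specifies kernel_size and stride.
    """
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


# Input height and width taken from the post.
input_hw = (1920, 2560)

# 4x4 max pooling with stride 4, as in nn.MaxPool2d(kernel_size=(4,4), stride=(4,4)).
pooled_hw = tuple(pool_output_size(s, kernel=4, stride=4) for s in input_hw)

# F.interpolate is given the target size directly.
interp_hw = (480, 640)

print(pooled_hw, pooled_hw == interp_hw)
```

Both paths end at the same resolution, so the throughput numbers compare two ways of producing the same output size.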

Hi AI6,

The difference you observed is expected, as the two devices belong to different classes of processors.

The big drop comes from the difference in raw computing power between the two.

The Neural Compute Stick 2 (NCS2) consumes around 1 watt, compared to 45 watts for the i7-9750H.

The clock frequency of the NCS2 is around 700 MHz max, compared to 4.5 GHz max for the i7-9750H (in addition, the i7-9750H has 6 cores for parallel/asynchronous computing).

The NCS2 is a plug-and-play development kit for AI inferencing, meant to be coupled with low-cost edge devices. The NCS2 offers exceptional performance per watt per dollar on the Intel® Movidius™ Myriad™ X Vision Processing Unit (VPU).
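To put rough numbers on this, here is a minimal sketch that combines the throughput figures from the question with the approximate power figures quoted above. The power values are nominal assumptions (about 1 W for the NCS2, 45 W for the CPU), not measured draw during the benchmark, so the FPS-per-watt results are only indicative. The names `throughput_fps`, `power_w`, and `summary` are made up for this illustration.

```python
# Throughput figures (FPS) as reported in the question.
throughput_fps = {
    "interpolation": {"MYRIAD": 15.86, "CPU": 1367.88},
    "maxpool": {"MYRIAD": 15.82, "CPU": 323.08},
}

# Assumption: nominal power figures quoted in this reply (watts),
# not the power actually drawn while running the benchmark.
power_w = {"MYRIAD": 1.0, "CPU": 45.0}

summary = {}
for model, results in throughput_fps.items():
    summary[model] = {
        # How many times faster the CPU is than the NCS2 in raw FPS.
        "cpu_speedup": results["CPU"] / results["MYRIAD"],
        # Throughput normalised by the nominal power of each device.
        "fps_per_watt": {dev: fps / power_w[dev] for dev, fps in results.items()},
    }

for model, stats in summary.items():
    per_watt = stats["fps_per_watt"]
    print(
        f"{model}: CPU is {stats['cpu_speedup']:.1f}x faster in FPS; "
        f"FPS/W CPU = {per_watt['CPU']:.2f}, MYRIAD = {per_watt['MYRIAD']:.2f}"
    )
```

Under these assumptions the CPU stays far ahead in raw FPS for both models, while the per-watt comparison is much closer and depends on the layer.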

In your example, the two layers perform differently on the CPU but almost identically on the NCS2.

This is probably because the Myriad architecture is efficient for convolution-type calculations, featuring 16 programmable SHAVE cores and a dedicated neural compute engine for hardware acceleration of deep neural network inference.

The respective product specification sheets are as follows:

Myriad X

Intel® Core™ i7-9750H

Regards,

Rizal

Hi Al6,

Thank you for your question. If you need any additional information from Intel, please submit a new question as Intel is no longer monitoring this thread.
