I followed the two examples below from the OpenVINO documentation to deploy a model in an AWS EKS cluster and expose the API externally.

https://github.com/openvinotoolkit/operator/tree/main/helm-charts/ovms

https://medium.com/openvino-toolkit/deploy-ai-inference-with-openvino-and-kubernetes-a8905df45e47

Basically, I'm trying to deploy the model in an AWS EKS cluster. First, I created the EKS cluster using the following Git repo, https://github.com/hashicorp/terraform-provider-aws, and downloaded the model files with the commands below:

wget https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.1/models_bin/2/resnet50-binary-0001/FP32-INT1/resnet50-binary-0001.bin -P 1

wget https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.1/models_bin/2/resnet50-binary-0001/FP32-INT1/resnet50-binary-0001.xml -P 1

Then I pushed the model files to an AWS S3 bucket:

aws s3api create-bucket --bucket testingcheckfolder --region us-east-2 --create-bucket-configuration LocationConstraint=us-east-2

aws s3 cp yourSubFolder s3://testingcheckfolder/model_files/models/resnet50/1/ --recursive

Then I cloned the OpenVINO Model Server operator Git repo and ran the commands below:

$ git clone https://github.com/openvinotoolkit/operator.git

$ cd operator/helm-charts

$ helm install ovms-sample ovms --set models_settings.model_name=resnet50,models_settings.model_path=s3://testingcheckfolder/model_files/models/resnet50/,models_repository.aws_access_key_id=xxxxxxxxxxx,models_repository.aws_secret_access_key=xxxxxxxxxx,models_repository.aws_region=us-east-2

I ran the commands below to check whether the model works in the cluster:

kubectl get service

kubectl create deployment client-test --image=python:3.8.13 -- sleep infinity

kubectl get pods

kubectl exec -it $(kubectl get pod -o jsonpath="{.items[0].metadata.name}" -l app=client-test) -- bash

After running the above command, I get a shell inside the client pod, where I ran:

curl http://ovms-sample:8081/v1/config

{
  "resnet50": {
    "model_version_status": [
      {
        "version": "1",
        "state": "AVAILABLE",
        "status": {
          "error_code": "OK",
          "error_message": "OK"
        }
      }
    ]
  }
}
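For anyone who wants to script this check rather than read the JSON by eye, here is a minimal Python sketch. It assumes the response has the same shape as the output shown above; the helper name `available_versions` is made up for illustration and is not part of any OVMS client.

```python
import json


def available_versions(config_json, model_name):
    """Return the versions of model_name whose state is AVAILABLE."""
    config = json.loads(config_json)
    statuses = config.get(model_name, {}).get("model_version_status", [])
    return [s["version"] for s in statuses if s.get("state") == "AVAILABLE"]


# Sample response copied from the curl output above (outer brace closed).
sample = (
    '{"resnet50": {"model_version_status": [{"version": "1", '
    '"state": "AVAILABLE", "status": {"error_code": "OK", '
    '"error_message": "OK"}}]}}'
)

print(available_versions(sample, "resnet50"))
```

In practice the string would come from the body returned by `curl http://ovms-sample:8081/v1/config` instead of the hard-coded sample.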

After this, I was able to invoke the model from inside the pod using the Python script below:

cat > /tmp/predict.py <<EOL
import cv2
import numpy as np
from ovmsclient import make_grpc_client

# gRPC endpoint of the OVMS service inside the cluster
client = make_grpc_client("ovms-sample:8080")

# Load the image and resize it to 224x224
img = cv2.imread("/tmp/zebra.jpeg")
resize_img = cv2.resize(img, (224, 224))

# HWC -> NCHW with a leading batch dimension: (1, 3, 224, 224)
image = resize_img[..., np.newaxis]
input_data = np.transpose(image, (3, 2, 0, 1))

inputs = {"0": np.float32(input_data)}
results = client.predict(inputs=inputs, model_name="resnet50")
print("Detected class:", np.argmax(results))
EOL

python /tmp/predict.py

Detected class: 340

However, I'm trying to invoke the model from outside the cluster, that is, from the public internet. Could you please help me here? Also, I noticed in the following document that the Helm chart does not expose the inference server externally by default: https://www.intel.com/content/www/us/en/developer/articles/technical/deploy-openvino-in-openshift-and-kubernetes.html

Could you please tell me where to put the external access config described there? I deployed with the Helm chart from https://github.com/openvinotoolkit/operator/tree/main/helm-charts/ovms.

Hi Iamexperimentingnow,

Thanks for reaching out to us.

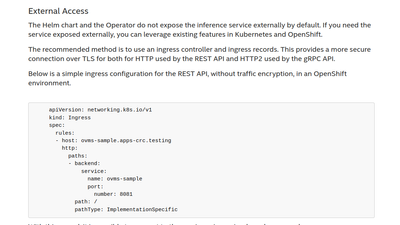

Referring to the External Access subsection under Accessing Cluster Services in "Inference Scaling with OpenVINO™ Model Server in Kubernetes and OpenShift Clusters", the configuration file shown there is a simple ingress configuration for the REST API, without traffic encryption, in an OpenShift environment.

You may refer to "Using the AI Inference Endpoints to run predictions" in "Managing model servers via operator" to configure an OpenShift route resource, or an ingress resource in open-source Kubernetes, linked with the ModelServer service.

Regards,

Wan

@Wan_Intel Thanks for your response. You have referred to the same documentation that I mentioned in my question. However, my question is where to put that config.

Hi Iamexperimentingnow,

Thanks for your information.

Let us check with our next level, and we'll update you at the earliest.

Regards,

Wan

Hi Iamexperimentingnow,

Thanks for your patience. We've received an update from our next level.

Our developer will update the article on blog.openvino.ai soon, since there were slight changes in the operator. We will reach out to you once the latest documentation is up.

Regarding your question on "where to mention this config", the Ingress YAML should be deployed separately (copy and paste it, make modifications if needed, and apply it to the cluster).

It’s not part of OpenVINO Model Server deployment, so it’s not deployed via Helm or the operator with the rest of the resources. It should be configured after the OVMS deployment (no matter if via helm or the operator).
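As a rough illustration of what "deployed separately" can look like, the Python sketch below builds a plain Kubernetes Ingress manifest for the REST port and prints it as JSON, which `kubectl apply` also accepts. The service name `ovms-sample` and port 8081 come from the commands earlier in this thread. The host `ovms.example.com`, the ingress class `nginx`, and the resource name are assumptions for illustration only, and an ingress controller must already be installed in the cluster for the resource to have any effect.

```python
import json


def build_ingress(service_name, rest_port, host, ingress_class):
    """Build a networking.k8s.io/v1 Ingress routing all paths to the service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        # Resource name is an arbitrary choice for this sketch.
        "metadata": {"name": service_name + "-rest"},
        "spec": {
            "ingressClassName": ingress_class,
            "rules": [
                {
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": "/",
                                "pathType": "Prefix",
                                "backend": {
                                    "service": {
                                        "name": service_name,
                                        "port": {"number": rest_port},
                                    }
                                },
                            }
                        ]
                    },
                }
            ],
        },
    }


# Host and ingress class are hypothetical; replace them with your own values.
manifest = build_ingress("ovms-sample", 8081, "ovms.example.com", "nginx")
print(json.dumps(manifest, indent=2))
```

If the script is saved as, say, make_ingress.py, the output can be piped straight to the cluster with `python make_ingress.py | kubectl apply -f -`. Like the example in the article, this sketch exposes only the REST API and has no TLS section.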

You can also take a look at the below link for service networking.

https://kubernetes.io/docs/concepts/services-networking/ingress/

The other option for exposing the service outside the cluster is to change the service type to NodePort, but this is only suitable as a temporary solution.
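For the NodePort route, one way to change the type on an already deployed service is `kubectl patch`. The short Python sketch below only assembles and prints that command, so nothing is changed until you run it yourself. It assumes the service is named `ovms-sample` as in the commands earlier in this thread; the helper name is made up for illustration.

```python
import json
import shlex


def nodeport_patch_command(service_name):
    """Return the kubectl argv that switches a Service to type NodePort."""
    patch = {"spec": {"type": "NodePort"}}
    return ["kubectl", "patch", "service", service_name, "-p", json.dumps(patch)]


cmd = nodeport_patch_command("ovms-sample")
# shlex.join quotes the JSON patch so the line can be pasted into a shell.
print(shlex.join(cmd))
```

After the patch, `kubectl get service ovms-sample` shows the node ports that were assigned. Whether those ports are reachable from the internet depends on the nodes having public addresses and on the cluster's firewall or security group rules, which is outside what this sketch covers.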

Hope this info helps

Regards,

Wan

Hi Iamexperimentingnow,

Thanks for your question.

Please submit a new question if additional information is needed as this thread will no longer be monitored.

Regards,

Wan
