There is a guy in Russia who sells a program called 3DField. I have used it since the 90's, as it is a quick contouring package.

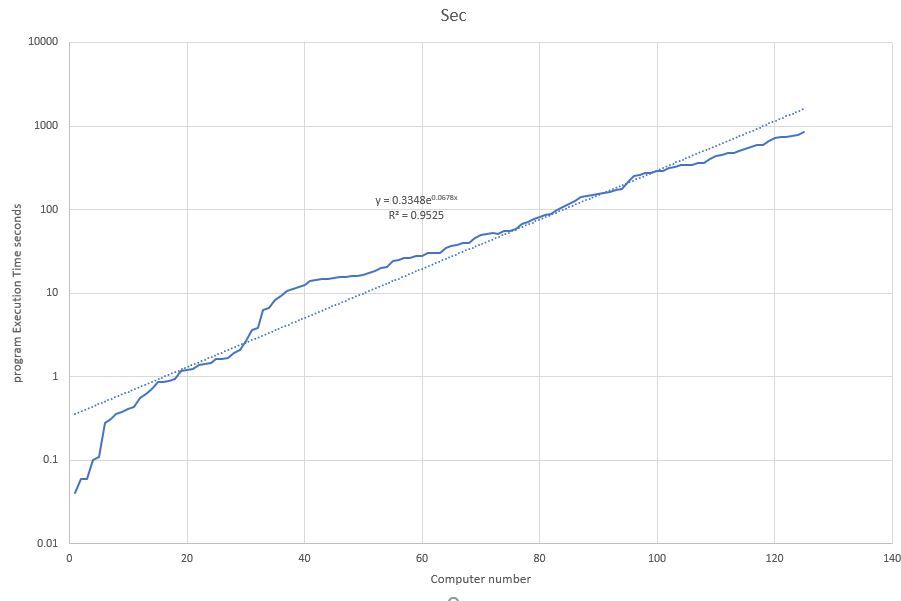

He has tested the same piece of Fortran code against a wide range of computers. The list and results are attached.

No. 1 is an Intel i9 9900KF (Intel Visual Fortran 2019 x64).

No. 126 is an Intel 386/20 with a 387 (Microsoft FORTRAN 5.0).

I used a Compaq portable with two floppy drives and Fortran 3.13. I wonder what speed it had.

Very interesting comparison. Thanks for sharing! I can see and compare the generations of processors with which I grew up on this list.

The major disadvantage of using a modern CPU and compiler (year 2020) is that you can no longer use the 16 minutes to go to the break room for a fresh cup of coffee and a chat with a colleague.

Jim Dempsey

jimdempseyatthecove (Blackbelt) wrote: The major disadvantage of using a modern CPU and compiler (year 2020) is that you can no longer use the 16 minutes to go to the break room for a fresh cup of coffee and a chat with a colleague.

Jim Dempsey

...and to make it worse, since submodules arrived I don't get the huge build cascades any more.

Jim,

It is an interesting concept to blame compiler and hardware advancement for the loss of the "break room". Sitting in my home office, I can blame so many other things for that loss.

computers.xls is interesting: 0.04 seconds! So many old benchmarks have lost their relevance.

jimdempseyatthecove (Blackbelt) wrote: The major disadvantage of using a modern CPU and compiler (year 2020) is that you can no longer use the 16 minutes to go to the break room for a fresh cup of coffee and a chat with a colleague.

Jim Dempsey

Remember the days at Uni when you had to walk your punch cards to the central computer room and leave them overnight?

I ran a program on the mainframe once and it grabbed the whole system. I was told the next day: here are the results, do not do that again.

The same run on my Core i5 takes a few minutes.

I used to make a cup of tea and drink it in the time a Compaq took to compile a program on floppies.

John

I've had the same job since June 1977, starting as a co-op student. In my 2nd week on the job, I started working on a reservoir system model that has been in continuous use my entire career. In the early days, we'd run it as a batch job on the IBM mainframe, but we moved to PCs as soon as they were available. I remember running a large series of model studies in 1990 on whatever the top-of-the-line PC was at the time. We'd do some short runs during the daytime to see if the change we wanted to make was working, and then we'd start a much longer (100-year) run right at quitting time. The run would take about 6 hours to complete and would use up just about all the available disk space, so we'd copy the results to floppy disks to make room for the next alternative. This was using Microsoft Fortran 3.31.

Eventually we switched over to CVF, which just by itself halved the runtimes. These days we use IVF. The same 100-year run on modern hardware with a modern compiler takes under a minute.

Thinking back to the IBM 360 days, I remember that the slow part of changing code wasn't the compiling, it was the linking. We'd figured out that anything you could do with batch processing and card decks, you could also do on the time-sharing system (TSO), and then you wouldn't have to wait around for your printouts and cards to come back. Compiling code with the G1, G, or H compiler was reasonably fast, but the linker would take an hour.

Eventually we figured out that you could use your old load module as the linker library instead of using the standard library, so long as you hadn't introduced any new external dependencies. This really improved the link times. The other real problem was getting into the TSO system in the first place, as the system only supported 24 simultaneous users in a company with 20,000 employees. (MAX USERS ON TSO, TRY LATER)

Cryptogram, a back-of-the-envelope estimate gives a speed-up that agrees with your anecdotal figures.

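| Year | CPU | Clock | Cores / threads | Data bus | Run time |
|---|---|---|---|---|---|
| 1990 | 80486 | 50 MHz | 1 core, 1 thread | 32-bit | 6 hours |
| 2018 | i5-8400 | 3 GHz | 6 cores, 6 threads | 64-bit | < 1 minute |
| Ratio | | 60 | 6 | 2 | |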

Expected speed up, if speed is proportional to frequency, number of threads and data bus size: 720

Actual speed up, running reservoir simulation: at least 360 (from 6 hours to less than 1 minute)
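As a rough sketch of the arithmetic above (Python is used only for illustration, and the dictionary keys and variable names are made up for this example), the expected and observed speed-ups can be recomputed from the quoted figures. The scaling rule is the assumption stated in the post, not a measured law, and the observed figure is only a lower bound because the modern run takes less than a minute.

```python
# Figures quoted in the post; key names are hypothetical, chosen for this sketch.
pc_1990 = {"clock_mhz": 50, "threads": 1, "bus_bits": 32, "runtime_s": 6 * 3600}
pc_2018 = {"clock_mhz": 3000, "threads": 6, "bus_bits": 64, "runtime_s": 60}

# Assumption from the post: speed is proportional to clock frequency,
# number of threads and data bus width, so the individual ratios multiply.
ratios = {
    key: pc_2018[key] / pc_1990[key]
    for key in ("clock_mhz", "threads", "bus_bits")
}

expected_speedup = 1.0
for value in ratios.values():
    expected_speedup *= value

# The 2018 run takes "under a minute", so 60 s gives a lower bound on the
# observed speed-up rather than an exact value.
observed_speedup_lower_bound = pc_1990["runtime_s"] / pc_2018["runtime_s"]

print("ratios:", ratios)
print("expected speed-up:", expected_speedup)
print("observed speed-up (at least):", observed_speedup_lower_bound)
```

The observed lower bound comes out at half the expected figure, which is within the slack you would expect from such a crude model.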

If you actually used MS Fortran 3.3 in 1990, the EXE that it produced would have been a 16-bit program running on a 32-bit CPU.

There is nothing inherently wrong with a 16-bit program provided the program and data fit within the limited amount of RAM (640KB or 1MB). This was a clock speed, FPU speed, memory bus speed and memory access issue, each and all of which were bottlenecks (not to mention no vectors).

The CPUs I cut my teeth on (1967/68) clocked at 0.8 MHz and initially had 4K of 12-bit words. "Mass storage" was 10cps paper tape. Programming was generally performed using an interpreter, FOCAL, or an assembler/linker, PAL. However, if you really wanted the benefit of coding in a higher-level language, you coded in FORTRAN-II.

Toggle in the paper tape boot loader (or re-use if not destroyed)

Load the binary loader paper tape

Load the RIM (Read In Mode) paper tape (or re-use if not destroyed)

Load 1st of 3-pass FORTRAN compiler

Load in source code

Punch out intermediary code tape

Load 2nd of 3-pass FORTRAN compiler

Load in pass-1 intermediary code

Punch out intermediary code tape

Load 3rd of 3-pass FORTRAN compiler

Load in 2nd pass intermediary code

Punch out intermediary code tape (SABER assembly source format)

Load in SABER assembler

Load in intermediary code tape (SABER assembly source format)

Punch out binary code

Load the RIM (Read In Mode) paper tape (or re-use if not destroyed)

Load in program (plus any pre-built library binaries)

Run, test or crash program

That was really an experience that you didn't want to repeat too often.

Edit: Found this link after posting:

https://techtinkering.com/2009/07/14/running-4k-fortran-on-a-dec-pdp8/

Jim Dempsey

In the early nineties, floating-point calculation speed on an entry-level IBM RISC machine was about 10 times faster than that of any PC.

The RISC workstation had a 64-bit processor/memory, so double-precision operations were faster than single-precision ones.

Regards

"Early nineties floating point calculation speed on an entry level IBM RISC machine was about 10 times faster than that of any PC" at that time.

I don't have to hand the history of PC processor clock rate increases through the 90's, but it was a significant increase. To make a program faster, the simpler approach was to buy a newer PC (although, as a keen programmer, I always believed that I could adjust the solution algorithm to the changing hardware).

My gains in the last 10 years have come from both newer PCs and OpenMP. (I hold on to the hope that I can still make a difference.)

Tracking clock rate as a measure of improvement must also account for changes to the instruction set, i.e. cycles per flop (floating point operation), or nowadays flops per cycle. Since the late 80's, my bandwidth minimiser has estimated the solution time using the parameters "processor cycle rate" and "cycles per flop". My code from the 80's estimated the Pr1me 750 (VAX 780) at about 30,000 flops. My 486 estimate was 170 cycles per flop, compared to about 3 cycles for a second-gen i5. Now the i5/i7 are at 1 cycle per flop, or 4 Gflops (multiply operations). With 10 threads I am now getting about 40 Gflops practical performance, which is a long way short of the quoted AVX2 in-cache performance. "Practical performance" counts achieved floating point multiplies (ignoring additions) and accounts for the transfer of information between memory and cache, previously between paging disk and memory.
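The two-parameter estimate described above can be sketched as follows. This is only an illustration in Python, not the bandwidth minimiser's actual code: the function names are hypothetical, the 4 GHz clock is implied by "1 cycle; 4 Gflops", and the 50 MHz clock for the 486 is an assumption borrowed from the earlier post in this thread.

```python
def flops_per_second(clock_hz, cycles_per_flop, threads=1):
    """Estimated floating point rate from clock rate and cycles per flop.

    Assumes perfect scaling across threads, which real codes rarely achieve.
    """
    return clock_hz / cycles_per_flop * threads


def solution_time_s(flop_count, clock_hz, cycles_per_flop, threads=1):
    """Estimated run time in seconds for a known number of flops."""
    return flop_count / flops_per_second(clock_hz, cycles_per_flop, threads)


# Modern i5/i7: 1 cycle per flop at an implied 4 GHz clock.
modern_single = flops_per_second(4e9, 1)
modern_ten_threads = flops_per_second(4e9, 1, threads=10)

# 486: 170 cycles per flop; the 50 MHz clock is an assumed value.
i486 = flops_per_second(50e6, 170)

print(f"modern, 1 thread:   {modern_single / 1e9:.1f} Gflops")
print(f"modern, 10 threads: {modern_ten_threads / 1e9:.1f} Gflops")
print(f"486 at 50 MHz:      {i486 / 1e3:.0f} kflops")
print(f"1e12 flops on 10 threads: {solution_time_s(1e12, 4e9, 1, 10):.0f} s")
```

The model deliberately folds memory and cache traffic into the "cycles per flop" figure, which is why the practical number is so far above the in-cache ideal.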

The 1970's algorithms for coping with paging disk delays are just as relevant today as "cache smart" strategies. Perhaps hardware purchases were a cheaper approach than all those software "updates" and break room chats.

It is interesting how the IBM RISC machines and their equivalents of the early 90's were replaced by the ever-increasing clock rates of the cheaper Intel Pentium+ chips.

CISC vs. RISC has been a battle through the ages, often characterized as "Brainiacs vs. Speed Demons". RISC seemed to be a winner through the 80s, but out-of-order execution and micro-ops in CISC architectures leveled the playing field. RISC architectures continued to grow more complex, approximating CISC in the end.

I was doing some web searching related to this thread and ran across my former DEC (and later Intel) colleague Dileep Bhandarkar's retrospective of his career, in which he illustrates CPU architecture through the ages. https://mvdirona.com/jrh/TalksAndPapers/DileepBhandarkarAmazingJourneyFromMainframesToSmartphones.pdf

What amazes me is how I can start a simple thread with a random observation and it takes off into a whole new world.

The Water Supply Analysis program, Magni, which mecej4 helped a lot with, ran many times faster in Fortran than in C#, as mecej4 predicted on a Friday and I proved to my satisfaction on the Sunday.

It is very easy to change C# programs to Fortran --

But we are left with all of the people who use R, MATLAB, etc., and do not talk about Python.
