- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Back in the days when there were only two languages Fortran and everything else.

We had to create dxf files for making drawings using Fortran. The problem we would have is that generating millions of lines and points sometimes led to a number being **** instead of the real number in a format statement.

AutoCAD at that stage would simply stop and not tell you the line number for the error. This is before the internet. I found, I think in Byte - the best magazine God ever invented and I loved Jerry Pournelle's articles, if you have not read his humour, you have led a life not fully engaged, anyway I found a little program called VEDIT. It is Canadian and about 80 dollars.

At the moment I have some people in Europe rather interestingly asking about differences in Frequency. The data comes from a MySQL database in the cloud collected over several years.

I need to create a MySQL table for their requests and then upload the results from the existing data and then amend the collection program so the analysis is automatic. But I need a program to create the data up until today from the cloud data.

One should always consider what should be Pournelle's Sixth law - I can always do it faster in Fortran than anything else, although I can code it faster in LISP, but the lisp interpreter throws away the advantage.

But the data file took 30 minutes to download from the server, not bad there is a good workbench to do it, but it has been running for 9 hours on a core I7 with an ssd to create a text file. I am a third of the way there from the database.

This is not a problem as I can use the early stage of the datafile to check the program, but the data file is now so big, NOTEPAD++ and others of that ilk will not open the file. Hence Vedit, thank god for that program, although it costs about 80 and the guy does not update it much, but it is still the best.

But the MySQL data output has many different non-numeric characters and so I am back to the problem of read the CSV file problem in Fortran + plus it always better to create drawing in AutoCAD, which I have in Fortran. So I dragged out the code Jim helped create for the COVID data files, but the input line is 460 characters long, so I quickly ran out of patience to do nested if statements. But I solved the problem and sped up the program creation - so I was happy

call lookup(iline,k,l,m,numD(j),'-')

call lookup(iline,k+3,l+3,m+3,numE(j),' ')

call lookup(iline,k+6,l+6,m+6,numF(j),':')

call lookup(iline,k+9,l+9,m+9,numG(j),':')

call lookupSEC(iline,k+10,l+15,m+16,numH(j),'"')

write(*,120)num(j),numA(j),numB(j),numC(j),numD(j),numE(j),numF(j),numG(j),numH(j)

120 format(i9,' ',i3,' ',i5,' ',i5,' ',i3,' ',i3, ' ',i3, ' ',i3,' ',F7.3)

endif

end do

400 Write(*,130)

130 Format(" End of File")

end program CX1_Frequency

subroutine lookup(iline,i,j,k,num,A)

use Base

implicit none

integer i,j,k, num

character*460 iline

character*1 A

if((iline(k:k) .eq. A)) then

read(iline(i:j),*)num

endif

return

end

subroutine lookupSEC(iline,i,j,k,num,A)

use Base

implicit none

integer i,j,k,m

REAL (KIND=dp) num

character*460 iline

character*1 A

do 100 m = k-6,k

! write(*,110)iline(m:m)

100 end do

110 Format((A))

if((iline(k:k) .eq. A)) then

read(iline(k-6:k-1),*)num

elseif((iline(k-1:k-1) .eq. A)) then

read(iline(k-6:k-2),*)num

endif

return

end

Anyway so much for one day. It would be much easier if the frequency data did not have temp effects and if the X and Y results did not include ghost frequencies from the dominant Z, a beam cannot vibrate perfectly about a Z axis so you see ghosts of the main freq in the X and Y.

Ah experimental data - you may have a theory as I explained to today but there is always the data, the CLT and the law of large numbers.

Link Copied

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Using NOTEPAD++ or VEDIT on humongous text files with buku # edits is best done:

inputFile -> outputFile

parsed with intelligence.

In years past, my choice would be TECO. While it was hard to learn, it could do just about everything related to text editing. Preferrably, you would not want to buffer the entire file, rather the automation would use a scan buffer.

I may resurrect TECO for x64 systems.

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sample file - I cannot send a sample file maximum size is 71 MB and currently it 0.5GB and still growing. But the output into the file is so randomly different. You have the same problem with dxf file generation you have to really careful with the formats.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

815451,1,2049,"2019-03-12 15:27:46.530593"At line 815451 they suddenly change from 46.567 to this - there is nothing special about this number

Whoever wrote the mysql export routine in Workbench is not a kind person.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Did you mean 46.53? (.nn rounded value of 56.530593)

Now that you know the data can be "contaminated" by this, a special filter for this can be added to your pre-processing filtering program:

while not done

read line

filter 1

filter 2

...

filter n

write line

end while

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes -- you are correct

I have to run every line through multiple filters to find out the exact form of each number, the person who wrote the output program used the - sign for negative and a separator - they are creating a EXCEL csv file and that is not a nice format.

The mysql output file generator is now putting out 10 lines per second and there are 4.5 million to go

I can run the results into a txt file to look at it as I go , but EXCEL will only load 1.2 million lines and I am up to 2 million - luckily vedit saves the day.

Some of the errors get trapped at an interval of 10000 to 100000 lines - one error popped up once in a million lines - trapping them is fun.

The only program that can plot them easily will be AUTOCAD using a Fortran DXF file generator.

We are moving into a new era of statistics, the era of to much data and looking for the needle in a haystack whereas before we had a needle on a concrete floor and we just needed a bright chap or lady to find it using some statistics.

I had a quick look at the 11.5 Hz band on a timber beam measured over 4 months - the frequency dropped in the 1.9 million points at a rate of 1 by ten to the -8, my friend in England said so what and I said it has a t-Stat of 14, that is not on any t-Stat table - the confidence limit is certain. We can only test 1/6th of the data in EXCEL and this is only 3 years data.

We are measuring the rate of decay of a timber beam that can quite comfortably last 100 years and the issue is not are we measuring something, we are, but we cannot keep the world that still for that long, so what have we measured - the concrete floor could be moving.

Thank the guy who invented Fortran, and it is a lot of fun,

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

1.464844,0.0003,"",24.75,-0.233883

After 817786 lines the output gives us on number 0.0003 where every other number in this column is exactly 0.000389 digits,

I dislike these programmers.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You really need to read the input lines as a text buffer and then apply a set of parsing function. Fixed read formats could have you chasing your tail for a long time and could get very messy/complicated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I do read the input line as a text buffer, the parsing functions work fine except in some weird situations that I am slowly fixing. The problem is the file is 2 million lines long and has 29 variables on each line so you cannot pick up all the possible combinations.

it is the same problem as one had in generating DXF files as text files. The numbers could be any length but usually they were within a reasonable range and then someone would make a mistake and you get a number like 6431257898.678345 which just came up - the mysql output program wrote the number in that format which is ok, but it means you have to check for a long number, the trick is finding the minimum long or short like 0.0 as most numbers are 0.244451, so you slowly work away and avoid excess filters.

A better way is to create an array that holds the location of the separators like (3,6,9, 23, 14) and what you want is in between, next time.

We work with one company that has changed the standard output from its devices 7 times in 15 years and the device has not changed and then I ran into the problem of the French using , as a 0.000 and also using the , as a separator - I had to write a parser to take the French data apart and create a proper data file.

No one can tell me that from 0,00,0,000,00,0,000,0,0 you can tell what the numbers are unless you have a pattern.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

We had a similar problem with data from Land Surveyors - this is 30 miles of survey in one file at 6 meter intervals, I finally got the chief draughtsman to select the format he liked and we simply made it mandatory to submit in that format.

I was with the company that wrote the software who sold it to the Surveyors and they were complaining that they had to support an output format that was old and simple, I looked at them and said you stop supporting it and we will change software and surveyors - perhaps you should talk to the surveyor first.

For the exchange of information the R12 AUTOCAD DXF format is the best as you can read it.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am not sure where you are coming from. If the fields have mixed data types and the fields are not tagged in anyway then the task is impossible unless the field type/number and combinations are each unique to a specific record type. If it is a simple issue of the floating format varies then parse using the delimitator (comma it would seem) and the read the real from the substring using '*' format which will read all normal properly formed real numbers. Using different floating point read formats on a mix and match basis is not a good method.

Personally having worked with both reading and writing dxf , step and iges data I found dxf to be quite messy as that have chopped and changed entities in different ways over the years and isn't very user friendly.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am not sure where he is going, either!

There are some "self-describing" file structures; XML, HDF and NetCDF come to mind.

If such a structure is not used, the metadata has to be written down somewhere else, and the conventions written there have to be followed rigorously.

There have been some spectacular disasters where GB of data was placed into self-describing files, and years later it was found that because of a defect in the structure some parts of the original data could not be extracted.

In addition, of course, we have the problem of storage media and associated hardware going obsolete.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

That observation reminds me of unfortunate incidents with tapes or cassettes being eaten because they were inserted in the wrong type of reading device. No need for the device itself to become obsolete ...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

>> so you slowly work away and avoid excess filters.

The time to execute a filter is relatively short. Unless you do something sophomoric like:

file -> filter-1 -> file

file -> filter-2 -> file

...

file -> filter-n ->file

IOW one pass of file per filter.

The better route is

file ->

do

readBuffer(exit on eof)

filter buffer using filter-1

filter buffer using filter-2

...

filter buffer using filter-n

writeBuffer

end do

-> file

IOW perform all the filtering in one pass.

BTW making the buffer too large or too small is counter-productive.

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jim:

You taught me how to do that with the COVID virus European data files. I am using a very slightly modified version that is fast - about 1 minute for 2 million lines including screen writes,

The change I made was to put the filters into subroutines and then call the right filter

call lookup(iline,k,l,m,numD(j),'-')

call lookup(iline,k+3,l+3,m+3,numE(j),' ')

call lookup(iline,k+6,l+6,m+6,numF(j),':')

call lookup(iline,k+9,l+9,m+9,numG(j),':')

call lookupSEC(iline,k+10,l+15,m+16,numH(j),'"',n)

call lookupNUM(iline,n,nt,numI(j),",",1)

call lookupNUMA(iline,nt,na,numJ(j),",",1,9)

call lookupNUMA(iline,na,nb,numK(j),",",1,9)

call lookupNUMB(iline,nb,nc,numL(j),",",1,9,1)

call lookupNUMB(iline,nc,nd,numM(j),",",1,9,2)

call lookupNUMB(iline,nd,ne,numN(j),",",1,9,3)

call lookupNUMB(iline,ne,nf,numO(j),",",1,9,4)

call lookupNUMB(iline,nf,ng,numP(j),",",1,9,5)

call lookupNUMB(iline,ng,nh,numQ(j),",",1,9,6)

call lookupNUMB(iline,nh+3,ni,numR(j),",",1,9,7)

call count8(iline,ni,",",25,nj)

! write(*,*)j,nj,(iline(nj:nj+5))

call lookupNUMB(iline,nj+1,nk,numS(j),",",1,9,8)

call lookupNUMB(iline,nk,nl,numT(j),",",1,9,9)

nf, ng etc are just the location for the first digit and the next "," and each number type has filter.

the first problem is I am still downloading the file - I am up to 2.6 million records and I had 1.4 yesterday. I have to get to 6.5. As the file grows and the number of entries I am filtering grows - the mistakes in the filter constraints show up. My problem at the moment is the last six entries, one combination of zeros fools the filter at the moment. There are 188.5 million records in the final file so expecting interesting errors is to be expected.

It is like the problem with using old code - although mysql Workbench is not old, it is just finding the quirks.

You and mecej4 are excellent teachers, as are all the Titans.

I always wondered who deleted the post where I suggested a long day of beer drinking in an English pub in the snow talking Fortran with you would be a great day. The beer is cold and the view is good and the food is wonderful.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Am I to assume

file-In -> apply all filters -> filtered-file-out

filtered-file -> MySQL (craps out)

add/fix filter

re-do

If that is the case could you...

When MySQL balks at an insert (of the filtered line), that you write the offending line to an exception file, then continue on with the insertions. At the end of this pass, most of the data will be inserted into the database. Then you can update your filters, and run another insertion pass (into existing database), repeat until done.

I assume you are doing something like this.

The only issue then is when MySQL accepts the malformed input data without complaint. To aid in fixing bad input data, you might consider maintaining the original input line number with the record. This way you can go back to the original data to see if it is bad data or error in filter.

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

My filter design was wrong - I started looking for

1.000000,2.00000, etc. working down in size

The ones that caused the problem is that I searched for the above first and it picked up

1.0,1.0, before the

1.0,

Simple conceptual mistake -- darn

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

character*460 iline

In reality this is an array of 460 single characters so why do we still use character*460 instead of character iline(460)

The filter gets to the end of the data, I would assume because it has read the file it will pack up the acsii characters for nl and cr - but it appears that all I get is spaces.

Is the default character for a character a space?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The TECO filter to convert 1.000000,2.00000 to 1.0,2.0

<:S.0^E#0^N^ED$; ^S+2C::FD^E#0$>

The above is

loop:

conditionally search buffer (from current position forward) for

period, zero, any number of zeros (including none), followed by NOT digit

if the search fails, exit loop

backup to 2 characters following the start of the found text

anchored delete any number of 0's (including no delete should none follow)

end loop

The above filter can be embellished to exclude enquoted text...

The above filter would be followed by your other filters.

Should you choose to use an overly large buffer, and have a large number of replacements, you can speedup the filter by processing the buffer backwards:

ZJ<-:S.0^E#0^N^ED$; ^S+2C::FD^E#0$>

Jump to end of buffer

loop

conditionally search backwards ...

You can develop and test the filters one at a time, and then insert them into your master loop:

<A -Z; ! append to buffer, exit loop if nothing remains !

your filter 1 here

your filter 2 here

HP HK ! "punch" the wHole buffer to output, kill the wHole buffer !

> ! end loop !

Assume one of the filters is to locate the goofy numbers like 1817287398123981238791.888

IOW search for a string of digits greater than 9 (you pick)

<:S^EM^ED$; ^S+9"G do something '>

Note, the "do something" could be to remove the line from the processing buffer and insert it into an exception buffer:

<:S^EM^ED$; ^S+9"G 0L XA K 1B GA 0B '>

The above would be for buffer 0 being the process buffer, buffer 1 holds the exceptions.

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

>>The mysql output file generator is now putting out 10 lines per second and there are 4.5 million to go

That is a horrible lines per second rate.

can you post your filters Inputs and Outputs? (do not post the code)

Filter 1 Line In

Filter 1 Line Out

Filter 2 Line In

Filter 2 Line Out

Filter 3 Line In

Filter 3 Line Out

...

I will compose a set of TECO edit macros, send you a copy of TECO with macros and instructions.

Jim Dempsey

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well it is a lot of fun and I get to converse about the problems with something like the Guernsey Potato Peel Pie Society or No 84 Charing Cross i.e. with a bunch of intelligent humans.

Release 12 of DXF file format is the last one that makes any sense and it is self describing if you can read the integer codes. If you want to produce large drawings quickly it is the best. But when the Fortran programs were written I was using a Compaq Portable with 20 MB hard drive. So in the files generated I needed to make sure there were the min number of extra zeros. VEdit at that stage worked but we were talking about 5 minute file loads and 10 min regen on AutoCAD.

The sort of code I used looked like this:

C ************************************************************

C

SUBROUTINE WRITE3(X3)

C

C ************************************************************

DOUBLE PRECISION X3

IF (X3 .LT. -9999.99999) THEN

WRITE(3,5)X3

5 FORMAT(F10.4)

ELSEIF (X3 .LT. -999.99999) THEN

WRITE(3,4)X3

4 FORMAT(F9.4)

ELSEIF (X3 .LT. -99.99999) THEN

WRITE(3,3)X3

3 FORMAT(F9.4)

ELSEIF (X3 .LT. -9.99999) THEN

WRITE(3,2)X3

2 FORMAT(F8.4)

ELSEIF ( X3 .LT. 0.0000) THEN

WRITE(3,1)X3

1 FORMAT(F7.4)

ELSEIF ( X3 .LT. 9.9999) THEN

WRITE(3,10)X3

10 FORMAT(F6.4)

ELSEIF (X3 .LT. 99.9999) THEN

WRITE(3,20)X3

20 FORMAT(F7.4)

ELSEIF (X3 .LT. 999.9999) THEN

WRITE(3,30)X3

30 FORMAT(F8.4)

ELSEIF (X3 .LT. 9999.9999) THEN

WRITE(3,40)X3

40 FORMAT(F9.4)

ELSEIF (X3 .LT. 99999.9999) THEN

WRITE(3,50)X3

50 FORMAT(F10.4)

ELSEIF (X3 .LT. 999999.9999) THEN

WRITE(3,60)X3

60 FORMAT(F11.4)

ELSEIF (X3 .LT. 9999999.9999) THEN

WRITE(3,70)X3

70 FORMAT(F12.4)

ENDIF

RETURN

ENDSo this is about 1988 and no use of implicit none. There was no internet and no email and only BYTE at the local library.

The problem would be that occasionally a number was needed that was outside that range. So I simply added it until I had covered all the numbers we used at the time. Simple neat and as small as possible.

The added advantage is the Fortran real output gives out a series of ***** if it cannot print a number, so my workmates could look and once in a while say - number not covered is such and such. DXF files allows comments so you could code the routine that created the section of the DXF File.

I was writing and using the code at the same time, the development cycle was continuous.

The other advantage was the users sat in front of me and screamed when it did not work. The review cycle was minutes.

The CX1 accelerometer is good, at the moment it takes 2000 data sets per second and the NUC in a multithreaded program will determine a whole lot of interesting numbers, 29 of the numbers are uploaded to a MySQL database in the cloud every 8.192 seconds and the results can be investigated using a simple PHP web site.

But the people who want to use the data are only interested in the answer, is the bridge safe and why.

This is akin to F.N. David's brilliant treatise on bombing stats in the second world war, she statistically said do not drop two bombs in one hole. Her paper has now been reclassified by the UK government and I cannot find my copy. I paid for it to be scanned to pdf, so it is a bit annoying.

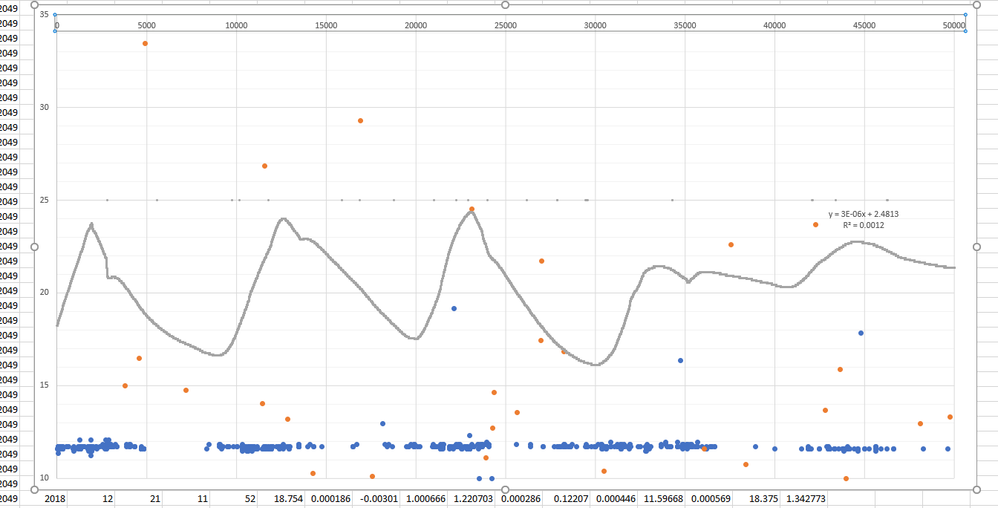

So we have been moving on to look at the key stat - a change in frequency - the only problem is we now have beams and bridges will more than 1.5 million data points for a single quantity. We now know that some of these frequencies change with temperature and some of the frequencies disappear at different temperatures, this is 11.5 Hz on a timber beam over 6 days in EXCEL - I have 3.5 years of data.

The grey line is temperature - 10000 points per day so you about 6 days. Each one of the points comes from a probability distribution. The data file for this beam is over a GB in text and the only question is - is anything changing that is important.

So to answer the question I am going to add some tables to the MySQL database, and it really easy to add the data from the NUC, but I am missing the analysis of the existing data. So I am downloading the MySQL database using the MySQL workbench program and then exporting the data to a text file using the Workbench export function. I could read the MySQL using the DE German Fortran DLL program and I should have but this appeared on the surface to be quicker. I can do the necessary analysis in Fortran and then shoot the results back to tables in the cloud.

So I am taking the csv file apart, one would assume that the csv file from the Workbench would be nice, but it is not nice.

I cannot run the data into EXCEL to the analysis as it will only hold 120 days of data in length -- so I can work out the methods in EXCEL and then use Fortran to replicate them on real sets.

I also have a good set of DXF File writers for Fortran so graphing it is easy. If I rely on the PHP I am stuck with a limit of about 180000 points or three weeks.

I have about 15 databases to fix, the first is the largest and hardest as I code it.

There are many ways to do this -- but the DXF generator will allow me to create some nice pictures.

C# will take a CSV file apart in seconds, but C# means I cannot ask you lot questions and I prefer to be able to ask.

CSV files are not going away as we are teaching the world's youth to use a program from the 70's as their main analysis tool. So who ever wrote the MySQL Workbench export code has some crazy format statements, but that is not difficult considering the number of alternatives. You get the same problem in creating drawing files.

So that is the fun.

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page