Hello everyone,

I am inverting a matrix and see that when my program uses both OpenMP and the LAPACK library, CPU usage stays at about 10% instead of reaching 100%. I also found that OpenMP and the MKL library cannot reach 100% CPU usage when they are used together.

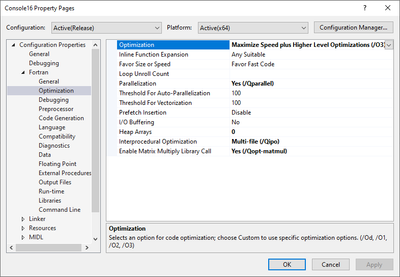

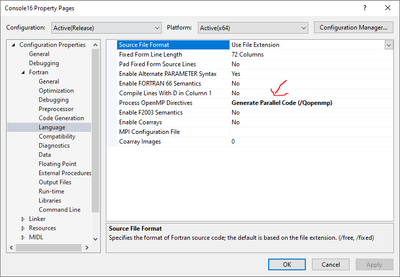

I attached all the options I chose. Did I make a mistake somewhere?

Thank you!

    program testinv
      implicit none
      integer i,j,N
      double precision, allocatable,dimension(:,:):: A,invA1

      N=100000
      allocate(A(N,N),invA1(N,N))

      !$omp parallel do
      do j=1,N
        do i=1,N
          A(i,j)=1d0
        enddo
      enddo
      !$omp end parallel do

      call InverseMatrixD(N, A, invA1)

    contains

      subroutine InverseMatrixD(N, A, invA)
        implicit none
        integer N, IPIV(N), INFO
        double precision A(N,N), invA(N,N), WORK(N)

        invA(:,:) = A(:,:)
        call DGETRF (N, N, invA(:,:), N, IPIV(:), INFO)
        call DGETRI (N, invA(:,:), N, IPIV(:), WORK(:), N, INFO)
      end subroutine InverseMatrixD

    end program testinv

I found my mistake. This one is necessary. Thanks for looking at my question.

There are some additional issues for you to be aware of.

MKL has two libraries: serial/sequential and threaded/parallel.

The threaded/parallel MKL library uses OpenMP internally for parallelization.

Both MKL libraries are thread-safe (both can be used from threaded and non-threaded applications).

Note that this runs counter to the intuitive rule of linking the threaded library with a threaded program and the sequential library with a sequential program.

When a predominantly threaded application calls MKL from within parallel regions, the better choice is the sequential MKL library. The reason is that if the threaded MKL library is called from within a parallel region (or, more precisely, from different threads), it will instantiate a separate OpenMP thread pool for each calling thread. For example, on a system capable of 16 hardware threads, each of the 16 application threads calling into the threaded MKL library could instantiate its own pool of 16 threads (256 threads in total), in other words gross oversubscription.
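The case described above, with MKL called from inside a parallel region, can be sketched as follows. This is only an illustration under assumptions: the program name, the matrix size `n`, and the batch size `nmat` are made up, and the call to `mkl_set_num_threads(1)` is one way to keep each caller single-threaded when the threaded MKL library happens to be linked. Linking the sequential library instead should make that call unnecessary.

```fortran
program mkl_inside_parallel_region
  use omp_lib
  implicit none
  integer, parameter :: n = 200      ! hypothetical matrix size
  integer, parameter :: nmat = 16    ! hypothetical number of independent matrices
  double precision, allocatable :: a(:,:,:)
  integer, allocatable :: ipiv(:,:), info(:)
  integer :: i, k
  external :: mkl_set_num_threads, dgetrf

  allocate(a(n,n,nmat), ipiv(n,nmat), info(nmat))

  ! Random matrices, made diagonally dominant so that they are nonsingular.
  call random_number(a)
  do k = 1, nmat
    do i = 1, n
      a(i,i,k) = a(i,i,k) + n
    end do
  end do

  ! Assumption: the threaded MKL library is linked. Restrict MKL to one
  ! thread per caller so the application's threads do the parallel work.
  call mkl_set_num_threads(1)

  ! Each application thread factorizes its own matrices.
  !$omp parallel do
  do k = 1, nmat
    call dgetrf(n, n, a(:,:,k), n, ipiv(:,k), info(k))
  end do
  !$omp end parallel do

  print *, 'largest INFO returned by DGETRF: ', maxval(info)
end program mkl_inside_parallel_region
```

The parallelism here comes from the application's own loop over independent matrices, which is the situation where the sequential library is the better fit.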

If you have a parallel application but only call MKL from the master thread, you can link the threaded MKL library and set the environment variable KMP_BLOCKTIME=0 (or some small value you determine is best). With this setting there will still be two thread pools, but the spin-wait time at the end of each parallel region (your app's and MKL's) is 0, meaning that at the end of a parallel region the non-instantiating threads suspend immediately, making those hardware threads available to the other domain's parallel regions or to other processes on the system.
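A minimal sketch of that arrangement is shown below. It assumes the Intel compiler and its OpenMP runtime, whose `omp_lib` module declares the `kmp_set_blocktime` extension. Calling it is used here as an in-program alternative to setting `KMP_BLOCKTIME=0` in the environment. The program name and the size `n` are hypothetical.

```fortran
program blocktime_sketch
  use omp_lib   ! Intel's omp_lib also declares the kmp_* extension routines
  implicit none
  integer, parameter :: n = 2000   ! hypothetical matrix size
  double precision, allocatable :: a(:,:)
  integer, allocatable :: ipiv(:)
  integer :: i, j, info
  external :: dgetrf

  ! Intel-specific: idle threads go to sleep immediately instead of
  ! spin-waiting after a parallel region ends.
  call kmp_set_blocktime(0)

  allocate(a(n,n), ipiv(n))

  ! The application's own parallel region fills the matrix.
  !$omp parallel do private(i)
  do j = 1, n
    do i = 1, n
      a(i,j) = 1d0
    end do
    a(j,j) = dble(n + 1)   ! dominant diagonal keeps the matrix nonsingular
  end do
  !$omp end parallel do

  ! MKL is called from the master thread only, so the threaded MKL
  ! library is free to parallelize the factorization internally.
  call dgetrf(n, n, a, n, ipiv, info)

  print *, 'DGETRF INFO = ', info
end program blocktime_sketch
```

Setting the environment variable before launching the program has the advantage that no source change or Intel-specific call is needed.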

There are other times when you might want to specifically tune the number of threads used by the main application and by each caller into the threaded MKL library (this gets complicated).
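For the simplest case of such tuning, where MKL is only called from the serial part of the program, a sketch might look like this. It assumes the threaded MKL library is linked and uses MKL's service routines `mkl_set_num_threads` and `mkl_get_max_threads`. The two thread counts are arbitrary placeholders, not recommendations.

```fortran
program split_thread_counts
  use omp_lib
  implicit none
  integer, parameter :: n = 1000          ! hypothetical matrix size
  integer, parameter :: app_threads = 4   ! placeholder: threads for the application's loops
  integer, parameter :: mkl_threads = 8   ! placeholder: threads for MKL's internal work
  double precision, allocatable :: a(:,:)
  integer, allocatable :: ipiv(:)
  integer :: i, j, info
  integer, external :: mkl_get_max_threads
  external :: mkl_set_num_threads, dgetrf

  call omp_set_num_threads(app_threads)   ! applies to the application's parallel regions
  call mkl_set_num_threads(mkl_threads)   ! applies to MKL's own threading

  allocate(a(n,n), ipiv(n))

  !$omp parallel do private(i)
  do j = 1, n
    do i = 1, n
      a(i,j) = 1d0
    end do
    a(j,j) = dble(n + 1)   ! dominant diagonal keeps the matrix nonsingular
  end do
  !$omp end parallel do

  print *, 'MKL may use up to ', mkl_get_max_threads(), ' threads'

  ! Called from the serial part of the program.
  call dgetrf(n, n, a, n, ipiv, info)
  print *, 'DGETRF INFO = ', info
end program split_thread_counts
```

Calling the threaded library from several application threads at once needs more care than this, which is the complicated part mentioned above.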

Jim Dempsey
